Systematic Error in Biomedical Research: A Comprehensive Guide to Detection, Impact, and Mitigation

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for understanding and addressing systematic error. It covers the fundamental principles that distinguish systematic from random error, explores advanced detection methodologies such as B-score normalization and hit distribution analysis in High-Throughput Screening (HTS), and offers practical strategies for mitigation through calibration, randomization, and blinding. The guide also examines validation techniques and explains why systematic errors are considered more detrimental to research validity than random errors. The article aims to equip professionals with the knowledge to improve measurement accuracy and the reliability of scientific conclusions in biomedical and clinical research.

What is Systematic Error? Foundational Concepts for Researchers

Systematic error, also termed systematic bias, is a consistent, repeatable inaccuracy associated with faulty equipment or a flawed experimental design [1]. Unlike random errors, which fluctuate unpredictably, systematic errors shift all measurements in the same direction and so reduce the accuracy of an experiment even when precision remains high [2] [3]. Understanding, identifying, and mitigating systematic error is therefore paramount in measurement accuracy research: it consistently biases results away from the true value, which can lead to incorrect conclusions and, in fields like drug development, significant financial and clinical repercussions [4].

This whitepaper provides an in-depth technical guide to systematic error, detailing its core definition, contrast with random error, quantitative impacts across research domains, and robust methodologies for its detection and correction, framed specifically for researchers, scientists, and drug development professionals.

Core Concepts: Systematic vs. Random Error

The fundamental distinction between systematic and random error lies in their consistency, origin, and impact on data.

- Systematic Error (Bias): This is a flaw that causes measurements to deviate from the true value consistently in the same direction, typically by a fixed or proportional amount. It is reproducible and stems from factors inherent to the system, such as a miscalibrated instrument or a flawed experimental protocol. Systematic errors affect the accuracy of results, meaning the closeness of measurements to the true value [1] [3].

- Random Error (Noise): This error is caused by unpredictable and fluctuating changes in the measurement process. It has no consistent pattern and varies randomly from one measurement to the next. Sources include environmental fluctuations, electronic noise, or human estimation in reading instruments. Random errors affect the precision of results, which is the closeness of repeated measurements to each other, but not necessarily to the true value [1] [3].

The following table summarizes the key differences:

Table 1: Fundamental Differences Between Systematic and Random Errors

| Feature | Systematic Error | Random Error |
|---|---|---|
| Definition | Consistent, repeatable error | Unpredictable, fluctuating error |
| Cause | Faulty equipment/experimental design | Uncontrollable environmental or measurement variations |
| Impact on | Accuracy | Precision |
| Direction | Consistently in one direction | Varies randomly in both directions |
| Reduction | Improved methods, calibration, design | Averaging repeated measurements, increasing sample size |

A classic visualization of these concepts demonstrates how accuracy and precision interact:

Figure 1: Conceptual relationship between accuracy and precision, determined by the levels of systematic and random error.
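The contrast in the "Reduction" row can be illustrated with a short simulation. The sketch below is illustrative only: the true value, the bias, and the noise level are hypothetical numbers, and the random error is assumed to be Gaussian. It shows that the standard error of the mean shrinks as more readings are averaged, while the mean error stays close to the constant bias.

```python
import random
import statistics

def simulate_readings(true_value, bias, noise_sd, n, seed=0):
    """Return n simulated readings: true value + constant bias + Gaussian noise."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]

TRUE_VALUE = 100.0  # hypothetical true quantity
BIAS = 2.0          # hypothetical systematic offset
NOISE_SD = 5.0      # hypothetical standard deviation of the random error

for n in (10, 1000, 100000):
    readings = simulate_readings(TRUE_VALUE, BIAS, NOISE_SD, n)
    mean_error = statistics.fmean(readings) - TRUE_VALUE
    sem = statistics.stdev(readings) / n ** 0.5
    # The SEM (random component) falls with n; the mean error converges to BIAS.
    print(f"n={n:>6}  mean error={mean_error:+.3f}  SEM={sem:.3f}")
```

Averaging therefore improves precision but leaves the offset untouched, which is why the table lists calibration and design changes, not repetition, as the remedy for systematic error.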

The Critical Impact of Systematic Error on Research Outcomes

Systematic error is not merely a theoretical concern; it has a demonstrably severe impact on research outcomes, often more so than random error. A simulation study on randomized clinical trials (RCTs) revealed a critical insight: while random errors added to up to 50% of cases produced only a slight inflation of variance in the estimated treatment effect, systematic errors produced significant bias even when introduced to a very small proportion of patients [4]. This finding underscores that resources in clinical trials should be prioritized toward minimizing systematic errors, which can severely bias results, rather than focusing exclusively on random errors, which primarily cause a small loss in statistical power [4].

The impact of systematic error is pervasive across scientific and engineering disciplines, as shown in the following table:

Table 2: Quantitative Impact of Systematic Errors Across Research Domains

| Field/Application | Source of Systematic Error | Documented Impact |
|---|---|---|
| Clinical Trials [4] | Errors in response endpoint favoring one treatment | Severe bias in estimated treatment effect with even small error rates. |
| Digital Image Correlation (DIC) [5] | Use of low-order shape functions to describe complex deformations | Primary source of error; improved algorithms reduced error by ~0.4 pixels. |
| Sinusoidal Encoders [6] | Amplitude mismatch, phase-imbalance, DC offsets in voltage outputs | Introduces error in angular displacement measurement; methods achieved >51% improvement. |
| Diffusion Tensor Imaging (DTI) [7] | B-matrix Spatial Distribution (BSD) errors, non-uniform magnetic fields | Significant disruption of Fractional Anisotropy (FA) and Mean Diffusivity (MD) measures; correction critical for tractography. |

Detection and Methodologies for Systematic Error

The Dimensional Sampling and Open Coding Protocol

A robust, systematic approach for detecting errors in complex systems, such as AI applications, involves a two-step process of creating a bootstrap dataset and analyzing traces [8].

1. Creating a Bootstrap Dataset via Dimensional Sampling: To overcome the initial lack of user data, a strategic synthetic dataset is generated. This involves:

- Defining Meaningful Dimensions: Identify key dimensions that vary across users and use cases. For example:

- Intent/Feature: Check availability, schedule tours, maintenance requests.

- User Persona: Prospective residents, current residents, property managers.

- Query Complexity: Highly ambiguous queries, crystal-clear requests [8].

- Two-Step Synthesis:

- Step 1: Generate combinations of these dimensions (e.g., "Schedule maintenance" + "Current resident" + "Very ambiguous query").

- Step 2: Feed these combinations to a Large Language Model (LLM) to generate naturalistic user queries. These queries are then manually reviewed for realism and accuracy [8].

2. Systematic Error Detection via Open Coding: Once a system is active, complete end-to-end records of user interactions, known as traces, are collected and analyzed using a qualitative technique called open coding [8].

- Process: A researcher reads each trace and writes brief, descriptive notes on observed problems, surprising actions, or unexpected behaviors.

- Outcome: This builds a deep understanding of how the system actually behaves, identifying specific failure modes like tool call hallucinations or poor user experience design [8].

The workflow for this methodology is outlined below:

Figure 2: Workflow for systematic error detection using dimensional sampling and open coding.
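Step 1 of the two-step synthesis is a Cartesian product over the chosen dimensions. The following is a minimal sketch that uses the example dimensions from the text. The function names and the prompt wording are hypothetical, and the actual LLM call in Step 2 is deliberately left out because the source does not specify one.

```python
from itertools import product

# Dimension values come from the example above; everything else is illustrative.
DIMENSIONS = {
    "intent": ["check availability", "schedule tour", "maintenance request"],
    "persona": ["prospective resident", "current resident", "property manager"],
    "complexity": ["highly ambiguous", "crystal clear"],
}

def dimension_combinations(dimensions):
    """Step 1: yield every combination of dimension values as a dict."""
    names = list(dimensions)
    for values in product(*(dimensions[name] for name in names)):
        yield dict(zip(names, values))

def build_prompt(combo):
    """Step 2 input: a hypothetical prompt asking an LLM for one realistic query."""
    return (
        f"Write one realistic user message from a {combo['persona']} "
        f"whose intent is '{combo['intent']}'. "
        f"The message should be {combo['complexity']}."
    )

combos = list(dimension_combinations(DIMENSIONS))
prompts = [build_prompt(combo) for combo in combos]
print(len(prompts))  # 3 intents x 3 personas x 2 complexity levels
print(prompts[0])
```

Each generated query would still need the manual review for realism described in Step 2 before it enters the bootstrap dataset.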

Technical Mitigation in Digital Image Correlation (DIC)

In experimental mechanics, DIC is a powerful technique for full-field deformation measurement, but it is susceptible to undermatched systematic errors. These arise when a low-order shape function (e.g., first-order) is used to describe a higher-order (e.g., second- or third-order) displacement field within a subset [5]. Mitigating them without resorting to computationally expensive higher-order shape functions is an active research area.

Two advanced algorithms for this are:

- The Recovery Method: This method leverages the fact that DIC displacement results can be interpreted as the outcome of a Savitzky-Golay (S-G) filter applied to the actual displacement. It mitigates error by employing a linear combination of results from repeated applications of the S-G filter. Recent work has extended its effectiveness to second-order shape functions [5].

- The Improved Quasi-Gauss Point (IQGP) Method / Zero-Error Point (ZEP) Method: This approach identifies specific theoretical points within a subset where the undermatched error is zero. By using these points for calculation, the error is avoided. An enhanced version based on third-order displacement assumptions (ZEP) has shown an accuracy improvement of nearly 0.4 pixels over the traditional IQGP method [5].

The Scientist's Toolkit: Key Reagents & Materials

The following table details essential solutions and materials referenced in the featured research for mitigating systematic errors.

Table 3: Research Reagent Solutions for Systematic Error Mitigation

| Item / Solution | Function in Error Mitigation |
|---|---|
| Electronic Laboratory Notebook (ELN) [2] | Predefines data entry options to prevent transcriptional errors and manages equipment calibration schedules to prevent calibration drift. |
| B-matrix Spatial Distribution (BSD) Corrector [7] | A software tool that corrects for systematic spatial errors caused by non-uniformity of magnetic field gradients in Diffusion Tensor Imaging. |
| Magnitude-to-Time-to-Digital Converter [6] | A custom electronic circuit designed to quantify systematic errors (offsets, amplitude mismatch, phase-imbalance) in sinusoidal encoders without needing explicit ADCs. |
| Synthetic Data Generation Framework [8] | A systematic method for creating bootstrapped datasets to identify AI system failure modes before real-world deployment, ensuring data integrity from the start. |
| Savitzky-Golay (S-G) Filter Kernel [5] | A digital filter used in the Recovery Method for DIC to smooth data and reduce the impact of undermatched systematic errors by exploiting the low-pass filtering characteristic of DIC. |

Systematic error represents a consistent and insidious threat to measurement accuracy across scientific research and industrial application. Its defining characteristic—consistency over randomness—is what makes it particularly dangerous, as it can introduce severe bias even at low prevalence, a problem that is magnified in high-stakes fields like drug development [4]. Effectively addressing this challenge requires a multi-faceted approach: a deep understanding of its theoretical foundations, rigorous methodologies like dimensional sampling and open coding for detection [8], the application of field-specific advanced algorithms [6] [5] [7], and the strategic implementation of laboratory tools and automation to control human factors [2]. By prioritizing the identification and mitigation of systematic error, researchers can significantly enhance the accuracy, reliability, and overall integrity of their scientific findings.

In scientific research and drug development, the integrity of data is paramount. Measurement error, the difference between an observed value and the true value, is an inherent part of all empirical studies [9]. Understanding the fundamental distinction between systematic and random error is not merely an academic exercise; it is a critical prerequisite for ensuring data accuracy, interpreting experimental results correctly, and making valid conclusions in high-stakes environments like pharmaceutical development. This guide provides an in-depth technical examination of these error types, their impact on measurement accuracy, and the methodologies employed to mitigate them.

Defining Accuracy and Precision in a Research Context

In metrology, the science of measurement, the concepts of accuracy and precision have distinct and crucial meanings, often visualized using the analogy of a dartboard [9].

- Accuracy refers to how close a measurement is to the true value. It is the goal of hitting the bull's-eye. High accuracy indicates a lack of systematic error.

- Precision, however, refers to the reproducibility of a measurement. It is the closeness of agreement between independent measurements obtained under the same conditions. High precision indicates low random error.

A measurement can be precise but inaccurate (all darts are clustered tightly away from the bull's-eye) or accurate but imprecise (darts are scattered evenly around the bull's-eye). The ideal, of course, is a measurement that is both accurate and precise.

Systematic Error: A Deep Dive

Definition and Characteristics

Systematic error, also known as bias, is a consistent, predictable difference between the observed values and the true values [9] [10]. Unlike random errors, these inaccuracies are reproducible and skew results in a specific direction—either consistently higher or consistently lower than the true value [10]. Because they are consistent, systematic errors are not reduced by simply repeating measurements [10].

Systematic errors can originate from various aspects of the research process [9] [10] [11].

- Instrument-Related Errors: Faulty or miscalibrated equipment. Example: A scale that is not zeroed properly consistently adds 0.5 grams to every measurement [10].

- Environmental Errors: Uncontrolled external factors. Example: Temperature fluctuations affecting the performance of an electronic sensor, leading to a predictable drift [10] [12].

- Methodological/Procedural Errors: Flaws in the experimental design or protocol. Example: An observer consistently reading a measurement from an angle (parallax error) [10].

- Human Bias: Subjectivity in data collection. Example: In a clinical trial, a researcher's expectations unconsciously influencing how they assess a patient's response [9].

- Sampling Bias: When the study sample is not representative of the population. Example: Recruiting clinical trial participants primarily from a single demographic group, limiting the generalizability of the results [9].

Quantitative Analysis of Systematic Error Types

Systematic errors can be quantified as offset errors or scale factor errors [12].

Table 1: Quantifiable Types of Systematic Error

| Error Type | Description | Mathematical Model | Real-World Example |
|---|---|---|---|
| Offset Error | A constant value is added to or subtracted from the true measurement. Also called zero-setting or additive error [12]. | \( \text{Observed} = \text{True} + \text{Offset} \) | A pressure sensor always reads 2 kPa above the actual pressure, regardless of the pressure level. |
| Scale Factor Error | The measurement is consistently proportional to the true value. Also called multiplier error [12]. | \( \text{Observed} = \text{Scale Factor} \times \text{True} \) | A flow meter consistently measures 5% less than the actual flow rate across its entire operational range. |

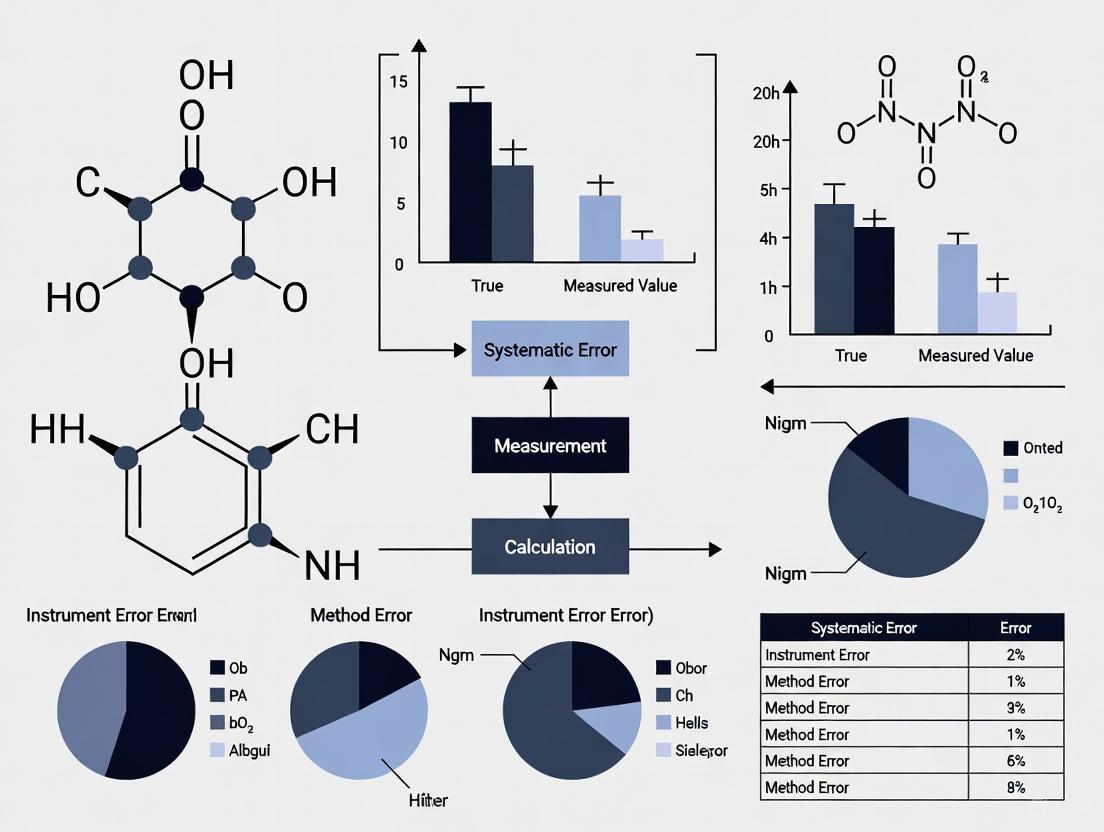

Figure 1: Systematic error introduces a predictable, directional bias.
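The two models behave differently across the measurement range, which a few lines of code make concrete. This is a sketch using the table's two examples (a 2 kPa offset and a 5% low reading); the function names and the test values are illustrative.

```python
def apply_offset_error(true_value, offset):
    """Offset model: Observed = True + Offset."""
    return true_value + offset

def apply_scale_factor_error(true_value, scale_factor):
    """Scale factor model: Observed = Scale Factor * True."""
    return scale_factor * true_value

true_values = [0.0, 50.0, 100.0, 200.0]  # illustrative points across a range

for true_value in true_values:
    offset_reading = apply_offset_error(true_value, offset=2.0)            # reads 2 units high
    scaled_reading = apply_scale_factor_error(true_value, scale_factor=0.95)  # reads 5% low
    print(
        f"true={true_value:6.1f}  "
        f"offset error={offset_reading - true_value:+.1f}  "
        f"scale error={scaled_reading - true_value:+.1f}"
    )
```

The offset error is the same at every point, including zero, whereas the scale factor error vanishes at zero and grows with the magnitude of the quantity. Checking an instrument at both ends of its range is what separates the two.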

Random Error: A Comprehensive Examination

Definition and Characteristics

Random error is a chance difference between the observed and true values that occurs unpredictably [9] [13]. These errors are not consistent in direction or magnitude and are often called "noise" because they obscure the true value, or "signal," of the measurement [9]. Random errors affect the precision of a dataset [9].

A key characteristic of random error is that it has an expected value of zero [13]. This means that while individual measurements may be higher or lower than the true value, the average of these errors over many measurements tends toward zero. This property is what allows random errors to be reduced through statistical means [13].

Sources of random error are typically unpredictable and stem from fluctuations in the experimental system [9] [13] [11].

- Natural Variations: Slight, uncontrollable changes in experimental contexts. Example: In a memory study, participants' performance varies due to unmeasured factors like time of day or stress level.

- Instrument Noise: Random fluctuations in electronic equipment. Example: Flickering in the last digit of a digital multimeter's display [12] [11].

- Human Variability: Limitations in human perception or reaction time. Example: A researcher pressing a stopwatch button with slight temporal variations when measuring reaction times.

- Sampling Errors: Random variations that occur when a sample is used to represent a population [11].

Statistical Properties and the Normal Distribution

When the same quantity is measured repeatedly, the random errors often follow a Gaussian or Normal Distribution [12]. In this distribution:

- The mean (μ) represents the average of all measurements.

- The standard deviation (σ) quantifies the spread of the measurements around the mean.

- Approximately 68% of measurements lie within μ ± 1σ, 95% within μ ± 2σ, and 99.7% within μ ± 3σ [12].

A smaller standard deviation indicates higher precision and lower random error.
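The coverage figures quoted above follow directly from the normal cumulative distribution function. A minimal check using Python's standard library (the helper name `coverage` is ours):

```python
from statistics import NormalDist

standard_normal = NormalDist(mu=0.0, sigma=1.0)

def coverage(k):
    """Probability that a normally distributed value lies within mu +/- k*sigma."""
    return standard_normal.cdf(k) - standard_normal.cdf(-k)

for k in (1, 2, 3):
    print(f"within {k} sigma: {coverage(k):.4f}")
```

These probabilities hold only to the extent that the random errors really are normally distributed, which is worth checking on real data before quoting them.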

Comparative Analysis: Systematic vs. Random Error

Understanding the contrasting features of these errors is critical for diagnosing and addressing data quality issues.

Table 2: A Comparative Analysis of Systematic and Random Error

| Feature | Systematic Error (Bias) | Random Error (Noise) |
|---|---|---|
| Impact on Data | Affects accuracy; creates a directional bias [9]. | Affects precision; creates data scatter [9]. |
| Direction & Pattern | Consistent, predictable, and reproducible [10]. | Unpredictable, varies in direction and magnitude [13]. |
| Cause | Identifiable issues in instrument, method, or environment [10]. | Uncontrollable, often unknown fluctuations [13]. |
| Reduction Strategy | Calibration, improved methods, blinding, triangulation [9] [10]. | Averaging repeated measurements, increasing sample size [9] [13]. |
| Statistical Property | Non-zero mean; does not average out with more data [10]. | Zero expected value; averages out with large sample size [9] [13]. |

Figure 2: Distinct mitigation pathways for systematic and random errors.

Experimental Protocols for Error Assessment and Mitigation

Robust research requires deliberate strategies to quantify and minimize both types of error.

Protocol 1: Identifying and Quantifying Systematic Error

Objective: To detect and measure the magnitude of systematic bias in a measurement system.

- Use of Certified Reference Materials (CRMs): Obtain a standard with a known true value traceable to a national standards body.

- Repeated Measurement: Measure the CRM repeatedly (e.g., n=10) under typical operating conditions.

- Data Analysis: Calculate the mean of the observed values.

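- Bias Calculation: Determine the systematic error as: Bias = Observed Mean - True Value; its magnitude is the absolute error, |Observed Mean - True Value| [11]. Keeping the sign shows whether the system reads high or low. A bias that is large relative to the standard error of the mean indicates systematic error rather than chance variation.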

- Corrective Action: Recalibrate the instrument or adjust the methodology to eliminate the identified offset or scale factor error.
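Protocol 1 can be sketched in a few lines. The readings below are hypothetical (ten measurements of a reference material certified at 10.000 g), and dividing the bias by the standard error of the mean is one simple way, assumed here and not prescribed by the protocol, to judge whether the bias exceeds what random scatter could explain.

```python
import statistics

def estimate_bias(readings, certified_value):
    """Return (bias, standard error of the mean, bias / SEM) for CRM readings."""
    mean = statistics.fmean(readings)
    sem = statistics.stdev(readings) / len(readings) ** 0.5
    bias = mean - certified_value  # signed: positive means the system reads high
    return bias, sem, bias / sem

# Hypothetical data: ten readings of a CRM certified at 10.000 g.
crm_readings = [10.48, 10.52, 10.50, 10.47, 10.53, 10.51, 10.49, 10.50, 10.52, 10.48]

bias, sem, ratio = estimate_bias(crm_readings, certified_value=10.000)
print(f"bias={bias:+.3f} g  SEM={sem:.4f} g  bias/SEM={ratio:.1f}")
```

A ratio far above roughly 2 to 3 suggests that the offset is not a product of random error. A formal analysis would use a one-sample t-test and also account for the uncertainty stated on the CRM certificate.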

Protocol 2: Estimating and Reducing Random Error

Objective: To determine the precision of a measurement system and reduce variability.

- Repeated Sampling: For a stable and homogeneous sample, perform a large number of independent measurements (e.g., n=30).

- Statistical Calculation: Calculate the standard deviation (σ) and variance (σ²) of the dataset. The standard deviation is a direct measure of random error.

- Precision Improvement: To enhance precision, increase the sample size for the overall experiment. The standard error of the mean (SEM = σ/√n) will decrease, leading to a more precise estimate of the population mean [9].
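The calculations in Protocol 2 are sketched below. The 30 measurements are simulated with a fixed seed purely for illustration, and the sample standard deviation (n - 1 denominator) is used as the estimate of σ.

```python
import math
import random
import statistics

def precision_summary(measurements):
    """Return sample size, standard deviation, variance, and SEM."""
    n = len(measurements)
    sd = statistics.stdev(measurements)  # sample SD, an estimate of sigma
    return {
        "n": n,
        "sd": sd,
        "variance": statistics.variance(measurements),
        "sem": sd / math.sqrt(n),
    }

def sem_for_sample_size(sd, n):
    """SEM = sigma / sqrt(n) for a given standard deviation and sample size."""
    return sd / math.sqrt(n)

# Hypothetical data: 30 simulated readings of a stable sample.
rng = random.Random(1)
measurements = [rng.gauss(50.0, 2.0) for _ in range(30)]

summary = precision_summary(measurements)
print(summary)

# Quadrupling the sample size halves the SEM.
for n in (30, 120, 480):
    print(n, round(sem_for_sample_size(summary["sd"], n), 4))
```

Because the SEM falls only with the square root of n, each halving of the random uncertainty costs four times as many measurements.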

The Scientist's Toolkit: Key Reagents and Materials for Error Control

Table 3: Essential Research Tools for Minimizing Measurement Error

| Tool / Reagent | Primary Function | Role in Error Mitigation |
|---|---|---|
| Certified Reference Materials (CRMs) | Substances with certified properties (e.g., purity, concentration). | Serves as a ground truth to identify, quantify, and correct for systematic error via instrument calibration [10]. |
| Calibration Standards | Physical standards for instrument calibration (e.g., standard weights, pH buffers). | Directly corrects for offset and scale factor systematic errors, ensuring instrument accuracy [9] [12]. |
| Automated Liquid Handlers | Robots for precise dispensing of liquids. | Minimizes random errors associated with human variability in pipetting, improving precision and reproducibility. |
| Environmental Control Systems | Chambers to regulate temperature, humidity, and pressure. | Controls external factors that cause both random errors (fluctuations) and systematic errors (drift) [10]. |
| Blinded Sample Kits | Clinician and patient kits where the treatment assignment is hidden. | Mitigates systematic error from observer bias and placebo effects in clinical trials, protecting the accuracy of outcome assessment [9]. |

Impact on Research and Drug Development

The failure to adequately address systematic error has profound implications, particularly in drug development. Systematic errors can lead to false positives (Type I errors) or false negatives (Type II errors) regarding a drug's efficacy or safety [9]. For instance, a systematic bias in how a patient outcome is assessed could lead a researcher to conclude a drug is effective when it is not, potentially resulting in the approval of an ineffective therapy. Conversely, a miscalibrated assay could mask a drug's true therapeutic effect, halting the development of a promising treatment. Because systematic error does not average out, its impact on the accuracy of conclusions is often more severe and insidious than that of random error [9] [10].

The critical distinction between systematic and random error is foundational to scientific integrity. Systematic error compromises accuracy by introducing a directional bias, while random error obscures the true signal by introducing imprecision. For researchers and drug development professionals, a rigorous approach involving regular calibration, methodological triangulation, robust experimental design, and appropriate statistical analysis is non-negotiable. By systematically implementing the protocols and utilizing the tools outlined in this guide, scientists can effectively mitigate these errors, thereby ensuring that their measurements—and the critical decisions based upon them—are both accurate and precise.

Systematic error, or bias, represents a fundamental challenge in scientific research, consistently skewing measurements away from true values and directly compromising data accuracy. This technical guide examines the mechanisms through which systematic error undermines measurement validity, exploring common sources such as miscalibrated instruments, experimenter drift, and flawed sampling methods. We present quantitative data from controlled studies, detail robust experimental protocols for error identification, and provide a structured framework for mitigation strategies, including triangulation, randomization, and rigorous calibration. Designed for researchers and drug development professionals, this whitepaper equips scientific teams with the necessary tools to enhance data integrity and research reproducibility by systematically controlling for bias.

In scientific research, measurement error is defined as the difference between an observed value and the true value of a quantity [9]. Systematic error, a consistent or proportional deviation from the true value, is particularly problematic because it introduces directional bias that cannot be reduced by mere repetition of experiments [9] [14]. Unlike random error, which affects precision and creates scatter around the true value, systematic error shifts the central tendency of measurements, directly undermining the accuracy of research findings [9] [14]. This fundamental distortion affects every stage of the research lifecycle, from initial data collection to final analysis, potentially leading to false positive or false negative conclusions (Type I or II errors) about relationships between variables [9]. In fields like drug development, where decisions have significant clinical and financial consequences, undetected systematic error can invalidate years of research and compromise patient safety. This guide examines the pervasive nature of systematic error through tangible laboratory examples, providing methodologies for its detection, quantification, and mitigation to uphold the validity of scientific research.

Fundamentals of Systematic Error

Systematic error operates differently from random error and requires distinct conceptual understanding and methodological approaches.

Systematic vs. Random Error

- Systematic Error (Bias): A consistent, predictable deviation from the true value that affects all measurements in the same direction [9] [14]. It reduces accuracy and remains constant across repeated measurements.

- Random Error (Noise): Unpredictable, chance variations that cause measurements to scatter randomly around the true value [9] [12]. It reduces precision but averages out with sufficient sample size.

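These two error types map directly onto accuracy and precision: systematic error determines how far the center of the measurements sits from the true value, while random error determines how widely the measurements scatter around that center.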

Quantitative Characterization of Systematic Error

Systematic errors can be quantitatively characterized into specific types, as detailed in the table below.

Table 1: Types and Characteristics of Quantifiable Systematic Errors

| Error Type | Technical Definition | Mathematical Expression | Common Source |
|---|---|---|---|
| Offset Error | Consistent deviation by a fixed amount from true value [9] | \( \text{Observed} = \text{True} + C \) | Improper zeroing of instruments [12] |
| Scale Factor Error | Proportional deviation from true value [9] | \( \text{Observed} = k \times \text{True} \) | Miscalibrated measurement scale [12] |
| Drift Error | Gradual change in measurement bias over time [9] | \( \text{Observed} = \text{True} + f(t) \) | Instrument wear or environmental changes [9] |
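Drift is the one type in this table that depends on time, so detecting it means measuring the same stable standard repeatedly and looking for a trend. The sketch below assumes that f(t) is approximately linear over the observation period, which is a simplification, and uses hypothetical weekly readings of a 10.00 g check weight.

```python
def linear_drift_rate(times, readings):
    """Least-squares slope of readings versus time (reading units per time unit)."""
    n = len(times)
    mean_t = sum(times) / n
    mean_r = sum(readings) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (r - mean_r) for t, r in zip(times, readings))
    return sxy / sxx

# Hypothetical data: the same check weight measured once a week.
days = [0, 7, 14, 21, 28]
readings = [10.00, 10.02, 10.04, 10.06, 10.08]

rate = linear_drift_rate(days, readings)
print(f"estimated drift: {rate:.5f} g/day")
```

An estimated rate that is clearly non-zero relative to the scatter of the readings is a signal to shorten the calibration interval or to investigate the instrument and its environment.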

Real-World Laboratory Examples of Systematic Error

The following examples illustrate how systematic error manifests in practical research settings, supported by quantitative data and experimental observations.

Instrument-Related Errors

Miscalibrated Measurement Devices

A classic example of systematic error occurs with improperly calibrated instruments. A miscalibrated analytical balance that has not been zeroed properly will consistently report masses that are either higher or lower than the true values by a fixed amount (offset error) [9] [12]. Similarly, a poorly calibrated pipette may consistently deliver volumes that deviate proportionally from the intended volume (scale factor error) [9]. These errors are particularly problematic because they directly affect experimental outcomes while remaining undetectable through statistical analysis of the measurements alone [15]. For instance, in pharmaceutical development, consistent over-delivery of an active ingredient by a miscalibrated pipette could lead to inaccurate dosage formulations with potential clinical consequences.

Eye-Tracking Research Case Study

A controlled study examining gaze-tracking accuracy provides quantitative evidence of systematic error in research instrumentation. The study compared systematic errors in monocular (single-eye) signals with those in version signals (the average of both eyes) in 143 participants across two experiments [16].

Table 2: Systematic Error in Eye-Tracking Signals (Quantitative Results)

| Signal Type | Experiment | Participants with Lower Systematic Error | Key Finding |
|---|---|---|---|
| Single Eye Signal | SF (n=79) | 29.5% | Superior accuracy for some subjects |
| Version Signal | SF (n=79) | 70.5% | Better accuracy for majority |
| Single Eye Signal | R038 (n=64) | 25.8% | Consistent pattern across experiments |
| Version Signal | R038 (n=64) | 74.2% | Majority preference but not universal |

This research demonstrates that systematic error characteristics vary significantly between individuals and measurement approaches, challenging the assumption that averaging signals always improves accuracy [16]. The findings underscore the importance of validating measurement approaches for specific research contexts rather than relying on generalized assumptions.

Researcher-Induced Errors

Experimenter Drift

Experimenter drift occurs when observers gradually depart from standardized procedures over extended periods of data collection or coding [9]. This form of systematic error typically manifests as slow, directional changes in measurement practices resulting from fatigue, boredom, or diminishing motivation [9] [17]. For example, in behavioral coding studies, researchers may gradually become more lenient in applying classification criteria, resulting in systematically different measurements between study phases. Similarly, in laboratory settings, technicians might unconsciously develop subtle variations in technique when performing repetitive manual operations, such as cell counting or sample preparation, introducing time-dependent bias into experimental results.

Interviewer and Response Bias

In research involving human subjects, interviewer bias occurs when researchers subtly influence participant responses through nonverbal cues, tone of voice, or questioning manner [18]. Conversely, response bias arises when participants provide answers they believe researchers want to hear, rather than reflecting their genuine experiences or beliefs [18]. This is particularly common in studies involving sensitive topics or subjective assessments, such as patient-reported outcomes in clinical trials. For instance, in pain assessment studies, participants might underreport discomfort if they perceive researchers want to demonstrate treatment efficacy, systematically skewing results toward favorable outcomes [9].

Procedural and Sampling Errors

Selection Bias

Selection bias occurs when certain segments of a population are systematically underrepresented in a study sample [9] [17]. In laboratory research, this might manifest when cell lines with specific growth characteristics are preferentially selected for experiments, potentially skewing results. In clinical research, recruiting participants exclusively from academic medical centers may systematically exclude populations with limited healthcare access, limiting the generalizability of findings [17]. Another form, omission bias, arises when particular groups are entirely excluded from sampling, such as studying cardiovascular drugs exclusively in male populations despite different manifestation and response in females [18].

Measurement Bias

Measurement bias occurs when data collection methods systematically distort findings [17]. This includes using instruments that are inappropriate for specific populations or contexts, such as applying assessment tools validated in intensive care settings to maternity care without proper adaptation [17]. Similarly, relying on retrospective self-reporting for phenomena like pain experiences introduces recall bias, as participants may not accurately remember or report events [17]. In biochemical assays, using different substrate lots without normalizing for lot-to-lot variability can introduce systematic measurement differences across experimental batches.

Methodologies for Detecting Systematic Error

Detecting systematic error requires deliberate experimental strategies, as it cannot be identified through statistical analysis of data sets alone [15].

Calibration Validation Protocols

Regular calibration against known standards provides the most direct method for detecting systematic error in instrumentation [9] [15].

Experimental Protocol: Comprehensive Instrument Calibration

- Reference Standards: Acquire certified reference materials traceable to national or international standards [15].

- Zero-Point Calibration: Verify instrument reading with no applied input; adjust zero setting if necessary [15].

- Span Calibration: Apply reference standard at upper end of measurement range; record deviation from expected value [15].

- Intermediate Point Verification: Test additional reference points across measurement range to check for nonlinearity [15].

- Calibration Curve Construction: Plot measured values against known values to identify systematic patterns of deviation [15].

- Correction Factors: Develop mathematical corrections based on calibration results and apply to subsequent measurements [14].

This protocol should be performed at regular intervals determined by instrument stability and criticality of measurements, with documentation maintained for audit purposes [19].
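The calibration-curve and correction-factor steps above can be sketched in a few lines. This is a minimal illustration that assumes the instrument response is linear; the reference values, readings, and the function names `fit_calibration` and `correct` are hypothetical and not taken from the cited protocols.

```python
from statistics import mean

def fit_calibration(known, measured):
    """Least-squares line: measured = slope * known + intercept."""
    k_bar, m_bar = mean(known), mean(measured)
    sxx = sum((k - k_bar) ** 2 for k in known)
    sxy = sum((k - k_bar) * (m - m_bar) for k, m in zip(known, measured))
    slope = sxy / sxx
    intercept = m_bar - slope * k_bar
    return slope, intercept

def correct(reading, slope, intercept):
    """Invert the fitted line to estimate the true value behind a raw reading."""
    return (reading - intercept) / slope

# Hypothetical certified reference masses (g) and the readings they produced.
known = [0.0, 50.0, 100.0, 150.0, 200.0]
measured = [0.5, 51.5, 102.5, 153.5, 204.5]  # built with a 0.5 g offset and a 2 % gain error

slope, intercept = fit_calibration(known, measured)
# Residuals from the fitted line; large or patterned residuals hint at nonlinearity.
residuals = [m - (slope * k + intercept) for k, m in zip(known, measured)]

print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
print(f"largest residual = {max(abs(r) for r in residuals):.3g}")
print(f"corrected reading for 102.5 g = {correct(102.5, slope, intercept):.2f} g")
```

The intercept estimates the zero-point error and the slope estimates the span error. If the residuals are not close to zero, a single linear correction is probably inadequate and more intermediate reference points are needed.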

Experimental Design for Error Detection

Method Comparison Studies

Comparing results obtained through different measurement methods provides powerful detection of method-specific systematic errors [9]. The following workflow illustrates this triangulation approach:

For example, when measuring stress levels, researchers might use survey responses, physiological recordings, and reaction time measurements concurrently [9]. Consistent results across these methods increase confidence in findings, while divergence indicates potential systematic error in one or more approaches.

Blinded Procedures Implementation

Blinding (masking) prevents researchers and/or participants from knowing group assignments or experimental hypotheses, thereby reducing systematic bias from expectations [9].

Experimental Protocol: Double-Blind Procedure

- Treatment Coding: Assign unique identifiers to experimental conditions rather than descriptive labels.

- Blinded Allocation: Conceal group assignment from both participants and researchers administering interventions.

- Blinded Assessment: Ensure outcome assessors are unaware of group assignments during data collection.

- Blinded Analysis: Implement coding schemes that conceal group identity during preliminary data analysis.

- Unblinding Protocol: Establish formal procedures for revealing group assignments only after primary analyses are complete.

This approach is particularly critical in drug development studies where knowledge of treatment allocation can systematically influence both participant reporting and researcher assessment of outcomes [9].
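As a rough illustration of the treatment-coding and blinded-allocation steps, the sketch below separates what site staff see (an opaque kit code) from the unblinding key. The function name `blinded_allocation`, the `KIT-` code format, and the two-arm design are assumptions made for this example; a real trial would use a validated randomization system.

```python
import random

def blinded_allocation(participant_ids, arms=("drug", "placebo"), seed=None):
    """Return (blinded, key): participant -> kit code, and kit code -> arm."""
    rng = random.Random(seed)
    n = len(participant_ids)
    # Balanced allocation: cycle through the arms, then shuffle the order.
    assignments = [arms[i % len(arms)] for i in range(n)]
    rng.shuffle(assignments)
    # Unique six-digit codes that carry no information about the arm.
    codes = rng.sample(range(100000, 1000000), n)
    blinded, key = {}, {}
    for pid, arm, code in zip(participant_ids, assignments, codes):
        kit = f"KIT-{code}"
        blinded[pid] = kit   # visible to participants, clinicians, and assessors
        key[kit] = arm       # held separately until the unblinding protocol is triggered
    return blinded, key

ids = [f"P{i:02d}" for i in range(1, 9)]
blinded, key = blinded_allocation(ids, seed=2024)
for pid in ids[:3]:
    print(pid, blinded[pid])
```

Keeping `key` in a separate, access-controlled location is what allows outcome assessment and preliminary analysis to proceed on kit codes alone.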

Mitigation Strategies and Research Best Practices

Proactive design considerations and methodological rigor provide the most effective defense against systematic error.

Systematic Error Control Framework

A comprehensive approach to controlling systematic error involves multiple complementary strategies throughout the research lifecycle.

Table 3: Systematic Error Mitigation Framework

| Strategy | Mechanism of Action | Application Example |

|---|---|---|

| Regular Calibration | Corrects inherent instrument deviation from true values [9] [15] | Using certified weights to calibrate laboratory balances monthly |

| Triangulation | Uses multiple methods to measure same construct [9] | Combining surveys, physiological data, and behavioral observations |

| Randomization | Balances unidentified confounding factors across groups [9] | Random assignment to treatment conditions in clinical trials |

| Blinding | Prevents expectation bias from influencing results [9] | Double-blind placebo-controlled drug trials |

| Standardization | Minimizes procedural variation across measurements [17] | Using detailed SOPs for all experimental procedures |

| Training | Reduces operator-induced errors through skill development [19] | Certification requirements for complex instrumentation operation |

Essential Research Reagents and Solutions

Proper selection and use of research materials is fundamental to minimizing systematic error in experimental systems.

Table 4: Essential Research Reagents for Error Control

| Reagent/Solution | Function in Error Control | Technical Specification |

|---|---|---|

| Certified Reference Materials | Calibration standard for instrument validation [15] | Traceable to national standards with documented uncertainty |

| Quality Control Materials | Monitoring measurement stability over time [19] | Stable, well-characterized materials with established target values |

| Standard Operating Procedures | Ensuring procedural consistency across experiments [17] | Step-by-step protocols with acceptance criteria and troubleshooting |

| Calibration Documentation | Maintaining measurement traceability [19] | Records of dates, standards used, corrections applied, and personnel |

Systematic error represents an ever-present challenge in scientific research, with demonstrated potential to significantly compromise measurement accuracy and research validity across diverse laboratory contexts. From fundamental instrumentation issues like miscalibrated scales to complex human factors such as experimenter drift, these biases operate consistently and insidiously, unaffected by statistical analysis or mere repetition of measurements. The case studies and methodologies presented in this whitepaper underscore that systematic error demands systematic solutions—rigorous calibration protocols, method triangulation, blinded procedures, and comprehensive researcher training. For drug development professionals and research scientists, implementing the structured framework outlined herein provides a pathway to enhanced data integrity, more reproducible findings, and ultimately, more valid scientific conclusions. As research methodologies grow increasingly complex, sustained vigilance against systematic error remains foundational to scientific progress and the advancement of knowledge.

Systematic error, often referred to as bias, represents a consistent, reproducible inaccuracy in the measurement process that skews data in a specific direction [9] [10]. Unlike random error, which causes statistical fluctuations around the true value, systematic error introduces a consistent deviation from the true value, leading to biased measurements and potentially false conclusions [20] [21]. This fundamental distinction makes systematic error particularly problematic in scientific research because it cannot be reduced by simply repeating experiments or increasing sample sizes [9] [20]. In the context of measurement accuracy research, understanding, quantifying, and mitigating systematic error is paramount, as it directly compromises the validity and generalizability of research findings [22] [23].

The impact of systematic error extends beyond simple inaccuracy. It can distort findings, reduce the generalizability of study results, lead to invalid conclusions, and ultimately erode trust in scientific research [22]. In fields like drug development, where decisions about efficacy and safety hinge on precise measurements, undetected systematic errors can have profound consequences, including inefficient resource allocation and missed opportunities for discovery [22] [24]. This paper provides a technical examination of how systematic error skews data, explores methodologies for its quantification, and outlines protocols for its mitigation, providing researchers with a framework for safeguarding the integrity of their measurements.

Mechanisms of Data Distortion

Systematic error introduces distortion through two primary quantifiable mechanisms: offset error and scale factor error [9] [6]. An offset error (also called additive or zero-setting error) occurs when a measurement instrument is not calibrated to a correct zero point, causing all measurements to be shifted upwards or downwards by a fixed amount [9]. For example, a weighing scale that always reads 0.5 grams when nothing is on it introduces a constant +0.5 gram offset to every measurement [10]. In contrast, a scale factor error (or multiplicative error) occurs when measurements consistently differ from the true value proportionally, such as by 10% across the entire measurement range [9]. This can result from issues like incorrect signal amplification [10]. These errors can be visualized by plotting observed values against true values, where an offset error appears as a parallel shift from the ideal line, and a scale factor error appears as a change in slope [9].
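The two mechanisms can be combined in one linear model. The symbols b (offset) and k (fractional scale error) are introduced here for illustration and do not come from the cited sources:

```latex
x_{\text{obs}} = (1 + k)\,x_{\text{true}} + b
\qquad\Longrightarrow\qquad
x_{\text{true}} = \frac{x_{\text{obs}} - b}{1 + k}
```

With b = 0.5 g and k = 0 this reproduces the weighing-scale example, and with b = 0 and k = 0.10 every reading is 10% high. On a plot of observed against true values, b is the intercept and 1 + k is the slope.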

The direction and magnitude of these distortions directly threaten measurement accuracy. A systematic error consistently shifts results in one direction, either always increasing or always decreasing the measured values relative to their true values [9] [10]. This consistent bias affects the accuracy of a measurement—how close the observed value is to the true value—while potentially leaving the precision, or reproducibility, of the measurements unaffected [9] [20]. This distinction is crucial; a measurement can be precisely wrong if it is consistently biased. For instance, in a study of locomotive syndrome using the two-step test, young adults demonstrated a fixed bias, where retest results consistently increased compared to initial measurements, skewing the data in a specific, predictable direction [25].

The data distortion caused by systematic error directly facilitates false conclusions in research. By skewing data away from true values, systematic error can lead researchers to erroneously attribute observed effects to specific causes when, in fact, the effects are driven by the bias itself [22]. This can result in both false positive conclusions (Type I errors), where an effect is declared when none exists, and false negative conclusions (Type II errors), where a real effect is missed [9].

In environmental health research, for example, study sensitivity—a study's ability to detect a true effect—is critical. An insensitive study, potentially due to systematic measurement errors, may fail to detect a genuine hazard, leading to a false conclusion of no effect and potentially endangering public health [24] [26]. Systematic errors also limit the generalizability of findings. If the data collection process itself is biased, the results may not be accurately applicable to broader populations or different contexts, undermining the external validity of the research [22]. Furthermore, in systematic reviews and meta-analyses, which aim to synthesize evidence, the presence of uncontrolled systematic error in the primary studies can invalidate the overall conclusions and render the synthesis misleading [23].

Figure 1: Logical Pathway from Systematic Error to False Conclusions. This diagram illustrates how different types of systematic error lead to specific data distortions and ultimately result in various types of false scientific conclusions.

Quantitative Assessment of Systematic Error

Statistical Frameworks and Bias Parameters

Quantitative bias analysis (QBA) provides a methodological framework for estimating the direction and magnitude of systematic error's influence on observed results [21]. Unlike random error, which is quantified through standard deviations and confidence intervals and decreases with increasing sample size, systematic error represents a validity deficit that does not diminish with larger studies [21]. QBA requires the specification of bias parameters: quantitative estimates that characterize the bias and relate the observed data to the data that would be expected in its absence [21].

The specific bias parameters depend on the type of systematic error being assessed. For information bias (measurement error), the key parameters are the sensitivity and specificity of the measurements of exposures, outcomes, or confounders [21]. For selection bias, researchers must estimate participation rates from the target population across different levels of exposure and outcome in the analytic sample [21]. For unmeasured confounding, the required parameters include the prevalence of the unmeasured confounder among the exposed and unexposed groups, as well as the estimated strength of the association between the confounder and the outcome [21].
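To make the role of sensitivity and specificity concrete, here is a minimal sketch of a simple bias analysis for exposure misclassification in a 2x2 table. It uses the standard back-calculation of true exposed counts and assumes the misclassification is nondifferential (the same in cases and controls). The counts and the values Se = 0.85 and Sp = 0.95 are hypothetical.

```python
def correct_exposure(exposed, unexposed, se, sp):
    """Back-calculate true exposed/unexposed counts from observed counts."""
    total = exposed + unexposed
    true_exposed = (exposed - (1 - sp) * total) / (se + sp - 1)
    return true_exposed, total - true_exposed

def odds_ratio(a, b, c, d):
    """a, b = exposed/unexposed cases; c, d = exposed/unexposed controls."""
    return (a * d) / (b * c)

# Hypothetical observed counts.
a, b = 150, 350   # cases: exposed, unexposed
c, d = 100, 400   # controls: exposed, unexposed
se, sp = 0.85, 0.95  # assumed bias parameters

A, B = correct_exposure(a, b, se, sp)
C, D = correct_exposure(c, d, se, sp)

print(f"observed OR      = {odds_ratio(a, b, c, d):.3f}")
print(f"bias-adjusted OR = {odds_ratio(A, B, C, D):.3f}")
```

In this example the adjusted odds ratio is further from 1 than the observed one, which is the expected pattern when nondifferential misclassification of a binary exposure has pulled the estimate toward the null.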

Methodologies for Quantitative Bias Analysis

Table 1: Methods for Quantitative Bias Analysis

| Method | Key Features | Data Requirements | Output | Best Use Cases |

|---|---|---|---|---|

| Simple Bias Analysis | Uses single values for bias parameters | Summary-level data (e.g., 2x2 table) | Single bias-adjusted estimate | Initial, rapid assessment of a single bias source |

| Multidimensional Bias Analysis | Applies multiple sets of bias parameters | Summary-level data | Set of bias-adjusted estimates | Contexts with uncertainty about parameter values |

| Probabilistic Bias Analysis | Specifies probability distributions for parameters; uses random sampling | Individual-level or summary-level data | Frequency distribution of revised estimates | Most robust analysis; incorporates maximum uncertainty |

Implementing QBA typically follows a structured process [21]. First, researchers must determine whether QBA is warranted, typically when results contradict prior findings or when concerns about systematic error exist. Next, they select which specific biases to address, informed by directed acyclic graphs (DAGs) that depict relationships between variables. Then, an appropriate modeling approach is selected based on the complexity needed, balancing computational intensity with the desired incorporation of uncertainty. Finally, sources of information for the bias parameters are identified, which can include internal or external validation studies, scientific literature, or expert opinion [21].
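A probabilistic bias analysis replaces single bias-parameter values with distributions. The sketch below is one possible implementation for exposure misclassification, assuming triangular distributions for sensitivity and specificity. The distribution limits, the counts, and the function names are hypothetical, and the resulting interval reflects only uncertainty in the bias parameters, not random sampling error.

```python
import random
from statistics import median, quantiles

def adjusted_or(a, b, c, d, se, sp):
    """Bias-adjusted odds ratio, or None when a corrected cell is not positive."""
    denom = se + sp - 1
    if denom <= 0:
        return None
    A = (a - (1 - sp) * (a + b)) / denom   # corrected exposed cases
    C = (c - (1 - sp) * (c + d)) / denom   # corrected exposed controls
    B, D = (a + b) - A, (c + d) - C
    if min(A, B, C, D) <= 0:
        return None
    return (A * D) / (B * C)

def probabilistic_bias_analysis(a, b, c, d, n_draws=5000, seed=0):
    """Return (median, 2.5th percentile, 97.5th percentile) of adjusted ORs."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        se = rng.triangular(0.75, 0.95, 0.85)  # low, high, mode (assumed)
        sp = rng.triangular(0.90, 1.00, 0.95)  # low, high, mode (assumed)
        estimate = adjusted_or(a, b, c, d, se, sp)
        if estimate is not None:               # drop impossible parameter combinations
            draws.append(estimate)
    cuts = quantiles(draws, n=40)              # cut points every 2.5 %
    return median(draws), cuts[0], cuts[-1]

med, lo, hi = probabilistic_bias_analysis(150, 350, 100, 400)
print(f"median adjusted OR = {med:.2f} (2.5th-97.5th percentile: {lo:.2f} to {hi:.2f})")
```

Running the same function with a single fixed pair of values would reduce it to the simple bias analysis in Table 1, and looping over a grid of values would give the multidimensional variant.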

The result of a QBA is a bias-adjusted estimate that more accurately reflects the true relationship under investigation. For example, in a study of sinusoidal encoders, researchers quantified three systematic errors—offset, amplitude mismatch, and phase-imbalance—and implemented compensation functions that accurately estimated the true shaft angle [6]. Similarly, in observational oral health research, QBA has been applied to provide crucial context for interpreting associations, such as that between preconception periodontitis and time to pregnancy [21].

Experimental Protocols for Identifying Systematic Error

The Two-Step Test Protocol for Locomotive Syndrome

A recent study investigating systematic errors in the two-step test for locomotive syndrome risk assessment provides a detailed protocol for identifying fixed bias in measurement tools [25]. This cross-sectional study involved 95 young adults and 40 older adults who performed the two-step test twice within a 7-day interval [25]. The test requires participants to stand at a starting line with toes aligned and take two consecutive steps with the longest possible stride, bringing their feet together at the end [25]. The two-step length is measured, and a two-step value is calculated by dividing this length by the participant's height [25].

Key methodological details include [25]:

- Measurement Standardization: Two physical therapists, each with over 10 years of clinical experience, conducted measurements using standardized verbal instructions and a dedicated two-step test mat.

- Test Administration: Participants, wearing shoes, performed the test twice in each session, and the maximum value was used for analysis.

- Height Measurement: For young adults, self-reported height was used, while for older adults, height was measured by physical therapists using a validated method.

- Analysis Method: Bland-Altman analysis was used to assess fixed and proportional bias, and minimal detectable change (MDC) and limits of agreement (LOA) were calculated.

The study found that in young adults, the two-step test length was 279.2 ± 24.4 cm with a mean difference of 8.4 ± 12.3 cm between tests, indicating a fixed bias where results tended to increase during retesting [25]. In contrast, no systematic errors were detected in older adults [25]. The LOA ranged from -11.5 to 28.2 cm for length in young adults, and the MDC in older adults was 26.9 cm for length and 0.17 cm/height for the test value [25]. These quantitative measures provide thresholds for identifying clinically meaningful changes beyond measurement error.
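The Bland-Altman quantities named above can be computed directly from paired test and retest values. The sketch below uses hypothetical data, not the data from [25]. It makes three simplifying assumptions: a normal approximation for the confidence interval of the mean difference, a regression slope as a screen for proportional bias, and MDC95 = 1.96 x SD of the differences, which is one common formulation.

```python
from math import sqrt
from statistics import mean, stdev

def bland_altman(test, retest):
    """Summarise test-retest agreement for paired measurements."""
    diffs = [r - t for t, r in zip(test, retest)]
    pair_means = [(t + r) / 2 for t, r in zip(test, retest)]
    n = len(diffs)
    bias = mean(diffs)                 # mean difference (retest - test)
    sd = stdev(diffs)                  # sample SD of the differences
    ci_half = 1.96 * sd / sqrt(n)      # normal approximation; a t quantile suits small n better
    m_bar = mean(pair_means)
    # Slope of differences on pair means: clearly non-zero suggests proportional bias.
    slope = (sum((m - m_bar) * (d - bias) for m, d in zip(pair_means, diffs))
             / sum((m - m_bar) ** 2 for m in pair_means))
    return {
        "bias": bias,
        "loa": (bias - 1.96 * sd, bias + 1.96 * sd),
        "fixed_bias": abs(bias) > ci_half,  # CI of the mean difference excludes zero
        "proportional_slope": slope,
        "mdc95": 1.96 * sd,                 # 1.96 * sqrt(2) * SEM, with SEM = sd / sqrt(2)
    }

# Hypothetical two-step lengths (cm) for six participants.
test = [270, 285, 260, 300, 275, 290]
retest = [280, 290, 272, 305, 281, 301]

result = bland_altman(test, retest)
print(f"bias = {result['bias']:.2f} cm, fixed bias detected: {result['fixed_bias']}")
print(f"LOA = {result['loa'][0]:.2f} to {result['loa'][1]:.2f} cm")
```

A change in an individual's score that falls inside the limits of agreement cannot be distinguished from measurement error, which is why these thresholds matter for interpreting retest results.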

Sinusoidal Encoder Calibration Protocol

Research on sinusoidal encoders (SEs) used for angular position measurement offers a technical protocol for quantifying systematic errors in instrumentation [6]. SEs ideally produce two voltage outputs that vary as perfect sine and cosine functions of the shaft angle, but practical devices exhibit systematic errors including offset, amplitude mismatch, and phase imbalance [6]. The mathematical representation of these errors is [6]:

Where α and β are DC offset voltages, τ represents amplitude mismatch, and ψ represents phase imbalance [6].
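One commonly used model that is consistent with these symbol definitions is sketched below. It is an assumption made for illustration and not necessarily the exact expression given in [6]. The names V_m (nominal amplitude) and θ (shaft angle) are introduced here, and placing both the amplitude mismatch and the phase imbalance on the cosine channel is a modelling choice.

```latex
\begin{aligned}
v_{s}(\theta) &= \alpha + V_m \sin\theta \\
v_{c}(\theta) &= \beta + (1 + \tau)\,V_m \cos(\theta + \psi)
\end{aligned}
```

For an ideal encoder α = β = τ = ψ = 0, and the shaft angle follows directly as θ = atan2(v_s, v_c).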

Experimental methodology for quantifying these errors involves [6]:

- Instrumentation: Two magnitude-to-time-to-digital converter circuits (DDI-1 for static conditions, DDI-2 for dynamic conditions) built using off-the-shelf electronic components, without requiring explicit analog-to-digital converters or look-up tables.

- Static Testing: For Method I, the shaft is rotated to five specific angles (0°, 90°, 180°, 270°, and 45°), and output voltages are measured to calculate error parameters.

- Dynamic Testing: DDI-2 employs an intermediate signal conditioner and modified direct-digitizer to process SE outputs under continuous shaft rotation.

- Compensation: After quantifying errors, compensation functions are applied to accurately estimate the true shaft angle.

The efficiency of these methods was quantified through simulation and experimental studies, with Method I achieving 88.33% efficiency and Method II achieving 95.45% efficiency in correcting systematic errors [6]. This protocol demonstrates how systematic errors can be rigorously quantified and compensated for in precision measurement instruments.
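The quantify-then-compensate idea can be illustrated in software. The sketch below is not Method I or Method II from [6]. It assumes a simple error model (offsets on both channels, amplitude mismatch and phase imbalance on the cosine channel) and estimates the parameters from noise-free readings at 0°, 90°, 180°, and 270° only. All function names and parameter values are hypothetical.

```python
from math import sin, cos, atan2, hypot, radians, degrees

def encoder_outputs(theta, alpha, beta, tau, psi, amp=1.0):
    """Assumed error model for the sine and cosine channels (theta in radians)."""
    v_sin = alpha + amp * sin(theta)
    v_cos = beta + (1 + tau) * amp * cos(theta + psi)
    return v_sin, v_cos

def estimate_errors(read):
    """Estimate (alpha, beta, tau, psi, amp) from four static shaft positions.

    `read(theta)` returns (v_sin, v_cos) with the shaft held at theta radians.
    """
    _, c0 = read(radians(0))
    s90, c90 = read(radians(90))
    _, c180 = read(radians(180))
    s270, c270 = read(radians(270))
    alpha = (s90 + s270) / 2          # sine-channel offset
    amp = (s90 - s270) / 2            # sine-channel amplitude
    beta = (c0 + c180) / 2            # cosine-channel offset
    in_phase = (c0 - c180) / 2        # (1 + tau) * amp * cos(psi)
    quadrature = (c270 - c90) / 2     # (1 + tau) * amp * sin(psi)
    psi = atan2(quadrature, in_phase)
    tau = hypot(quadrature, in_phase) / amp - 1
    return alpha, beta, tau, psi, amp

def compensate(v_sin, v_cos, alpha, beta, tau, psi, amp):
    """Recover the shaft angle (radians) from raw outputs and estimated errors."""
    s = (v_sin - alpha) / amp                       # sin(theta)
    c_shifted = (v_cos - beta) / ((1 + tau) * amp)  # cos(theta + psi)
    c = (c_shifted + s * sin(psi)) / cos(psi)       # cos(theta)
    return atan2(s, c)

# Hypothetical encoder with small systematic errors.
def read(theta):
    return encoder_outputs(theta, alpha=0.05, beta=-0.03, tau=0.04, psi=radians(2.0))

params = estimate_errors(read)
theta_true = radians(37.0)
v_sin, v_cos = read(theta_true)
print(f"uncompensated: {degrees(atan2(v_sin, v_cos)):.3f} deg")
print(f"compensated:   {degrees(compensate(v_sin, v_cos, *params)):.3f} deg")
```

With real hardware the readings are noisy, so each static position would normally be averaged over many samples before the parameters are estimated.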

Figure 2: Experimental Workflow for Systematic Error Assessment. This workflow outlines the key steps in designing and executing an experiment to identify, quantify, and compensate for systematic errors in research measurements.

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Research Reagent Solutions for Systematic Error Investigation

| Tool/Reagent | Function/Application | Specific Examples from Research |

|---|---|---|

| Bland-Altman Analysis | Statistical method to assess agreement between two measurement methods, including fixed and proportional bias | Used to identify systematic errors in the two-step test for locomotive syndrome [25] |

| Quantitative Bias Analysis (QBA) | Set of methodological techniques to estimate direction and magnitude of systematic error's influence | Applied in observational oral health research to adjust for confounding, selection bias, and information bias [21] |

| Magnitude-to-Time-to-Digital Converters | Electronic circuits that quantify systematic errors in sinusoidal encoders without requiring explicit ADCs | DDI-1 (for static conditions) and DDI-2 (for dynamic conditions) used to quantify offset, amplitude mismatch, and phase imbalance [6] |

| Directed Acyclic Graphs | Visual tools for identifying and communicating hypothesized bias structures in observational research | Used in QBA to depict relationships between analysis variables and their measurements [21] |

| Calibration Standards | Reference materials with known values to check instrument accuracy and identify systematic errors | Certified thermocouples for temperature sensors; reference standards for industrial pressure sensors [10] |

Systematic error represents a fundamental challenge to measurement accuracy across scientific disciplines, consistently skewing data in specific directions and leading to potentially false conclusions [9] [10]. Unlike random error, which can be reduced through repeated measurements, systematic error arises from flaws in measurement systems, study design, or analytical procedures and persists regardless of sample size [20] [21]. The quantitative impact of systematic error can be substantial, as demonstrated in the two-step test study where young adults showed a mean difference of 8.4 ± 12.3 cm between tests due to fixed bias [25].

Robust methodological approaches, including Bland-Altman analysis, quantitative bias analysis, and specialized calibration protocols, provide researchers with powerful tools to quantify, account for, and mitigate systematic error [25] [6] [21]. By implementing these techniques and reporting bias-adjusted estimates alongside traditional measures, researchers can enhance the validity and reliability of their findings. In an era of increasing emphasis on research reproducibility and evidence-based decision making, particularly in critical fields like drug development, rigorously addressing systematic error is not merely a methodological refinement but an essential component of scientific integrity.

Why Systematic Error is a Greater Threat than Random Error to Research Validity

In the pursuit of scientific truth, particularly within drug development and biomedical research, distinguishing signal from noise is paramount. All empirical research is subject to measurement error, but not all errors are created equal. Systematic error, or bias, introduces a consistent distortion that compromises the validity of research findings, that is, the degree to which a study accurately reflects the true state of the phenomenon under investigation. In contrast, random error, stemming from unpredictable fluctuations, primarily affects the precision or reliability of measurements. This whitepaper, framed within a broader thesis on measurement accuracy, delineates the profound threat systematic error poses to research integrity. We argue that while random error can be quantified and mitigated through statistical means, systematic error is a more insidious threat due to its capacity to produce consistently biased results that statistical methods cannot easily correct, leading to false conclusions, wasted resources, and potentially unsafe clinical decisions. Supported by quantitative data, detailed experimental protocols, and visual aids, this guide provides researchers with the frameworks necessary to identify, assess, and correct for these critical errors.

The acquisition of knowledge in experimental science is an exercise in error management. The International Vocabulary of Metrology defines measurement error as the difference between a measured value and the true value [27]. This error is conceptually partitioned into two fundamental components: systematic error (bias) and random error (chance) [28] [29]. Understanding their distinct natures, origins, and impacts is the first step in safeguarding research validity.

- Systematic Error (Bias): A measurement error that is consistent and reproducible across measurements. It arises from flaws in the measurement system, including instrument calibration, study design, or observer bias [28] [27]. It causes measurements to systematically deviate from the true value in one direction (e.g., always too high or always too low).

- Random Error (Chance): A measurement error caused by unpredictable and inherent fluctuations in the measurement process. These fluctuations can stem from environmental variability, instrumental sensitivity, or procedural inconsistencies [28] [13]. Random error causes measurements to scatter randomly around the true value.

The relationship between these errors and core measurement properties is elegantly summarized by the target analogy [30]. As shown in the diagram below, accuracy—proximity to the true value—is determined by systematic error, while reliability (or precision)—the consistency of repeated measurements—is determined by random error.

Figure 1: Target Analogy for Accuracy and Reliability. This visualization illustrates how random error affects reliability (consistency) and systematic error affects accuracy (correctness).

Comparative Analysis: Systematic vs. Random Error

A thorough understanding of the characteristics of each error type reveals why systematic error presents a more formidable challenge to research validity. The table below provides a structured comparison.

Table 1: Fundamental Characteristics of Systematic and Random Error

| Characteristic | Systematic Error (Bias) | Random Error (Chance) |

|---|---|---|

| Definition | Consistent, directional deviation from the true value [28] | Unpredictable, non-directional scatter around the true value [28] [13] |

| Impact on | Accuracy and Validity [28] [30] | Reliability (Precision) [28] [30] |

| Cause | Flawed methods, uncalibrated instruments, confounding variables [28] [29] | Natural variability, environmental noise, measurement sensitivity limits [28] [12] |

| Predictability | Predictable in direction and magnitude (in principle) [28] | Unpredictable for any single measurement [13] |

| Statistical Mitigation | Cannot be reduced by averaging or increasing sample size [28] | Can be reduced by averaging repeated measurements and increasing sample size [28] [13] |

| Detection | Difficult; requires comparison against a known standard or alternative method [28] [27] | Easier; revealed by the variability (e.g., standard deviation) of repeated measurements [28] |

| Correction | Can be corrected if identified and quantified [27] | Cannot be corrected, only reduced [28] |

The Core Threat: Impact on Research Validity

The primary reason systematic error is considered more severe is its direct and uncompensating attack on validity—the cornerstone of credible research. Validity is the degree to which a study accurately measures what it purports to measure [29] [31].

- Systematic Error and Validity: Systematic error introduces a bias that severs the link between the measurement and the underlying truth. An invalid measurement, no matter how precise, is meaningless. For instance, in a clinical trial, an assay whose calibration reads consistently high will overestimate a drug's effect in every sample, leading to a false conclusion about its efficacy. This bias can render an entire body of research unreliable [23].

- Random Error and Reliability: Random error, by contrast, affects reliability. It introduces uncertainty but does not systematically skew the result. The mean of many measurements will converge on the true value, thanks to the law of large numbers [28] [13]. While it can obscure real effects (leading to Type II errors), it does not invent them.
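A short simulation makes the contrast concrete. It assumes Gaussian random error and a fixed additive bias; the true value, bias, and noise level are hypothetical.

```python
import random
from statistics import mean

def simulate_mean(true_value, bias, noise_sd, n, rng):
    """Mean of n simulated measurements with fixed bias and Gaussian noise."""
    return mean(true_value + bias + rng.gauss(0, noise_sd) for _ in range(n))

rng = random.Random(42)
true_value = 100.0

print("n, error of unbiased mean, error of biased mean")
for n in (10, 1000, 100000):
    unbiased = simulate_mean(true_value, 0.0, 5.0, n, rng)
    biased = simulate_mean(true_value, 2.0, 5.0, n, rng)
    print(n, round(unbiased - true_value, 3), round(biased - true_value, 3))
```

As n grows, the error of the unbiased mean shrinks toward zero, while the error of the biased mean settles near the bias of 2.0. More data makes the biased estimate more precise, not more accurate.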

Furthermore, systematic error is notoriously resistant to statistical correction. As noted in high-throughput screening (HTS), applying sophisticated error-correction methods to data that does not contain systematic error can, paradoxically, introduce a bias [32]. This underscores that statistical procedures are not a panacea for fundamentally flawed designs.

Case Study: Systematic Error in High-Throughput Screening (HTS)

The field of drug discovery provides a compelling case study. High-Throughput Screening (HTS) involves testing thousands of chemical compounds to identify potential drug candidates (hits). The process is highly automated and exceptionally vulnerable to systematic artefacts [32].

Systematic errors in HTS can be caused by robotic failures, pipette malfunctions, temperature gradients across plates, or reader effects [32]. These errors are often location-based, affecting specific rows, columns, or well locations across multiple plates. This perturbs the data, potentially causing false positives (compounds appearing active when they are not) or false negatives (missing truly active compounds) [32].

The hit distribution surface is a powerful tool for visualizing this systematic bias. In an ideal, error-free experiment, hits are evenly distributed across the well locations of the screening plates. However, when systematic error is present, clear patterns emerge, such as over-representation of hits in specific rows or columns [32]. The workflow below outlines the process of identifying and correcting for these errors.

Figure 2: Workflow for Detecting and Correcting Systematic Error in HTS.

Experimental Protocol: Assessing Systematic Error with Hit Distribution

Aim: To statistically assess the presence of location-dependent systematic error in an HTS assay prior to hit selection.

Background: The hit selection process uses a threshold (e.g., μ - 3σ) to identify active compounds. A non-uniform distribution of these hits across the plate matrix suggests systematic bias [32].

Methodology:

- Data Collection: Collect raw measurement data from the HTS run, encompassing all plates and well locations.

- Hit Identification: Apply a predetermined hit selection threshold to the normalized data to label each well as a hit or non-hit.

- Create Hit Distribution Surface: For each well location (e.g., A1, A2, ... P24), compute the total number of hits across all plates.

- Visual Inspection: Plot the hit distribution surface as a heat map. Visual identification of row, column, or edge patterns indicates potential systematic error.

- Statistical Testing: Perform statistical tests to formally confirm the presence of systematic error. As recommended by [32], a Student's t-test can be used:

- Null Hypothesis (H₀): The mean measurement values from different plate regions (e.g., center vs. edge) are equal.

- Alternative Hypothesis (H₁): The mean measurement values from different plate regions are not equal.

- A statistically significant p-value (e.g., p < 0.05) allows rejection of the null hypothesis, which is evidence of location-dependent systematic error.

- Decision Point: If systematic error is confirmed, apply a robust normalization method like B-score normalization [32]. This method uses a two-way median polish to remove row and column effects within each plate, and then standardizes the residuals by the median absolute deviation (MAD), making the data comparable across plates.

The Scientist's Toolkit: Essential Reagents and Materials for HTS

Table 2: Key Research Reagents and Materials for HTS Experiments

| Item | Function in HTS |

|---|---|

| Multi-well Microplates (e.g., 384, 1536-well) | The standardized platform for holding compound libraries and biological assays during screening. |

| Compound Libraries | Collections of thousands of chemical compounds that are screened for biological activity against a target. |

| Cell Lines / Enzymes / Receptors | The biological target used in the assay to identify compounds that modulate its activity. |

| Detection Reagents (e.g., Fluorescent Dyes, Luminescent Substrates) | Enable the quantification of biological activity (e.g., enzyme activity, cell viability) within the assay. |

| Positive & Negative Controls | Substances with known activity levels used to normalize data, monitor assay performance, and detect plate-to-plate variability [32]. |

| Liquid Handling Robotics | Automated systems for precise and rapid dispensing of compounds, reagents, and cells into microplates. |

Quantitative Data and Error Mitigation Strategies

The two error types are quantified in different ways, which reinforces the distinction between them.

Quantifying the Errors

Table 3: Methods for Quantifying and Mitigating Systematic and Random Error

| Aspect | Systematic Error | Random Error |

|---|---|---|

| Quantification | Expressed as bias or percentage recovery [27]. Calculated as: Mean of Measurements - True Value. | Expressed as standard deviation or variance. For a set of repeated measurements, it is quantified by the Standard Error of the Mean (SEM) or Median Absolute Deviation (MAD) [32] [33]. |

| Statistical Indicator | Not revealed by within-study statistics; confidence intervals narrow around the biased value and may exclude the true value. | p-values and confidence intervals directly express the uncertainty introduced by random error [29]. |

| Primary Mitigation Strategy | Calibration against certified reference materials [28] [27]. Triangulation using multiple measurement techniques [28]. Improved study design (e.g., randomization, blinding) to control for confounding biases [28] [29]. | Averaging repeated measurements [28] [13]. Increasing sample size [28]. Using instruments with higher precision and controlling environmental variables [28] [12]. |
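The quantities named in Table 3 can be computed directly from a set of replicate measurements of a reference material. The sketch below is illustrative only: the function name `error_summary`, the replicate readings, and the reference value of 10.0 are assumptions made for the example.

```python
from math import sqrt
from statistics import mean, median, stdev


def error_summary(measurements, true_value):
    """Summarize systematic error (bias, recovery) and random error (SD, SEM, MAD)."""
    m = mean(measurements)
    sd = stdev(measurements)  # sample standard deviation (n - 1 denominator)
    med = median(measurements)
    return {
        "bias": m - true_value,                    # mean of measurements - true value
        "recovery_pct": 100.0 * m / true_value,    # percentage recovery
        "sd": sd,
        "sem": sd / sqrt(len(measurements)),       # standard error of the mean
        "mad": median(abs(x - med) for x in measurements),
    }


# Hypothetical replicate readings of a reference material certified at 10.0 units.
readings = [10.4, 10.6, 10.5, 10.3, 10.7]
summary = error_summary(readings, true_value=10.0)
```

For these readings the bias is 0.5 units (105% recovery) while the standard deviation is about 0.16, which shows tightly clustered, precise measurements that are nonetheless inaccurate.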

The Inseparability of Bias and Confounding

A critical manifestation of systematic error in observational research is confounding bias. A confounding variable is one that is associated with both the exposure (or independent variable) and the outcome (or dependent variable) but is not on the causal pathway [29]. Failure to control for confounders leads to a systematic miscalculation of the effect of interest.

Example from Research: A cohort study in Norway initially found that maternal preeclampsia increased the odds of a child having cerebral palsy (Odds Ratio: 2.5). However, after adjusting for the confounding variables "small for gestational age" and "preterm birth," the association was reversed, suggesting preeclampsia could be a protective factor for certain preterm infants [29]. This dramatic reversal highlights how an unaccounted-for confounder (prematurity) can introduce severe systematic error, completely invalidating the initial conclusion.
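How adjustment can reverse an association may be easier to see with numbers. The sketch below uses entirely hypothetical counts (they are not taken from the cited study) and assumes a Mantel-Haenszel pooled odds ratio as the adjustment method, which is one common choice and not necessarily the one used by the original authors. The stratum labels and function names are likewise assumptions.

```python
def odds_ratio(a, b, c, d):
    """Odds ratio for a 2x2 table.

    a, b = exposed cases / exposed non-cases
    c, d = unexposed cases / unexposed non-cases
    """
    return (a * d) / (b * c)


def mantel_haenszel_or(strata):
    """Pooled odds ratio across strata, each given as (a, b, c, d) counts."""
    num = sum(a * d / (a + b + c + d) for a, b, c, d in strata)
    den = sum(b * c / (a + b + c + d) for a, b, c, d in strata)
    return num / den


# Hypothetical counts for illustration only, NOT data from the cited study.
# The exposure is far more common in the high-risk (preterm) stratum.
preterm = (20, 180, 15, 85)
term = (1, 199, 20, 2980)

# Crude analysis: collapse the strata into a single 2x2 table.
crude = odds_ratio(*(sum(cell) for cell in zip(preterm, term)))
# Adjusted analysis: pool the stratum-specific odds ratios.
adjusted = mantel_haenszel_or([preterm, term])
```

With these counts the crude odds ratio is about 4.85, while both stratum-specific odds ratios are below 1 and the pooled estimate is about 0.64. The apparent harm comes entirely from the uneven distribution of the exposure across strata.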

Within the rigorous framework of measurement accuracy research, the threat posed by systematic error is of a different magnitude than that of random error. Random error is a source of noise that can be managed and reduced through established statistical practices, and its effects are quantified in the confidence intervals around an estimate. Systematic error, however, is a silent saboteur of validity. It introduces a directional bias that cannot be mitigated by increasing sample size or repetition. It produces results that are consistently wrong, leading to false scientific conclusions, misdirected research resources, and, in fields like drug development, potential risks to human health.

A comprehensive quality assurance strategy must therefore prioritize the identification and elimination of systematic error. This involves rigorous study design, including randomization and blinding; diligent instrument calibration; the use of appropriate controls; and statistical testing for bias prior to data correction. Researchers must first confirm the presence of significant systematic error before applying corrective algorithms, because correcting data that contain no such error can itself introduce bias [32]. Ultimately, the path to valid and trustworthy research findings is paved with a relentless vigilance against systematic error.

Detecting Systematic Error: Methodologies for HTS and Complex Assays

In measurement accuracy research, statistical tests serve as fundamental tools for detecting differences, assessing distributions, and evaluating relationships within datasets. However, the validity of any statistical conclusion is profoundly influenced by the presence of systematic error, also known as bias. Systematic error represents consistent, non-random deviations from true values that can skew results in a particular direction and compromise research integrity [9] [14]. Unlike random errors, which tend to cancel out over repeated measurements and primarily affect precision, systematic errors persist throughout the data collection process and directly impact accuracy, potentially leading to false conclusions and flawed decision-making [34]. This technical guide examines three essential statistical tests—t-test, Kolmogorov-Smirnov, and Chi-square—within the context of systematic error, providing researchers in scientific and drug development fields with methodologies to detect, quantify, and mitigate bias in their measurements.

The distinction between random and systematic error is crucial for understanding measurement reliability. Random error, or "noise," causes variability around the true value with no consistent pattern, while systematic error, or "bias," creates a consistent directional shift from the true value [9]. In practice, systematic errors are generally more problematic than random errors because they cannot be reduced simply by increasing sample size and may lead to Type I or II errors in hypothesis testing [9] [34]. Common sources of systematic error include improperly calibrated instruments, flawed sampling methods, experimenter bias, and model assumption violations [14] [22]. The following sections explore specific statistical tests and their interactions with systematic error, providing frameworks for maintaining data integrity in research settings.

The Problem of Systematic Error in Research

Defining Systematic Error and Its Impact

Systematic error represents a fixed or predictable deviation from the true value that affects all measurements in a consistent direction [14]. These errors are particularly problematic in research because they introduce inaccuracy that cannot be eliminated through statistical averaging alone. As defined by metrology standards, systematic error "is a fixed deviation that is inherent in each and every measurement" [14], meaning the same biasing factor influences all observations within a dataset. This consistent directional influence distinguishes systematic error from random variation and makes it particularly dangerous for drawing valid scientific conclusions.

The impact of systematic error on research outcomes is multifaceted and potentially severe. When systematic errors go undetected or unaddressed, they can:

- Distort findings by consistently shifting results in one direction, creating false patterns or obscuring true effects [22]

- Reduce generalizability by introducing bias that limits applicability to broader populations or contexts [22]

- Invalidate conclusions when researchers erroneously attribute observed effects to specific causes rather than recognizing underlying measurement bias [9]

- Lead to Type I and II errors in hypothesis testing, resulting in false positives (detecting effects that don't exist) or false negatives (missing genuine effects) [9]

- Compromise decision-making in critical fields like drug development, where biased measurements could affect safety and efficacy evaluations [22]

Contrasting Random and Systematic Error

Understanding the distinction between random and systematic error is essential for proper research design and interpretation. Random error represents unpredictable fluctuations around the true value that occur due to natural variability in measurement processes, while systematic error creates consistent directional bias across all measurements [9]. This fundamental difference has important implications for how researchers address each type of error.

Table 1: Comparison of Random and Systematic Errors

| Characteristic | Random Error | Systematic Error |
|---|---|---|
| Definition | Unpredictable fluctuations around true value | Consistent directional deviation from true value |
| Impact on measurements | Creates imprecision (scatter) | Creates inaccuracy (bias) |
| Directionality | Non-directional (varies randomly) | Directional (consistent shift) |
| Effect of increasing sample size | Reduces impact through averaging | No reduction through averaging |
| Detectability | Revealed through repeated measurements | Difficult to detect without reference standards |
| Common sources | Natural variability, instrument sensitivity | Calibration errors, flawed protocols, selection bias |

Random error mainly affects precision—how reproducible measurements are under equivalent circumstances—while systematic error affects accuracy—how close observed values are to true values [9]. In practical terms, when only random error is present, repeated measurements of the same quantity will tend to cluster around the true value, with some observations higher and others lower. When systematic error is present, all measurements are shifted in a consistent direction away from the true value [9]. Critically, increasing sample size can help mitigate the effects of random error but does nothing to address systematic error, which requires different mitigation strategies including calibration, randomization, and triangulation [9] [34].
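The claim that larger samples reduce random error but leave systematic error untouched can be checked with a small simulation. This is a sketch under assumed values: the true value of 100, the noise standard deviation of 5, the bias of 2, and the function name `mean_error` are all chosen for illustration.

```python
import random
from statistics import mean


def mean_error(n, bias, noise_sd, true_value=100.0, seed=0):
    """Error of the sample mean for n simulated readings.

    Each reading = true value + constant bias + Gaussian noise.
    """
    rng = random.Random(seed)
    readings = [true_value + bias + rng.gauss(0, noise_sd) for _ in range(n)]
    return mean(readings) - true_value


# Compare an unbiased and a biased instrument at increasing sample sizes.
for n in (10, 1_000, 100_000):
    unbiased = mean_error(n, bias=0.0, noise_sd=5.0)
    biased = mean_error(n, bias=2.0, noise_sd=5.0)
    print(f"n={n:>6}  unbiased error={unbiased:+.3f}  biased error={biased:+.3f}")
```

As the sample size grows, the error of the unbiased instrument shrinks toward zero, whereas the error of the biased instrument settles at the bias itself rather than at zero.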

Core Statistical Tests for Detection

Student's t-Test: Comparing Group Means

The Student's t-test is a fundamental statistical procedure often described as "the bread and butter of statistical analysis" [35]. It tests whether the difference between group means is statistically significant, making it invaluable for comparing interventions, treatments, or conditions in research settings. The t-test exists in several forms, each with specific applications and assumptions.

The three primary types of t-tests include:

- One-sample t-test: Compares a single sample mean to a known or hypothesized population value [35] [36]

- Two-sample t-test: Compares means from two independent populations or groups [35] [36]

- Paired t-test: Compares two related measurements, such as pre-test and post-test scores on the same subjects [35] [36]

The mathematical foundation of the t-test relies on the t-statistic, which represents a signal-to-noise ratio where the difference between means constitutes the signal and the variability within groups constitutes the noise [34]. For a one-sample t-test, the formula is:

[ t = \frac{\bar{x} - \mu_0}{s/\sqrt{n}} ]

where (\bar{x}) is the sample mean, (\mu_0) is the hypothesized population mean, (s) is the sample standard deviation, and (n) is the sample size [36]. The resulting t-value is compared to critical values from the t-distribution to determine statistical significance.
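A one-sample t-test against a reference value is a natural way to check an instrument for bias. The following sketch applies the formula above using only the standard library; the readings and the reference value of 50.0 are hypothetical, and the critical value 2.262 is the two-sided 5% threshold of the t-distribution with 9 degrees of freedom.

```python
from math import sqrt
from statistics import mean, stdev


def one_sample_t(sample, mu0):
    """t = (sample mean - mu0) / (s / sqrt(n)), with s the sample standard deviation."""
    n = len(sample)
    return (mean(sample) - mu0) / (stdev(sample) / sqrt(n))


# Hypothetical readings of a reference standard certified at 50.0 units.
readings = [50.8, 51.2, 50.9, 51.1, 51.0, 50.7, 51.3, 51.0, 50.9, 51.1]
t_stat = one_sample_t(readings, mu0=50.0)

# Two-sided critical value at alpha = 0.05 for n - 1 = 9 degrees of freedom.
T_CRIT_DF9 = 2.262
bias_detected = abs(t_stat) > T_CRIT_DF9
```

Here the mean reading is 51.0 and the t-statistic is about 17.3, far beyond the critical value, so the data are consistent with a systematic overestimate of roughly one unit.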

Table 2: T-Test Types and Applications

| Test Type | Formula | Applications | Assumptions |
|---|---|---|---|
| One-sample | (t = \frac{\bar{x} - \mu_0}{s/\sqrt{n}}) | Comparing sample mean to known value or gold standard [35] | Normality, independence, random sampling |
| Independent two-sample | (t = \frac{\bar{x}_1 - \bar{x}_2}{s_p\sqrt{1/n_1 + 1/n_2}}) | Comparing means between two unrelated groups [35] [36] | Normality, equal variances (for standard test), independence |
| Paired | (t = \frac{\bar{d}}{s_d/\sqrt{n}}) | Comparing before/after measurements or matched pairs [35] [36] | Normality of differences, independence of pairs |

Systematic error can significantly impact t-test results by biasing group means in consistent directions. For example, an improperly calibrated measurement device might consistently overestimate values in one group but not another, creating apparent differences where none exist or masking true differences. Additionally, violation of t-test assumptions—particularly normality and homogeneity of variance—can introduce systematic error into significance tests [36]. When sample sizes are small (generally <15) and data are clearly skewed or contain outliers, nonparametric alternatives to the t-test are recommended to avoid the influence of systematic bias [35].
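The calibration scenario described above can be simulated to show how a spurious group difference arises. In this sketch, both groups are drawn from the same distribution and a constant offset is then added to one of them; the group sizes, the offset of 10 units, the random seed, and the function name `pooled_t` are assumptions made for the example.

```python
import random
from math import sqrt
from statistics import mean, variance


def pooled_t(x1, x2):
    """Independent two-sample t-statistic with pooled standard deviation."""
    n1, n2 = len(x1), len(x2)
    sp = sqrt(((n1 - 1) * variance(x1) + (n2 - 1) * variance(x2)) / (n1 + n2 - 2))
    return (mean(x1) - mean(x2)) / (sp * sqrt(1 / n1 + 1 / n2))


# Two groups drawn from the SAME population (no true difference).
rng = random.Random(1)
group_a = [rng.gauss(100, 5) for _ in range(30)]
group_b = [rng.gauss(100, 5) for _ in range(30)]

# Group B is then read on a miscalibrated device that adds a constant offset.
OFFSET = 10.0
group_b_biased = [v + OFFSET for v in group_b]

t_clean = pooled_t(group_a, group_b)
t_biased = pooled_t(group_a, group_b_biased)
```

A constant offset leaves the within-group variance unchanged, so the t-statistic shifts by exactly the offset divided by the standard error of the difference. With 58 degrees of freedom the two-sided 5% critical value is about 2.0, so an offset of this size is enough to produce a "significant" difference between groups that are in fact identical.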

Kolmogorov-Smirnov Test: Comparing Distributions

The Kolmogorov-Smirnov (K-S) test is a nonparametric method that tests whether a sample comes from a specified distribution (one-sample case) or whether two samples come from the same distribution (two-sample case) [37]. Unlike the t-test, which focuses specifically on means, the K-S test is sensitive to differences in location, shape, and spread of distributions, making it a more comprehensive test of distributional equivalence.

The K-S statistic quantifies the maximum vertical distance between two cumulative distribution functions. For the one-sample case, the test statistic is:

[ D_n = \sup_x |F_n(x) - F(x)| ]

where (F_n(x)) is the empirical distribution function of the sample and (F(x)) is the cumulative distribution function of the reference distribution [37]. For the two-sample case, the statistic becomes:

[ D_{n,m} = \sup_x |F_{1,n}(x) - F_{2,m}(x)| ]

where (F_{1,n}) and (F_{2,m}) are the empirical distribution functions of the first and second samples, respectively [37]. The K-S test is particularly valuable because it requires no assumptions about the underlying distributions' parameters, making it distribution-free.

The two-sample K-S test serves as one of the "most useful and general nonparametric methods for comparing two samples" because it detects differences in both location and shape of empirical cumulative distribution functions [37]. This sensitivity to various distributional characteristics makes it particularly valuable for identifying systematic shifts between groups that might not be apparent in mean comparisons alone.

Systematic error manifests in K-S testing when consistent measurement bias affects the shape or position of empirical distributions. For instance, if an instrument's bias varies across its measurement range, such as reading low values too low and high values too high, the K-S test may detect the resulting change in distribution shape even when the mean is largely unaffected. However, the K-S test requires a relatively large number of data points compared to other goodness-of-fit tests to properly reject the null hypothesis, which can limit its utility in small-sample research [37]. When parameters of the reference distribution are estimated from the data rather than known a priori, the test statistic's null distribution changes, requiring modifications like the Lilliefors test for normality [37].
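The two-sample K-S statistic can be computed directly from the two empirical distribution functions. The sketch below is a minimal illustration that returns only the statistic, not a p-value; the function names and the two small samples are hypothetical. The samples share the same mean but differ in spread, the kind of difference a comparison of means alone would miss.

```python
from statistics import mean


def ecdf(sample, x):
    """Empirical distribution function: fraction of observations <= x."""
    return sum(v <= x for v in sample) / len(sample)


def ks_two_sample(a, b):
    """Maximum vertical distance between the two empirical distribution functions.

    Both functions are step functions that change only at observed values,
    so it is enough to evaluate the distance at every pooled data point.
    """
    points = sorted(set(a) | set(b))
    return max(abs(ecdf(a, x) - ecdf(b, x)) for x in points)


# Hypothetical readings: same mean (10), but the second instrument stretches
# values away from the centre of the range.
narrow = [8, 9, 10, 11, 12]
wide = [6, 8, 10, 12, 14]

same_mean = mean(narrow) == mean(wide)
d_stat = ks_two_sample(narrow, wide)
```

For these samples the statistic is 0.2. With only five observations per group that distance would not be statistically significant, which illustrates the sample-size limitation noted above.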

Chi-Square Test of Independence

The Chi-square test of independence is a nonparametric statistic designed to analyze group differences when the dependent variable is measured at a nominal or categorical level [38]. It tests whether there is a significant association between two categorical variables by comparing observed frequencies in a contingency table with expected frequencies under the assumption of independence.

The Chi-square statistic is calculated as:

[ \chi^2 = \sum \frac{(O - E)^2}{E} ]