Systematic Error in Method Comparison: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive examination of systematic error within the context of analytical method comparison, a critical concern for researchers, scientists, and professionals in drug development. It covers foundational concepts distinguishing systematic from random error, outlines the design and execution of robust comparison of methods experiments, and offers practical strategies for troubleshooting and minimizing bias. The content further details statistical validation techniques to quantify systematic error and concludes with insights on fostering a culture of quality to ensure data integrity and reproducible research outcomes in biomedical and clinical settings.

Understanding Systematic Error: Definitions, Impact, and Sources in Research

In scientific research, particularly in method comparison studies, all measurements possess a degree of uncertainty termed measurement error [1]. This error represents the difference between the true value of a measured quantity and the value obtained through measurement. Understanding and characterizing this error is fundamental to assessing the reliability of any methodological approach. Measurement error is broadly categorized into two distinct types: systematic error (bias) and random error [2] [3]. Systematic error refers to reproducible inaccuracies that consistently skew results in the same direction, thereby reducing the accuracy of a method. In contrast, random error arises from unpredictable fluctuations in the measurement process or system, affecting the precision of repeated measurements [1] [4]. The cumulative effect of both systematic and random error is known as the total error, which represents the overall uncertainty of a measurement [1]. For researchers and drug development professionals, correctly identifying and managing these errors is critical for validating new methodologies, ensuring the integrity of clinical trial data, and making sound scientific conclusions.

Defining Systematic Error: Core Concepts and Terminology

What is Systematic Error?

Systematic error, often termed bias, is a consistent, reproducible inaccuracy in measurement that skews results in one direction away from the true value [2] [1]. Unlike random variations, systematic error introduces a non-zero mean deviation that cannot be eliminated simply by repeating measurements, as the bias is reproduced with each iteration [1]. This type of error is particularly problematic in method comparison research because it directly compromises the trueness of a method—that is, the closeness of agreement between the average value obtained from a large series of test results and an accepted reference value [1]. In laboratory medicine, for instance, a test must be both accurate (true) and precise (reliable) to be clinically useful, and systematic error directly undermines this accuracy [1].

Key Characteristics of Systematic Error

Systematic error exhibits several defining characteristics that distinguish it from other error types. It is directional, meaning it consistently pushes measurements either above or below the true value [1] [4]. It is also reproducible; the same magnitude and direction of error will recur under identical measurement conditions [1]. Furthermore, systematic error is non-compensating, meaning it does not average out with repeated measurements. If multiple measurements are taken and averaged, the systematic error remains embedded in the mean value, leading to a biased estimate of the true quantity [4].
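The non-compensating property can be illustrated with a small simulation. The sketch below is illustrative only: the true value, the bias of 5 units, and the noise level are hypothetical numbers chosen for the example, and Gaussian noise is an assumption rather than something the cited sources prescribe.

```python
import random
import statistics

def simulate_mean(true_value, bias, noise_sd, n, seed=0):
    """Mean of n simulated readings: true value + fixed bias + Gaussian noise."""
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    readings = [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]
    return statistics.fmean(readings)

TRUE_VALUE = 100.0  # hypothetical true quantity
BIAS = 5.0          # hypothetical constant systematic error
NOISE_SD = 2.0      # hypothetical random-error standard deviation

for n in (10, 1_000, 100_000):
    mean = simulate_mean(TRUE_VALUE, BIAS, NOISE_SD, n)
    # The random component shrinks as n grows; the bias term does not.
    print(f"n={n:>6}: mean={mean:.3f}, remaining error={mean - TRUE_VALUE:+.3f}")
```

As n grows, the mean settles near 105 rather than 100: averaging suppresses the noise but leaves the offset in place.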

Forms of Systematic Error: Constant and Proportional Bias

In method comparison studies, systematic error typically manifests in two primary forms, which can occur independently or in combination [1]:

Constant Bias: This occurs when the difference between the observed measurement and the true value remains constant throughout the measurement range. It represents a fixed offset that affects all measurements equally, regardless of magnitude. Mathematically, it can be expressed as \( \text{Observed Value} = \text{True Value} + \text{Constant} \) [1].

Proportional Bias: This occurs when the difference between the observed and true values changes in proportion to the magnitude of the measurement. It represents a scale factor error where the inaccuracy increases as the quantity being measured increases. This relationship can be expressed as \( \text{Observed Value} = \text{True Value} \times \text{Factor} \) [1].

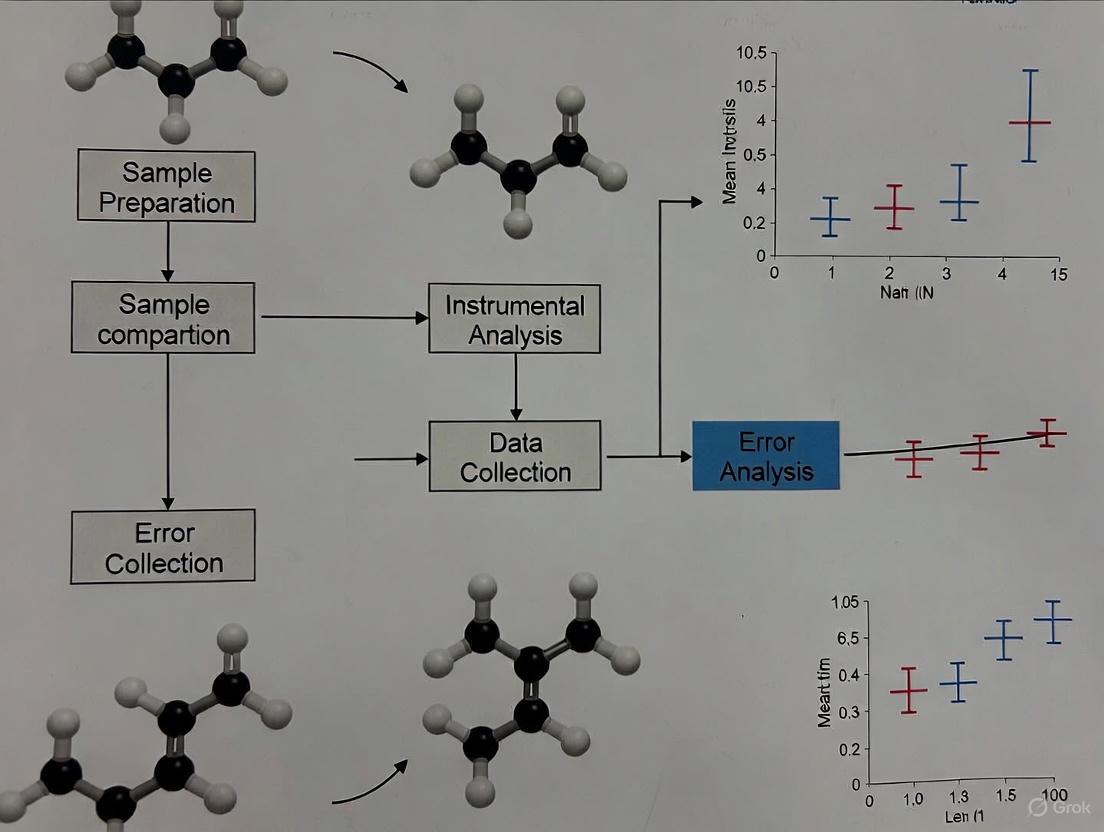

The following diagram illustrates the concepts of constant and proportional bias in comparison to an ideal, error-free measurement.

Diagram 1: Visualization of constant and proportional bias compared to an ideal measurement.
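The two bias forms can also be seen numerically. The following is a minimal sketch: the function name `apply_bias`, the offset of 5 units, and the factor of 1.10 are hypothetical, and the combined form (factor and constant applied together) is an assumed way of expressing "in combination", not a formula taken from the source.

```python
def apply_bias(true_value, constant=0.0, factor=1.0):
    """Observed value under an assumed combined model: true * factor + constant."""
    return true_value * factor + constant

true_values = [50.0, 100.0, 200.0]  # hypothetical true values

for t in true_values:
    constant_error = apply_bias(t, constant=5.0) - t    # same at every level
    proportional_error = apply_bias(t, factor=1.10) - t  # grows with the level
    print(f"true={t:6.1f}  constant-bias error={constant_error:5.1f}  "
          f"proportional-bias error={proportional_error:5.1f}")
```

The constant-bias error stays at 5 units at every level, while the proportional-bias error is 10% of the true value and therefore grows across the range.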

Contrasting Systematic and Random Error

Fundamental Differences in Nature and Impact

Systematic and random errors represent fundamentally different phenomena in scientific measurement, each with distinct causes, behaviors, and implications for research outcomes. The table below summarizes the key differences between these two error types:

| Characteristic | Systematic Error (Bias) | Random Error |
|---|---|---|
| Definition | Consistent, reproducible deviation from the true value [1] | Unpredictable fluctuations around the true value [1] [3] |
| Directional Effect | Skews results consistently in one direction [1] [4] | Scatters results equally in both directions [4] |
| Impact on Measurements | Reduces accuracy [2] [5] | Reduces precision [4] |
| Elimination via Averaging | Cannot be reduced by averaging [1] [4] | Can be reduced by averaging repeated measurements [1] [4] |
| Primary Causes | Flawed instrument calibration, procedural imperfections, experimental design flaws [5] [3] | Electronic noise, environmental fluctuations, human estimation variability [5] [3] |
| Detection Methods | Method comparison with reference standards, control samples, statistical tests [6] [1] | Replication studies, standard deviation analysis [1] [4] |
| Quantification Approaches | Linear regression (constant & proportional bias) [1] | Standard deviation, variance [4] |

Impact on Research Outcomes: Clinical Trials Evidence

The distinct impacts of systematic versus random error have been empirically demonstrated in randomized clinical trials. A study investigating the effect of data errors on trial outcomes found that random errors added to up to 50% of cases produced only slightly inflated variance in the estimated treatment effect, with no qualitative change in the p-value [7]. In contrast, systematic errors produced bias even for very small proportions of patients with added errors [7]. This research concluded that resources devoted to clinical trials should be spent primarily on minimizing sources of systematic errors which can severely bias estimated treatment effects, rather than on random errors which result only in a small loss in power [7].

Systematic errors can originate from various sources in the experimental process. Instrumental errors occur when measurement devices are improperly calibrated, damaged, or used outside their specified operating conditions [2] [3]. Examples include a scale that always reads 5 grams over the true value [2], a pH meter with a consistent 0.5 unit offset, or a calculator that rounds incorrectly [2]. Procedural errors arise from flaws in the experimental design or execution, such as using insensitive equipment that cannot detect low-level samples [5], or applying incorrect data correction methods to error-free data, which introduces bias rather than removing it [6].

Human-Induced Systematic Errors

Human factors frequently introduce systematic errors into experimental outcomes. Estimation errors occur when researchers must interpret measurements on analog instruments, such as viewing a meniscus from an incorrect angle when reading a liquid volume [5]. Confirmation bias represents another significant source, where experimenters are less likely to detect or question errors that cause data to align with their hypotheses [5]. Similarly, experimenter bias can occur in unblinded studies where knowledge of treatment conditions unconsciously influences measurements and judgments [5]. These human errors can be particularly challenging to identify and eliminate as they often involve unconscious processes.

Environmental factors can create consistent, directional biases in measurements. For example, temperature variations in a laboratory can affect reaction rates or instrument performance in reproducible ways [5]. Instrument drift represents another common source, where electronic components degrade over time or as instruments warm up, causing measurements to shift systematically in one direction [5]. Hysteresis effects, where a physically observable effect lags behind its cause, can also introduce systematic errors in certain measurement contexts [5].

Detection Methods for Systematic Error

Method Comparison and Reference Materials

The most direct approach for detecting systematic error involves method comparison with certified reference materials or gold standard methods [1]. This process involves repeatedly measuring samples with known values and comparing the results to the established reference values. A consistent deviation from the reference value across multiple measurements indicates the presence of systematic error [1]. In laboratory medicine, this approach is considered essential for initial assay validation and ongoing accuracy assessment [1]. The regression parameters obtained from comparing test methods to reference methods allow for quantification of both constant and proportional bias, enabling appropriate corrective measures [1].

Statistical Process Control Techniques

Statistical process control methods provide powerful tools for detecting systematic errors in ongoing measurement processes. Levey-Jennings plots visually display the fluctuation of control sample measurements around the mean over time, with reference lines indicating mean ±1, ±2, and ±3 standard deviations [1]. Systematic errors manifest as shifts or trends in the plotted values that violate expected random distribution patterns [1]. Westgard rules provide specific decision criteria for identifying systematic errors, including the 2_2s rule (two consecutive controls between 2 and 3 SD from the mean on the same side), the 4_1s rule (four consecutive controls more than 1 SD from the mean on the same side), and the 10_x rule (ten consecutive controls on the same side of the mean) [1].

Statistical Testing Procedures

Formal statistical tests can be applied to assess the presence of systematic error in experimental data. In high-throughput screening, for example, researchers have tested procedures including the χ² goodness-of-fit test, Student's t-test, and the Kolmogorov-Smirnov test preceded by a Discrete Fourier Transform to detect systematic patterns in data [6]. These approaches analyze either raw measurements or hit distribution surfaces to identify non-random patterns indicative of systematic error [6]. For many applications, the t-test has been recommended as an appropriate way to determine whether systematic error is present before any error correction method is applied [6].

Experimental Protocols for Systematic Error Assessment

Protocol for Method Comparison Studies

Objective: To quantify systematic error between a test method and a reference method.

Materials: Certified reference materials with known values, test and reference instrumentation, appropriate statistical software.

Procedure:

- Select a range of certified reference materials covering the expected measurement range.

- Perform repeated measurements (n≥5) of each reference material using both test and reference methods.

- Record all measurements under controlled environmental conditions.

- Apply simple linear regression with the reference method values as the independent variable (x) and test method values as the dependent variable (y).

- Calculate the regression parameters using ordinary least squares: \( y = a + bx \).

- Interpret results: The intercept (a) estimates constant bias, while the deviation of the slope from 1 (b - 1) estimates proportional bias [1].

Validation: An intercept significantly different from 0 (p < 0.05) indicates constant bias; a slope significantly different from 1 indicates proportional bias.
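The regression and validation steps can be sketched with the standard library alone. This is a hedged illustration rather than a validated implementation: the function name `ols_bias` and the paired values are hypothetical, and the t statistics it prints still need to be compared against a critical value with n - 2 degrees of freedom from a t table or a statistics package.

```python
import math

def ols_bias(x, y):
    """Ordinary least squares fit y = a + b*x with standard errors.

    x: reference-method values, y: test-method values (paired, same order).
    """
    n = len(x)
    if n < 3 or n != len(y):
        raise ValueError("need at least 3 paired values of equal length")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx                      # slope
    a = mean_y - b * mean_x            # intercept
    residual_ss = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    s_yx = math.sqrt(residual_ss / (n - 2))   # standard error of the estimate
    se_b = s_yx / math.sqrt(sxx)
    se_a = s_yx * math.sqrt(1.0 / n + mean_x ** 2 / sxx)
    return {"a": a, "b": b, "se_a": se_a, "se_b": se_b, "s_yx": s_yx, "n": n}

# Hypothetical mean results per reference material (not data from the source).
reference = [50.0, 100.0, 150.0, 200.0, 250.0]
test = [53.6, 105.1, 156.4, 208.2, 259.3]

fit = ols_bias(reference, test)
t_intercept = fit["a"] / fit["se_a"]         # tests H0: intercept = 0
t_slope = (fit["b"] - 1.0) / fit["se_b"]     # tests H0: slope = 1
print(f"intercept a = {fit['a']:.3f} (t = {t_intercept:.2f})")
print(f"slope b     = {fit['b']:.4f} (t vs 1 = {t_slope:.2f})")
```

Note that the slope is tested against 1, not 0, because the question is whether the two methods agree, not whether they are related.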

Protocol for Quality Control Monitoring

Objective: To continuously monitor measurement processes for systematic error using statistical process control.

Materials: Control materials, measurement instrumentation, data recording system.

Procedure:

- Establish control limits through a replication study: repeatedly measure control materials (n≥20) to calculate mean and standard deviation.

- Create a Levey-Jennings plot with time on the x-axis and measured values on the y-axis.

- Add reference lines for mean, mean ±1SD, mean ±2SD, and mean ±3SD.

- Plot daily control measurements in chronological order.

- Apply Westgard rules to evaluate control data:

  - 1_2s: One measurement beyond ±2SD (warning)

  - 1_3s: One measurement beyond ±3SD (reject)

  - 2_2s: Two consecutive measurements beyond 2SD on the same side of the mean (reject, systematic error)

  - 4_1s: Four consecutive measurements beyond 1SD on the same side of the mean (reject, systematic error)

  - 10_x: Ten consecutive measurements on the same side of the mean (reject, systematic error) [1].

Corrective Action: When systematic error is detected, investigate instrument calibration, reagent integrity, and procedural compliance.
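The rule checks in this protocol can be expressed compactly in code. The sketch below is one possible reading of the rules as listed above, using the labels 1_2s, 1_3s, 2_2s, 4_1s, and 10_x; the function name `westgard_flags` and the control values are hypothetical, and the mean and SD are assumed to come from the replication study in the first step.

```python
def westgard_flags(values, mean, sd):
    """Return the set of Westgard rules violated by a chronological control series."""
    z = [(v - mean) / sd for v in values]  # distance from the mean in SD units
    flags = set()

    def run_same_side(length, threshold):
        # True if `length` consecutive z-scores all lie above +threshold
        # or all lie below -threshold.
        for i in range(len(z) - length + 1):
            window = z[i:i + length]
            if all(v > threshold for v in window) or all(v < -threshold for v in window):
                return True
        return False

    if any(abs(v) > 2 for v in z):
        flags.add("1_2s")   # warning
    if any(abs(v) > 3 for v in z):
        flags.add("1_3s")   # reject
    if run_same_side(2, 2):
        flags.add("2_2s")   # reject, suggests systematic error
    if run_same_side(4, 1):
        flags.add("4_1s")   # reject, suggests systematic error
    if run_same_side(10, 0):
        flags.add("10_x")   # reject, suggests systematic error
    return flags

# Hypothetical control series with established mean 100 and SD 2.
controls = [100.5, 104.5, 104.6, 99.0]
print(sorted(westgard_flags(controls, mean=100.0, sd=2.0)))
```

Returning a set of labels rather than a single accept/reject decision keeps the warning rule and the rejection rules separate, so a laboratory can apply its own policy on top.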

Research Reagent Solutions for Error Detection

The following table details key materials and reagents essential for systematic error detection in method comparison studies:

| Resource | Function in Systematic Error Assessment |
|---|---|
| Certified Reference Materials | Provide samples with accurately known values for method comparison and bias quantification [1] |
| Control Samples | Stable materials with established expected values for ongoing quality control monitoring [1] |
| Calibration Standards | Enable instrument calibration to traceable standards, minimizing constant bias [5] |
| Electronic Laboratory Notebook (ELN) | Provides structured data entry and calibration management to reduce transcriptional errors [5] |
| Statistical Software | Facilitates regression analysis, calculation of bias, and application of Westgard rules [1] |
| Barcode Labeling Systems | Enable automated sample tracking to prevent identification errors that could introduce bias [5] |

In method comparison research, systematic error represents a fundamental challenge to measurement validity, consistently skewing results in one direction and reducing methodological accuracy. Unlike random error, which scatters measurements unpredictably, systematic error manifests as reproducible bias that cannot be eliminated through replication alone. Its detection requires deliberate strategies including method comparison with reference standards, statistical process control techniques, and formal hypothesis testing. The profound impact of even small systematic errors on research conclusions—particularly in fields like clinical trials and drug development—necessitates rigorous attention to calibration, procedural design, and continuous quality assessment. By understanding the nature, sources, and detection methods for systematic error, researchers can develop more robust methodologies, draw more valid conclusions, and ultimately advance scientific knowledge with greater confidence in their measurement systems.

In scientific research, particularly in method comparison studies and drug development, the integrity of conclusions hinges on the accuracy of the data. Systematic error, also known as bias, represents a consistent or proportional deviation between observed values and the true value of what is being measured [8]. Unlike random error, which creates unpredictable variability and affects precision, systematic error skews measurements in a specific, predictable direction, directly undermining the accuracy of the data [8]. This fundamental characteristic makes it a critical problem, as it can lead to false positive or false negative conclusions about the relationship between variables, potentially derailing research and development efforts [8]. Within method comparison research, the core objective is to identify and quantify the systematic error (inaccuracy) of a new test method against a comparative method, forming the basis for judging its acceptability for clinical or research use [9].

The following diagram illustrates how systematic error fundamentally differs from random error in its effect on data.

Diagram 1: Systematic vs. Random Error.

The Critical Nature of Systematic Error in Research

Systematic errors are generally considered a more significant problem in research than random errors [8]. Random error, when dealing with large sample sizes, tends to cancel itself out as measurements are equally likely to be higher or lower than the true value; averaging these results yields a value close to the true score [8]. Systematic error, however, offers no such recourse. It consistently biases data in one direction, and this bias is not diminished by increasing the sample size. Instead, a larger sample merely provides a more precise, yet still inaccurate, estimate [8].

The ultimate risk is that systematic error can lead to Type I (false positive) or Type II (false negative) conclusions about the relationships between the variables being studied [8]. In fields like healthcare and drug development, the consequences of such erroneous conclusions can be severe, leading to misinformed decisions, unnecessary costs, and potential harm to patients [10]. A recent systematic assessment in health research identified 77 distinct types of errors and biases that can compromise the validity of systematic reviews, which are considered the highest form of evidence, underscoring the pervasive and complex nature of this threat [10].

Quantifying Systematic Error in Method Comparison Studies

The comparison of methods experiment is a critical procedure for estimating the inaccuracy or systematic error of a new method (test method) by analyzing patient samples using both the new method and a comparative method [9]. The systematic differences at medically critical decision concentrations are the primary errors of interest.

Core Experimental Protocol for Method Comparison

A robust comparison of methods experiment should adhere to the following validated protocol [9]:

- Comparative Method Selection: The choice of a comparative method is paramount. An ideal comparative method is a reference method whose correctness is well-documented. Differences from a reference method are attributed to the test method. When using a routine method as the comparative method, large, medically unacceptable differences require careful interpretation and additional experiments to identify which method is inaccurate [9].

- Specimen Requirements: A minimum of 40 different patient specimens is recommended. The quality and range of specimens are more critical than the quantity. Specimens should cover the entire working range of the method and represent the spectrum of diseases expected in its routine application. To assess specificity, 100-200 specimens may be needed [9].

- Measurement and Timeframe: Analyzing each specimen in duplicate by both methods helps identify sample mix-ups or transposition errors. The experiment should be conducted over a minimum of 5 days, and preferably over 20 days (2-5 specimens per day), to incorporate routine analytical variation and minimize the impact of systematic errors from a single run [9].

- Data Analysis Workflow: Data analysis involves both graphical and statistical techniques. An initial difference plot (test result minus comparative result vs. comparative result) should be inspected as data is collected to identify discrepant results for immediate reanalysis. For data covering a wide analytical range, linear regression statistics are preferred to estimate systematic error [9].
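The difference-plot step of this protocol reduces to a simple calculation on paired results. The following is a minimal sketch under stated assumptions: the function names, the paired values, and the allowable-difference limit of 10 units are all hypothetical, and a real study would take its limit from the laboratory's own acceptance criteria.

```python
import statistics

def difference_summary(test, comparative):
    """Return per-specimen differences (test - comparative) and their mean."""
    if len(test) != len(comparative):
        raise ValueError("paired results must have equal length")
    diffs = [t - c for t, c in zip(test, comparative)]
    return diffs, statistics.fmean(diffs)

def flag_discrepant(diffs, limit):
    """Indices of specimens whose absolute difference exceeds `limit`."""
    return [i for i, d in enumerate(diffs) if abs(d) > limit]

# Hypothetical paired results (comparative method on the x-axis of the plot).
comparative = [80.0, 120.0, 160.0, 200.0, 240.0]
test = [82.0, 123.0, 163.0, 215.0, 245.0]

diffs, mean_diff = difference_summary(test, comparative)
print("differences:", diffs)
print(f"mean difference: {mean_diff:.1f}")
print("specimens to reanalyze:", flag_discrepant(diffs, limit=10.0))
```

Plotting `diffs` against `comparative` gives the difference plot itself; the flagged indices are the specimens worth reanalyzing while they are still available.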

The following workflow summarizes the key stages of a method comparison experiment.

Diagram 2: Method Comparison Workflow.

Statistical Estimation of Systematic Error

For comparison results that cover a wide analytical range (e.g., glucose, cholesterol), linear regression analysis is the statistical method of choice. It allows for the estimation of systematic error at specific medical decision concentrations and provides insight into the constant or proportional nature of the error [9].

The calculations proceed as follows:

- Linear regression provides the slope (b) and y-intercept (a) of the line of best fit.

- The systematic error (SE) at a specific medical decision concentration (Xc) is calculated by first determining the corresponding Y-value (Yc) from the regression line, and then finding the difference [9]:

Yc = a + b * Xc

SE = Yc - Xc

For example, in a cholesterol comparison study where the regression line is Y = 2.0 + 1.03X, the systematic error at a critical decision level of 200 mg/dL would be [9]:

Yc = 2.0 + 1.03 * 200 = 208 mg/dL

SE = 208 - 200 = 8 mg/dL

This indicates a systematic error of +8 mg/dL at this decision level.
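The same calculation can be wrapped in a small helper so it can be repeated at several decision levels. This is a sketch only: the function name `systematic_error` is hypothetical, and the inputs are the regression line and decision level from the cholesterol example.

```python
def systematic_error(intercept, slope, decision_level):
    """Return (Yc, SE) where Yc = a + b * Xc and SE = Yc - Xc."""
    yc = intercept + slope * decision_level
    return yc, yc - decision_level

# Regression line Y = 2.0 + 1.03X evaluated at Xc = 200 mg/dL.
yc, se = systematic_error(intercept=2.0, slope=1.03, decision_level=200.0)
print(f"Yc = {yc:.1f} mg/dL, SE = {se:+.1f} mg/dL")  # Yc = 208.0 mg/dL, SE = +8.0 mg/dL
```

Of the 8 mg/dL total, 2 mg/dL comes from the intercept (constant error) and 6 mg/dL from the slope (3% of 200, proportional error).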

Table 1: Key Statistical Metrics in Method Comparison

| Metric | Description | Interpretation in Error Analysis |
|---|---|---|
| Slope (b) | The rate of change of test method results relative to comparative method results. | A slope ≠ 1.0 indicates a proportional error. |
| Y-Intercept (a) | The constant value difference between methods when the comparative method result is zero. | An intercept ≠ 0 indicates a constant error. |
| Standard Error of the Estimate (s_y/x) | The standard deviation of the points around the regression line. | Quantifies the random error (imprecision) not explained by the systematic error. |
| Correlation Coefficient (r) | A measure of the strength of the linear relationship between the two methods. | Primarily useful for verifying a sufficiently wide data range (r ≥ 0.99); not a measure of agreement. |

Systematic errors can originate from numerous aspects of research, from design to execution. Understanding their typology is the first step toward mitigation.

Table 2: Common Types and Sources of Systematic Error

| Type of Systematic Error | Description | Example in Research |
|---|---|---|
| Offset Error | A consistent difference (offset) of a fixed amount between the measured and true value [8]. | A miscalibrated scale consistently registers all weights as 0.5 grams heavier [8]. |
| Scale Factor Error | A consistent difference that is proportional to the magnitude of the measurement [8]. | A measuring instrument consistently overestimates by 10% across its range (e.g., by 10 units at 100 and by 20 units at 200) [8]. |
| Selection Bias | Error from systematic differences in how study populations are identified or included [10] [11]. | Survivorship Bias: Only including "survivors" of a process (e.g., only customers who completed onboarding) while ignoring those who failed or dropped out, leading to overly optimistic results [12]. |
| Information Bias | A systematic error affecting the accuracy of the data collected and reported [10]. | Recall Bias: Distorted results from variations in participants' memory of past events during surveys [10]. |
| Measurement Bias | Error from flawed measurement instruments or techniques [11]. | Differential Follow-up Bias: Comparing the risk of an event between groups observed for different amounts of time, skewing time-to-event metrics [12]. |

A Scientist's Toolkit: Reagents and Materials for Robust Method Comparison

While the specific reagents depend on the analytical method, the following table outlines essential conceptual "solutions" and their functions for conducting a valid comparison of methods study.

Table 3: Essential Method Validation Toolkit

| Tool / Material | Function in Experiment |
|---|---|
| Reference Method or Well-Characterized Comparative Method | Serves as the benchmark against which the test method's accuracy is judged. Its quality defines the validity of the comparison [9]. |
| Characterized Patient Pool (≥40 specimens) | Provides a matrix-matched, real-world sample set covering the analytical measurement range and pathological spectrum to challenge the method [9]. |
| Stability-Preserving Reagents | Anticoagulants, preservatives, or stabilizers that ensure analyte integrity between measurements by the test and comparative methods, preventing pre-analytical error [9]. |
| Calibration Traceability Materials | Certified reference materials and calibrators traceable to a higher-order standard, ensuring both methods are calibrated to a common, accurate baseline [9] [8]. |
| Statistical Analysis Software | Enables robust data analysis, including linear regression, difference plots, and calculation of systematic error at decision points [9]. |

Systematic error is not merely a statistical nuisance; it is a fundamental threat to the accuracy and validity of scientific research conclusions. Its consistent, directional nature makes it more dangerous than random error, as it is not mitigated by increasing sample size and can directly lead to false positive or negative findings [8]. In method comparison research, the disciplined application of established experimental protocols—including careful method selection, appropriate specimen panels, and rigorous statistical analysis using linear regression—is essential to quantify this error [9]. By recognizing the diverse sources of bias, from instrument calibration to study design flaws like survivorship bias, researchers and drug development professionals can implement strategies to reduce these errors, thereby ensuring that their conclusions are built upon a foundation of accurate and reliable data.

In biomedical research, the reliability of data is paramount. Systematic error, or bias, refers to a consistent, reproducible deviation of measured values from the true value, skewing results in a specific direction and threatening the validity of scientific conclusions [13] [8]. Unlike random error, which averages out with repeated measurements, systematic error cannot be eliminated through replication and requires specific detection and correction strategies [1]. This technical guide, framed within the context of method comparison research, details the common sources of systematic error stemming from instrumentation, procedures, and operators, and provides methodologies for their identification and mitigation.

Defining Systematic Error in Method Comparison

In method comparison studies, the core objective is to assess the systematic error between a new (test) method and a comparative (reference) method [9]. Systematic error can manifest in two primary forms:

- Constant Bias: A fixed difference between the test and reference methods that remains the same across the entire range of measurement. It is represented by the y-intercept in a regression analysis [13] [1].

- Proportional Bias: A difference that changes in proportion to the concentration of the analyte. It is represented by the deviation of the regression slope from 1 in a method comparison experiment [13] [9].

A perfect agreement would show a slope of 1 and an intercept of 0. The significance of any detected bias must be evaluated statistically, for example, by determining if the 95% confidence interval of the slope includes 1 or if the 95% confidence interval of the intercept includes 0 [13].
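The confidence-interval check can be written out explicitly. The expressions below are the standard ordinary least squares formulas for n paired results, offered as a sketch; the symbols \( S_{xx} \), \( s_{y/x} \), and the critical value \( t_{0.975,\,n-2} \) are notation introduced here and do not appear in the cited sources.

```latex
\[
b \pm t_{0.975,\,n-2}\,\mathrm{SE}(b),
\qquad
\mathrm{SE}(b) = \frac{s_{y/x}}{\sqrt{S_{xx}}}
\]
\[
a \pm t_{0.975,\,n-2}\,\mathrm{SE}(a),
\qquad
\mathrm{SE}(a) = s_{y/x}\sqrt{\frac{1}{n} + \frac{\bar{x}^{2}}{S_{xx}}}
\]
\[
S_{xx} = \sum_{i=1}^{n}\left(x_i - \bar{x}\right)^{2},
\qquad
s_{y/x} = \sqrt{\frac{\sum_{i=1}^{n}\left(y_i - a - b\,x_i\right)^{2}}{n-2}}
\]
```

Proportional bias is indicated when the interval for b excludes 1, and constant bias when the interval for a excludes 0.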

Systematic vs. Random Error

Instrumentation-Based Errors

Instrumental bias arises from inaccuracies or malfunctions in the measurement devices themselves [14] [2].

- Poor Calibration: Using instruments calibrated with non-traceable standards or failing to perform regular recalibration introduces offset (additive) errors [8] [15].

- Miscalibration: A scale that is not zeroed correctly will consistently misread by a fixed amount, for example 5 grams over the true value [2].

- Amplitude Mismatch and Phase Imbalance: In complex instruments like sinusoidal encoders, systematic errors can include amplitude mismatch and phase imbalance between output signals, directly affecting angular position measurements [16].

- Instrument Drift: Gradual changes in instrument performance over time due to aging components or environmental fluctuations can cause slow, progressive bias [15].

- Non-Linearity: Loss of linearity near the upper and lower limits of an instrument's detection range can lead to proportional errors [13].

Procedure-Based Errors

Errors embedded in the experimental protocol or data handling are known as procedural errors [2].

- Non-Commutability of Reference Materials: Using reference materials (e.g., calibrators) that do not behave like fresh patient samples can introduce a fundamental bias in clinical assays [13].

- Specimen Handling and Stability: Analyzing samples outside their stability window (e.g., for ammonia or lactate) or under inconsistent handling conditions (e.g., temperature, time to separation) creates bias that is erroneously attributed to the analytical method [9].

- Faulty Experimental Design: In method comparison studies, using a narrow concentration range of samples or an inadequate sample size prevents reliable estimation of constant and proportional bias [9].

Operator-Induced Bias (Human Factors)

This category encompasses biases introduced by the researchers or technicians performing the measurements [14].

- Experimenter Drift: Over long periods of data collection or coding, observers may fatigue or become less motivated, slowly departing from standardized procedures in identifiable ways [8].

- Transcription and Recording Errors: Incorrectly recording or typing data from an instrument readout or data sheet is a common source of systematic error [2].

- Estimation Error: Consistently reading a measurement scale incorrectly, such as always interpolating between two marks on a ruler in a biased manner [2].

- Confirmation Bias: The tendency to search for, interpret, or prioritize data in a way that confirms one's pre-existing hypotheses or expectations [17].

Table 1: Common Sources and Examples of Systematic Error

| Source Category | Type of Error | Example in Biomedical Context |
|---|---|---|
| Instrumentation | Offset / Additive Error | Miscalibrated pH meter that consistently reads 0.5 units low [2] [8]. |
| Instrumentation | Proportional Error | Amplitude mismatch in sinusoidal encoder outputs [16]. |
| Procedures | Specimen Handling | Serum potassium measurements affected by delayed separation of serum from cells [9]. |
| Procedures | Reference Material | Using a non-commutable calibrator that yields different results with a new method versus the reference method [13]. |
| Operator | Experimenter Drift | Microscopist gradually changing cell counting criteria over the course of a long study [8]. |
| Operator | Confirmation Bias | A researcher unconsciously re-running an outlier test result that doesn't fit the expected pattern while accepting congruent results without verification [17]. |

Detection and Quantification Methodologies

The Comparison of Methods Experiment

This is the cornerstone experiment for estimating systematic error in laboratory medicine [9].

- Purpose: To estimate inaccuracy (systematic error) by analyzing patient samples using both a test method and a comparative method.

- Experimental Protocol:

- Sample Selection: A minimum of 40 patient specimens should be tested, selected to cover the entire working range of the method [9]. For a more robust evaluation, 100-200 specimens may be needed to assess specificity [9].

- Measurement: Analyze each specimen by both the test and comparative methods. Ideally, perform duplicate measurements in different analytical runs to identify sample-specific errors or transcription mistakes [9].

- Duration: The experiment should span a minimum of 5 days, but preferably longer (e.g., 20 days), to capture long-term sources of bias [9].

- Data Analysis:

- Graphical Inspection: Create difference plots (test minus comparative result vs. comparative result) or comparison plots (test result vs. comparative result) to visually identify constant/proportional bias and outliers [9].

- Statistical Calculation: Use linear regression analysis (Y = a + bX) on data covering a wide analytical range. The systematic error (SE) at a critical medical decision concentration (Xc) is calculated as Yc = a + b*Xc, followed by SE = Yc - Xc [9]. The slope (b) indicates proportional bias, and the intercept (a) indicates constant bias [13] [1].
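As a minimal sketch of the regression calculation described above, the following Python fits Y = a + bX by ordinary least squares and evaluates SE = Yc - Xc at a decision level. The function names, the data, and the decision concentration of 126 are hypothetical and chosen only for illustration; a real study would use measured patient results.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b = sxy / sxx              # slope: proportional bias if b != 1
    a = y_bar - b * x_bar      # intercept: constant bias if a != 0
    return a, b

def systematic_error(a, b, xc):
    """Systematic error at decision level xc: SE = Yc - Xc, with Yc = a + b*Xc."""
    yc = a + b * xc
    return yc - xc

# Hypothetical data, constructed with a = 2 and b = 1.03 (no random error).
comparative = [50.0, 100.0, 150.0, 200.0, 250.0]
test = [2.0 + 1.03 * x for x in comparative]

a, b = fit_line(comparative, test)
se_at_xc = systematic_error(a, b, xc=126.0)
print(f"intercept={a:.3f}, slope={b:.3f}, SE at Xc=126: {se_at_xc:.2f}")
```

Because SE combines the intercept and slope terms, the same method can show a small error at one decision level and a larger one at another.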

Quality Control Procedures with Reference Materials

Using certified reference materials (CRMs) or control samples with known assigned values is a routine quality control practice [13] [1].

- Levey-Jennings Plots: Control sample values are plotted over time against mean and standard deviation limits. Systematic error is suspected if control values show a persistent shift or trend [1].

- Westgard Rules: Specific statistical rules are applied to quality control data. Systematic error is indicated by violations such as the 2_2s rule (two consecutive controls exceeding 2SD on the same side of the mean) or the 10_x rule (ten consecutive controls on the same side of the mean) [1].
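The two rules named above can be checked mechanically. The sketch below is one possible implementation, assuming the control mean and standard deviation are already established; the function names and control values are hypothetical.

```python
def violates_2_2s(values, mean, sd):
    """True if two consecutive controls exceed 2 SD on the same side of the mean."""
    z = [(v - mean) / sd for v in values]
    return any(
        (z[i] > 2 and z[i + 1] > 2) or (z[i] < -2 and z[i + 1] < -2)
        for i in range(len(z) - 1)
    )

def violates_10_x(values, mean):
    """True if ten consecutive controls fall on the same side of the mean."""
    run, last_side = 0, 0
    for v in values:
        side = (v > mean) - (v < mean)   # +1 above, -1 below, 0 exactly on the mean
        if side != 0 and side == last_side:
            run += 1
        else:
            run = 1 if side != 0 else 0
        last_side = side
        if run >= 10:
            return True
    return False

# Hypothetical control results for a material with mean 100 and SD 2.
shifted = [100.4, 100.9, 100.2, 101.1, 100.6, 100.3, 100.8, 100.5, 100.7, 100.1]
print(violates_10_x(shifted, mean=100.0))                        # ten results above the mean
print(violates_2_2s([99.0, 104.5, 105.0, 100.0], 100.0, 2.0))    # two results beyond +2 SD
```

Both rules look for patterns on one side of the mean, which is why they point to systematic rather than random error.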

Statistical Tests for Bias Significance

A calculated bias must be tested for statistical significance.

- Confidence Interval Overlap: A practical method is to compare the 95% confidence interval of the mean of repeated measurements with the corresponding interval for the target value. If the two intervals do not overlap, the bias is considered significant [13].

- t-test: A paired t-test can be used to determine if the average difference (bias) between two methods is statistically different from zero [9].
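A paired comparison of this kind can be sketched with the standard library alone. The example below computes the mean difference, its t statistic, and a confidence interval; the critical t value is passed in by the caller (2.776 is the two-sided 95% value for 4 degrees of freedom), and the function name and data are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev

def paired_bias(test, comparative, t_crit):
    """Mean paired difference, t statistic, confidence interval, and significance flag."""
    diffs = [t - c for t, c in zip(test, comparative)]
    n = len(diffs)
    bias = mean(diffs)
    se = stdev(diffs) / sqrt(n)          # standard error of the mean difference
    t_stat = bias / se
    ci = (bias - t_crit * se, bias + t_crit * se)
    return bias, t_stat, ci, abs(t_stat) > t_crit

# Hypothetical paired results from five specimens (df = 4).
test_results = [5.3, 6.1, 7.4, 8.2, 9.6]
comparative_results = [5.0, 6.0, 7.0, 8.0, 9.0]

bias, t_stat, ci, significant = paired_bias(test_results, comparative_results, t_crit=2.776)
print(f"bias={bias:.2f}, t={t_stat:.2f}, CI=({ci[0]:.2f}, {ci[1]:.2f}), significant={significant}")
```

The test only says whether the average difference is distinguishable from zero. Whether a statistically significant bias is also medically important is a separate judgment.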

Table 2: Methods for Detecting and Quantifying Systematic Error

| Method | Key Principle | Data Output | Identifies Error Type |

|---|---|---|---|

| Comparison of Methods | Parallel testing of patient samples on two systems [9]. | Regression equation (Slope, Intercept), Systematic Error at decision levels. | Constant & Proportional Bias |

| Levey-Jennings / Westgard Rules | Monitoring control materials with known values over time [1]. | Control charts with statistical rule violations. | Persistent shifts or trends (Systematic Error) |

| Passing-Bablok Regression | Non-parametric regression method less sensitive to outliers [13]. | Regression equation with confidence intervals for slope and intercept. | Constant & Proportional Bias |
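To illustrate why Passing-Bablok regression is less sensitive to outliers, the following simplified sketch computes only the point estimates (a shifted median of pairwise slopes, then the median intercept). Confidence intervals and the linearity test that normally accompany the method are omitted, and the function name and data are hypothetical.

```python
from statistics import median

def passing_bablok(x, y):
    """Point estimates (intercept, slope) from the shifted median of pairwise slopes."""
    slopes = []
    n = len(x)
    for i in range(n - 1):
        for j in range(i + 1, n):
            dx, dy = x[j] - x[i], y[j] - y[i]
            if dx == 0 and dy == 0:
                continue                      # identical points carry no slope information
            if dx == 0:
                slope = float("inf") if dy > 0 else float("-inf")
            else:
                slope = dy / dx
            if slope == -1:
                continue                      # slopes of exactly -1 are excluded
            slopes.append(slope)
    slopes.sort()
    m = len(slopes)
    k = sum(1 for s in slopes if s < -1)      # offset that shifts the median
    if m % 2 == 1:
        b = slopes[(m + 1) // 2 + k - 1]
    else:
        b = 0.5 * (slopes[m // 2 + k - 1] + slopes[m // 2 + k])
    a = median(yi - b * xi for xi, yi in zip(x, y))
    return a, b

# Hypothetical data: y = 1.1 * x, with the last point replaced by a gross outlier.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0]
y = [1.1 * xi for xi in x[:-1]] + [20.0]

intercept, slope = passing_bablok(x, y)
print(f"intercept={intercept:.3f}, slope={slope:.3f}")
```

Because the estimates are medians, the single outlier leaves the slope at the value shared by the remaining points, whereas an ordinary least-squares fit would be pulled toward it.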

Systematic Error Detection Workflow

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Error Detection

| Material / Reagent | Function in Systematic Error Detection |

|---|---|

| Certified Reference Materials (CRMs) | Provides an assigned value with metrological traceability, used to estimate bias directly by comparing the mean of measured values to the reference value [13]. |

| Fresh Patient Samples | Used in method comparison studies; considered the gold standard for assessing how a method will perform in routine practice, as they reflect the true matrix of the specimen [9]. |

| Commutable Control Materials | Processed control materials that behave like fresh patient samples across different methods; essential for valid method comparison and bias estimation [13]. |

| Calibrators | Solutions with known analyte concentrations used to adjust the output of an instrument; inaccuracies here propagate as systematic error through all subsequent measurements [13] [1]. |

Systematic error is an inherent challenge in biomedical methods that, if undetected, can lead to incorrect clinical diagnoses, flawed research data, and misguided health policies. A rigorous approach involving an understanding of its sources—instrumentation, procedures, and operators—is the first line of defense. Employing structured experimental protocols like the comparison of methods study, coupled with ongoing quality control using appropriate reference materials, allows researchers to quantify, and ultimately correct for, these biases. By systematically addressing these errors, scientists and drug development professionals can ensure the generation of accurate, reliable, and clinically relevant data.

In method comparison research, systematic errors represent a fundamental challenge to data integrity and experimental validity. Unlike random errors, which introduce unpredictable variability, systematic errors skew results in a consistent, directional manner, potentially leading to false conclusions and compromised research outcomes. This technical guide provides an in-depth examination of the two primary quantifiable types of systematic errors—offset errors and scale factor errors—within the context of scientific research and drug development. We explore their distinct characteristics, detection methodologies, and correction protocols through structured data presentation, experimental workflows, and practical implementation frameworks tailored for researchers, scientists, and drug development professionals seeking to enhance measurement accuracy and methodological rigor.

Systematic error, also referred to as bias, constitutes a consistent or reproducible inaccuracy in measurement that diverges from the true value in a predictable pattern [18] [8]. In method comparison research, particularly in pharmaceutical development and analytical science, these errors present a more significant problem than random errors because they systematically skew data away from true values, potentially leading to Type I or II errors in statistical conclusions [8] [19]. The fundamental distinction lies in their consistent nature—while random errors average out with repeated measurements, systematic errors persist despite replication, directly compromising accuracy rather than precision [8].

Systematic errors originate from identifiable sources within the measurement system, including faulty instrument calibration, imperfect experimental design, researcher bias, or suboptimal analytical procedures [18] [19]. In regulatory science and drug development, where method validation is paramount, understanding and quantifying these errors becomes essential for establishing analytical robustness and ensuring compliant manufacturing processes.

Theoretical Framework of Quantifiable Systematic Errors

Offset Errors

Offset error, also known as zero-setting error or additive error, occurs when a measurement instrument consistently deviates from the true value by a fixed amount across its entire operational range [8] [19]. This error manifests as a constant displacement where all measurements are shifted higher or lower by the same absolute value, regardless of the magnitude being measured.

Mathematical Representation:

Measured Value = True Value + Constant Offset

A practical example includes a weighing scale that registers 0.5 grams when no weight is applied, consequently adding this discrepancy to every measurement taken [18]. In analytical chemistry, this might appear as a spectrophotometer that consistently reports absorbance values 0.01 units higher than actual values due to improper zeroing with a blank solution.

Scale Factor Errors

Scale factor error, alternatively termed multiplicative error or proportional error, represents a systematic inaccuracy proportional to the magnitude of the measured quantity [8] [19]. Unlike offset errors, scale factor errors increase or decrease in absolute terms as the measurement value changes, maintaining a constant percentage deviation from the true value.

Mathematical Representation:

Measured Value = True Value × (1 + Scaling Factor)

For instance, if a scale repeatedly adds 5% to actual measurements, a 10 kg mass would register as 10.5 kg, while a 20 kg mass would display as 21 kg [19]. In chromatography, this might manifest as consistent percentage errors in peak area integration across different concentration levels due to incorrect calibration curve slope.
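The two mathematical representations can be compared side by side. This short sketch applies a hypothetical 0.5-unit offset and the 5% scaling factor from the example to the same true values and reports the absolute and relative error of each; the function names are illustrative only.

```python
def apply_offset(true_value, offset):
    """Measured Value = True Value + Constant Offset."""
    return true_value + offset

def apply_scale_factor(true_value, scaling_factor):
    """Measured Value = True Value x (1 + Scaling Factor)."""
    return true_value * (1 + scaling_factor)

for true_value in (10.0, 20.0):
    off = apply_offset(true_value, 0.5)
    scl = apply_scale_factor(true_value, 0.05)
    print(
        f"true={true_value:5.1f}  "
        f"offset: abs={off - true_value:.2f} rel={(off - true_value) / true_value:.1%}  "
        f"scale: abs={scl - true_value:.2f} rel={(scl - true_value) / true_value:.1%}"
    )
```

The offset error keeps the same absolute size while its relative size shrinks as the true value grows. The scale factor error does the opposite: its relative size is fixed and its absolute size grows.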

Comparative Analysis

Table 1: Fundamental Characteristics of Offset and Scale Factor Errors

| Characteristic | Offset Error | Scale Factor Error |

|---|---|---|

| Alternative Terminology | Additive error, Zero-setting error [19] | Multiplicative error, Proportional error [19] |

| Mathematical Relationship | Fixed value addition/subtraction [8] | Proportional scaling [8] |

| Directional Effect | Consistent shift across range | Expanding/contracting difference with magnitude |

| Impact on Measurements | Constant absolute error | Constant relative error |

| Typical Sources | Improper instrument zeroing, baseline drift [18] | Incorrect calibration slope, instrument sensitivity drift [18] |

Table 2: Detection and Quantification Methods

| Method | Offset Error Application | Scale Factor Error Application |

|---|---|---|

| Calibration Against Standards | Measure known zero value; deviation indicates offset [18] | Measure multiple standards across range; proportional pattern indicates scale error [18] |

| Statistical Analysis | Consistent mean difference from reference in Bland-Altman plots | Regression of differences on means in Bland-Altman plots; a non-zero slope reveals proportional bias |

| Graphical Identification | All data points shifted equally from reference line on identity plot [8] | Fan-shaped pattern in residual plots [8] |

| Experimental Protocol | Linear regression with a freely estimated intercept; comparison of the intercept against ideal value of 0 | Comparison of regression slope against ideal value of 1 |

Experimental Protocols for Error Identification

Comprehensive Calibration Procedures

Protocol Objective: Establish reliable methodology for detecting and quantifying both offset and scale factor errors in analytical instruments.

Materials and Equipment:

- Certified reference standards covering operational measurement range

- Instrument under investigation (analytical balance, HPLC, spectrophotometer, etc.)

- Environmental monitoring equipment (temperature, humidity sensors)

- Statistical analysis software (R, Python, or specialized calibration packages)

Step-by-Step Implementation:

- Preparation Phase: Acquire certified reference materials with traceable values spanning the instrument's operational range. Allow sufficient acclimatization time for instruments and standards to reach environmental equilibrium [18].

- Zero-Point Assessment: Measure blank or zero standard repeatedly (n≥10) to establish baseline offset. Calculate mean and standard deviation to quantify zero-setting error.

- Multi-Point Calibration: Measure reference standards across operational range in randomized order with sufficient replication (n≥5 per level) to minimize interference from random error.

- Data Collection: Record measurements systematically, noting environmental conditions that might introduce additional systematic variations (temperature, humidity, etc.) [18].

- Regression Analysis: Perform linear regression of measured values against reference values. The y-intercept quantifies offset error, while deviation of the slope from unity quantifies scale factor error.

- Uncertainty Quantification: Calculate confidence intervals for both intercept and slope parameters to establish statistical significance of observed errors.
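The regression and uncertainty steps of this protocol might look like the following sketch, which fits measured against reference values and reports confidence intervals for the intercept (offset) and slope (scale factor). The critical t value is supplied by the caller, and the data, noise values, and function name are hypothetical.

```python
from math import sqrt
from statistics import mean

def calibration_fit(reference, measured, t_crit):
    """OLS fit of measured on reference with confidence intervals for both parameters."""
    n = len(reference)
    x_bar, y_bar = mean(reference), mean(measured)
    sxx = sum((x - x_bar) ** 2 for x in reference)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(reference, measured))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residuals = [y - (intercept + slope * x) for x, y in zip(reference, measured)]
    s = sqrt(sum(r ** 2 for r in residuals) / (n - 2))     # residual standard deviation
    se_slope = s / sqrt(sxx)
    se_intercept = s * sqrt(1 / n + x_bar ** 2 / sxx)
    return {
        "intercept": intercept,
        "slope": slope,
        "intercept_ci": (intercept - t_crit * se_intercept, intercept + t_crit * se_intercept),
        "slope_ci": (slope - t_crit * se_slope, slope + t_crit * se_slope),
    }

# Hypothetical standards: offset 0.5, slope 1.02, plus small random noise.
reference = [0.0, 10.0, 20.0, 30.0, 40.0, 50.0]
noise = [0.05, -0.04, 0.02, -0.03, 0.04, -0.04]
measured = [0.5 + 1.02 * x + e for x, e in zip(reference, noise)]

fit = calibration_fit(reference, measured, t_crit=2.776)   # two-sided 95%, df = 4
print(fit)
```

An intercept interval that excludes 0 points to an offset error, and a slope interval that excludes 1 points to a scale factor error.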

Method Comparison Studies

Protocol Objective: Identify systematic errors between established reference methods and new analytical procedures.

Experimental Design:

- Sample Selection: Obtain or prepare samples representing actual test matrices with values distributed across clinically or analytically relevant range.

- Measurement Protocol: Analyze all samples using both reference and test methods under identical conditions, employing randomization to minimize order effects.

- Data Analysis: Apply Bland-Altman analysis to identify fixed bias (offset error) and proportional bias (scale factor error) between methods.

- Statistical Testing: Use paired t-tests for offset detection and regression-based approaches for scale factor identification.
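The Bland-Altman step in the design above can be sketched as follows. The example computes the mean difference (fixed bias), the conventional 95% limits of agreement (mean ± 1.96 SD), and the slope of the differences regressed on the pair means as a simple indicator of proportional bias. The function name and data are hypothetical.

```python
from statistics import mean, stdev

def bland_altman(test, reference):
    """Return (bias, limits_of_agreement, trend) for paired measurements."""
    diffs = [t - r for t, r in zip(test, reference)]
    means = [(t + r) / 2 for t, r in zip(test, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)
    loa = (bias - 1.96 * sd, bias + 1.96 * sd)
    # Slope of differences on means: near 0 suggests no proportional bias.
    m_bar = mean(means)
    smm = sum((m - m_bar) ** 2 for m in means)
    trend = sum((m - m_bar) * (d - bias) for m, d in zip(means, diffs)) / smm
    return bias, loa, trend

reference = [10.0, 20.0, 30.0, 40.0, 50.0]
offset_method = [11.0, 21.0, 31.0, 41.0, 51.0]        # constant +1 difference
proportional_method = [11.0, 22.0, 33.0, 44.0, 55.0]  # reads 10% high

print(bland_altman(offset_method, reference))
print(bland_altman(proportional_method, reference))
```

In the first case the differences are constant, so the trend is zero and only a fixed bias appears. In the second the differences grow with the measured level, which shows up as a positive trend.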

Visualization of Systematic Error Concepts

Conceptual Relationship Diagram

Figure 1: Systematic Error Taxonomy and Management Framework

Experimental Workflow for Systematic Error Assessment

Figure 2: Systematic Error Assessment and Correction Workflow

The Scientist's Toolkit: Essential Research Materials

Table 3: Research Reagent Solutions for Systematic Error Management

| Reagent/Equipment | Specification Requirements | Primary Function in Error Management |

|---|---|---|

| Certified Reference Materials | Traceable to national/international standards with documented uncertainty | Establish measurement traceability; quantify offset and scale factor errors through calibration [18] |

| Quality Control Materials | Stable, homogeneous materials with well-characterized properties | Monitor measurement system performance; detect systematic error drift over time |

| Calibration Standards | Purity ≥99.5%, covering analytical measurement range | Create multi-point calibration curves; identify proportional errors through linear regression |

| Blank Matrix Solutions | Matched to sample matrix without analytes of interest | Establish baseline measurements; identify and correct for offset errors |

| Environmental Monitors | Temperature (±0.1°C), humidity (±2% RH) sensors | Identify environmental factors contributing to systematic measurement variations [18] |

| Statistical Analysis Software | Capable of weighted regression, bias estimation, and uncertainty calculation | Quantify systematic error parameters and their statistical significance |

Quantitative Data Synthesis

Table 4: Systematic Error Impact and Correction Data

| Parameter | Offset Error | Scale Factor Error |

|---|---|---|

| Impact on Accuracy | Constant absolute inaccuracy | Proportional inaccuracy increasing with magnitude |

| Detection Confidence | High with adequate zero measurements | Requires multiple points across measurement range |

| Typical Magnitude Ranges | 0.1-5% of measurement range | 0.5-10% proportional deviation |

| Correction Efficacy | 90-99% reduction with proper zeroing [18] | 85-95% reduction with slope correction [18] |

| Residual Uncertainty | 0.01-0.5% of offset magnitude | 0.1-1% of scaling factor |

| Validation Requirements | Comparison to blank/zero standard | Linear regression through multiple standards |

Methodological Framework for Error Reduction

Triangulation Approach

Implement multiple measurement techniques to identify systematic biases between methods. For instance, in protein quantification, combine UV spectrophotometry, Bradford assay, and quantitative amino acid analysis to detect method-specific systematic errors [8].

Regular Calibration Protocols

Establish scheduled calibration intervals based on instrument stability data and regulatory requirements. Automated calibration systems demonstrate 15% higher consistency compared to manual processes, significantly reducing human-induced systematic errors [18].

Randomized Measurement Sequences

Counter systematic drift by randomizing sample analysis order, particularly in extended analytical runs where instrument performance may gradually change.
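One simple way to randomize the analysis order is to shuffle the sample identifiers with a seeded generator, so the sequence is random but can be reproduced and documented. The function name and sample labels below are hypothetical.

```python
import random

def randomized_run_order(sample_ids, seed=None):
    """Return a shuffled copy of sample_ids; a fixed seed makes the order reproducible."""
    rng = random.Random(seed)
    order = list(sample_ids)
    rng.shuffle(order)
    return order

samples = [f"S{i:02d}" for i in range(1, 13)]
run_order = randomized_run_order(samples, seed=2024)
print(run_order)
```

With a randomized order, gradual instrument drift is spread across concentration levels instead of being confounded with them.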

Environmental Control

Maintain consistent laboratory conditions (temperature, humidity) as systematic errors in temperature-sensitive equipment can reach 0.5% without proper environmental controls [18].

In method comparison research, particularly within regulated environments like drug development, the identification and quantification of offset and scale factor errors represent critical components of method validation. Through systematic implementation of the protocols and frameworks outlined in this guide, researchers can significantly enhance measurement accuracy, ensure regulatory compliance, and produce more reliable scientific conclusions. The quantitative differentiation between these distinct error types enables targeted correction strategies, ultimately strengthening the foundation for analytical decision-making in pharmaceutical development and scientific research.

Systematic errors in clinical medication dosing represent a significant and persistent challenge in healthcare, contributing to patient harm and increased medical costs. Framed within the broader context of method comparison research, this technical guide examines the nature and impact of these errors through real-world data and established methodologies for their quantification. We explore how fixed and proportional biases introduce inaccuracies in the medication use process, leading to wrong-drug events and dosing inaccuracies. The analysis leverages findings from healthcare safety reports and clinical studies to illustrate the consequences of these errors. Furthermore, this guide details experimental protocols for error detection and quantification, including comparison of methods experiments and Bland-Altman analysis. By presenting structured data, visual workflows, and an essential research toolkit, this whitepaper provides drug development professionals and clinical researchers with actionable strategies to identify, quantify, and mitigate systematic dosing errors, ultimately enhancing medication safety and patient outcomes.

In method comparison research, a systematic error is defined as a consistent, reproducible inaccuracy introduced by a flaw in the measurement system or methodology. Unlike random errors, which vary unpredictably, systematic errors deviate from the true value in a predictable pattern, often characterized as either fixed bias (constant across all values) or proportional bias (scaling with the magnitude of the measurement) [20] [9]. In the context of clinical medication dosing, these errors are not merely statistical concepts but represent critical risks to patient safety. They can originate from various sources, including instrumental miscalibration, procedural shortcomings, human factors, and inherent flaws in clinical processes.

The International Union of Crystallography provides a broad definition, stating that systematic errors constitute the "contribution of the deficiencies of the model to the difference between an estimate and the true value of a quantity" [21]. This "model" encompasses not only the physical instrumentation but also the entire clinical workflow—from prescription and transcription to dispensing and administration. When comparing a new method or process to an established one, the core objective is to estimate the inaccuracy or systematic error present. The systematic differences observed at critical medical decision points are of paramount interest, as they directly impact clinical outcomes [9]. This paper frames the issue of medication dosing errors within this rigorous methodological framework, treating the medication use process as a system whose outputs must be validated against the gold standard of patient safety and therapeutic intent.

Characteristics and Typology of Systematic Medication Dosing Errors

Systematic errors in medication dosing manifest in two primary forms, each with distinct characteristics and implications for clinical practice.

Fixed Bias refers to a constant discrepancy that is independent of the dose size. For example, a systematic miscalibration in an automated dispensing cabinet that adds 0.1 mg to every dose, so that a 1 mg dose is measured as 1.1 mg and a 10 mg dose as 10.1 mg, demonstrates a fixed bias of +0.1 mg. This type of error is particularly dangerous for high-potency medications or those with a narrow therapeutic index, where even a small absolute error can lead to toxicity or therapeutic failure. In method comparison studies, a fixed bias is indicated by a non-zero y-intercept in regression analysis [9].

Proportional Bias, in contrast, is an error whose magnitude is proportional to the dose being measured. This is often revealed in method comparison studies by a slope significantly different from 1.0 in regression analysis [9]. An example would be a smart pump that delivers 5% less volume than programmed, resulting in a 0.5 mL underdose for a 10 mL dose, but a 5 mL underdose for a 100 mL dose. This type of error can lead to significant under- or over-dosing across a wide range of medication orders, affecting a larger patient population.

A prominent real-world manifestation of systematic errors is the Wrong Drug Event (WDE), where a patient receives a medication different from the one intended. An analysis of 450 such events in Pennsylvania healthcare facilities revealed that insulin was the most frequently involved medication class, comprising 10.3% of all reported medications in WDEs. Other high-risk classes included antibacterials for systemic use, electrolyte solutions, and opioids [22]. These errors frequently occur within the same medication class, often driven by look-alike, sound-alike (LASA) drug names and shared stem names, such as the "phrine" stem in vasopressors (e.g., epinephrine and norepinephrine) [22]. The case of RaDonda Vaught, where vecuronium was fatally administered instead of midazolam, starkly illustrates the catastrophic potential of WDEs stemming from systematic process failures [22].

Table 1: Common Medication Classes Involved in Wrong Drug Events (WDEs)

| Medication Class | Percentage of Reported Medications in WDEs | Examples of Commonly Confused Pairs |

|---|---|---|

| Insulins | 10.3% | Different types of insulin (e.g., long-acting vs. rapid-acting) |

| Antibacterials for Systemic Use | 10.0% | Cefazolin vs. other cephalosporins |

| Electrolyte Solutions | 6.9% | Various concentrations of sodium chloride or potassium chloride |

| Opioids | 5.7% | Hydromorphone vs. morphine |

| Cardiac Stimulants | Information Missing | Epinephrine vs. norepinephrine |

Quantifying the Impact: Data from Real-World Case Studies

The consequences of systematic medication errors are quantifiable in terms of both their frequency and the severity of patient harm they cause. A large-scale study analyzing wrong drug events provides critical insight into the prevalence and distribution of these errors.

Error Frequency and Distribution by Care Area and Staff

A retrospective analysis of hospital errors revealed that the majority of incidents (52.68%) were attributed to nurses, with the highest proportion of errors occurring during the night shift (42.60%) [23]. The most common types of errors identified were documentation errors (23.32%), medication errors (22.28%), and technical errors (17.69%) [23]. This distribution highlights critical vulnerabilities in the medication-use process, particularly at the points of administration and documentation. Furthermore, errors are not confined to a single care area. Data from 127 healthcare facilities showed that wrong drug events were most prevalent in medical/surgical units (19.6%), intensive care units (12.7%), emergency departments (12.4%), and surgical services (11.8%) [22], indicating a systemic risk across the healthcare environment.

Severity of Outcomes for Patients

The ultimate impact of these errors on patients varies widely. Analysis shows that a significant portion of errors (25.55%) are intercepted before reaching the patient, while another 26.86% reach the patient but cause no detectable harm [23]. However, a concerning minority result in serious consequences: 2.28% of errors caused major harm to patients, and 1.04% directly led to patient deaths [23]. These figures underscore that while many errors are caught or are fortunate enough to be non-harmful, a persistent fraction results in severe, irreversible damage.

Impact of Technological Interventions

The implementation of medication-related technology has proven to be a powerful strategy for mitigating systematic dispensing errors. A before-and-after study at a large academic medical center demonstrated that the introduction of Automated Dispensing Cabinets (ADC), Barcode Medication Administration (BCMA), and Smart Dispensing Counters (SDC) led to a dramatic 77.78% reduction in the average dispensing error incidence rate, from 0.0063% to 0.0014% [24]. Specifically, the frequency of "wrong drug" errors, the most common type at baseline, decreased by 81.26% following the full implementation of these technologies [24]. This provides compelling real-world evidence that targeted technological interventions can effectively address and reduce systematic flaws in the medication dispensing process.
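The reported 77.78% figure is a relative reduction, and it can be reproduced from the two incidence rates quoted above:

```latex
\text{Relative reduction}
  = \frac{0.0063\% - 0.0014\%}{0.0063\%} \times 100\%
  = \frac{0.0049}{0.0063} \times 100\%
  \approx 77.78\%
```

The absolute reduction, by contrast, is 0.0049 percentage points, which is why both the baseline rate and the relative change are needed to judge the practical effect.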

Table 2: Severity and Outcomes of Reported Hospital Medication Errors

| Level of Harm Severity | Description | Percentage of Errors |

|---|---|---|

| No Error | Error occurred but did not reach the patient. | 25.55% |

| Error, No Harm | Error reached the patient but caused no detectable harm. | 26.86% |

| Minor Harm | Error contributed to or resulted in minor patient harm. | Data Not Specified |

| Major Harm | Error contributed to or resulted in major patient harm. | 2.28% |

| Death | Error directly or indirectly resulted in patient death. | 1.04% |

Methodological Framework: Experimental Protocols for Error Quantification

To systematically identify and quantify errors in clinical and research settings, standardized experimental protocols are essential. Two core methodologies are the Comparison of Methods Experiment and the Bland-Altman Analysis.

Comparison of Methods Experiment

This experiment is specifically designed to estimate the inaccuracy or systematic error between a new (test) method and an established (comparative) method.

- Purpose and Design: The primary goal is to estimate systematic error by analyzing patient samples using both the test and comparative methods, then calculating the observed differences [9]. The study should be designed to cover the entire working range of the method using a minimum of 40 different patient specimens carefully selected to represent the spectrum of diseases and concentrations encountered in routine practice [9].

- Comparative Method Selection: The choice of comparative method is critical. A reference method with documented correctness is ideal, as any differences can be attributed to the test method. When using a routine method as the comparator, large and medically unacceptable differences require additional investigation through recovery and interference experiments to identify which method is inaccurate [9].

- Data Collection and Analysis: Each specimen should be analyzed by both methods within a short time frame (e.g., two hours) to minimize stability issues. Data analysis should begin with graphical inspection, using a difference plot (test result minus comparative result vs. comparative result) to visualize fixed and proportional biases and identify outliers [9].

- For data covering a wide analytical range, linear regression statistics (slope, y-intercept) are calculated. The systematic error (SE) at a critical medical decision concentration (Xc) is determined as SE = Yc - Xc, where Yc is the value calculated from the regression line Yc = a + bXc [9].

- For a narrow analytical range, the average difference (bias) and standard deviation of the differences are more appropriate metrics [9].

Bland-Altman Analysis for Fixed and Proportional Bias

Bland-Altman analysis is a robust statistical method used to assess the agreement between two measurement techniques, specifically designed to identify fixed and proportional biases.

- Protocol Application: This analysis was applied in a study investigating the two-step test for locomotive syndrome. Participants performed the test twice within a 7-day interval. The Bland-Altman plot was used to visualize the differences between the two tests against their means, establishing the limits of agreement (LOA) and calculating the minimal detectable change (MDC) [20].

- Interpretation of Bias: In the mentioned study, fixed bias was identified in young adults, where the result for the two-step test tended to significantly increase during retesting. In contrast, no systematic errors were detected in the older adult group under the same protocol [20]. This highlights how the same measurement protocol can exhibit different error characteristics across distinct populations.

- Calculation of Key Metrics: The analysis produces quantitative metrics that define the scope of error. For older adults, the MDC was calculated as 26.9 cm for test length and 0.17 cm/height for the normalized test value [20]. This means a change greater than these values is required to be considered a real change beyond measurement error. The LOA, which describes the range within which most differences between measurements lie, in young adults ranged from -11.5 to 28.2 cm for length and from -0.07 to 0.17 cm/height for the normalized value [20].
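As a worked illustration, and assuming the limits of agreement follow the conventional Bland-Altman definition (mean difference ± 1.96 × SD of the differences), the mean difference and SD implied by the young-adult limits for test length can be recovered from the reported values. The symbols below are introduced here for illustration and do not appear in the cited study.

```latex
\bar{d} = \frac{\mathrm{LOA}_{\text{upper}} + \mathrm{LOA}_{\text{lower}}}{2}
        = \frac{28.2 + (-11.5)}{2} = 8.35\ \text{cm}
\qquad
s_d = \frac{\mathrm{LOA}_{\text{upper}} - \mathrm{LOA}_{\text{lower}}}{2 \times 1.96}
    = \frac{39.7}{3.92} \approx 10.13\ \text{cm}
```

Under that assumption, the positive mean difference of about 8 cm is consistent with the fixed bias reported for young adults, whose results tended to increase on retesting.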

The Scientist's Toolkit: Essential Reagents and Methods for Error Research

Investigating systematic errors in medication dosing requires a combination of analytical techniques, reference materials, and specialized software tools. The following table details key components of a research toolkit for this field.

Table 3: Research Reagent Solutions for Investigating Systematic Dosing Errors

| Tool/Reagent | Function/Application | Specific Example in Research |

|---|---|---|

| Bland-Altman Analysis | A statistical method to quantify agreement between two measurement techniques, identifying fixed and proportional bias. | Used to assess systematic error and minimal detectable change in clinical tests like the two-step test [20]. |

| Linear Regression Statistics | Calculates the slope and y-intercept for method comparison data over a wide analytical range to quantify proportional and constant error. | Employed in comparison of methods experiments to estimate systematic error at critical decision concentrations [9]. |

| Anatomical Therapeutic Chemical (ATC) Classification | A standardized system for classifying medications, enabling systematic analysis of error patterns by drug class. | Used to categorize medications involved in Wrong Drug Events (e.g., insulin, antibacterials, opioids) [22]. |

| Weighting Schemes (e.g., SHELXL) | Algorithms used to correct for underestimated standard uncertainties in measurement data, improving error quantification. | Applied in crystallography to address variances in observed intensities, a concept applicable to analytical dose measurement [21]. |

| High-Alert Medication List | A curated list of drugs that bear a heightened risk of causing significant patient harm when used in error. | Serves as a reference for prioritizing risk-assessment and error prevention strategies (e.g., ISMP List) [22]. |

| Automated Dispensing Cabinet (ADC) | A technological intervention studied to reduce dispensing errors by controlling and tracking drug distribution near the point of care. | Implementation led to a 39.68% reduction in average dispensing error rates in a hospital study [24]. |

Systematic errors in clinical medication dosing are not random failures but predictable and quantifiable flaws embedded within healthcare processes and measurement systems. The real-world consequences, from wrong-drug events to inaccurate dosing, lead to significant patient harm and impose substantial costs on healthcare systems. As demonstrated through case studies and methodological analysis, a rigorous, scientific approach is required to combat these errors. This involves the application of standardized experimental protocols like the Comparison of Methods experiment and Bland-Altman analysis to precisely quantify fixed and proportional biases. Furthermore, the successful implementation of technological interventions such as ADCs, BCMA, and SDCs provides a clear path forward, having been proven to reduce dispensing errors by over 75% in real-world settings [24].

Future efforts must focus on the proactive identification of risks before they result in patient harm. This includes the widespread adoption of targeted best practices, such as those promoted by the ISMP, which address specific vulnerabilities like patient weight-based dosing and vaccine administration [25]. Cultivating a robust safety culture that empowers all healthcare staff to report concerns and participate in process improvement is equally critical. For researchers and drug development professionals, integrating these error detection and mitigation methodologies into the design of clinical trials and drug delivery systems will be paramount. By continuing to treat medication safety through the lens of method comparison and systematic error analysis, the healthcare and research communities can build more reliable, resilient systems that ultimately enhance therapeutic outcomes and protect patient lives.

Designing and Executing a Method Comparison Experiment

In method comparison studies, the core objective is to quantify the disagreement between two quantitative measurement methods applied to the same set of samples. Estimating inaccuracy is central to this process: it identifies and measures systematic error, or bias, defined as a consistent deviation of one method from another or from a reference value. These experiments determine whether a new, potentially faster or cheaper method can reliably replace an established procedure without compromising the validity of the results [26].

Traditional statistical methods for assessing agreement, such as the well-known Bland-Altman limits of agreement, have often implicitly relied on the assumption of a constant underlying "individual" latent trait being measured [26]. This assumption is frequently violated in real-world biomedical and clinical research where the measured characteristic in a person (e.g., a biomarker) can exhibit natural biological variation, diurnal rhythms, or linear time trends. When this variability is unaccounted for, it can be confounded with the measurement error itself, leading to biased estimates of the methods' inaccuracy. Therefore, a modern approach to estimating inaccuracy must extend the standard measurement error model to disentangle true physiological variation from the systematic and random errors introduced by the measurement techniques [26].

Experimental Protocols and Workflows

A robust method comparison experiment requires a carefully designed protocol to ensure that estimates of inaccuracy (bias) and precision are valid and reliable.

Core Experimental Protocol

A method comparison experiment designed to estimate inaccuracy has two parts: parallel measurement of every sample by both methods, followed by the data analysis required to isolate systematic error. The key stages are described below.

Detailed Methodological Description

- Sample Selection and Preparation: A representative set of samples that covers the entire analytical measurement range of interest should be selected. The sample size must provide sufficient statistical power to detect a clinically relevant bias. If the underlying trait is suspected to be variable (e.g., biomarkers with diurnal variation), the timing of sample collection should be standardized or explicitly recorded for inclusion in the extended model [26].

- Data Collection: Each sample is measured by both Method A (typically the reference or standard method) and Method B (the new or alternative method). The measurements should be performed independently, and if possible, the order of analysis should be randomized to avoid sequence effects. Replicate measurements per sample are highly recommended to better estimate precision and account for random error [26].

- Data Cleaning and Preparation: The raw data must be inspected for errors, outliers, and missing values. Techniques such as imputation or case deletion may be applied to handle missing data, and transformations (e.g., log transformations) might be necessary to stabilize variance or normalize distributions [27].

- Statistical Analysis for Inaccuracy Estimation:

- Bland-Altman Analysis: A foundational technique in which the differences between the two methods are plotted against their averages. The mean difference estimates the average bias (systematic error), while the limits of agreement, calculated as the mean difference ± 1.96 × the standard deviation of the differences, describe the range within which about 95% of the differences between methods are expected to fall [26].

- Advanced Modeling: For situations where the latent trait is not constant, standard methods like Bland-Altman can be misleading. The general measurement error model extended by Taffé (2025) is more appropriate. This model can be estimated using a Two-Stage Method [26]:

- Stage 1: Model the relationship between the two methods, potentially identifying both a fixed bias (differential bias) and a proportional bias.

- Stage 2: Use the residuals from the first model to estimate the random error components (precision) of each method, separating them from the natural variability of the underlying trait.

- Interpretation: The estimated bias must be evaluated for clinical or practical significance. A statistically significant bias may be trivial in practice, while a small but consistent bias could be critical in certain contexts.
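
The Bland-Altman step described above can be sketched in a few lines of Python. This is a minimal illustration, not a validated implementation: it assumes one measurement per sample per method and a constant latent trait, and the function name `bland_altman` and the paired values are hypothetical.

```python
import numpy as np

def bland_altman(method_a, method_b):
    """Return bias, SD of differences, and 95% limits of agreement (B - A)."""
    a = np.asarray(method_a, dtype=float)
    b = np.asarray(method_b, dtype=float)
    if a.shape != b.shape:
        raise ValueError("paired measurements must have the same length")
    diff = b - a                 # Method B minus Method A
    bias = diff.mean()           # average bias (systematic error)
    sd = diff.std(ddof=1)        # sample standard deviation of the differences
    return {
        "bias": bias,
        "sd": sd,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
        "means": (a + b) / 2.0,  # x-axis values for the plot
        "differences": diff,     # y-axis values for the plot
    }

# Hypothetical paired results (illustrative values only)
reference = [4.1, 5.0, 6.2, 7.4, 8.8, 10.1]
candidate = [4.4, 5.2, 6.6, 7.5, 9.3, 10.4]

result = bland_altman(reference, candidate)
print(f"bias = {result['bias']:.3f}")
print(f"limits of agreement = {result['loa_lower']:.3f} to {result['loa_upper']:.3f}")
```

Plotting `differences` against `means` gives the usual Bland-Altman plot. Whether the resulting bias matters is a clinical judgment, as noted under Interpretation.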

Quantitative Framework and Data Analysis

The quantitative assessment of inaccuracy relies on specific statistical parameters derived from the data. The following table summarizes the key metrics and their interpretations.

Table 1: Key Quantitative Metrics for Estimating Inaccuracy

| Metric | Description | Interpretation in Inaccuracy Estimation |
|---|---|---|
| Average Bias (Mean Difference) | The arithmetic mean of the differences (Method B - Method A). | Estimates the constant systematic error (fixed bias). A value significantly different from zero indicates inaccuracy. |
| Coefficient of Determination (R²) | The proportion of variance in one method explained by the other. | A high R² suggests a strong linear relationship but does not guarantee agreement: two methods can correlate almost perfectly and still differ by a fixed or proportional bias. |
| Root Mean Square Error (RMSE) | The square root of the average squared differences between methods. | A comprehensive measure of total disagreement, incorporating both systematic and random errors. A lower RMSE indicates better overall agreement. |
| Limits of Agreement (LoA) | The range within which 95% of the differences between the two methods are expected to lie (Mean Difference ± 1.96 × SD of differences). | Quantifies the expected spread of differences for most individual measurements. Wide limits indicate high random error, which can obscure the detection of systematic error. |
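
To make the distinction between correlation and agreement concrete, the sketch below computes the four metrics from Table 1 for a deliberately artificial case. The data are hypothetical and noise-free: Method B is constructed to read 10% high plus a constant offset of 2 units, and the function name `agreement_metrics` is an assumption made for illustration.

```python
import numpy as np

def agreement_metrics(method_a, method_b):
    """Compute the Table 1 metrics from paired results (differences are B - A)."""
    a = np.asarray(method_a, dtype=float)
    b = np.asarray(method_b, dtype=float)
    diff = b - a
    bias = diff.mean()
    sd = diff.std(ddof=1)
    r = np.corrcoef(a, b)[0, 1]  # Pearson correlation between the methods
    return {
        "bias": bias,
        "r_squared": r ** 2,
        "rmse": np.sqrt(np.mean(diff ** 2)),
        "loa": (bias - 1.96 * sd, bias + 1.96 * sd),
    }

# Hypothetical data: B = 1.10 * A + 2, with no random error at all
a = np.array([10.0, 20.0, 30.0, 40.0, 50.0])
b = 1.10 * a + 2.0

metrics = agreement_metrics(a, b)
for name, value in metrics.items():
    print(name, value)
```

Here R² is essentially 1 because the relationship is perfectly linear, yet the average bias is 5 units and the RMSE is larger still. The strong correlation says nothing about whether the two methods agree.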

The relationships between these statistical concepts and the final assessment of a method's accuracy can be visualized through the following logical pathway.

The Scientist's Toolkit: Essential Research Reagents and Materials

The execution of a method comparison study requires specific materials and solutions tailored to the analytical methods being evaluated. The following table details common essential categories.

Table 2: Key Research Reagent Solutions for Method Comparison Studies

| Item | Function |
|---|---|
| Certified Reference Materials (CRMs) | Provide samples with known, matrix-matched, and traceably assigned values. Serve as the highest-order standard for quantifying absolute inaccuracy (trueness) of a method against a definitive reference. |
| Quality Control (QC) Materials | Used to monitor the stability and precision of each method throughout the experiment. Help distinguish actual systematic bias between methods from drift within a single method's performance. |
| Calibrators | A series of samples with known concentrations used to establish the analytical calibration curve for each instrument. Consistent and accurate calibration is fundamental to a fair comparison. |
| Sample Panel with Validated Linearity | A set of patient samples or pooled sera that covers the full reportable range (from low to high values). Essential for detecting proportional bias, where the disagreement between methods changes with concentration. |
| Statistical Software Packages (e.g., R, Python, SPSS, SAS) | Provide the computational environment for performing advanced statistical analyses, such as Bland-Altman plots, regression for proportional bias, and implementing specialized models like the two-stage method for non-constant traits [26] [27]. |

In method comparison research, the primary goal is to estimate systematic error, or inaccuracy, which represents the consistent deviation of a new test method's results from the true value [9]. Identifying and quantifying this error is a fundamental step in method validation, ensuring that laboratory measurements are reliable and medically useful [9]. Systematic errors can manifest as a constant shift across the measurement range (constant error) or as a deviation that changes proportionally with the analyte concentration (proportional error) [9].
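
The difference between constant and proportional error can be illustrated by regressing the test method's results on the comparative method's results: the intercept reflects a constant shift, and a slope other than 1 reflects a proportional deviation. The sketch below is a minimal example under stated assumptions. It uses ordinary least squares, which treats the comparative values as error-free, and the function name and the data are hypothetical.

```python
import numpy as np

def constant_and_proportional_error(comparative, test):
    """Estimate constant and proportional error from a straight-line fit."""
    x = np.asarray(comparative, dtype=float)
    y = np.asarray(test, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)  # least-squares line y = intercept + slope * x
    return {
        "slope": slope,
        "intercept": intercept,
        "constant_error": intercept,                     # shift present at every concentration
        "proportional_error_pct": (slope - 1.0) * 100.0,  # deviation that grows with concentration
    }

# Hypothetical, noise-free data: the test method reads 5% high plus 3 units
comparative = np.array([50.0, 100.0, 150.0, 200.0, 250.0])
test = 1.05 * comparative + 3.0

est = constant_and_proportional_error(comparative, test)
print(f"constant error: {est['constant_error']:.2f} units")
print(f"proportional error: {est['proportional_error_pct']:.2f} %")

# The total deviation therefore changes across the measurement range
for level in (50.0, 250.0):
    deviation = est["intercept"] + est["slope"] * level - level
    print(f"deviation at {level:.0f}: {deviation:.2f}")
```

With real data, both methods carry random error, so the fitted slope and intercept are estimates with their own uncertainty.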

The choice of a comparative method is critical because the interpretation of the observed systematic error depends on the quality of the method used for comparison [9]. This technical guide details the selection criteria and experimental protocols for using reference and routine methods as comparators, providing a framework for robust method validation.

Core Concepts: Reference Methods and Routine Methods

Reference Methods

A reference method is a thoroughly validated analytical procedure whose results are known to be correct through comparison with definitive methods or via traceable standard reference materials [9]. It possesses a high level of accuracy and minimal systematic error. When a test method is compared against a reference method, any significant observed difference is confidently attributed to the inaccuracy of the test method [9]. This makes reference methods the ideal choice for method comparison studies, though they are not available for every analyte.

Routine Methods

A routine method, often referred to more generally as a comparative method, is a standardized procedure used in daily laboratory practice whose correctness has not necessarily been established to the same rigorous standard as a reference method [9]. When a test method is compared with a routine method, small differences suggest that the two methods have similar relative accuracy. However, if the differences are large and medically unacceptable, additional investigations (such as recovery or interference experiments) are required to determine which method is the source of the error [9].

Table 1: Key Characteristics of Comparative Method Types

| Characteristic | Reference Method | Routine (Comparative) Method |
|---|---|---|
| Fundamental Definition | A high-quality method with documented correctness [9] | A general term for a method used in comparison, without implied documented correctness [9] |
| Basis of Accuracy | Traceability to definitive methods or reference materials [9] | Established through routine use and validation; may be relative [9] |
| Interpretation of Discrepancies | Differences are assigned to the test method [9] | Source of error (test or comparative method) is uncertain and requires investigation [9] |
| Availability | Limited; not available for all analytes | Widely available |
| Typical Use Case | Definitive method validation studies [9] | Common laboratory method comparisons and transfers [9] |

Experimental Protocol for the Comparison of Methods

The comparison of methods experiment is designed to estimate systematic error using real patient specimens [9]. The following provides a detailed methodology.

Pre-Experimental Considerations

- Number of Patient Specimens: A minimum of 40 different patient specimens is recommended [9]. The quality and range of concentrations are more critical than the total number. Specimens should cover the entire working range of the method and represent the spectrum of diseases expected in its routine application.

- Specimen Stability: Specimens should be analyzed by both methods within two hours of each other to prevent degradation, unless specific stability data indicates otherwise [9]. Stability can be improved by using preservatives, separating serum/plasma, refrigeration, or freezing.

- Measurement Replication: Although common practice is to analyze each specimen once by each method, duplicate measurements are advantageous [9]. Duplicates should come from two separate sample cups analyzed in different runs, or at least in a different order, so that sample mix-ups and transposition errors can be identified.

- Time Period: The experiment should span a minimum of 5 days, and preferably up to 20 days, to incorporate different analytical runs and minimize bias from a single run [9]. This can involve analyzing 2-5 patient specimens per day.
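
The scheduling recommendations above can be turned into a simple run plan. The following sketch is illustrative only: the function name `plan_comparison_runs`, the specimen labels, and the choice to randomize which method analyzes each specimen first are assumptions, not part of the cited protocol.

```python
import random

def plan_comparison_runs(specimen_ids, n_days, seed=0):
    """Spread specimens evenly over n_days and randomize method order per specimen."""
    if n_days < 5:
        raise ValueError("the protocol recommends a minimum of 5 days")
    rng = random.Random(seed)      # fixed seed so the plan is reproducible
    ids = list(specimen_ids)
    rng.shuffle(ids)               # avoid analyzing specimens in collection order
    plan = {day: [] for day in range(1, n_days + 1)}
    for i, specimen in enumerate(ids):
        day = i % n_days + 1       # round-robin assignment to days
        order = rng.sample(["test", "comparative"], 2)  # which method runs first
        plan[day].append((specimen, order))
    return plan

# Hypothetical labels for the recommended minimum of 40 specimens
specimens = [f"S{i:02d}" for i in range(1, 41)]
plan = plan_comparison_runs(specimens, n_days=10)

for day, runs in plan.items():
    print(day, [specimen for specimen, _ in runs])
```

Forty specimens over 10 days gives 4 specimens per day, which falls inside the 2-5 per day range mentioned above. The two analyses of each specimen would still need to be completed within the stability window.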

Data Analysis and Interpretation

- Graphical Analysis: The first step in data analysis is to graph the results for visual inspection [9].

- Difference Plot: Used when methods are expected to show one-to-one agreement. Plot the difference (test method result minus comparative method result) on the y-axis against the comparative method result on the x-axis. Data should scatter around the zero line [9].

- Comparison Plot: Used when methods are not expected to agree one-to-one. Plot the test method result (y-axis) against the comparative method result (x-axis) to visualize the relationship and identify outliers [9].

- Statistical Calculations:

- For a Wide Analytical Range: Use linear regression analysis to calculate the slope ($b$), y-intercept ($a$), and standard deviation about the regression line ($s_{y/x}$) [9]. The systematic error ($SE$) at a critical medical decision concentration ($X_c$) is calculated as $SE = Y_c - X_c$, where $Y_c = a + bX_c$ [9].