Systematic Error in Science: Definition, Examples, and Strategies for Accurate Research

Abstract

This article provides a comprehensive overview of systematic error, a consistent and repeatable deviation from true values that can significantly compromise data accuracy in scientific research and drug development. It covers foundational concepts, including definitions and common sources like instrument miscalibration and procedural flaws. The content extends to methodological applications for identifying these errors in various research contexts, offers practical troubleshooting and optimization techniques to minimize bias, and includes a validation framework comparing systematic error to random error. Aimed at researchers and drug development professionals, this guide synthesizes strategies to enhance measurement validity and data reliability in biomedical and clinical studies.

What is Systematic Error? Foundational Concepts and Sources

Systematic error, often termed bias, refers to a consistent, reproducible inaccuracy that skews measurements in the same direction away from the true value [1] [2]. Unlike random errors, which arise from unpredictable fluctuations, systematic errors are inherently directional, meaning they consistently increase or decrease results, reducing measurement accuracy and potentially leading to false conclusions [3] [4]. In scientific research and drug development, identifying and mitigating systematic error is crucial because it cannot be eliminated by simply repeating measurements or averaging data, making it a more significant threat to data integrity than random error [1] [3].

The core challenge with systematic error lies in its consistent nature. Because it reproduces the same directional bias, it can escape notice during routine analysis, systematically distorting the relationship between variables and increasing the risk of Type I or II errors in hypothesis testing [1]. This is particularly critical in laboratory medicine and biologics development, where measurement inaccuracy can affect diagnostic outcomes, drug efficacy, and patient safety [5] [2].

Types of Systematic Error

Systematic errors manifest in two primary, quantifiable forms, each with distinct characteristics [1] [3]:

- Offset Error (or Additive/Zero-Setting Error): This occurs when a measurement instrument is not calibrated to a correct zero point. It shifts all measurements by a fixed amount, consistently adding or subtracting the same value regardless of the measurement magnitude. For example, a scale that consistently reads 5 grams heavy for every measurement exhibits an offset error [1] [3].

- Scale Factor Error (or Multiplicative/Proportional Error): This error arises when measurements consistently differ from the true value by a constant proportion (e.g., by 10%). Unlike offset errors, the absolute difference caused by a scale factor error changes with the magnitude of the measurement. An example is a measuring tape that has stretched, causing it to underreport all lengths by a fixed percentage [1] [3].
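To make the contrast concrete, the short Python sketch below applies each error type to the same set of true values. The helper names, the 5 g offset and the 10% under-reading are hypothetical choices for illustration only.

```python
# Hypothetical illustration of the two quantifiable forms of systematic error.
# The 5 g offset and the 10% under-reading are made-up values.


def with_offset_error(true_value: float, offset: float) -> float:
    """Offset (additive) error: the same absolute shift at every magnitude."""
    return true_value + offset


def with_scale_factor_error(true_value: float, factor: float) -> float:
    """Scale factor (multiplicative) error: the shift grows with magnitude."""
    return true_value * factor


for true_mass in (10.0, 100.0, 1000.0):  # grams
    offset_shift = with_offset_error(true_mass, 5.0) - true_mass
    scale_shift = with_scale_factor_error(true_mass, 0.90) - true_mass
    print(
        f"true={true_mass:7.1f} g  "
        f"offset shift={offset_shift:+6.1f} g  "
        f"scale factor shift={scale_shift:+7.1f} g"
    )
```

The offset shift stays at +5.0 g for every mass, while the scale factor shift grows from -1.0 g to -100.0 g, which is the signature that distinguishes the two error types.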

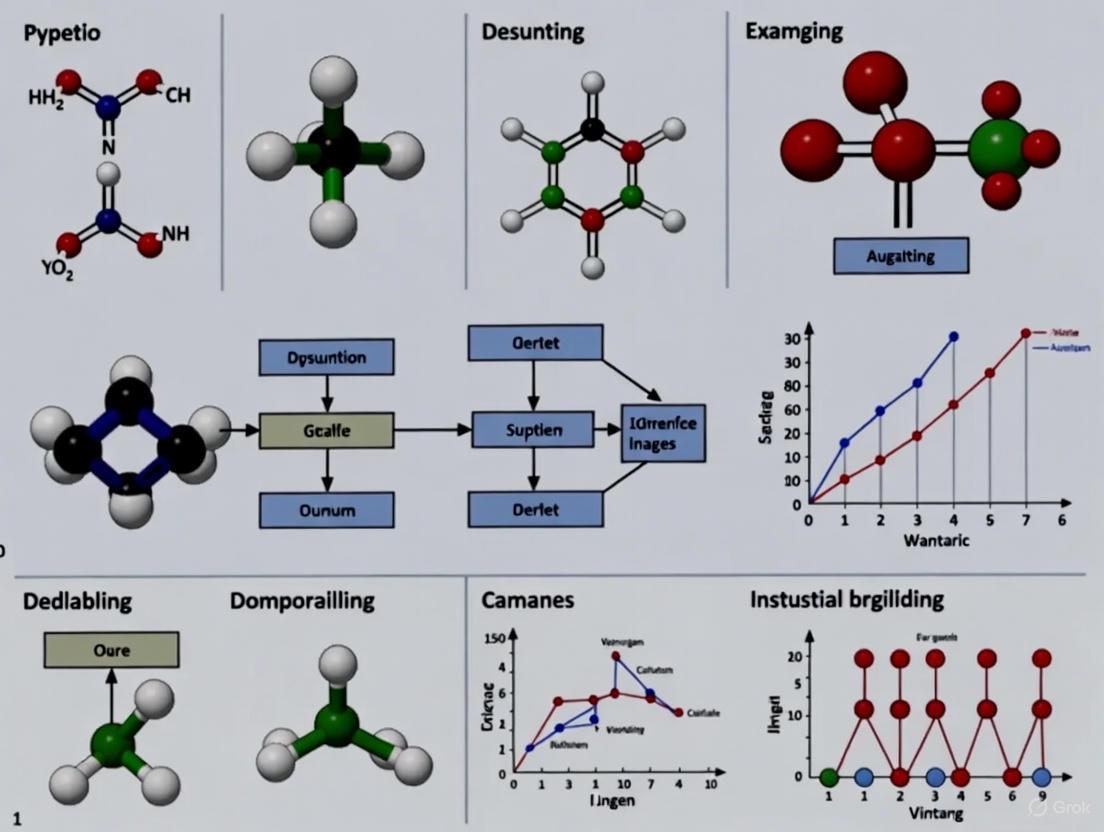

The following diagram illustrates the conceptual difference between these error types and their impact on data:

Systematic errors can originate from multiple aspects of the research process [1] [5] [3]:

- Faulty Instrumentation: Using miscalibrated, poorly maintained, or inherently inaccurate equipment. For instance, a balance that consistently adds 15 pounds to each measurement [3] or a thermometer with poor thermal contact that gives consistently low readings [3].

- Experimental Procedure Flaws: Imperfections in study design or execution, such as inadequate control of environmental conditions (e.g., temperature fluctuations [5]), sampling bias [1], or experimenter drift where researchers slowly depart from standardized procedures over time [1].

- Researcher-Induced Bias: This includes confirmation bias, where researchers may unconsciously favor data that aligns with their hypotheses [5], and response bias, where research materials like questionnaires lead participants to provide inauthentic responses [1].

Table: Sources and Examples of Systematic Error in Research

| Source Category | Specific Examples | Impact on Measurements |
|---|---|---|
| Instrumentation [5] [3] | Miscalibrated scales, instrument drift, using insensitive equipment | Consistent directional shift (e.g., always reading high or low) |
| Experimental Procedure [1] [5] | Sampling bias, inadequate environmental control, experimenter fatigue | Reduces accuracy and generalizability of findings |
| Researcher Influence [1] [5] | Confirmation bias, experimenter bias in unblinded studies | Skews results toward expected or desired outcomes |

Quantifying Systematic Error: Experimental Evidence

Case Study: Medication Errors in Clinical Practice

A prospective study on intravenous acetylcysteine administration for acetaminophen overdose provides compelling quantitative evidence of systematic errors in clinical settings [6]. Researchers analyzed 184 infusion bags across four medical centers and found significant deviations from prescribed dosages [6].

Table: Analysis of Medication Dosage Deviations in Clinical Practice [6]

| Deviation from Anticipated Dose | Number of Bags | Percentage of Total |
|---|---|---|
| Within ±10% | 68 | 37% |
| Within ±20% | 112 | 61% |
| >50% deviation | 17 | 9% |
| Systematic calculation errors | 3 patients (all bags) | ~5% of cases |

The study revealed that approximately 5% of patients received systematically incorrect dosages across all of their infusion bags, with errors of 50% or more [6]. Because the same directional error recurred in every bag prepared for these patients, it points to systematic miscalculation rather than random variation. Additionally, about 9% of bags showed major errors in the drawing-up process, further demonstrating how dosing accuracy can be compromised, particularly with complex dosing protocols [6].

Detection Methods and Statistical Protocols

Systematic error detection requires specialized methodologies beyond routine data analysis. Several established protocols provide frameworks for identification:

Westgard Rules for Quality Control

In laboratory medicine, the Westgard rules use statistical process control to identify systematic errors [2]. Key rules for detecting bias include:

- 2₂S Rule: Indicates bias if two consecutive control values fall between 2 and 3 standard deviations on the same side of the mean [2].

- 4₁S Rule: Suggests bias if four consecutive control values fall on the same side of the mean and are at least one standard deviation away [2].

- 10ₓ Rule: Detects bias when ten consecutive control values fall on the same side of the mean [2].
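The three rules can be sketched in Python as checks on control results expressed in standard-deviation units (z-scores). This is a simplified illustration, not a validated QC implementation: the function names and the control series are hypothetical, and the 2₂S check uses the common "beyond 2 SD" formulation, which also catches values beyond 3 SD.

```python
from typing import Dict, List, Sequence


def z_scores(values: Sequence[float], mean: float, sd: float) -> List[float]:
    """Express control results in SD units relative to the established mean."""
    return [(v - mean) / sd for v in values]


def rule_2_2s(z: Sequence[float]) -> bool:
    """2-2s: two consecutive controls beyond 2 SD on the same side of the mean."""
    return any(
        (z[i] > 2 and z[i + 1] > 2) or (z[i] < -2 and z[i + 1] < -2)
        for i in range(len(z) - 1)
    )


def rule_4_1s(z: Sequence[float]) -> bool:
    """4-1s: four consecutive controls beyond 1 SD on the same side of the mean."""
    return any(
        all(v > 1 for v in z[i:i + 4]) or all(v < -1 for v in z[i:i + 4])
        for i in range(len(z) - 3)
    )


def rule_10x(z: Sequence[float]) -> bool:
    """10-x: ten consecutive controls on the same side of the mean."""
    return any(
        all(v > 0 for v in z[i:i + 10]) or all(v < 0 for v in z[i:i + 10])
        for i in range(len(z) - 9)
    )


def evaluate(values: Sequence[float], mean: float, sd: float) -> Dict[str, bool]:
    """Apply the three bias-detecting rules to a run of control results."""
    z = z_scores(values, mean, sd)
    return {"2_2s": rule_2_2s(z), "4_1s": rule_4_1s(z), "10_x": rule_10x(z)}


# Hypothetical control series (established mean 100, SD 2) with an upward shift.
control_results = [100.5, 99.0, 101.0, 102.5, 102.8, 102.4, 103.0, 101.2, 100.8, 101.5, 100.3]
print(evaluate(control_results, mean=100.0, sd=2.0))
# {'2_2s': False, '4_1s': True, '10_x': False}
```

Here four consecutive results sit more than 1 SD above the mean, so only the 4₁S rule fires.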

Method Comparison Approach

This technique involves measuring certified reference materials with known values to identify systematic error [2]. The measured values are compared against the reference values using regression analysis, which quantifies constant bias (indicated by a non-zero y-intercept) and proportional bias (indicated by a slope different from 1) [2]. The relationship is expressed as:

$$\text{Observed Value} = a + b \times \text{Expected Value}$$

where the intercept a is the constant bias and a slope b ≠ 1 indicates proportional bias.
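A minimal sketch of the regression step, assuming the certified reference values are effectively error-free so that ordinary least squares (OLS) is appropriate; when both methods carry measurement error, Deming or Passing-Bablok regression is commonly used instead. The data are hypothetical and noise-free, so the fit recovers the built-in intercept and slope.

```python
from typing import Sequence, Tuple


def ols_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Fit y = a + b*x by ordinary least squares; return (a, b)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("reference values must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


# Hypothetical certified values and results from a method that adds a
# constant bias of 2 units and reads 5% high.
certified = [10.0, 50.0, 100.0, 200.0, 400.0]
observed = [2.0 + 1.05 * c for c in certified]

a, b = ols_fit(certified, observed)
print(f"constant bias (intercept a): {a:.2f}")
print(f"slope b: {b:.3f} -> proportional bias of {100 * (b - 1):.1f}%")
```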

Systematic Visual Analysis Protocols

For single-case research designs, systematic protocols have been developed to guide visual analysis of graphed data, operationalizing the process of identifying systematic patterns across experimental phases [7]. These protocols help researchers objectively evaluate changes in level, trend, and variability that might indicate systematic measurement errors [7].

The following workflow diagram illustrates a generalized approach to systematic error detection:

Research Reagent Solutions and Materials

Table: Essential Materials for Systematic Error Management in Laboratory Research

| Tool/Reagent | Primary Function | Role in Error Reduction |
|---|---|---|
| Certified Reference Materials [2] | Provide known, standardized quantities of analytes | Enable calibration and method comparison to identify instrumental bias |
| Control Samples [2] | Stable materials with predetermined characteristics | Monitor analytical performance over time using quality control processes |
| Electronic Lab Notebooks (ELN) [5] | Digital platform for structured data entry and management | Reduce transcriptional errors and automate calibration tracking |
| Automated Liquid Handling Systems [5] | Robotic equipment for precise specimen manipulation | Minimize human variation in sample preparation and measurement |
| Calibration Management Software [5] | Tools to track equipment status and calibration schedules | Ensure instruments remain properly calibrated and maintained |

Mitigation Strategies and Best Practices

Several evidence-based approaches can effectively reduce systematic errors in research:

- Triangulation: Using multiple techniques or instruments to measure the same variable [1] [3]. When findings converge across different methods, confidence in measurement accuracy increases, while discrepancies may indicate systematic error in one approach [1].

- Regular Calibration: Frequently comparing instrument readings with known standards and applying correction factors when systematic errors are identified [1] [5] [3]. This is particularly important for detecting and correcting offset and scale factor errors [3].

- Randomization: Using probability sampling methods and random assignment to treatment conditions helps ensure that samples don't systematically differ from the population, balancing participant characteristics across groups [1].

- Blinding (Masking): Concealing condition assignments from participants and researchers to prevent experimenter bias and demand characteristics from systematically influencing measurements [1] [5].

- Automation: Implementing laboratory information management systems and robotic equipment to perform repetitive tasks, reducing opportunities for human transcriptional error and protocol deviations [5].
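Random assignment, as described in the Randomization item above, can be sketched as a seeded shuffle followed by round-robin allocation. This is a simple, unstratified scheme using hypothetical participant IDs and arm names; clinical trials typically rely on block or stratified randomization from validated systems, which this sketch does not attempt to reproduce.

```python
import random
from typing import Dict, List, Sequence


def randomize(
    participants: Sequence[str], arms: Sequence[str], seed: int
) -> Dict[str, List[str]]:
    """Shuffle participants with a seeded RNG, then deal them into arms in turn."""
    rng = random.Random(seed)  # seeding makes the allocation reproducible and auditable
    shuffled = list(participants)
    rng.shuffle(shuffled)
    groups: Dict[str, List[str]] = {arm: [] for arm in arms}
    for i, pid in enumerate(shuffled):
        groups[arms[i % len(arms)]].append(pid)
    return groups


participants = [f"P{i:03d}" for i in range(1, 21)]  # hypothetical IDs
allocation = randomize(participants, ["treatment", "control"], seed=2024)
for arm, members in allocation.items():
    print(arm, len(members), members[:3])
```

Because group membership depends only on the shuffle, no participant characteristic can systematically steer people into one arm.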

The following diagram illustrates the relationship between key mitigation strategies and the types of systematic errors they address:

Systematic error represents a fundamental challenge in scientific measurement, characterized by its consistent directional bias that compromises data accuracy and can lead to invalid conclusions [1] [3] [2]. Unlike random error, which can be reduced through repeated measurements and averaging, systematic error requires specific detection methodologies such as method comparison, statistical quality control rules, and triangulation approaches [1] [2].

The impact of undetected systematic error is particularly significant in fields like drug development and laboratory medicine, where measurement inaccuracy can directly affect diagnostic outcomes and treatment efficacy [6] [5] [2]. By implementing robust detection protocols, maintaining rigorous calibration schedules, utilizing appropriate reference materials, and incorporating methodological safeguards like randomization and blinding, researchers can significantly reduce the influence of systematic error and enhance the validity of their scientific findings [1] [5] [2].

In scientific research, particularly in fields like drug development, measurement error is the difference between an observed value and the true value of a quantity [1]. Understanding and controlling for error is not merely a procedural formality; it is foundational to producing valid, reliable, and reproducible science. These errors are broadly categorized into two distinct types: random error and systematic error [1] [8]. While both are ever-present, they influence data in fundamentally different ways. Systematic error, often termed bias, is a consistent, repeatable inaccuracy that skews all measurements in a specific direction [1] [3] [9]. This persistent deviation is a primary driver of inaccuracy in research findings. In contrast, random error causes unpredictable fluctuations in measurements, leading to imprecision but not necessarily inaccuracy [1] [10]. The core distinction between these errors is best visualized through the concepts of accuracy and precision, which form the bedrock of data quality assessment in any scientific endeavor.

Defining Accuracy and Precision

In a scientific context, accuracy and precision have specific and distinct meanings. Accuracy refers to how close a measurement is to the true or accepted reference value [1] [9] [8]. It is a measure of correctness. Precision, on the other hand, refers to how close repeated measurements of the same quantity are to each other, regardless of whether they are correct or not [1] [9] [8]. It is a measure of reproducibility and consistency.

The relationship between these concepts and the types of error is direct and critical. Systematic error primarily affects accuracy, as it consistently pushes measurements away from the true value [1] [8]. Random error primarily affects precision, as it introduces scatter and variability between repeated measurements [1] [8]. The classic dartboard analogy, as referenced in multiple sources, effectively illustrates these relationships [1] [8].

The following diagram illustrates the core concepts of accuracy and precision in relation to systematic and random error.

Diagram 1: The relationship between accuracy, precision, and measurement error. High accuracy indicates closeness to the true value, while high precision indicates low scatter. Systematic error reduces accuracy, while random error reduces precision [1] [8].
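The dartboard picture translates directly into two summary statistics: the bias (mean of repeated readings minus the true value) tracks accuracy, and the standard deviation tracks precision. The sketch below uses four hypothetical sets of readings against an assumed true value of 50.0.

```python
from statistics import mean, stdev

TRUE_VALUE = 50.0  # assumed reference value for this illustration

# Hypothetical repeated readings, one set per dartboard pattern.
scenarios = {
    "accurate and precise": [50.1, 49.9, 50.0, 50.2, 49.8],
    "precise but inaccurate": [52.1, 51.9, 52.0, 52.2, 51.8],
    "accurate but imprecise": [48.0, 52.5, 49.0, 51.5, 49.0],
    "inaccurate and imprecise": [50.0, 55.5, 51.0, 54.5, 49.0],
}

for name, readings in scenarios.items():
    bias = mean(readings) - TRUE_VALUE  # systematic error -> accuracy
    spread = stdev(readings)            # random error -> precision
    print(f"{name:26s} bias={bias:+.2f}  SD={spread:.2f}")
```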

An In-Depth Examination of Systematic Error

Definition and Key Characteristics

Systematic error is defined as a consistent or proportional difference between the observed values and the true values of something [1]. Unlike random errors, which vary unpredictably, systematic errors are repeatable and deterministic. They skew measurements in a specific direction (either higher or lower) and by a predictable amount [1] [3]. Because of this consistency, repeating measurements and averaging the results will not eliminate the error: the average converges on the biased value rather than on the true value [1] [11]. For this reason, systematic error is often considered more problematic than random error in research, as it can lead to false positive or false negative conclusions (Type I or II errors) about the relationship between variables [1].
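A small simulation makes the point: averaging more readings shrinks the random scatter of the mean but leaves the systematic offset untouched. The bias of 1.5 units, the noise SD of 3 units and the seed are hypothetical.

```python
import random
from statistics import mean

TRUE_VALUE = 100.0
BIAS = 1.5      # hypothetical systematic error (same sign every time)
NOISE_SD = 3.0  # hypothetical random error


def measure(rng: random.Random) -> float:
    """One reading = truth + constant bias + zero-mean Gaussian noise."""
    return TRUE_VALUE + BIAS + rng.gauss(0.0, NOISE_SD)


rng = random.Random(42)
for n in (10, 100, 10_000):
    avg = mean(measure(rng) for _ in range(n))
    print(f"n={n:>6}: mean={avg:7.2f}, error vs truth={avg - TRUE_VALUE:+.2f}")
```

As n grows, the printed error settles near +1.5 rather than near zero.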

Types of Systematic Error

Systematic errors can be quantified into two primary types, which are illustrated in the diagram below.

Diagram 2: The two main types of quantifiable systematic error. Offset error shifts all measurements by a fixed amount, while scale factor error shifts them proportionally [1] [12] [3].

- Offset Error (or Zero-Setting Error): This occurs when an instrument does not read zero when the quantity to be measured is zero [12] [3]. It shifts all measurements by a fixed amount in the same direction. For example, a scale that always reads 0.5 grams with nothing on it has an offset error. This is also known as an additive error [1].

- Scale Factor Error (or Multiplier Error): This occurs when measurements consistently differ from the true value by a proportional amount (e.g., by 10%) [1] [12]. For instance, if a scale consistently reports weights 5% higher than the actual mass, the absolute error increases as the mass being measured increases.

Systematic errors can infiltrate research at various stages, from design to data collection and analysis. The following table summarizes key sources and their potential impact.

Table 1: Common Sources of Systematic Error in Scientific Research

| Source Category | Specific Examples | Impact on Data |
|---|---|---|
| Faulty Instrumentation [1] [3] | Miscalibrated scale; stretched measuring tape; instrument with an incorrect zero point [1] [12] [3]. | Consistent deviation in a specific direction (e.g., all weights are 1g too heavy). |
| Improper Instrument Use [12] [3] | Poor thermal contact between a thermometer and substance [12]; reading a graduated cylinder from the wrong angle [8]. | Measurements do not reflect the true physical quantity being measured. |
| Research Design & Materials [1] | Leading questions in surveys that prompt inauthentic responses (response bias) [1]; sampling bias where some population members are more likely to be selected than others [1]. | Data is skewed and not representative of the true population or phenomenon, reducing generalizability. |
| Experimental Procedures | Experimenter drift, where observers slowly depart from standardized procedures over time [1]; failure to control for external variables. | Introduces a consistent, non-random shift in how data is recorded or generated. |
| Data Analysis Methods [3] | Use of an incorrect theoretical model for data processing [10]; violation of statistical model assumptions (e.g., linearity, normality) [13]. | Conclusions are biased due to flawed underlying assumptions in the analysis. |

The Critical Impact of Systematic Error on Data Integrity

The pervasive nature of systematic error poses a significant threat to the integrity of scientific data. Its effects extend far beyond simple inaccuracies in individual measurements.

- Distorted Findings and Invalid Conclusions: Systematic error introduces a consistent bias that can lead researchers to erroneously attribute observed effects to specific causes when, in fact, the effects are driven by the error itself [13]. This can result in both false positive (Type I) and false negative (Type II) conclusions regarding the relationships between variables [1].

- Reduced Generalizability: When systematic error, such as selection bias, is present in the data collection process, the results may not be applicable or accurate for broader populations or contexts [1] [13]. This fundamentally undermines the external validity of the study.

- Compromised Decision-Making: In fields like drug development and climate science, decisions with far-reaching impacts are based on model outputs and experimental data. Persistent systematic errors, such as the "double ITCZ" (Intertropical Convergence Zone) bias in climate models, can pervasively degrade forecast skill and, in turn, affect policy formulation [14].

- Inefficient Resource Allocation: If data analysis leads to incorrect conclusions, resources may be allocated based on flawed insights, leading to wasted time, funding, and scientific effort [13].

- Erosion of Trust: Inaccurate or biased data analysis can damage the credibility of the results and the researchers or organizations responsible, which has long-term reputational consequences [13].

Methodologies for Identifying and Mitigating Systematic Error

Experimental Protocols for Detection and Control

Given that statistical analysis of a data set alone cannot eliminate systematic error, proactive experimental design is paramount [11]. The following workflow outlines a strategic approach to managing systematic error.

Diagram 3: A comprehensive experimental workflow for the systematic management of error, from planning through execution to analysis.

Detailed Mitigation Methodologies

- Calibration Protocol: Calibrating an instrument involves comparing its readings with the true value of a known, standard quantity [1] [11]. This should be performed before starting an experiment and at regular intervals thereafter.

- Procedure: Establish a calibration curve using at least two points, ideally at the lower and upper ends of the expected measurement range [11]. For example, to calibrate a scale, first set it to zero with nothing on it, then measure a known weight (e.g., a standard weight from a doctor's office). If the scale is linear, these two points are sufficient to define a correction factor for all measurements [11].

- Triangulation Methodology: Triangulation involves using multiple independent techniques or methods to measure the same variable [1]. This helps ensure that the results are not dependent on the specific shortcomings of a single instrument.

- Procedure: If measuring a complex construct like stress, employ survey responses, physiological recordings, and reaction times as concurrent indicators. The convergence of results from these disparate methods strengthens the validity of the findings [1].

- Randomization and Masking: These techniques are critical for mitigating biases introduced by the researcher or participant.

- Randomization: Use probability sampling methods to ensure the sample is representative of the population. In experiments, use random assignment to place participants into different treatment conditions, which helps balance participant characteristics across groups [1].

- Masking (Blinding): Wherever possible, hide the condition assignment from both participants and researchers. This controls for experimenter expectancies and participant demand characteristics, which can systematically influence behavior or data recording [1].
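The two-point calibration procedure described above can be sketched as a linear correction. The readings are hypothetical, and instead of physically zeroing the scale, the sketch records the empty-pan reading as the low calibration point; as the text notes, two points are only sufficient if the instrument responds linearly.

```python
from typing import Tuple


def two_point_calibration(
    raw_low: float, ref_low: float, raw_high: float, ref_high: float
) -> Tuple[float, float]:
    """Return (slope, intercept) mapping raw readings onto reference values."""
    if raw_high == raw_low:
        raise ValueError("the two calibration readings must differ")
    slope = (ref_high - ref_low) / (raw_high - raw_low)
    intercept = ref_low - slope * raw_low
    return slope, intercept


def correct(raw: float, slope: float, intercept: float) -> float:
    """Apply the linear correction to a raw instrument reading."""
    return slope * raw + intercept


# Hypothetical scale: reads 0.4 g when empty and 1000.9 g for a 1000 g reference weight.
slope, intercept = two_point_calibration(0.4, 0.0, 1000.9, 1000.0)
print(f"slope={slope:.6f}, intercept={intercept:.4f} g")
print(f"raw 500.65 g -> corrected {correct(500.65, slope, intercept):.2f} g")
```

The fitted slope corrects the scale factor error and the intercept corrects the offset, so both forms of systematic error are handled by the same two measurements.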

The Scientist's Toolkit: Key Reagents and Materials for Error Control

Table 2: Essential Research Materials for Managing Systematic Error

| Tool/Reagent | Primary Function in Error Control | Application Example |
|---|---|---|
| Certified Reference Materials (CRMs) | To provide a known, standardized quantity with a certified value for instrument calibration [9]. | Calibrating an analytical balance before weighing experimental compounds in drug formulation. |
| Standard Operating Procedures (SOPs) | To document exact procedures, minimizing variation and experimenter drift introduced by ad-lib techniques [15]. | Ensuring all technicians prepare a buffer solution identically to avoid pH variations. |
| Data Logging Systems | To automate measurement collection, reducing random and systematic errors associated with human fatigue or inconsistent timing [8]. | Continuously monitoring the temperature of a cell culture incubator instead of manual checks. |
| Placebo Controls | To account for the placebo effect and enable effective blinding in clinical trials, isolating the true effect of the drug [15]. | In a double-blind drug trial, the control group receives an identical-looking pill without the active ingredient. |

Systematic error represents a fundamental challenge to scientific accuracy. Its consistent, directional nature systematically distorts data away from the truth, leading to invalid conclusions, reduced generalizability, and ultimately, a compromise of scientific integrity. Unlike random error, it cannot be reduced by mere repetition. The path to robust science requires a proactive and vigilant approach: a deep understanding of the sources of error, a commitment to rigorous methodologies like calibration and triangulation, and a culture that prioritizes the identification and elimination of bias at every stage of research. For researchers and drug development professionals, mastering the control of systematic error is not just a technical skill—it is an essential component of producing reliable, trustworthy, and impactful science.

In scientific research, measurement error is the difference between an observed value and the true value of something [1]. These errors are broadly categorized into two main types: random error, which arises from unpredictable statistical fluctuations, and systematic error, which results from reproducible inaccuracies that are consistently in the same direction [1] [4]. While random error affects precision and can be reduced by taking repeated measurements, systematic error (or bias) affects accuracy by skewing results away from the true value in a specific, predictable direction [1] [12].

Systematic errors are generally more problematic in research because they cannot be reduced by simply increasing the number of observations and can lead to false conclusions about the relationship between variables being studied [1]. These errors can originate from multiple sources in the laboratory setting, primarily falling into three categories: instrumental, procedural, and environmental biases. Understanding, identifying, and mitigating these biases is crucial for ensuring the validity and reproducibility of scientific findings, particularly in high-stakes fields like drug development where erroneous conclusions can have significant consequences.

Defining Systematic Error and Its Impact on Research

Systematic error refers to consistent or proportional differences between observed values and the true values of what is being measured [1]. Unlike random errors, which vary unpredictably, systematic errors follow a consistent pattern and introduce bias into measurements. This bias can manifest as either a constant shift (offset error) or a proportional difference (scale factor error) across all measurements [1] [12].

In the context of laboratory research, systematic errors can be particularly insidious because they may go undetected while consistently skewing results in one direction. This can lead to Type I or II errors in statistical conclusions, where researchers either falsely identify an effect that doesn't exist or fail to detect a genuine effect [1]. The impact extends beyond individual studies, as evidenced by research showing that between 24% and 30% of laboratory errors influence patient care, with patient harm occurring in 3% to 12% of cases [16]. Furthermore, a survey of ecology scientists revealed that most researchers believe biases have a medium to high impact on science in general, but they consistently rate the impact of biases on their own studies as significantly lower—demonstrating a potentially dangerous blind spot in scientific self-assessment [17].

Table 1: Comparison of Systematic and Random Errors

| Characteristic | Systematic Error | Random Error |
|---|---|---|
| Definition | Consistent, reproducible inaccuracies in the same direction [1] | Statistical fluctuations in either direction [4] |
| Effect on Results | Reduces accuracy, skews measurements away from true value [1] | Reduces precision, creates variability around true value [1] |
| Sources | Instrument limitations, flawed methods, environmental factors [18] | Unknown or unpredictable changes in measurement [16] |
| Detection | Difficult to detect statistically, requires comparison with standards [4] | Revealed through statistical analysis of repeated measurements [4] |
| Reduction Methods | Calibration, improved procedures, instrument maintenance [16] [1] | Large sample sizes, multiple measurements, averaging [16] [1] |
| Elimination | Can be corrected once identified and quantified [4] | Cannot be eliminated, only reduced [4] |

Categorizing Common Laboratory Biases

Instrumental Biases

Instrumental biases arise from limitations, malfunctions, or improper use of laboratory equipment and reagents. These systematic errors can affect all measurements conducted with the affected instruments until the issues are identified and corrected.

Types of Instrumental Biases:

Calibration Errors: Occur when instruments are not properly calibrated against known standards, resulting in consistent offset or scale factor errors [12] [4]. For example, a balance that always reads 0.5 grams over the actual mass introduces a constant offset error.

Instrument Resolution Limitations: All instruments have finite precision that limits their ability to resolve small measurement differences [4]. A meter stick with millimeter divisions cannot reliably distinguish differences smaller than about 0.5 mm.

Reagent Errors: Caused by impure reagents, improper storage conditions, or contamination that consistently affects test results [18]. For instance, using degraded standards in spectrophotometric assays will systematically alter calculated concentrations.

Instrument Drift: Many electronic instruments exhibit gradual changes in readings over time due to component aging or environmental effects [4].

Zero Setting Error: Occurs when an instrument does not read zero when the quantity being measured is zero [12]. Failure to properly zero a device before measurement introduces a constant error that disproportionately affects smaller measured values [4].
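The point that a zero-setting error weighs most heavily on small quantities is easy to check numerically. The 0.5 g offset below is a hypothetical value matching the balance example above.

```python
ZERO_OFFSET_G = 0.5  # hypothetical: balance reads 0.5 g high because it was not zeroed


def relative_error_pct(true_mass_g: float, offset_g: float = ZERO_OFFSET_G) -> float:
    """Percentage error that a constant zero offset adds to one reading."""
    reading = true_mass_g + offset_g
    return 100.0 * (reading - true_mass_g) / true_mass_g


for mass in (1.0, 10.0, 100.0, 1000.0):
    print(f"{mass:7.1f} g sample: {relative_error_pct(mass):6.2f}% too high")
```

The same 0.5 g shift is a 50% error on a 1 g sample but only 0.05% on a 1000 g sample.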

Table 2: Common Instrumental Biases and Their Characteristics

| Bias Type | Main Features | Examples | Impact on Data |
|---|---|---|---|
| Calibration Error | Consistent offset or proportional error [12] | Miscalibrated scale, pH meter reading 0.5 units off [19] [18] | All measurements shifted consistently from true value [12] |
| Reagent Error | Affected by purity, concentration, storage [18] | Impure chemical standards, degraded reagents, contaminated water [18] | Systematic alteration of reaction outcomes or measurements [18] |
| Instrument Drift | Gradual change in readings over time [4] | Electronic components aging, temperature effects on sensors [4] | Progressive deviation from true values during extended experiments [4] |
| Zero Offset | Non-zero reading when measured quantity is zero [12] | Balance not tared properly, electrical meter with ground loop [4] | Constant error added to all measurements [12] |
| Resolution Limit | Finite smallest detectable difference [4] | Analog scale parallax, digital instrument least significant digit [4] | Limits ability to detect small effects or differences [4] |

Procedural Biases

Procedural biases stem from flaws in experimental design, execution, or analytical methods. These biases are often method-specific and can be challenging to identify without careful validation studies.

Types of Procedural Biases:

Method Errors: Intrinsic to the specific analytical technique being used [18]. Examples include incomplete precipitation in gravimetric analysis, incomplete reactions in titrations, or side reactions that interfere with endpoint detection [18].

Operator Bias: Occurs when researchers unconsciously influence results through subjective interpretations, such as discriminating color changes during titrations or reading measurement scales from different angles [18]. Studies show that confirmation bias (the tendency to search for, interpret, and favor information that confirms pre-existing beliefs) significantly affects research outcomes, with non-blind methods often resulting in overestimation of effects [17].

Incomplete Definition: Results from ambiguous measurement protocols that allow for different interpretations [4]. For example, if two people measure the length of the same string with different tension, they will obtain different results.

Lag Time and Hysteresis: Occurs when measurements are taken before instruments reach equilibrium or when instruments have a "memory" effect where previous readings influence subsequent ones [4].

Environmental Biases

Environmental biases result from external conditions in the laboratory setting that systematically affect measurement outcomes. These factors are sometimes overlooked during experimental design but can significantly impact result validity.

Types of Environmental Biases:

Thermal Fluctuations: Temperature changes can affect instrument performance, reaction rates, and material properties [4].

Electronic Noise: Electrical interference from nearby equipment or power supply fluctuations can introduce noise into electronic measurements [12] [4].

Vibrations and Drafts: Mechanical disturbances and air currents can affect sensitive instruments, particularly those requiring precise alignment or stable platforms [4]. For example, a windy environment affecting a balance reading during mass measurement represents an environmental error [19].

Electromagnetic Interference: External magnetic fields can influence instruments with magnetic components or affect measurements involving charged particles [4].

Contamination: Airborne particles, chemical vapors, or biological contaminants in the laboratory environment can systematically alter samples or interfere with analyses [18].

Detection and Quantification Methodologies

Comparison of Methods Experiment

A critical approach for assessing systematic errors involves the comparison of methods experiment, where patient specimens or standard samples are analyzed by both a test method and a reference method [20]. The systematic differences observed at critical decision concentrations provide estimates of inaccuracy.

Experimental Protocol:

Sample Selection: Analyze a minimum of 40 different patient specimens selected to cover the entire working range of the method [20]. Specimens should represent the spectrum of diseases or conditions expected in routine application.

Analysis Schedule: Conduct analyses over multiple days (minimum of 5 days recommended) to minimize systematic errors that might occur in a single run [20].

Measurement Approach: Analyze each specimen by both test and comparative methods within a short time frame (typically within two hours) to ensure specimen stability [20]. Duplicate measurements are preferred to identify potential outliers or mistakes.

Data Analysis: Graph the comparison results using difference plots (test result minus reference result versus reference result) or comparison plots (test result versus reference result) to visually identify systematic patterns [20].

Statistical Calculations: For data covering a wide analytical range, use linear regression to estimate the slope (proportional error) and y-intercept (constant error) [20]. The systematic error (SE) at a critical decision concentration (X_c) is calculated as:

$$Y_c = a + bX_c$$

$$SE = Y_c - X_c$$

where a is the y-intercept and b is the slope of the regression line [20].

For data with a narrow analytical range, calculate the average difference (bias) between methods using paired t-test statistics [20].
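The regression and average-bias calculations above can be sketched in a few lines of Python. This is a minimal sketch, assuming ordinary least-squares regression of test-method results on comparative-method results (reasonable when the data span a wide range; Deming or Passing-Bablok regression are common alternatives when the comparative method has non-negligible error). The function names and the paired values are hypothetical, chosen so that the test method has a constant error of 2 units and a proportional error of 5%.

```python
from math import sqrt
from statistics import mean, stdev


def ols(x, y):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sxy / sxx          # slope: proportional error when b != 1
    a = my - b * mx        # intercept: constant error when a != 0
    return a, b


def systematic_error_at(xc, reference, test):
    """SE at a decision concentration Xc: Yc = a + b*Xc, SE = Yc - Xc."""
    a, b = ols(reference, test)
    yc = a + b * xc
    return yc - xc


def paired_bias(reference, test):
    """Average difference (bias), SD of differences, paired t statistic."""
    diffs = [t - r for r, t in zip(reference, test)]
    bias = mean(diffs)
    sd = stdev(diffs)
    t_stat = bias / (sd / sqrt(len(diffs)))
    return bias, sd, t_stat


# Hypothetical paired results: comparative method vs. test method.
ref_values = [50, 100, 150, 200, 250, 300]
test_values = [54.5, 107.0, 159.5, 212.0, 264.5, 317.0]

se_200 = systematic_error_at(200, ref_values, test_values)
bias, sd_diff, t_stat = paired_bias(ref_values, test_values)
```

With these data the sketch gives SE = 12 at X_c = 200 (2 + 0.05 × 200), while the single average bias is 10.75. The gap illustrates why one average difference is only a fair summary when the range is narrow: with a proportional error present, the average bias depends on where the specimens happen to fall.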

Quantitative Bias Analysis (QBA)

Quantitative Bias Analysis provides formal methods for estimating the potential direction and magnitude of systematic error operating on observed associations [21]. QBA methods include:

Simple Bias Analysis: Uses single parameter values to estimate the impact of a single source of systematic bias [21].

Multidimensional Bias Analysis: Uses multiple sets of bias parameters to account for uncertainty in parameter estimates [21].

Probabilistic Bias Analysis: Incorporates probability distributions around bias parameter estimates through simulation techniques [21].

These methods require specification of bias parameters, which are quantitative estimates of features of the bias, such as sensitivity and specificity for measurement error, participation rates for selection bias, or prevalence and strength of association for unmeasured confounding [21].
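As an illustration of simple bias analysis, the sketch below corrects a case-control 2×2 table for nondifferential misclassification of a binary exposure, using a single sensitivity (Se) and specificity (Sp) as the bias parameters. The counts and the parameter values (Se = 0.8, Sp = 0.9) are hypothetical. The back-calculation relies on the relation observed exposed = Se × true exposed + (1 - Sp) × true unexposed, solved for the true exposed count.

```python
def correct_exposed(observed_exposed, total, se, sp):
    """Back-calculate the true exposed count from a misclassified count.

    observed = se * true_exposed + (1 - sp) * (total - true_exposed)
    """
    denom = se + sp - 1
    if denom <= 0:
        raise ValueError("Se + Sp must exceed 1")
    corrected = (observed_exposed - (1 - sp) * total) / denom
    if not 0 < corrected < total:
        raise ValueError("bias parameters are incompatible with the data")
    return corrected


def bias_adjusted_or(a, b, c, d, se, sp):
    """Odds ratio after correcting cases (a exposed, b unexposed) and
    controls (c exposed, d unexposed) with the same Se and Sp."""
    cases, controls = a + b, c + d
    A = correct_exposed(a, cases, se, sp)
    C = correct_exposed(c, controls, se, sp)
    B, D = cases - A, controls - C
    return (A * D) / (B * C)


# Hypothetical observed table and bias parameters.
observed = dict(a=240, b=760, c=170, d=830)
crude_or = (240 * 830) / (760 * 170)
adjusted_or = bias_adjusted_or(**observed, se=0.8, sp=0.9)
```

Here the crude odds ratio of about 1.54 becomes 2.25 after correction, in line with the usual (though not guaranteed) tendency of nondifferential misclassification of a binary exposure to bias estimates toward the null.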

Replication and Calibration Approaches

Fundamental methods for detecting and quantifying systematic errors include:

Regular Calibration: Comparing instrument readings with the true values of known, standard quantities to identify and correct systematic offsets [1] [4]. This should be performed using certified reference materials traceable to national or international standards.

Triangulation: Using multiple techniques or instruments to measure the same quantity provides a means to identify systematic method-specific errors [1]. For example, measuring stress levels using survey responses, physiological recordings, and reaction times concurrently.

Blind Assessment: Implementing blinding procedures where researchers are unaware of sample identities, treatment conditions, or expected outcomes during data collection and analysis helps minimize confirmation biases [17]. Studies comparing blind and non-blind methods frequently show that non-blind approaches overestimate effects [17].

Mitigation Strategies and Best Practices

Instrumental Bias Mitigation

Preventive Maintenance and Calibration: Establish regular calibration schedules using traceable standards [16] [1]. Maintain detailed records of instrument performance and calibration history. For critical measurements, verify calibration before and after use.

Equipment Validation: Confirm that instruments meet manufacturer specifications and are appropriate for the intended measurements [4]. Verify resolution, accuracy, and linearity across the expected working range.

Environmental Control: Maintain stable laboratory conditions (temperature, humidity, vibration isolation) appropriate for sensitive measurements [4]. Implement monitoring systems to detect environmental fluctuations that could affect instruments.

Reagent Quality Control: Use high-purity reagents from reputable suppliers, implement proper storage conditions, and monitor reagent stability over time [18]. Establish expiration dates and discard outdated materials.

Procedural Bias Mitigation

Method Validation: Thoroughly validate new methods before implementation, including assessment of accuracy, precision, linearity, and specificity [20]. Compare with reference methods when available.

Standardization: Develop and implement detailed, unambiguous standard operating procedures (SOPs) for all critical processes [4]. Provide comprehensive training to ensure consistent application across all personnel.

Experimental Controls: Incorporate appropriate positive and negative controls in experimental designs to detect systematic procedural errors [4]. Use randomization in sample processing order to distribute potential time-dependent biases.

Blinding: Implement blinding procedures where feasible to minimize observer bias [1] [17]. This may include blinding researchers to treatment groups during data collection, analysis, or outcome assessment.
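The randomized processing order recommended under Experimental Controls can be generated reproducibly. This is a minimal sketch with hypothetical sample identifiers; recording the seed lets the order be audited and regenerated later.

```python
import random


def randomized_run_order(sample_ids, seed):
    """Return a shuffled copy of sample_ids using a seeded generator."""
    order = list(sample_ids)
    random.Random(seed).shuffle(order)   # independent, reproducible RNG
    return order


# Hypothetical treated (T) and control (C) samples, listed by group.
samples = [f"T{i}" for i in range(1, 6)] + [f"C{i}" for i in range(1, 6)]
run_order = randomized_run_order(samples, seed=20240501)
```

Running the samples in their listed order (all T, then all C) would confound any instrument drift with treatment group; the shuffled order spreads that drift across both groups.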

Environmental Bias Mitigation

Laboratory Design: Implement appropriate engineering controls such as vibration isolation tables, electromagnetic shielding, clean benches, and stable power supplies for sensitive equipment [4].

Environmental Monitoring: Continuously monitor and record critical environmental parameters (temperature, humidity, particulate levels) in laboratory areas where sensitive measurements are performed [4].

Temporal Replication: Conduct critical experiments or measurements at different times, on different days, or by different operators to identify time-dependent or operator-dependent environmental effects [20].

Table 3: Mitigation Strategies for Common Laboratory Biases

| Bias Category | Preventive Strategies | Detection Methods | Correction Approaches |
|---|---|---|---|
| Instrumental | Regular calibration, preventive maintenance, equipment validation [16] [4] | Comparison with reference standards, control materials [20] | Calibration adjustments, correction factors [4] |
| Procedural | Method validation, standardized protocols, comprehensive training [20] | Method comparison, replication studies, control samples [20] | Protocol refinement, personnel retraining [4] |
| Environmental | Laboratory controls, environmental monitoring, equipment shielding [4] | Environmental parameter tracking, temporal replication [4] | Environmental stabilization, measurement timing optimization [4] |
| Human/Operator | Blind protocols, automation, clear documentation [16] [17] | Inter-operator comparisons, blind verification [17] | Training, procedural adjustments, automation [16] |

Table 4: Research Reagent Solutions for Bias Control

| Tool/Reagent | Function | Application Examples |
|---|---|---|
| Certified Reference Materials | Provide traceable standards for instrument calibration and method validation [20] | Balance calibration weights, pH standard solutions, purified analyte standards [20] |
| Control Samples | Monitor assay performance and detect systematic drift over time [20] | Known concentration quality control materials, positive/negative controls in assays [20] |
| High-Purity Reagents | Minimize interference and contamination-related biases [18] | HPLC-grade solvents, molecular biology-grade water, analytical standard compounds [18] |
| Stable Storage Systems | Maintain reagent integrity and prevent degradation-related biases [18] | Temperature-controlled storage, light-sensitive containers, moisture-free environments [18] |
| Automation Systems | Reduce human error and increase procedural consistency [16] | Automated liquid handlers, robotic sample processors, integrated workflow systems [16] |

Instrumental, procedural, and environmental biases represent significant threats to research validity and reproducibility across scientific disciplines. These systematic errors can originate from multiple sources throughout the experimental process, from initial study design to final data interpretation. Unlike random errors, which can be reduced through replication and statistical means, systematic errors require specific identification, quantification, and correction strategies tailored to their sources.

Effective management of laboratory biases requires a multifaceted approach including proper instrument selection and maintenance, rigorous method validation, comprehensive personnel training, controlled laboratory environments, and implementation of bias-detection methodologies such as method comparison studies and quantitative bias analysis. Furthermore, acknowledging the pervasive nature of cognitive biases and implementing countermeasures such as blinding and randomization is essential for objective research outcomes.

As research methodologies become increasingly sophisticated and the demand for reproducible findings grows, systematic attention to identifying and mitigating laboratory biases will remain fundamental to scientific progress, particularly in fields like drug development where research quality directly impacts human health.

In scientific research, the integrity of data is paramount. Systematic error, or bias, represents a fundamental threat to this integrity, referring to a consistent, predictable deviation from the true value that affects all measurements in the same way [9]. Unlike random errors, which scatter data points unpredictably and can be reduced through repeated trials, systematic errors cannot be mitigated by mere replication and often remain undetected by standard statistical analysis of the data itself [9]. These errors are cumulative; when a measurement depends on multiple variables, the total systematic error compounds, potentially leading to significantly skewed results and erroneous conclusions [9]. Understanding, identifying, and correcting for these biases is therefore a critical competency for researchers, scientists, and drug development professionals dedicated to producing valid and reliable evidence.

Defining Systematic Error in Scientific Research

Core Definition and Key Characteristics

A systematic error is a fixed or law-like deviation that is inherent in each and every measurement performed under the same conditions [9]. Its defining characteristic is its consistency; it skews measurements in a single direction, making them consistently higher or lower than the true value. This consistency makes it particularly insidious. For instance, if a balance is not zeroed before use, every reading will have the same small amount added to or subtracted from it [9]. This type of error cannot be detected by statistical examination of the readings alone, as it does not increase the scatter or variance of the data but instead shifts the entire dataset [9].
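The point that an offset shifts the data without increasing its scatter can be checked with a small simulation. This sketch assumes a hypothetical true mass of 10.000 g, a 0.050 g zero error, and normally distributed random error with an SD of 0.002 g; the same noise draws are used for both series so the comparison isolates the offset.

```python
import random
from statistics import mean, stdev

TRUE_MASS_G = 10.000   # hypothetical true value
OFFSET_G = 0.050       # hypothetical zero error of an un-zeroed balance
NOISE_SD_G = 0.002     # hypothetical random (precision) error

rng = random.Random(42)
noise = [rng.gauss(0.0, NOISE_SD_G) for _ in range(1000)]

zeroed = [TRUE_MASS_G + e for e in noise]
unzeroed = [TRUE_MASS_G + OFFSET_G + e for e in noise]

# Scatter is essentially identical: the offset does not show up in the SD.
sd_zeroed, sd_unzeroed = stdev(zeroed), stdev(unzeroed)

# The mean error of the un-zeroed balance stays near the offset,
# however many readings are averaged.
mean_error_unzeroed = mean(unzeroed) - TRUE_MASS_G
```

Averaging 1,000 readings brings the zeroed series close to the true value but leaves the un-zeroed series about 0.050 g high, which is why replication alone cannot reveal or remove this kind of error.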

Contrasting Systematic and Random Error

The distinction between systematic and random error is crucial for understanding data quality. Accuracy requires both types of error to be small, whereas precision refers specifically to the freedom from random error [9]. The table below summarizes the key differences.

Table 1: Comparison of Systematic and Random Errors

| Feature | Systematic Error (Bias) | Random Error (Precision Error) |
|---|---|---|
| Definition | Consistent, predictable deviation in every measurement [9] | Unpredictable variation that differs between measurements [9] |
| Cause | Imperfectly calibrated instruments, flawed methods, observer bias [9] | Unknown or uncontrollable environmental factors [9] |
| Impact on Data | Shifts all measurements in one direction, affecting accuracy [9] | Causes "scatter" in repeated measurements, affecting precision [9] |
| Reduction Method | Identification, calibration, improved methods and design [9] | Replication and increasing sample size [9] |
| Detection | Comparison against a reference standard or different method [9] | Statistical analysis of data spread (e.g., standard deviation) [9] |

Cataloging Systematic Error: Types and Real-World Examples

Systematic errors manifest across diverse scientific fields. The following examples, drawn from clinical research, data collection, and measurement systems, illustrate their pervasive nature.

Measurement Error in Clinical and Scientific Data

In the context of clinical trials and real-world evidence generation, measurement error is a critical form of systematic bias. When combining data from rigorous clinical trials with real-world data (RWD), differences in how and when outcomes are assessed can introduce systematic error [22]. For example, in oncology, progression-free survival (PFS) measured in RWD may be systematically biased compared to trial standards due to less regimented assessment schedules, heterogeneous data sources, and missing information in electronic health records [22]. This is not merely random noise; it is a structured deviation that can lead to biased estimates of treatment efficacy if not properly addressed. Statistical methods like Survival Regression Calibration (SRC) have been developed specifically to correct for this type of systematic measurement error in time-to-event outcomes [22].

Another specialized field dealing with this issue is the analysis of circular data (e.g., wind directions, animal migration paths). In an "errors-in-variables" context, measurement errors from device miscalibration or observation difficulties introduce an excess bias proportional to the error's spread, which compounds the standard bias from statistical estimation methods [23].

Survey Bias in Research and Data Collection

Survey design is a common source of systematic error in fields ranging from market research to public health. Biased questions systematically steer respondents toward particular answers, distorting insights and leading to flawed conclusions [24]. The following table organizes common types of biased survey questions.

Table 2: Types and Examples of Systematic Survey Bias

| Bias Type | Description | Real-World Example | Unbiased Alternative |
|---|---|---|---|
| Leading Questions | Subtly pushes respondents toward a particular answer using suggestive language [24] [25] | “How much do you love our new feature?” [24] | “How satisfied are you with our new feature?” [24] |
| Loaded Questions | Contains a built-in assumption that may not be true for the respondent [24] [25] | “What do you like most about our excellent customer service?” [24] | “How would you rate our customer service?” followed by “Why did you give this rating?” [24] |
| Double-Barreled Questions | Asks about two or more issues but allows only one response [24] [25] | “How satisfied are you with our pricing and customer support?” [25] | Split into two questions: “How satisfied are you with our pricing?” and “How satisfied are you with our customer support?” |
| Scale-Based Bias | Uses an unbalanced rating scale that offers more positive than negative options [25] | Options: [Very Satisfied, Satisfied, Neutral, Dissatisfied] [25] | Use a balanced scale: [Very Satisfied, Satisfied, Neutral, Dissatisfied, Very Dissatisfied] [25] |
| Social Desirability Bias | Respondents answer in a way they believe will be viewed favorably by others [24] | Overstating how often they recycle or exercise in a health study [24] | Assure anonymity, use neutral language, and frame questions to normalize behaviors [24] |

Instrumentation and Calibration Error

A classic example of systematic error is a miscalibrated measurement instrument. As noted, a balance that does not return to zero, or a scale that has not been calibrated with standard weights, will produce measurements with a zero offset [9]. This fixed deviation affects every single reading. In engineering, complex devices are susceptible to systematic errors from leaks, temperature variations, and pressure changes, all of which can influence accuracy in a consistent, predictable manner [9]. The mechanical design and dimensions of experimental systems are also a key source of such bias, requiring careful analysis and innovative design to minimize [9].

Methodologies for Detecting and Mitigating Systematic Error

Experimental Protocols for Detection

Detecting systematic error requires proactive strategies that go beyond analyzing the primary dataset.

- Protocol 1: Calibration with Certified Reference Materials. The most direct method is to perform a measurement on a certified reference material (CRM)—a substance or material with one or more properties that are sufficiently homogeneous and well-established to be used for instrument calibration [9]. A significant difference between the measured value and the certified value indicates a systematic error, the magnitude of which can be used to define a correction factor for future measurements [9].

- Protocol 2: Method Comparison. Using a fundamentally different, well-validated measurement technique (a "reference measurement procedure") to analyze the same samples can reveal systematic biases in the primary method [9]. Discrepancies between the results from the two methods can point to systematic error in one of them.

- Protocol 3: Instrument Inter-comparison. Measuring the same set of samples using multiple instruments of the same type can help identify if one instrument has a systematic bias, such as a zero offset, that is not present in the others.
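The CRM comparison in Protocol 1 is often judged against measurement uncertainty rather than by eye. The sketch below assumes one common convention: the bias is treated as significant when it exceeds an expanded uncertainty, k times the combined standard uncertainty of the replicate mean and of the certified value (k = 2 here). The function name, readings, certified value, and uncertainty are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def crm_bias_check(readings, certified_value, certified_u, k=2.0):
    """Compare replicate readings of a CRM with its certified value.

    certified_u is the standard uncertainty of the certified value.
    Returns (bias, expanded_uncertainty, is_significant).
    """
    u_mean = stdev(readings) / sqrt(len(readings))  # std. uncertainty of mean
    bias = mean(readings) - certified_value
    expanded = k * sqrt(u_mean ** 2 + certified_u ** 2)
    return bias, expanded, abs(bias) > expanded


# Hypothetical pH buffer CRM (certified 7.00, standard uncertainty 0.01).
readings = [7.06, 7.04, 7.05, 7.07, 7.03]
bias, expanded_u, significant = crm_bias_check(readings, 7.00, 0.01)

# If the bias is significant, it can serve as an additive correction.
corrected = [r - bias for r in readings]
```

In this example the bias of 0.05 pH units exceeds the expanded uncertainty of roughly 0.024, so it would be flagged and subtracted from future readings on that meter.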

The following workflow outlines a general approach for handling systematic error in research.

Statistical Correction Methods

When systematic error cannot be eliminated experimentally, statistical methods can be employed to correct for it.

- Regression Calibration: This established approach is used for handling mismeasured variables [22]. It involves obtaining a "validation sample" where both the true and mismeasured variables are collected. A model is fit to estimate their relationship, which is then used to adjust the mismeasured values in the full dataset [22].

- Survival Regression Calibration (SRC): An extension of regression calibration, SRC is specifically designed to correct for systematic measurement error in time-to-event outcomes (e.g., overall survival, progression-free survival) common in oncology studies [22]. It fits separate Weibull regression models to true and mismeasured outcomes in a validation sample and then calibrates parameter estimates in the full study according to the estimated bias [22].

- Deconvolution Methods: In specialized contexts like circular data analysis with measurement errors, deconvolution techniques using lower-bias kernel estimators can be applied to account for the excess bias introduced by the error [23].
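Linear regression calibration can be sketched as follows, under simplifying assumptions: a single continuous variable, no other covariates, and a linear relationship between the true value X and its error-prone measurement W in the validation sample. The data are hypothetical and noise-free so the result is easy to check. With real data the calibrated values are estimates of E[X | W], not exact true values, and standard errors in downstream models should account for the calibration step (for example by bootstrapping). This sketch does not implement Survival Regression Calibration.

```python
from statistics import mean


def fit_line(x, y):
    """Least-squares intercept and slope for y = a + b*x."""
    mx, my = mean(x), mean(y)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum(
        (xi - mx) ** 2 for xi in x
    )
    return my - b * mx, b


def regression_calibrate(val_true, val_measured, main_measured):
    """Replace error-prone values W with predictions of E[X | W].

    The calibration model X = a + b*W is fit in the validation sample,
    where both X (gold standard) and W (error-prone) are available.
    """
    a, b = fit_line(val_measured, val_true)
    return [a + b * w for w in main_measured]


# Hypothetical validation sample: W = 0.5 + 1.2 * X exactly.
val_true = [1.0, 2.0, 3.0, 4.0, 5.0]
val_measured = [1.7, 2.9, 4.1, 5.3, 6.5]

# Main study: only W is observed.
main_measured = [2.9, 6.5, 3.5]
calibrated = regression_calibrate(val_true, val_measured, main_measured)
```

Note that the model regresses X on W (not W on X), because the goal is to predict the true value from what is actually observed in the main study.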

The Scientist's Toolkit: Key Reagents and Materials

The following table details essential "research reagents" and methodological solutions for investigating and mitigating systematic error.

Table 3: Research Reagent Solutions for Managing Systematic Error

| Item / Solution | Function in Mitigating Systematic Error |
|---|---|
| Certified Reference Materials (CRMs) | Provides a ground truth with known property values to quantify and correct for instrumental bias via calibration [9]. |
| Internal Validation Sample | A subset of the main study where both the mismeasured variable and the "gold standard" measurement are collected, enabling statistical correction models [22]. |
| Regression Calibration Models | Statistical tool that uses data from a validation sample to estimate and correct for bias in the main study dataset [22]. |
| Deconvolution Kernel Estimators | A nonparametric statistical method, used in errors-in-variables contexts, to recover the true underlying distribution from mismeasured data [23]. |
| Standard Operating Procedures (SOPs) | Detailed, step-by-step instructions for equipment use and data collection to minimize bias introduced by operator variation. |
| Blinded Data Review | A protocol where outcome assessors are unaware of group assignments (e.g., treatment vs. control) to prevent assessment bias. |

Systematic error is an omnipresent challenge in scientific research, with the potential to undermine the validity of findings from the laboratory to the clinic. Its consistent nature makes it more dangerous than random error and necessitates specific, targeted strategies for its management. As demonstrated through examples from miscalibrated scales to leading survey questions and measurement error in real-world evidence, a profound understanding of these biases is the first line of defense. By integrating rigorous experimental design—including calibration with reference materials and method comparison—with advanced statistical correction techniques like regression calibration and deconvolution, researchers can safeguard the accuracy of their data. For drug development professionals and scientists, a relentless focus on identifying and mitigating systematic error is not merely a technical exercise but a fundamental component of research integrity and a prerequisite for generating reliable evidence.

In scientific research, measurement error is the difference between an observed value and the true value of a quantity [1]. Systematic error, also referred to as bias, is a consistent or proportional difference that skews measurements in a specific direction away from the true value [1] [26] [3]. Unlike random error, which creates statistical fluctuations that can be reduced by increasing sample size, systematic error does not decrease with larger sample sizes and is reproducible in its inaccuracy [1] [4]. This persistent nature makes systematic errors particularly problematic as they can lead to false conclusions and compromised research validity [1] [26]. Within the broad category of systematic errors, offset errors and scale factor errors represent two quantifiable types that researchers can identify and correct through careful calibration and analysis [1] [3].

Defining Offset and Scale Factor Errors

Core Characteristics of Offset Errors

Offset error, also known as additive error or zero-setting error, occurs when a measurement instrument is not calibrated to the correct zero point [1] [3]. This type of error introduces a constant difference (positive or negative) between measured and true values across the entire measurement range [1]. For example, if a scale reads 0.5 grams when nothing is placed on it, all subsequent measurements will be shifted by this constant amount regardless of the actual weight being measured [3]. The mathematical representation of an offset error can be expressed as:

Measured Value = True Value + Constant Offset

The key characteristic of offset error is that the magnitude of the error remains consistent, meaning the difference between measured and true values does not change as the quantity being measured increases or decreases [1]. This consistent deviation affects the accuracy of measurements while typically preserving precision, as repeated measurements of the same quantity will yield similar results [1] [4].

Core Characteristics of Scale Factor Errors

Scale factor error, also referred to as multiplicative error or proportional error, occurs when measurements consistently differ from true values by a constant proportion or percentage [1] [3]. Unlike offset errors, scale factor errors change in absolute magnitude depending on the value being measured [1]. For example, if a tape measure has stretched and adds 1% to all measurements, a true length of 100 cm would read as 101 cm, while a true length of 200 cm would read as 202 cm [3]. The mathematical representation of a scale factor error can be expressed as:

Measured Value = True Value × Scale Factor

The distinguishing feature of scale factor error is that the error magnitude scales proportionally with the measured quantity [1]. While the absolute error increases with larger measurements, the relative error remains constant across the measurement range [3]. This proportional relationship means scale factor errors can be particularly insidious in research spanning wide measurement ranges, as the absolute inaccuracy grows with larger values while maintaining consistent relative inaccuracy [1].
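The two error models can be contrasted numerically. This sketch uses the examples from the text, a reading 0.5 units high (offset) and a tape measure that adds 1% (scale factor), and reports absolute and relative errors at two true values; the function names are illustrative and units are not tracked.

```python
def offset_reading(true_value, offset):
    """Additive (offset) error: measured = true + constant."""
    return true_value + offset


def scale_reading(true_value, factor):
    """Multiplicative (scale factor) error: measured = true * factor."""
    return true_value * factor


def errors(true_value, measured):
    """Return (absolute error, relative error)."""
    abs_err = measured - true_value
    return abs_err, abs_err / true_value


results = {
    tv: {
        "offset": errors(tv, offset_reading(tv, 0.5)),
        "scale": errors(tv, scale_reading(tv, 1.01)),
    }
    for tv in (100.0, 200.0)
}
```

The offset's absolute error stays at 0.5 while its relative error halves from 0.5% to 0.25%; the scale factor's absolute error doubles from 1 to 2 while its relative error stays at 1%.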

Table 1: Comparative Characteristics of Offset and Scale Factor Errors

| Characteristic | Offset Error | Scale Factor Error |
|---|---|---|
| Alternative Names | Additive error, Zero-setting error | Multiplicative error, Proportional error |
| Mathematical Relationship | Measured = True + Constant | Measured = True × Factor |
| Error Magnitude | Constant across range | Proportional to measured value |
| Effect on Measurements | Consistent shift in one direction | Increasing absolute error with larger values |
| Common Causes | Incorrect zero calibration, Zero offset | Instrument degradation, Calibration drift |
| Impact on Precision | Does not affect precision | Does not affect precision |
| Impact on Accuracy | Reduces accuracy consistently | Reduces accuracy proportionally |

Visualizing Systematic Error Relationships

The following diagram illustrates how offset and scale factor errors affect measurements differently compared with ideal conditions and random error.

[Figure: Measurement Error Relationships]

Offset errors typically originate from instrument calibration issues or operator errors that introduce a consistent shift in measurements [1] [3] [4]. In laboratory settings, a frequent cause is failure to zero an instrument before taking measurements [4]. For example, an electronic balance might display a small positive reading when no sample is present if it hasn't been properly tared [3]. In pharmaceutical research, improper calibration of pH meters can create offset errors that affect drug formulation processes [4]. Physical variations in experimental setups can also introduce offset errors, such as a micrometer caliper that doesn't fully close to zero or a thermometer that consistently reads above the actual temperature due to calibration drift [4]. In clinical research, interviewer bias can function as a form of offset error when researchers consistently record responses in a direction that aligns with their expectations [26] [27].

Scale factor errors often result from instrument degradation or improper calibration procedures that affect measurement proportionality [1] [3]. A common example is a stretched measuring tape that gives increasingly larger readings as the measured distance increases [3]. In electronic sensors, component aging can alter sensitivity, causing proportional errors across measurements [4]. In analytical chemistry, incorrect calibration curves can introduce scale factor errors in spectrophotometers or chromatographs [4]. For questionnaire-based research, response biases like extreme responding or acquiescence bias can function as scale factor errors when participants systematically alter their responses in a proportional manner across questions [27]. In regulatory science, errors in data capture processes within large-scale observational studies can introduce proportional misclassification that affects risk assessments [28].

Real-World Research Examples

Example 1: Pharmaceutical Weight Measurements In a drug development study, researchers consistently obtained sample weights 0.5 mg higher than known standards [1]. The discrepancy was traced to an offset error caused by a balance that hadn't been properly zeroed before measurements [3] [4]. This consistent shift of 0.5 mg across all samples represented a systematic error that could significantly impact dosage calculations in formulation studies [1].

Example 2: Biomechanical Force Analysis A research team studying tendon elasticity discovered their force measurements were consistently 5% higher than theoretical predictions [3]. Investigation revealed a scale factor error in their load cell calibration, which applied a multiplicative error of 1.05 to all readings [1] [3]. This proportional error meant that larger force measurements had greater absolute errors, potentially affecting stress-strain relationship conclusions [1].

Table 2: Experimental Examples of Systematic Errors

| Research Context | Error Type | Manifestation | Potential Impact |
|---|---|---|---|
| Clinical Trial Weight Measurements | Offset Error | Balance reads +0.5 g with no load | Incorrect dosage calculations |
| Environmental Temperature Study | Offset Error | Thermometer calibrated 2 °C high | Invalid climate trend conclusions |
| Chemical Solution Preparation | Scale Factor Error | Pipette delivers 3% extra volume | Incorrect concentration calculations |
| Economic Survey Research | Scale Factor Error | Response bias exaggerates all values | Proportional distortion of income data |

Methodologies for Identification and Quantification

Experimental Protocols for Error Detection

Protocol 1: Offset Error Identification through Standard Reference Materials

- Selection: Obtain certified reference materials with known values spanning the expected measurement range [3] [4].

- Measurement: Measure each reference material using the instrument under evaluation, following standard operating procedures [4].

- Analysis: Calculate the difference between measured values and certified values for each reference material [4].

- Interpretation: If differences are consistent in direction and magnitude across all reference materials, an offset error is likely present [1] [3]. The average difference represents the estimated offset [4].
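The analysis and interpretation steps of Protocol 1 amount to averaging the differences and checking that they do not drift with the certified value. This is a minimal sketch with hypothetical reference values; the function name is illustrative, and the 0.1 tolerance used to call the differences "consistent" is an assumption that should in practice come from the instrument's known precision.

```python
from statistics import mean


def estimate_offset(certified, measured, tolerance):
    """Mean difference (measured - certified) and whether the
    differences are consistent enough to be treated as a pure offset."""
    diffs = [m - c for c, m in zip(certified, measured)]
    spread = max(diffs) - min(diffs)
    return mean(diffs), spread <= tolerance


# Hypothetical CRMs spanning the working range (same units throughout).
certified = [10.0, 50.0, 100.0, 200.0]
measured = [10.52, 50.49, 100.51, 200.48]
offset, is_pure_offset = estimate_offset(certified, measured, tolerance=0.1)
```

If the differences grow with the certified value instead of staying within the tolerance, the pattern points to a scale factor error, which Protocol 2 addresses.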

Protocol 2: Scale Factor Error Identification through Linear Regression

- Sample Preparation: Prepare or obtain standards with known values covering the operational range [3] [4].

- Data Collection: Measure each standard multiple times to establish precision [4].

- Statistical Analysis: Perform linear regression with known values as independent variable and measured values as dependent variable [4].

- Interpretation: A best-fit line with a slope significantly different from 1.0 indicates scale factor error, while a non-zero intercept suggests additional offset error [1] [3]. The slope itself estimates the scale factor, its deviation from unity (1.0) gives the proportional error, and the intercept estimates the offset [4].
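Protocol 2 can be sketched with a hand-written least-squares fit that also reports the standard error of the slope, so that "significantly different from 1.0" can be judged with a t statistic on n - 2 degrees of freedom. The function name, standards, and readings are hypothetical; with 5 points (3 degrees of freedom) the two-sided 5% critical value is about 3.18.

```python
from math import sqrt
from statistics import mean


def calibration_fit(known, measured):
    """Fit measured = offset + scale * known by least squares.

    Returns (offset, scale, t statistic for H0: scale == 1).
    """
    n = len(known)
    mx, my = mean(known), mean(measured)
    sxx = sum((x - mx) ** 2 for x in known)
    sxy = sum((x - mx) * (y - my) for x, y in zip(known, measured))
    scale = sxy / sxx
    offset = my - scale * mx
    residual_ss = sum((y - (offset + scale * x)) ** 2
                      for x, y in zip(known, measured))
    se_scale = sqrt(residual_ss / (n - 2) / sxx)   # standard error of slope
    return offset, scale, (scale - 1.0) / se_scale


# Hypothetical standards (known values) and instrument readings.
known = [0.0, 25.0, 50.0, 75.0, 100.0]
readings = [0.6, 25.9, 51.6, 76.9, 102.6]
offset, scale, t_scale = calibration_fit(known, readings)
```

For these data the fit gives a slope of 1.02, an intercept of 0.52, and t = 12.5, well above 3.18, so the 2% scale factor error would be treated as real rather than as noise.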

Quantitative Bias Analysis Methods

Quantitative bias analysis (QBA) provides formal methods for quantifying uncertainty from systematic errors, including offset and scale factor errors [29] [30] [28]. These approaches estimate the direction, magnitude, and uncertainty associated with systematic errors using bias models that incorporate plausible values for bias parameters [28]. In regulatory settings, QBA methods are increasingly employed to assess the robustness of observational study findings by quantifying how systematic errors might affect measures of association [30] [28]. Advanced techniques include:

- Probabilistic bias analysis: Uses Monte Carlo simulation to propagate uncertainty from multiple bias sources [29] [28].

- Multiple bias models: Accounts for simultaneous effects of different bias types [28].

- Bayesian methods: Incorporates prior knowledge about bias parameters to estimate corrected effects [28].
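A probabilistic bias analysis can be sketched by drawing bias parameters from distributions and repeating a simple correction many times. This minimal sketch applies an exposure-misclassification correction to a hypothetical case-control 2×2 table and assumes uniform distributions for sensitivity (0.75 to 0.85) and specificity (0.88 to 0.92). It propagates only the uncertainty in the bias parameters; fuller implementations typically also incorporate random error and often use trapezoidal or beta distributions.

```python
import random
from statistics import median


def corrected_or(a, b, c, d, se, sp):
    """Odds ratio after correcting exposure misclassification, or None
    if the sampled Se/Sp imply impossible (non-positive) cell counts."""
    cells = []
    for exposed, total in ((a, a + b), (c, c + d)):
        true_exposed = (exposed - (1 - sp) * total) / (se + sp - 1)
        if not 0 < true_exposed < total:
            return None
        cells.append((true_exposed, total - true_exposed))
    (A, B), (C, D) = cells
    return (A * D) / (B * C)


def probabilistic_bias_analysis(a, b, c, d, n_draws=10_000, seed=1):
    rng = random.Random(seed)
    results = []
    for _ in range(n_draws):
        se = rng.uniform(0.75, 0.85)   # assumed sensitivity distribution
        sp = rng.uniform(0.88, 0.92)   # assumed specificity distribution
        value = corrected_or(a, b, c, d, se, sp)
        if value is not None:
            results.append(value)
    results.sort()
    lower_q = results[int(0.025 * len(results))]
    upper_q = results[int(0.975 * len(results)) - 1]
    return median(results), lower_q, upper_q, len(results)


med_or, lower, upper, n_valid = probabilistic_bias_analysis(240, 760, 170, 830)
```

The result is a median bias-adjusted odds ratio with a 95% simulation interval. In this example the specificity range drives most of the spread, which is the kind of insight about bias parameters such an analysis is meant to surface.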

The following workflow diagram illustrates the process for identifying and correcting systematic errors.

[Figure: Systematic Error Identification Workflow]

Table 3: Research Reagent Solutions for Systematic Error Management

| Tool or Resource | Primary Function | Application Context |
|---|---|---|
| Certified Reference Materials | Provides known values for calibration | Instrument verification across measurement range [3] [4] |
| Calibration Protocols | Standardized procedures for instrument setup | Ensuring consistent pre-measurement conditions [1] [4] |
| Data Acquisition Software with Diagnostic Features | Automated error detection and reporting | Identifying consistent patterns in large datasets [28] |
| Statistical Analysis Packages | Quantitative bias analysis implementation | Estimating magnitude and uncertainty of systematic errors [29] [30] [28] |
| Null Difference Instruments | Precision measurement through balancing | Eliminating source instability in sensitive measurements [4] |

Mitigation Strategies and Correction Methodologies

Procedural Approaches for Error Reduction

Regular calibration against certified standards is fundamental for identifying and correcting both offset and scale factor errors [1] [3] [4]. Calibration frequency should be set according to instrument stability, usage intensity, and the criticality of the measurements [4]. Triangulation, which records the same observations with multiple measurement techniques, provides cross-validation that can reveal systematic errors not apparent with a single instrument [1]. Randomizing the order of measurements and the assignment of samples to conditions keeps order- or time-dependent biases, such as instrument drift, from aligning with particular experimental conditions, so that their effect is spread across conditions rather than concentrated in one [1]. Blinding prevents researcher expectations from influencing measurements, which is particularly important in clinical and behavioral research where assessment is subjective [1] [26] [27].

Mathematical Correction Procedures

Offset Error Correction:

- Determine the offset value by measuring a known zero condition or a reference standard [3] [4].

- Subtract the offset value from all subsequent measurements [3].

- Verify the correction by measuring an independent standard of known value [4].
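The offset procedure amounts to estimating a constant and subtracting it. A minimal sketch, assuming a hypothetical balance whose empty-pan readings average 0.50 g and a hypothetical 50.00 g check weight for the verification step; the helper names are illustrative only.

```python
def estimate_offset(zero_readings):
    """Offset = mean reading when the true quantity is zero (blank or empty pan)."""
    return sum(zero_readings) / len(zero_readings)


def correct_offset(measurements, offset):
    """Subtract the constant offset from every measurement."""
    return [m - offset for m in measurements]


# Hypothetical empty-pan readings (g) and sample readings (g).
offset = estimate_offset([0.49, 0.51, 0.50, 0.50])
corrected = correct_offset([10.50, 25.50, 100.50], offset)

# Verification: read an independent 50.00 g check weight and inspect the residual.
check_reading = 50.52
residual = correct_offset([check_reading], offset)[0] - 50.00

print(f"offset = {offset:.2f} g")
print("corrected:", [round(x, 2) for x in corrected])
print(f"check residual = {residual:+.2f} g")
```

A small residual on the independent standard supports the correction; a large one suggests the error is not a pure offset.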

Scale Factor Error Correction:

- Measure multiple reference standards across the operational range [3] [4].

- Calculate the average ratio of measured values to known values to determine the scale factor [3]. If an offset is present, subtract it first; otherwise the ratio mixes the two errors.

- Divide measured values by the scale factor to obtain corrected values [3] [4].

- For instruments with both offset and scale factor errors, apply the correction formula: True Value = (Measured Value - Offset) / Scale Factor [31].
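A compact sketch of the scale factor steps and the combined correction formula follows. It assumes the offset has already been determined (here a hypothetical 0.5 units from a zero measurement), so the ratios isolate the proportional error; the data are invented and the function names are hypothetical.

```python
def estimate_scale_factor(known, measured, offset=0.0):
    """Average ratio of offset-corrected measured values to known values.
    Zero-valued standards are skipped because the ratio is undefined there."""
    ratios = [(m - offset) / k for k, m in zip(known, measured) if k != 0]
    if not ratios:
        raise ValueError("need at least one non-zero standard")
    return sum(ratios) / len(ratios)


def correct_reading(measured, offset, scale_factor):
    """True value = (measured value - offset) / scale factor."""
    return (measured - offset) / scale_factor


# Hypothetical instrument that reads 5% high with a +0.5 offset.
known = [10.0, 20.0, 50.0, 100.0]
measured = [11.0, 21.5, 53.0, 105.5]
scale = estimate_scale_factor(known, measured, offset=0.5)
print(f"scale factor = {scale:.3f}")
print(f"corrected 74.0 -> {correct_reading(74.0, 0.5, scale):.2f}")
```

Applied to a reading of 74.0, the correction gives (74.0 - 0.5) / 1.05 = 70.0.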

Research Design Considerations

Proper research design incorporates safeguards against systematic errors through prespecified analysis plans that identify potential bias sources before data collection [26] [28]. Prospective registration of studies prevents selective reporting of significant results, a form of publication bias [26] [27]. Comprehensive documentation of all measurement procedures, calibration activities, and protocol deviations creates an audit trail for identifying potential systematic errors during data interpretation [26] [4]. In regulatory science, quantitative bias analysis is increasingly formalized in study protocols to quantitatively assess how systematic errors might affect conclusions drawn from observational studies [30] [28].

Offset and scale factor errors represent quantifiable subtypes of systematic bias that threaten research validity through consistent measurement distortion [1] [3]. While offset errors introduce constant shifts, scale factor errors create proportional distortions that scale with measurement magnitude [1]. Through rigorous calibration protocols, appropriate statistical methods, and systematic error-aware research designs, scientists can identify, quantify, and correct these biases [1] [3] [4]. The development of standardized quantitative bias analysis frameworks continues to enhance our ability to account for systematic uncertainties, particularly in regulatory and biomedical research where accurate measurement is paramount for valid conclusions and decision-making [29] [30] [28].

Identifying and Quantifying Systematic Error in Research Data

In scientific research, measurement error represents the difference between an observed value and the true value. Systematic error, also known as systematic bias, is a consistent or proportional difference between observed and true values [1] [3]. Unlike random error, which introduces unpredictable variability, systematic error skews measurements in a specific direction, potentially leading to false conclusions about relationships between variables [1]. This persistent and consistent nature makes systematic errors particularly problematic in scientific research, especially in fields like drug development where accurate measurements are critical for safety and efficacy determinations.

Systematic errors are generally considered more problematic than random errors because they cannot be reduced simply by increasing sample size and consistently lead data away from true values [1] [3]. Where random error primarily affects measurement precision, systematic error directly compromises accuracy [1]. The detection and mitigation of systematic error through known standards and control experiments is therefore fundamental to research integrity across all scientific disciplines.

Defining Systematic Error: Types and Characteristics

Fundamental Types of Systematic Error

Systematic errors manifest in two primary forms, each with distinct characteristics:

Offset Error (Zero-Setting Error): This occurs when a measurement instrument does not read zero when the quantity to be measured is zero [1] [12]. It affects all measurements by the same absolute amount, effectively shifting the entire dataset by a fixed value. For example, a scale that consistently reads 0.5 grams with nothing placed on it would produce measurements all containing this offset error [3].

Scale Factor Error (Multiplier Error): This error occurs when measurements differ from the true value by a consistent proportion [1] [12]. Unlike offset errors, scale factor errors grow or shrink in absolute magnitude as the measured quantity changes. An instrument that consistently reads 5% higher than the true value exhibits scale factor error [3].
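Both error types fit one simple measurement model. In the notation below, introduced here for illustration, m is the measured value, t the true value, k the scale factor, b the offset, and e the error; the numbers reuse the 0.5 g and 5% examples above.

```latex
\begin{aligned}
m &= k\,t + b \\
e &= m - t = (k - 1)\,t + b \\
\text{offset only } (k = 1,\ b = 0.5\,\mathrm{g}):\quad e &= 0.5\,\mathrm{g} \text{ for every } t \\
\text{scale only } (k = 1.05,\ b = 0):\quad e &= 0.05\,t \;\Rightarrow\; 0.5\,\mathrm{g} \text{ at } t = 10\,\mathrm{g},\ 5\,\mathrm{g} \text{ at } t = 100\,\mathrm{g} \\
t &= \frac{m - b}{k}
\end{aligned}
```

The last line is the same correction formula given for instruments with both offset and scale factor errors.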

Table 1: Comparison of Systematic Error Types

| Error Type | Alternative Names | Nature of Error | Example |
|---|---|---|---|
| Offset Error | Additive error, Zero-setting error | Consistent absolute difference | Scale not zeroed before use |
| Scale Factor Error | Correlational systematic error, Multiplier error | Consistent proportional difference | Instrument calibration drift |

Systematic errors can originate from multiple aspects of the research process [1] [3]:

- Faulty Instruments: Imperfections or malfunctions in measurement equipment [12] [3]

- Researcher Error: Physical limitations, improper instrument use, or unconscious biases in data collection [3]

- Experimental Procedure Flaws: Poorly controlled variables or confounding factors [1]

- Analysis Method Errors: Inappropriate statistical approaches or data processing techniques [3]

- Research Materials: Leading questions in surveys or questionnaires that prompt inauthentic responses [1]

- Sampling Bias: When some population members are more likely to be included than others [1]

Core Detection Methodologies

Comparison Against Known Standards

The most fundamental method for detecting systematic error involves comparing experimental results against known reference standards [3]. This approach requires researchers to measure a standard with known properties using their experimental system, then compare the observed values against the expected values.

Experimental Protocol: Known Standard Comparison

Select Appropriate Standard: Choose a certified reference material (CRM) with properties closely matching your experimental samples. The standard should be traceable to national or international measurement systems.

Establish Measurement Conditions: Conduct measurements under identical conditions to those used for experimental samples, including the same instrument settings, environmental conditions, and analyst.

Execute Repeated Measurements: Perform multiple measurements of the standard to account for random error and obtain a reliable average observed value.

Calculate Discrepancy: Determine the difference between the observed value and the certified value of the standard.

Statistical Analysis: Apply an appropriate statistical test (e.g., a one-sample t-test comparing the mean observed value with the certified value) to determine whether the difference is statistically significant.

Document Results: Record both the magnitude and direction of any detected systematic error.
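The discrepancy and significance steps can be sketched as a one-sample t-test using only the Python standard library. The readings and certified value are hypothetical, and because the standard library has no t-distribution function, the two-sided critical value is passed in: 2.262 is the 5% two-sided critical value for 9 degrees of freedom (ten readings).

```python
import math
import statistics


def bias_t_test(readings, certified_value, t_critical):
    """One-sample t-test of H0: mean reading == certified value.

    t_critical is the two-sided critical value for df = n - 1, supplied by the caller.
    Returns the signed bias, the t statistic, and whether |t| exceeds t_critical.
    """
    n = len(readings)
    mean = statistics.fmean(readings)
    sd = statistics.stdev(readings)      # sample standard deviation
    se = sd / math.sqrt(n)               # standard error of the mean
    bias = mean - certified_value        # sign gives the direction of the error
    t_stat = bias / se
    return bias, t_stat, abs(t_stat) > t_critical


# Hypothetical: ten replicate readings of a CRM certified at 100.0 units.
readings = [101.2, 100.8, 101.5, 100.9, 101.1, 101.4, 100.7, 101.3, 101.0, 101.1]
bias, t_stat, significant = bias_t_test(readings, 100.0, t_critical=2.262)  # df = 9
print(f"bias = {bias:+.2f}, t = {t_stat:.2f}, significant = {significant}")
```

The signed bias records direction as well as magnitude, which is what the documentation step asks for.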

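This methodology reveals both offset errors (a constant difference from the certified value) and scale factor errors (differences proportional to the measured magnitude) [3]. Separating the two requires standards at several values across the measurement range, because a single standard cannot show whether a discrepancy is constant or proportional.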

Control Experiments

Control experiments serve as powerful tools for detecting systematic errors that may not be apparent through direct standard comparison [3]. These experiments are designed to isolate specific variables or potential sources of error.

Experimental Protocol: Control Experiment Implementation

Identify Potential Error Sources: Systematically evaluate all aspects of your experimental design to identify potential sources of systematic error (instrumentation, procedures, environmental factors, researcher techniques).

Design Specific Controls: For each potential error source, design a control experiment that isolates that specific factor:

- Blank Controls: Measure samples with known zero values to detect offset errors.

- Positive Controls: Use samples with known expected responses to verify system performance.

- Method Controls: Apply multiple measurement techniques to the same samples.

Implement Randomization: Randomize sample processing order and instrument allocation so that order effects, drift, or instrument-specific biases are not confounded with experimental conditions [1] [3].

Execute Control Measurements: Conduct control experiments interspersed with actual experimental measurements to account for potential temporal drift.

Analyze Control Data: Statistically compare control results against expected values to identify any consistent deviations.

Iterate Refinement: Use results from control experiments to refine methodologies and repeat controls until systematic errors are eliminated or quantified.
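Randomization and interspersed controls can be combined when building a run sequence. The sketch below is illustrative only: `build_run_order`, the `BLANK`/`POSITIVE` placeholder labels, and the choice to bracket the run and insert a blank and a positive control after every fourth sample are assumptions, not a prescribed scheme.

```python
import random


def build_run_order(sample_ids, control_every=4, seed=None):
    """Return a run sequence with samples in random order and a blank plus a
    positive control at the start, after every `control_every` samples, and at the end."""
    rng = random.Random(seed)
    order = list(sample_ids)
    rng.shuffle(order)                        # randomize processing order
    controls = ["BLANK", "POSITIVE"]          # hypothetical control labels
    run = list(controls)                      # bracket the start of the run
    for i, sample in enumerate(order, start=1):
        run.append(sample)
        if i % control_every == 0:
            run.extend(controls)              # periodic controls to track drift
    if len(order) % control_every != 0:
        run.extend(controls)                  # bracket the end of the run
    return run


samples = [f"S{i:02d}" for i in range(1, 11)]
run = build_run_order(samples, control_every=4, seed=42)
print(run)
```

Fixing the seed makes the run order reproducible for the audit trail while remaining random with respect to the samples.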

Control Experiment Workflow for Systematic Error Detection

Advanced Detection Strategies

Method Triangulation

Triangulation involves using multiple techniques, instruments, or methods to measure the same phenomenon [1] [3]. When different approaches consistently yield similar results, confidence in measurement accuracy increases. Discrepancies between methods may indicate systematic errors specific to particular techniques.

Implementation Protocol:

- Select Diverse Methods: Choose measurement techniques based on different physical principles or operational methodologies.

- Standardize Samples: Use identical sample sets across all measurement techniques.

- Coordinate Timing: Conduct measurements within a time frame that prevents sample degradation.

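- Cross-Analyze Results: Statistically compare results across methods using ANOVA or similar techniques; because every method measures the same samples, a paired or repeated-measures comparison is appropriate.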

- Investigate Discrepancies: Systematically explore the causes of any consistent differences between methods.
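For the cross-analysis step, one simple option is to examine paired differences between every pair of methods, since all methods measure the same samples. This sketch uses that paired comparison rather than a full ANOVA; the method names A, B and C, the helper name, and all readings are invented.

```python
from itertools import combinations
from statistics import fmean, stdev


def pairwise_method_bias(results):
    """results maps method name -> readings on the SAME samples, in the same order.
    Returns {(method1, method2): (mean difference, SD of differences)}."""
    summary = {}
    for m1, m2 in combinations(sorted(results), 2):
        diffs = [a - b for a, b in zip(results[m1], results[m2])]
        summary[(m1, m2)] = (fmean(diffs), stdev(diffs))
    return summary


# Hypothetical triangulation data: three methods, five shared samples.
results = {
    "A": [10.1, 20.3, 30.2, 40.1, 50.4],
    "B": [10.0, 20.1, 30.0, 40.2, 50.1],
    "C": [11.2, 21.4, 31.1, 41.3, 51.2],
}
for (m1, m2), (mean_diff, sd_diff) in pairwise_method_bias(results).items():
    print(f"{m1} - {m2}: mean difference {mean_diff:+.2f} (SD {sd_diff:.2f})")
```

Here method C reads about one unit higher than both A and B on every sample, a pattern consistent with a method-specific offset worth investigating, while A and B agree closely.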

Instrument Calibration and Monitoring

Regular calibration using certified standards is essential for detecting and correcting systematic errors [1] [3]. A comprehensive calibration protocol includes:

Experimental Protocol: Systematic Calibration

- Multi-Point Calibration: Use standards at multiple values across the measurement range to detect both offset and scale factor errors.

- Temporal Monitoring: Implement regular calibration checks at predetermined intervals to detect drift over time.

- Documentation System: Maintain detailed records of all calibration results, including dates, standards used, and observed deviations.

- Corrective Actions: Establish procedures for instrument adjustment or data correction when systematic errors exceed acceptable thresholds.
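Temporal monitoring and corrective-action thresholds can be combined in a small log review. This is a sketch under stated assumptions: the weekly check values, the ±0.30-unit tolerance, and the function name are hypothetical, and drift is modeled as linear in time, which real instruments need not follow.

```python
def review_calibration_log(log, tolerance):
    """log: list of (day, deviation) pairs from repeated checks of one reference
    standard, where deviation = observed - certified value.
    Returns a least-squares linear drift rate (deviation units per day) and the
    checks whose absolute deviation exceeds the tolerance."""
    n = len(log)
    mean_d = sum(d for d, _ in log) / n
    mean_v = sum(v for _, v in log) / n
    sxx = sum((d - mean_d) ** 2 for d, _ in log)
    if sxx == 0:
        raise ValueError("need checks on at least two different days")
    drift_per_day = sum((d - mean_d) * (v - mean_v) for d, v in log) / sxx
    out_of_tolerance = [(d, v) for d, v in log if abs(v) > tolerance]
    return drift_per_day, out_of_tolerance


# Hypothetical weekly checks of one reference standard.
log = [(0, 0.02), (7, 0.06), (14, 0.11), (21, 0.17), (28, 0.22), (35, 0.31)]
drift, flagged = review_calibration_log(log, tolerance=0.30)
print(f"estimated drift = {drift:.4f} units/day; out of tolerance: {flagged}")
```

In this example the last check exceeds tolerance, and the positive drift rate shows that the deviation has been growing steadily rather than appearing suddenly.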

Table 2: Systematic Error Detection Methods and Their Applications

| Detection Method | Primary Error Type Identified | Key Implementation Requirements | Typical Experimental Context |
|---|---|---|---|
| Known Standard Comparison | Both offset and scale factor errors | Certified reference materials | Method validation |
| Control Experiments | Procedure-specific errors | Appropriate control design | Routine experimental runs |
| Method Triangulation | Method-specific systematic errors | Multiple measurement techniques | Critical measurements |
| Regular Calibration | Instrument drift and bias | Calibration standards and protocols | Equipment maintenance |

Data Analysis and Interpretation

Statistical Framework for Error Detection

Robust statistical analysis is essential for distinguishing systematic error from random variability [3]. Key analytical approaches include:

- Bland-Altman Analysis: Plots differences between two measurement methods against their averages to identify systematic biases.

- Linear Regression: Applied to observed versus expected values from standards; non-zero intercepts indicate offset error, while slopes different from 1.0 indicate scale factor error.

- t-Tests: Determine if the mean difference from known standards is statistically significant.

- Control Charting: Monitors measurement processes over time to detect systematic shifts or trends.
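Bland-Altman statistics can be computed without a plotting library; the per-sample means and differences returned below are the coordinates one would plot. The paired readings and the helper name are hypothetical, and the 95% limits of agreement use the conventional bias ± 1.96 SD of the differences, which assumes roughly normally distributed differences.

```python
from statistics import fmean, stdev


def bland_altman(method_a, method_b):
    """Bland-Altman summary for paired readings from two methods.
    Returns per-sample means and differences (plot coordinates),
    the mean difference (bias), and the 95% limits of agreement."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    means = [(a + b) / 2 for a, b in zip(method_a, method_b)]
    bias = fmean(diffs)
    sd = stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    return means, diffs, bias, limits


# Hypothetical paired measurements of the same six samples.
a = [5.2, 7.9, 10.4, 12.8, 15.5, 18.1]
b = [5.0, 7.6, 10.0, 12.5, 15.0, 17.7]
means, diffs, bias, (lower, upper) = bland_altman(a, b)
print(f"bias = {bias:+.3f}; 95% limits of agreement = {lower:.3f} to {upper:.3f}")
```

A mean difference well away from zero points to a systematic bias between the methods; a trend of the differences with increasing means would instead suggest a proportional (scale factor) difference.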

Quantification and Correction

Once detected, systematic errors should be quantified and corrected:

- Magnitude Determination: Calculate the average difference between observed and expected values across multiple standards.

- Direction Assessment: Note whether the error consistently increases or decreases values.

- Correction Factor Development: Create mathematical corrections based on the characterized error pattern.

- Uncertainty Estimation: Calculate the remaining uncertainty after correction.
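The quantification and uncertainty steps can be illustrated for a simple constant bias. This sketch follows the general approach of the ISO Guide to the Expression of Uncertainty in Measurement (GUM), combining independent standard uncertainties in quadrature; the readings, certified value, its standard uncertainty, and the function name are hypothetical, and treating the bias as constant is itself an assumption that should be checked with standards across the range.

```python
import math
from statistics import fmean, stdev


def offset_correction_with_uncertainty(readings, certified_value, u_certified):
    """Estimate a constant bias from repeated readings of a reference standard
    and the standard uncertainty of that bias estimate.

    u_certified is the standard uncertainty of the certified value. It and the
    repeatability component are assumed independent and combined in quadrature.
    """
    n = len(readings)
    bias = fmean(readings) - certified_value      # magnitude and direction
    u_repeat = stdev(readings) / math.sqrt(n)     # standard error of the mean
    u_bias = math.sqrt(u_certified ** 2 + u_repeat ** 2)
    return bias, u_bias


# Hypothetical: five readings of a standard certified at 50.00 (standard uncertainty 0.02).
bias, u_bias = offset_correction_with_uncertainty(
    [50.31, 50.27, 50.35, 50.29, 50.33], 50.00, 0.02)
expanded = 2 * u_bias  # coverage factor k = 2 (about 95% for a normal distribution)
print(f"correction = {-bias:+.3f}, expanded uncertainty (k=2) = {expanded:.3f}")
```

The correction is the negative of the estimated bias; the expanded uncertainty describes what remains uncertain after the correction is applied.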

Systematic Error Identification and Correction Process