Systematic Error Reduction in Analytical Methods: Strategies for Precision in Pharmaceutical and Clinical Research

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to identify, quantify, and reduce constant systematic error in analytical methods. Covering foundational principles to advanced applications, it explores error sources, methodological corrections, troubleshooting, and validation strategies aligned with modern regulatory standards like ICH Q2(R2) and Q14. Readers will gain actionable insights into calibration techniques, Quality-by-Design (QbD), instrument optimization, and data analysis methods to enhance data integrity, improve method robustness, and ensure compliance in biomedical research.

Understanding Systematic Error: Sources and Impact on Analytical Data Quality

Troubleshooting Guides

Guide 1: Identifying and Troubleshooting Systematic Errors

Problem: Your experimental results are consistently skewed away from the known true value, even after repeating the measurements. Explanation: This is a classic symptom of systematic error (or bias), a consistent and repeatable inaccuracy that affects all measurements in the same direction [1] [2]. Unlike random errors, these will not average out with repeated trials [3].

Troubleshooting Steps:

- Check Instrument Calibration:

- Action: Perform a calibration using a known standard reference material. Compare your instrument's reading against the true value of the standard across the expected measurement range [4] [5].

- Example: If using a scale, weigh a standard 100 g mass. If the scale reads 102 g, you have a systematic offset of 2 g that needs correction [4].

- Review Experimental Design and Procedure:

- Action: Critically examine your method. Could the instrument itself be altering the quantity you are measuring? Are there environmental factors, like temperature or pressure, that are not accounted for? Are you using the instrument correctly as per its design? [1] [4]

- Example: A large, room-temperature temperature probe inserted into a small, hot liquid sample may cool the sample and give a consistently low reading [4].

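- Employ Triangulation:

- Action: Measure the same variable using multiple independent techniques and check whether the results converge [2].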

- Implement Blinding (Masking):

- Action: Where possible, hide the condition assignment from both participants and researchers during data collection and analysis. This prevents subconscious influences, such as experimenter expectancies or participants responding to please the researcher, which can introduce bias [2].
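The calibration check in the first step can be made quantitative. The sketch below is a minimal illustration, not a validated procedure: it assumes replicate readings of a single reference standard, and the function name `estimate_bias` and the sample readings are hypothetical. It reports the mean offset from the certified value and compares it with the standard error of the mean as a rough indication of whether the offset exceeds what random scatter alone would explain.

```python
import math
import statistics


def estimate_bias(readings, certified_value):
    """Return (bias, standard_error) for replicate readings of one reference standard."""
    mean_reading = statistics.mean(readings)
    # The sample standard deviation describes the random scatter of the replicates.
    scatter = statistics.stdev(readings)
    standard_error = scatter / math.sqrt(len(readings))
    bias = mean_reading - certified_value
    return bias, standard_error


# Hypothetical readings of a 100 g standard mass (illustrative values only).
readings_g = [102.1, 101.9, 102.0, 102.2, 101.8]
bias, sem = estimate_bias(readings_g, certified_value=100.0)

# Rough heuristic (an assumption, not a formal significance test): a bias many
# times larger than the standard error points to a systematic offset, not noise.
likely_systematic = abs(bias) > 3 * sem
print(f"bias = {bias:+.2f} g, standard error = {sem:.3f} g, systematic? {likely_systematic}")
```

A formal assessment would normally use a t-test or an acceptance criterion from the method's validation plan; the factor of 3 here is only a placeholder.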

Guide 2: Identifying and Minimizing Random Errors

Problem: Repeated measurements of the same quantity give slightly different results, creating scatter in your data. Explanation: This is caused by random errors, which are unpredictable fluctuations in measurements due to unknown or uncontrollable factors [1] [7]. They affect the precision, or repeatability, of your data [2].

Troubleshooting Steps:

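- Take Repeated Measurements:

- Action: Repeat the measurement several times and average the results. Averaging reduces the impact of random error, although it has no effect on systematic error [2] [4].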

- Increase Your Sample Size:

- Action: Collect data from a larger sample. In large samples, the positive and negative random errors cancel each other out more efficiently, increasing the precision and statistical power of your results [2].

- Control Experimental Variables:

- Action: Identify and stabilize environmental factors that may fluctuate, such as room temperature, humidity, or vibration. Ensure all procedures are as consistent as possible for all samples to reduce unintended variability [2].

- Use High-Precision Instruments:

- Action: If random error is too high, consider using measurement tools with better resolution and reliability. A balance readable to 0.001 g will generally show less reading-to-reading variability than one readable to 0.1 g [2].
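The effect of repetition on precision can be estimated before collecting more data. The following sketch assumes independent replicate measurements with roughly constant scatter; the function names are hypothetical. It summarizes a set of replicates and uses the relation SEM = SD / sqrt(n) to estimate how many replicates would be needed to reach a target standard error.

```python
import math
import statistics


def summarize_replicates(values):
    """Return mean, sample standard deviation, and standard error of the mean."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    sem = sd / math.sqrt(len(values))
    return mean, sd, sem


def replicates_needed(sd, target_sem):
    """Smallest n for which sd / sqrt(n) is at or below target_sem."""
    return math.ceil((sd / target_sem) ** 2)


# Hypothetical replicate results (illustrative values only).
mean, sd, sem = summarize_replicates([10.12, 10.08, 10.15, 10.09, 10.11])
print(f"mean = {mean:.3f}, SD = {sd:.3f}, SEM = {sem:.3f}")
```

Because the standard error falls only with the square root of n, halving it requires about four times as many replicates.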

Frequently Asked Questions (FAQs)

Q1: From a practical standpoint, which type of error is more dangerous for my research conclusions? A: Systematic errors are generally considered more problematic [2]. While random error adds noise and reduces precision, it often averages out and can be quantified with statistics. Systematic error, however, skews all your data in one direction, leading to biased conclusions and false positives or negatives about the relationship between variables you are studying [2] [3]. You can be precisely wrong if you have a large systematic error.

Q2: I've calibrated my equipment. What other common sources of systematic error should I look for? A: Calibration is a key step, but systematic errors can originate from many parts of the research process:

- Methodology Errors: An incomplete chemical reaction or an incorrect sampling technique [5].

- Personal Bias: An observer consistently rounding measurements up or down [5].

- Reagent Impurities: Impurities in chemicals used for analysis [5].

- Sampling Bias: Using a non-representative sample that does not accurately reflect the whole population [2] [3].

Q3: How can I visually distinguish between systematic and random error in my data? A: The table below summarizes the core differences.

| Feature | Systematic Error | Random Error |
|---|---|---|
| Cause | Predictable, identifiable flaws in the system [1] [9] | Unpredictable, uncontrollable fluctuations [1] [7] |
| Impact on Values | Consistent deviation in one direction [2] | Scatter both above and below the true value [2] |
| Impact on Results | Reduces accuracy [2] | Reduces precision [2] |
| Reduction by Repetition | No [3] | Yes, through averaging [2] [7] |
| Statistical Detection | Difficult; requires comparison to a standard [4] [9] | Can be quantified (e.g., standard deviation) [1] |

Q4: Our research team has high turnover. How can we minimize errors during handoffs? A: Handoffs are error-prone periods. To mitigate risk:

- Standardize Processes: Create and use detailed, step-by-step Standard Operating Procedures (SOPs) and checklists for data handling and analysis [6].

- Ensure Clear Communication: Hold dedicated meetings during transitions to ensure incoming team members are familiar with the study background, design, and all data forms [6].

- Maintain a Single Source of Truth: Use a single, electronically-locked master data file. Any new versions should be clearly documented with a datetime stamp and the reason for the change [6].

Error Identification Workflow

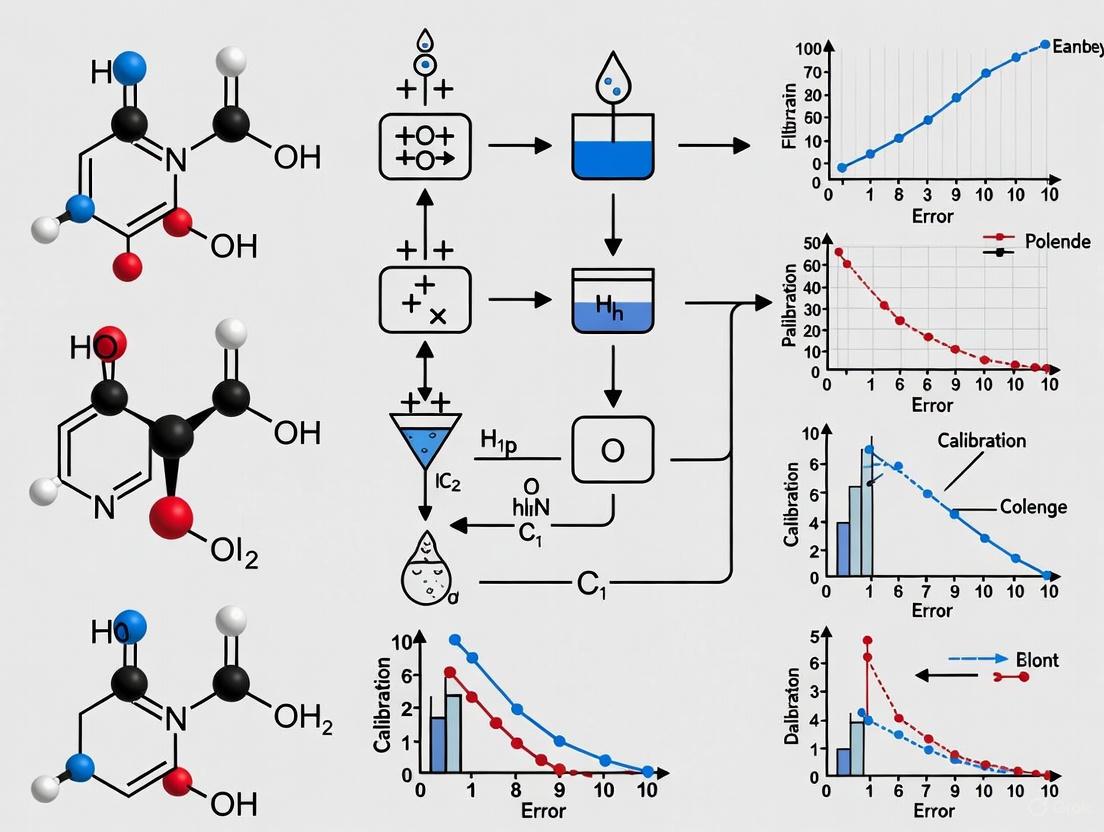

The following diagram outlines a logical workflow for diagnosing and addressing errors in your experimental data.

Research Reagent & Material Solutions

This table details key materials and their functions in minimizing errors in analytical research.

| Reagent / Material | Function in Error Reduction |
|---|---|
| Certified Reference Materials (CRMs) | Provide a known standard with certified properties to calibrate instruments and validate the accuracy of analytical methods, directly combating systematic error [4] [9]. |
| High-Purity Reagents | Minimize reagent errors caused by impurities that can interfere with analytical reactions and introduce systematic bias into results [5]. |
| Standardized Buffers and Solutions | Ensure consistency in the experimental environment (e.g., pH), reducing random variability between assays and improving precision. |
| Electronic Data Capture (EDC) Systems | Allow direct data entry on tablets or laptops, eliminating errors from transcribing paper records, a source of both random and systematic error [6]. |

Systematic error, also known as determinate error or bias, is a consistent, reproducible inaccuracy that occurs in the same direction in every measurement within an experiment [2] [10]. Unlike random errors, which vary unpredictably, systematic errors shift all measurements away from the true value by a fixed amount (constant error) or by an amount proportional to the measurement (proportional error) [2] [1]. This consistent deviation makes systematic errors particularly problematic in analytical research as they can lead to false conclusions and compromise the validity of study findings, ultimately affecting drug development processes and scientific conclusions [2] [6].

Table 1: Key Characteristics of Systematic vs. Random Error

| Feature | Systematic Error | Random Error |
|---|---|---|
| Definition | Consistent, repeatable error [11] | Unpredictable fluctuations [11] |
| Cause | Faulty equipment, flawed method, environmental factors [10] [12] | Unknown or unpredictable changes in the experiment [1] |
| Impact on Data | Affects accuracy [2] [11] | Affects precision [2] [11] |
| Direction | Always in the same direction [10] | Equally likely to be higher or lower [2] |
| Reduction | Identified and corrected through calibration and better design [4] [11] | Reduced by taking repeated measurements and increasing sample size [2] [11] |

This section details the most prevalent sources of constant systematic error in laboratory settings, providing targeted troubleshooting guidance.

Faulty Instrument Calibration

Description: This occurs when measuring instruments are not calibrated correctly against a known standard, leading to consistent offset (zero-setting error) or proportional (scale factor error) inaccuracies in all measurements [2] [1] [10].

Troubleshooting Guide:

- Problem: All mass measurements from a balance are 0.05 g too high.

- Detection Method: Regularly calibrate equipment using certified standard weights [4] [12]. Analyze Standard Reference Materials (SRMs) to verify measurement accuracy [12].

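- Correction Protocol:

- Adjust the balance so that its readings align with the certified standard weights, then re-test to confirm it performs within its specified accuracy limits [22].

- Repeat the calibration at regular intervals against traceable standards [4] [12].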
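For the balance example above, one possible way to characterize and correct the error is to fit a straight line to readings of several certified weights. The intercept then estimates the constant (zero-setting) error and the slope estimates the proportional (scale-factor) error. This is a minimal sketch under the assumption of a linear instrument response; the function names and data are hypothetical.

```python
def fit_calibration(true_values, readings):
    """Least-squares fit of reading = offset + gain * true_value."""
    n = len(true_values)
    mean_x = sum(true_values) / n
    mean_y = sum(readings) / n
    sxx = sum((x - mean_x) ** 2 for x in true_values)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(true_values, readings))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    return offset, gain


def correct_reading(reading, offset, gain):
    """Invert the fitted line to estimate the true value behind a raw reading."""
    return (reading - offset) / gain


# Hypothetical certified masses (g) and the balance readings obtained for them.
certified = [10.0, 50.0, 100.0, 200.0]
observed = [10.05, 50.05, 100.05, 200.05]  # constant +0.05 g offset, gain of 1

offset, gain = fit_calibration(certified, observed)
corrected = correct_reading(150.05, offset, gain)
print(f"offset = {offset:.3f} g, gain = {gain:.4f}, corrected = {corrected:.2f} g")
```

A numerical correction of this kind is a stopgap; adjusting and re-verifying the instrument itself is usually preferable.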

Improperly Used or Malfunctioning Equipment

Description: Errors can arise from using equipment in a manner inconsistent with its design or using equipment that is worn out or malfunctioning [10] [12].

Troubleshooting Guide:

- Problem: Using a 50 mL buret with a tolerance of ±0.05 mL to deliver a small volume like 5 mL, resulting in high percentage error [13].

- Detection Method: Compare results obtained from different instruments or methods [4] [12]. Conduct blank determinations to identify background contamination or noise [12].

- Correction Protocol:

- Select equipment with a capacity and tolerance appropriate for the intended measurement scale [13].

- Implement routine maintenance and inspection schedules for critical equipment.

- Train all personnel on the correct use of equipment to avoid errors like parallax (reading a meniscus from an angle) [13] [11].
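The buret example can be checked with simple arithmetic: a fixed tolerance becomes a larger share of the result as the delivered volume shrinks. The helper below is a hypothetical illustration that expresses the ±0.05 mL tolerance as a percentage of the delivered volume.

```python
def relative_error_percent(tolerance_ml, delivered_ml):
    """Tolerance expressed as a percentage of the delivered volume."""
    return 100.0 * tolerance_ml / delivered_ml


# Same ±0.05 mL tolerance, three different delivered volumes.
for volume_ml in (5.0, 25.0, 50.0):
    pct = relative_error_percent(0.05, volume_ml)
    print(f"{volume_ml:>5.1f} mL delivered: about {pct:.1f} % relative error")
```

Delivering 5 mL from this buret gives a relative error of about 1 %, ten times the roughly 0.1 % obtained when the full 50 mL is used.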

Flawed Experimental Design or Methodology

Description: Inherent flaws in the experimental procedure can consistently bias results [12]. This includes poor choice of indicators in titrations, unaccounted environmental effects, or sampling bias [2] [13].

Troubleshooting Guide:

- Problem: In a titration that requires an endpoint at pH 5, using phenolphthalein (color change at pH ~8.2) instead of methyl red (color change at pH ~5). With an acidic titrant, phenolphthalein changes color well before pH 5 is reached, so the endpoint volume is significantly underestimated [13].

- Detection Method: Use triangulation—measuring the same variable using multiple independent techniques—to see if the results converge [2].

- Correction Protocol:

- Validate the analytical method against a known standard or reference method before applying it to unknown samples [4].

- Control environmental variables like temperature and humidity where possible [13].

- Use random sampling and random assignment to prevent systematic bias in how samples or treatments are selected [2] [14].

Environmental Factors

Description: Changes in laboratory conditions, such as temperature, humidity, or pressure, can systematically affect the performance of instruments or the materials being measured [9] [12].

Troubleshooting Guide:

- Problem: The volume of a solvent, which expands with heat, is measured at 25°C when its nominal volume is defined at 20°C, introducing a measurable volume error [13].

- Detection Method: Monitor and log environmental conditions during experiments. Note any correlation between condition fluctuations and result shifts.

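- Correction Protocol:

- Control the laboratory environment where possible, and apply correction factors such as automatic temperature compensation when measurements are made away from the reference temperature [13].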
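A temperature correction for the solvent example might look like the sketch below. It uses a linear expansion approximation, and the coefficient shown is an assumed, rounded value for water near 20 °C; substitute the coefficient for the actual solvent. The sketch also ignores the much smaller expansion of the glassware itself.

```python
# Approximate volumetric expansion coefficient of water near 20 °C, per °C.
# Assumed, rounded value for illustration; use the value for your own solvent.
BETA_WATER_PER_C = 2.1e-4


def volume_at_reference(measured_volume_ml, measured_temp_c,
                        reference_temp_c=20.0, beta_per_c=BETA_WATER_PER_C):
    """Convert a volume measured at one temperature to the reference temperature."""
    delta_t = measured_temp_c - reference_temp_c
    # Linear approximation V_T = V_ref * (1 + beta * delta_t), solved for V_ref.
    return measured_volume_ml / (1.0 + beta_per_c * delta_t)


v20 = volume_at_reference(100.0, measured_temp_c=25.0)
print(f"100.000 mL at 25 °C corresponds to about {v20:.3f} mL at 20 °C")
```

Under these assumptions the 5 °C difference changes the volume by roughly 0.1 %, which is comparable to the tolerance of volumetric glassware and therefore not negligible.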

Table 2: Summary of Common Systematic Errors and Mitigation Strategies

| Error Source | Specific Example | Recommended Mitigation Strategy |
|---|---|---|
| Calibration | Scale not zeroed; adds 0.5 g to every measurement [10] | Regular calibration against traceable standards [4] [12] |
| Instrument Use | Parallax error when reading a burette [13] | Proper training; use of automated instruments where possible [13] |
| Methodology | Incorrect indicator in titration [13] | Method validation and triangulation [2] |
| Reagents | Titrant concentration changes over time (e.g., iodine) [13] | Regular titer determination; proper storage of chemicals [13] |
| Environmental | Solution volume expansion due to temperature rise [13] | Environmental control; application of correction factors [13] |

Frequently Asked Questions (FAQs)

Q1: How can I detect if my experiment has a systematic error? You cannot detect systematic error through statistical analysis of your data alone [4]. The most reliable methods involve:

- Calibration: Testing your equipment and procedure on a known reference quantity [4].

- Independent Comparison: Comparing your results to those obtained using a different instrument or a completely different method [4] [12].

- Blank Determinations: Running blank samples to check for contamination or background signals that may be biasing your results [12].

Q2: Is systematic error or random error a bigger problem in research? Systematic error is generally considered more problematic [2]. While random errors can be reduced by averaging data from large sample sizes, systematic errors cannot be reduced by repetition and will consistently skew your data away from the true value, potentially leading to false conclusions (Type I or II errors) [2].

Q3: Can't I just repeat my measurements to get rid of systematic error? No. Repeating measurements and averaging the results helps to reduce the impact of random error but has no effect on systematic error [2] [4]. Given a particular experimental setup, no matter how many times you repeat and average your measurements, the systematic error remains unchanged [4].

Q4: Our lab's autotitrator still has systematic errors related to temperature. Why? Automation can eliminate many human-centric errors (e.g., visual perception, parallax), but some physical effects, like the thermal expansion of liquids, are intrinsic properties. Therefore, even automated systems may require temperature sensors and automatic temperature compensation to correct for this fundamental systematic error [13].
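The point made in Q3 can be illustrated with a small simulation. The sketch below assumes Gaussian random noise and a fixed bias; all values are hypothetical. As the number of readings grows, the mean settles closer to the true value plus the bias, not to the true value itself.

```python
import random
import statistics


def simulate_mean(true_value, bias, noise_sd, n, seed=0):
    """Average n simulated readings that carry a fixed bias plus Gaussian noise."""
    rng = random.Random(seed)
    readings = [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]
    return statistics.mean(readings)


for n in (5, 50, 5000):
    mean = simulate_mean(true_value=100.0, bias=2.0, noise_sd=0.5, n=n)
    print(f"n = {n:>4}: mean = {mean:.3f}, error = {mean - 100.0:+.3f}")
```

The scatter of the mean shrinks with n, but the error stays close to the 2.0 unit bias however many readings are averaged.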

Experimental Workflow for Error Mitigation

The following diagram illustrates a robust experimental workflow designed to prevent, detect, and correct systematic errors, reinforcing the principles of a reliable analytical method.

Essential Research Reagent Solutions

The following table lists key reagents and materials used in titration, a common analytical method, and highlights their role in minimizing systematic error.

Table 3: Key Reagents and Materials for Minimizing Error in Titration

| Reagent/Material | Function in Experiment | Role in Error Control |
|---|---|---|
| Standard Reference Materials (SRMs) | Certified materials with known purity and concentration [12]. | Serve as a benchmark for calibrating instruments and validating the accuracy of the entire analytical method, directly detecting systematic bias [12]. |
| Primary Standards | High-purity compounds used to prepare standard solutions of known concentration (e.g., for titer determination) [13]. | Ensure the titrant concentration is accurate, preventing proportional systematic errors in all calculated results [13]. |
| Appropriate Chemical Indicator | A substance that changes color at or near the reaction's equivalence point [13]. | Selecting an indicator with a pKa that matches the endpoint pH of the specific titration is critical to avoid systematically misidentifying the endpoint volume [13]. |
| Absorption Tubes (e.g., with soda lime) | Tubes attached to reagent reservoirs to protect against atmospheric gases [13]. | Prevent systematic changes in titrant concentration (e.g., NaOH absorbing CO₂), which would lead to a progressive drift in results over time [13]. |
| Stable Buffer Solutions | Solutions with a known, stable pH. | Used to calibrate pH meters, correcting zero-offset and scale-factor errors in pH measurement, a common source of systematic error [4]. |

The Impact of Uncontrolled Error on Data Integrity and Decision Making

In analytical methods research, data integrity refers to the overall accuracy, consistency, and reliability of data throughout its lifecycle [15]. For researchers and drug development professionals, maintaining data integrity is crucial as it forms the foundation for critical decisions regarding compound selection, dosage formulation, and clinical trial design.

Uncontrolled errors, particularly systematic errors, introduce consistent, reproducible inaccuracies that compromise data integrity and can lead to misguided conclusions [16] [17]. Unlike random errors that affect precision, systematic errors affect accuracy by consistently shifting measurements in a particular direction, making them particularly dangerous in pharmaceutical research where they can remain undetected without proper validation protocols [17].

Understanding Systematic vs. Random Errors

Definitions and Characteristics

Systematic Errors (determinate errors) are consistent, reproducible inaccuracies that affect measurement accuracy [16]. These errors arise from flaws in the measurement system itself and cause measurements to consistently deviate from the true value in a specific direction. Examples include instrumental drift, calibration errors, and biased sampling methods [17].

Random Errors (indeterminate errors) are unpredictable fluctuations that affect measurement precision [16]. These errors arise from uncontrollable variables in the measurement process and cause scatter in replicate measurements without a consistent pattern. Examples include electronic noise, environmental fluctuations, and variations in sample preparation [17].

Impact on Data Integrity

The table below summarizes the key differences between these error types:

Table: Comparison of Systematic and Random Errors

| Characteristic | Systematic Error | Random Error |
|---|---|---|
| Effect on Results | Affects accuracy | Affects precision |
| Directionality | Consistent direction | Unpredictable |
| Reproducibility | Reproducible in magnitude and direction | Not reproducible |
| Detection Method | Comparison to reference standards | Statistical analysis of replicates |
| Reduction Strategy | Method improvement and calibration | Replication and averaging |

Troubleshooting Guide: Common Data Integrity Issues and Solutions

Instrumentation and Measurement Errors

Q: How can we identify and correct systematic errors in sinusoidal encoders used for angular position monitoring?

A: Sinusoidal encoders (SEs) used in applications such as position estimation of accelerator pedals or engine throttle valves often exhibit systematic errors including DC offset, amplitude mismatch, and phase imbalance [18]. These errors can be quantified using magnitude-to-time-to-digital converter circuits without requiring explicit analog-to-digital converters (ADCs) or look-up tables (LUTs) [18].

Experimental Protocol for Error Quantification:

- For static conditions, employ Direct-Digitizer DDI-1 with Method I or II to estimate offset voltages (α, β), amplitude mismatch (τ), and phase imbalance (ψ) [18]

- For dynamic conditions with continuous shaft rotation, implement DDI-2 with an intermediate signal conditioner (ISC) and modified direct-digitizer (MDD) [18]

- Apply compensation functions using the quantified error values to accurately determine the true shaft angle (θ) [18]
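The final compensation step can be sketched once the error parameters are known. The signal model below is an assumption made purely for illustration and is not taken from [18]: the sine channel carries offset α, and the cosine channel carries offset β, a relative amplitude mismatch τ, and a phase imbalance ψ. The function name is hypothetical.

```python
import math


def compensate_angle(u_sin, u_cos, alpha, beta, tau, psi):
    """Recover theta (radians) from distorted quadrature signals.

    Assumed model (illustrative only, not the model used in [18]):
        u_sin = alpha + sin(theta)
        u_cos = beta + (1 + tau) * cos(theta + psi)
    """
    s = u_sin - alpha                        # remove the sine-channel offset
    c_shifted = (u_cos - beta) / (1 + tau)   # remove offset and amplitude mismatch
    # cos(theta + psi) = cos(theta)cos(psi) - sin(theta)sin(psi), solved for cos(theta)
    c = (c_shifted + s * math.sin(psi)) / math.cos(psi)
    return math.atan2(s, c)


# Round-trip check with hypothetical error values.
theta = 0.7
alpha, beta, tau, psi = 0.02, -0.01, 0.05, 0.03
u_sin = alpha + math.sin(theta)
u_cos = beta + (1 + tau) * math.cos(theta + psi)
recovered = compensate_angle(u_sin, u_cos, alpha, beta, tau, psi)
print(f"true = {theta:.4f} rad, recovered = {recovered:.4f} rad")
```

With exact parameter values the angle is recovered to floating-point precision; in practice the residual error depends on how well α, β, τ, and ψ were estimated.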

Q: What are the primary sources of measurement error in analytical chemistry?

A: Measurement errors in analytical chemistry can be categorized as follows [16]:

Table: Categories of Measurement Errors

| Error Category | Examples | Impact |
|---|---|---|
| Sampling Errors | Non-representative sampling, contamination | Inaccurate representation of population |
| Method Errors | Incorrect calibration, flawed protocols | Systematic bias in results |
| Measurement Errors | Instrument tolerance, volumetric glassware limitations | Consistent inaccuracies within specified range |
| Personal Errors | Technique variation, transcription errors | Both systematic and random components |

Data Management and Integration Issues

Q: Our organization struggles with data integration across multiple legacy systems. How does this affect data integrity?

A: Lack of data integration creates data silos, inconsistencies, and duplications that significantly compromise data integrity [15] [19]. This is particularly problematic in pharmaceutical development where data must flow seamlessly between research, development, and manufacturing stages.

Troubleshooting Protocol:

- Conduct a comprehensive data audit to identify all sources and their compatibility issues [19]

- Clearly define integration requirements including data volume, transaction speed, and security needs [19]

- Implement robust middleware that supports all required protocols and features [19]

- Establish data validation checks pre- and post-integration to confirm completeness and accuracy [15]
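The validation step at the end of this protocol could be as simple as comparing records before and after the transfer. The sketch below assumes each record is a dictionary with a unique key field; the function name, field names, and sample data are hypothetical.

```python
def compare_datasets(source, target, key):
    """Compare two lists of record dicts by a unique key field.

    Returns keys missing from the target, unexpected extra keys in the
    target, and keys whose records differ in at least one field.
    """
    source_by_key = {row[key]: row for row in source}
    target_by_key = {row[key]: row for row in target}
    missing = sorted(source_by_key.keys() - target_by_key.keys())
    extra = sorted(target_by_key.keys() - source_by_key.keys())
    changed = sorted(k for k in source_by_key.keys() & target_by_key.keys()
                     if source_by_key[k] != target_by_key[k])
    return {"missing": missing, "extra": extra, "changed": changed}


# Hypothetical records before and after integration.
source_rows = [
    {"sample_id": "S-001", "assay_pct": 99.2},
    {"sample_id": "S-002", "assay_pct": 98.7},
    {"sample_id": "S-003", "assay_pct": 100.1},
]
target_rows = [
    {"sample_id": "S-001", "assay_pct": 99.2},
    {"sample_id": "S-002", "assay_pct": 98.1},  # value altered in transit
]
report = compare_datasets(source_rows, target_rows, key="sample_id")
print(report)
```

An empty report for all three categories indicates that the transfer was complete and that no compared field changed.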

Q: How does using multiple analytics tools impact data integrity?

A: Organizations using multiple analytics tools frequently encounter data integrity issues when these tools interpret and process data differently, leading to discrepancies in generated reports and insights [15]. This is especially problematic in drug development where consistency across studies is critical for regulatory submissions.

Prevention Strategy:

- Standardize data formats and structures before analysis [19]

- Implement a unified data preprocessing pipeline [19]

- Establish cross-tool validation checks to identify interpretation discrepancies [15]

- Clean and preprocess data after collection and aggregation to identify and repair low-quality data before analysis [19]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Reagents and Materials for Error Reduction in Analytical Research

| Reagent/Material | Function | Error Mitigation Purpose |
|---|---|---|
| Certified Reference Materials | Calibration standards | Minimize systematic method errors through proper instrument calibration |
| High-Purity Solvents | Sample preparation and dilution | Reduce interference-related errors in spectroscopic and chromatographic analysis |
| Stable Isotope-Labeled Analytes | Internal standards for mass spectrometry | Correct for matrix effects and ionization efficiency variations |
| Pharmaceutical Grade Excipients | Formulation development | Enable proper assessment of drug-excipient compatibility and stability |
| GMP-Compliant Cell Culture Media | In vitro testing | Ensure consistency and reproducibility in biological assays |

Impact on Decision Making in Drug Development

Consequences of Uncontrolled Errors

Uncontrolled systematic errors directly impact decision-making throughout the drug development pipeline [15] [20]:

- Inaccurate reports and analysis lead to misguided decisions about compound progression, potentially advancing ineffective compounds or abandoning promising ones [15]

- Financial losses occur due to faulty reporting, resource misallocation, and costs associated with rectifying data integrity issues [15]

- Regulatory compliance issues arise when organizations fail to maintain accurate and reliable data required by regulatory bodies, resulting in fines, penalties, and reputational damage [15]

- Loss of trust in data causes stakeholders to question data-driven insights, potentially reverting to intuition-based decisions [15]

Systematic Error Reduction Framework

Implementing a comprehensive framework for systematic error reduction involves multiple layers of control:

Table: Systematic Error Reduction Framework

| Control Layer | Specific Techniques | Expected Outcome |
|---|---|---|
| Preventive Controls | Proper instrument calibration, staff training, method validation | Reduce introduction of systematic errors |
| Detective Controls | Regular data audits, control charts, reference standard analysis | Identify systematic errors before decision impact |
| Corrective Controls | Root cause analysis, method optimization, data correction protocols | Rectify identified errors and prevent recurrence |
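Control charts, listed among the detective controls above, can be sketched in a few lines. The example assumes a set of in-control baseline results for a reference standard, uses limits at three standard deviations, and applies a common run rule (eight consecutive points on one side of the center line) as a sign of a systematic shift. The function names, run length, and data are illustrative assumptions.

```python
import statistics


def control_limits(baseline):
    """Center line and +/- 3 SD limits from in-control baseline results."""
    center = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return center - 3 * sd, center, center + 3 * sd


def flag_points(values, lower, center, upper, run_length=8):
    """Return indices beyond the limits and indices completing a one-sided run."""
    outside = [i for i, v in enumerate(values) if v < lower or v > upper]
    shifts = []
    run, last_side = 0, 0
    for i, v in enumerate(values):
        side = (v > center) - (v < center)  # +1 above, -1 below, 0 on the line
        if side == 0:
            run = 0
        elif side == last_side:
            run += 1
        else:
            run = 1
        last_side = side
        if run >= run_length:
            shifts.append(i)
    return outside, shifts


# Hypothetical reference-standard results (illustrative values only).
baseline = [100.0, 100.2, 99.8, 100.1, 99.9, 100.0, 100.1, 99.9]
lower, center, upper = control_limits(baseline)
new_results = [100.1] * 8 + [101.0]
outside, shifts = flag_points(new_results, lower, center, upper)
print(f"limits = ({lower:.2f}, {upper:.2f}), outside = {outside}, shifts = {shifts}")
```

A point outside the limits suggests a sudden problem, whereas a one-sided run within the limits is the more typical signature of a small systematic bias.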

Frequently Asked Questions (FAQs)

Q: What is the relationship between data integrity and data security? A: Data integrity and data security are related but distinct concepts. Data integrity ensures data is accurate, complete, and reliable, while data security focuses on protecting data from unauthorized access, theft, or damage through safeguards like encryption, access controls, and intrusion detection systems [19].

Q: How often should data audits be conducted to maintain data integrity? A: Regular data audits should be conducted according to a risk-based schedule, with higher-frequency audits for critical quality parameters in drug development. Each audit should have clear objectives, identify all data sources, map data flow, perform quality checks, and verify adequate security and compliance measures [19].

Q: What strategies can reduce human errors in data entry? A: Effective strategies include: (1) automating manual processes where possible; (2) implementing continuous employee training on data practices; (3) enhancing oversight and accountability; (4) adding built-in process checks; and (5) using least-privilege access controls for sensitive and error-prone operations [19].

Q: How do legacy systems contribute to data integrity issues? A: Legacy systems often lack necessary features, capabilities, or security measures to ensure data integrity. Additionally, integrating these systems with modern applications can be challenging, leading to data inconsistencies and inaccuracies. They also represent technical debt through the implied cost of added work required to use and maintain outdated technologies [15] [19].

Q: What are the most effective methods for quantifying systematic errors? A: Effective methods include: (1) using certified reference materials to identify measurement bias; (2) implementing standard addition methods to detect matrix effects; (3) conducting ruggedness testing to identify influential factors; and (4) utilizing specialized quantification techniques like magnitude-to-time-to-digital converters for specific instrument errors [18] [16].

FAQs on Instrument Drift and Calibration

What is instrument drift and why is it a problem? Drift is the change in an instrument’s reading or set point value over a period of time, causing it to deviate from a known standard [21]. In the context of reducing constant systematic error in analytical methods, unaddressed drift introduces a consistent, non-random inaccuracy into measurements, compromising the validity of research data and conclusions [21].

How often should I calibrate my instruments? Calibration frequency depends on several factors. A good practice is to follow a risk-based approach, considering the manufacturer’s recommendations, the criticality of the measurements, and the instrument's usage environment [22]. Key times for calibration include:

- At regular scheduled intervals (e.g., monthly, quarterly, or annually) [22].

- Before or after a critical measuring project where accurate data is essential for decision-making [22].

- After any event that might affect performance, such as exposure to shock, vibration, or extreme environmental conditions [22] [21].

- Whenever there is an indication that readings are not accurate or consistent [22].

What are the most common causes of instrument drift? The primary causes of drift are often related to the instrument's operating environment and usage [21]:

- Environmental Surroundings: Changes in temperature, humidity, or exposure to corrosive substances can affect performance. Even relocating an instrument to a different lab can cause drift [21].

- Aging and Over-use: Components can degrade over time or with intensive use, leading to a gradual loss of accuracy [21].

- Physical Shocks: Dropping an instrument or experiencing a sudden power outage (which can cause mechanical vibration) can knock it out of calibration [21].

- Human Error: Improper handling, incorrect use, or lack of maintenance can contribute to drift and measurement errors [21].

My instrument was just calibrated. Why are my results still showing a systematic bias? Calibration ensures the instrument itself is reading correctly against a traceable standard. A persistent bias after calibration suggests the systematic error may originate from your method or operational process. To reduce these errors, consider:

- Method Verification: Ensure your analytical method is validated and appropriate for the sample matrix.

- Operator Technique: Standardize procedures to minimize human error [23].

- Advanced Data Techniques: Emerging methods, such as complex-valued chemometrics in spectroscopy, which uses both the real and imaginary parts of the refractive index, have been shown to significantly reduce systematic errors caused by deviations from ideal models like Beer's Law [24].

Troubleshooting Guides

Guide 1: Systematic Troubleshooting for Unreliable Data

Follow this structured six-step process to efficiently find and fix problems [25].

Troubleshooting Workflow for Equipment Data Issues

Step 1: Problem Identification The initial "problem" is often a symptom. Identify the root cause by asking: Did the problem occur at startup? After maintenance? Focus on one major pain point at a time [25].

Step 2: Establish a Theory of Probable Cause Document all possible causes and rank them from highest to lowest probability. For data drift, consider environmental factors, recent maintenance, or operator changes [25].

Step 3: Establish a Plan of Action Create a documented plan to test your top probable causes. Ensure you have the right personnel and tools. Avoid using new or unverified spare parts during testing, as they can introduce new variables [25].

Step 4: Implement the Plan Critical: Make only one change at a time and test the results after each change. Making multiple changes simultaneously can cause unexpected results and make it impossible to identify the true fix, leading to wasted time and replaced parts [25].

Step 5: Verify Full Functionality Once the initial problem appears solved, test all aspects of the equipment's operation to ensure no new issues were introduced. If a new problem is found, you may need to reverse your steps and address it first [25].

Step 6: Document Findings, Actions, and Outcomes This creates a knowledge base for your lab. Accessible documentation significantly reduces future downtime and is crucial for maintaining the integrity of long-term research projects [25].

Guide 2: Troubleshooting Specific Drift Issues

| Symptom | Possible Cause | Corrective Action |

|---|---|---|

| Consistent positive or negative bias | Instrument out of calibration. | Perform full calibration using traceable reference standards [22]. |

| Gradual, increasing drift over time | Normal component aging, wear, or environmental exposure (e.g., temperature, humidity) [21]. | Schedule regular periodic calibration. Check and control the lab environment. |

| Sudden, large shift in readings | Physical shock (dropped instrument), power surge, or exposure to extreme conditions [22] [21]. | Inspect for physical damage. Calibrate immediately. Use voltage regulators and uninterruptible power supplies (UPS). |

| Erratic, non-repeatable readings | Loose connections, contaminated sensors, or human error in operation [21]. | Check and clean sensors. Verify operator training and use Standard Operating Procedures (SOPs) [23]. |
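The symptoms in the table above can be screened for numerically when a check standard is measured repeatedly over time. The following is a minimal sketch, not a validated procedure: the function name `summarize_check_standard` and the example readings are hypothetical. It separates a constant offset (mean bias), a time-dependent trend (drift slope from an ordinary least-squares line), and the leftover scatter around that trend.

```python
import math
from statistics import mean

def summarize_check_standard(times, readings, reference):
    """Summarize repeated readings of one check standard.

    Returns the mean bias, the least-squares drift slope (reading units per
    time unit), and the residual standard deviation around the fitted trend.
    """
    errors = [r - reference for r in readings]
    t_bar, e_bar = mean(times), mean(errors)
    sxx = sum((t - t_bar) ** 2 for t in times)
    sxy = sum((t - t_bar) * (e - e_bar) for t, e in zip(times, errors))
    slope = sxy / sxx
    intercept = e_bar - slope * t_bar
    ss_res = sum((e - (intercept + slope * t)) ** 2 for t, e in zip(times, errors))
    scatter = math.sqrt(ss_res / (len(times) - 2))
    return {"mean_bias": e_bar, "drift_slope": slope, "scatter": scatter}

# Hypothetical data: a 100.00-unit standard read once a week for six weeks.
weeks = [0, 1, 2, 3, 4, 5]
readings = [100.02, 100.05, 100.11, 100.14, 100.21, 100.25]
summary = summarize_check_standard(weeks, readings, reference=100.00)
print(summary)
```

A slope that is large relative to the scatter points toward gradual drift; a non-zero mean bias with a near-zero slope points toward a constant calibration offset. Any decision thresholds would need to come from the method's own acceptance criteria.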

Calibration and Maintenance Protocols

Standard Calibration Procedure

This protocol outlines the general steps for calibrating instrumentation to ensure measurement accuracy and traceability [22].

1. Preparation

- Gather all necessary tools and reference standards. Reference standards must have known and documented accuracy, traceable to national or international standards [22].

- Ensure the calibration environment is stable and controlled to minimize the influence of external factors like temperature and humidity [22].

2. Initial Testing

- Run the calibration test by comparing the instrument's readings against the reference standard.

- Perform the test multiple times to ensure repeatability of results.

- Record all initial measurements [22].

3. Adjustment

- If discrepancies are found between the instrument and the standard, adjust the instrument's settings to align its readings with the reference [22].

4. Verification

- After adjustment, re-test the instrument to confirm that the errors have been corrected and it now performs within the specified accuracy limits [22].

5. Documentation

- Maintain detailed records of the entire process, including the date, environmental conditions, reference standards used, pre- and post-adjustment results, and the personnel involved. This is essential for traceability and compliance [22].
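The initial-testing and verification steps above amount to comparing instrument readings with reference values before and after adjustment. The sketch below illustrates that comparison under stated assumptions: the tolerance, the calibration points, and the function name `verify_calibration` are hypothetical, and a real procedure would take its limits from the instrument specification.

```python
def verify_calibration(points, tolerance):
    """Compare instrument readings against reference values.

    points: list of (reference_value, instrument_reading) tuples.
    Returns the per-point errors and True if every error is within tolerance.
    """
    errors = [reading - reference for reference, reading in points]
    within_limits = all(abs(e) <= tolerance for e in errors)
    return errors, within_limits

TOLERANCE = 0.2  # assumed acceptance limit, in the instrument's units

# Hypothetical as-found (before adjustment) and as-left (after adjustment) data.
as_found = [(0.0, 0.4), (50.0, 50.6), (100.0, 100.9)]
as_left = [(0.0, 0.0), (50.0, 50.1), (100.0, 99.9)]

found_errors, found_ok = verify_calibration(as_found, TOLERANCE)
left_errors, left_ok = verify_calibration(as_left, TOLERANCE)
print("as found:", found_errors, "pass" if found_ok else "fail")
print("as left: ", left_errors, "pass" if left_ok else "fail")
```

Recording both result sets supports the documentation step, since the as-found errors show how far the instrument had moved since its last calibration.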

Recommended Calibration Frequencies

The following table summarizes general guidelines. Always consult manufacturer documentation and relevant regulatory requirements.

| Instrument Type | Measured Variable | Typical Calibration Interval | Key Considerations |

|---|---|---|---|

| Electrical | Voltage, Current, Resistance | 6-12 months | Frequency may increase with heavy usage or critical applications [22]. |

| Temperature | °C, °F (Thermocouples, RTDs) | 6-12 months | Critical for processes in pharmaceuticals and food processing [22]. |

| Pressure | psi, bar, kPa | 6-12 months | Essential for safety in aviation, oil & gas, and manufacturing [22]. |

| Mechanical | Mass, Force, Torque | 12-24 months | Varies with usage; check before high-precision engineering work [22]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

This table details key solutions and materials used in the management of instrument performance and data quality.

| Item | Function & Relevance to Systematic Error Reduction |

|---|---|

| Traceable Reference Standards | Physical artifacts or materials with certified values, traceable to national standards (e.g., NIST). They are the benchmark for calibration, providing the foundation for accurate and legally defensible measurements [22]. |

| Calibration Software | Automates calibration scheduling, data collection, and documentation. Ensures consistency, efficiency, and helps maintain compliance with quality standards like ISO/IEC 17025 [22]. |

| Complex-valued Chemometric Models | Advanced data processing methods that use complex numbers (e.g., incorporating both absorbance and phase information in spectroscopy). They can significantly reduce systematic errors from optical effects beyond traditional Beer-Lambert law approximation [24]. |

| Condition Monitoring Sensors | Sensors (e.g., for vibration, temperature) that provide real-time data on equipment health. They enable proactive maintenance and intervention before failure, prolonging equipment life and reliable operation [23]. |

| Standard Operating Procedures (SOPs) | Documented, step-by-step instructions for operation, maintenance, and calibration. Standardizes processes across users and over time, drastically reducing errors introduced by human inconsistency [23]. |

Strategies for Enhancing Long-Term Reliability and Data Integrity

1. Improve Data Quality and Metrics. Implement a centralized system (like a CMMS) to collect and manage equipment data. Use reliability metrics such as MTBF (Mean Time Between Failures) and MTTR (Mean Time To Repair) to quantitatively track performance and identify problematic assets [23].

2. Rank Assets by Criticality. Not all equipment requires the same level of scrutiny. Perform a Failure Mode, Effects, and Criticality Analysis (FMECA) to rank assets based on the severity of their failure's impact on your research. This allows you to focus resources on the most critical instruments [23].

3. Foster a Culture of Reliability. Educate all team members, from researchers to technicians, on the importance of equipment reliability and their role in maintaining it. A shared understanding promotes proactive error reporting and adherence to best practices [23].

4. Incorporate Uncertainty Quantification (UQ). Adopt UQ methodologies to quantitatively assess the uncertainty in your simulation and measurement results. This builds credibility and allows decision-makers to understand the risks and confidence levels associated with the data [26].
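The reliability metrics named in the first strategy can be computed directly from a maintenance log. This sketch uses the common definitions (MTBF as total operating time divided by the number of failures, MTTR as total repair time divided by the number of repairs); the log values and the function name `reliability_metrics` are hypothetical.

```python
def reliability_metrics(operating_hours, repair_hours):
    """Compute MTBF, MTTR, and the resulting steady-state availability.

    operating_hours: uptime preceding each failure, one entry per failure.
    repair_hours: duration of each repair, one entry per repair.
    """
    mtbf = sum(operating_hours) / len(operating_hours)
    mttr = sum(repair_hours) / len(repair_hours)
    availability = mtbf / (mtbf + mttr)
    return mtbf, mttr, availability

# Hypothetical log for one instrument: three failures and their repairs.
mtbf, mttr, availability = reliability_metrics(
    operating_hours=[400, 350, 450],
    repair_hours=[4, 6, 5],
)
print(f"MTBF = {mtbf:.1f} h, MTTR = {mttr:.1f} h, availability = {availability:.3f}")
```

Tracking these values per asset over time is one way to spot instruments whose reliability is declining.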

FAQs: Addressing Common Researcher Questions

1. How do systematic and random errors differ in their effect on my measurements? Systematic errors are consistent, reproducible inaccuracies that bias measurements in a specific direction due to problems with the instrument, experimental setup, or environment. They affect the accuracy of your results but not the precision. In contrast, random errors are unpredictable fluctuations caused by varying conditions or observations, and they affect the precision of your measurements. Systematic errors cannot be reduced by repeating experiments alone and require calibration or design changes, whereas random errors can often be minimized by increasing sample sizes and averaging repeated measurements [27].

2. What are some common sources of systematic error related to environmental factors? Common sources, environmental and otherwise, include:

- Temperature Fluctuations: Drift in instrument calibration over time due to temperature changes [27].

- Humidity Variations: Changes in relative humidity can affect material properties and electronic sensor readings [27] [28].

- Observer Bias: Consistently incorrect interpretation of equipment readings or subjective visual judgments [27].

- Calibration Errors: Instrument zeroing errors or improper setup [27].

- Electromagnetic Interference: External fields interfering with electronic equipment [27].

3. My lab is in a humid climate. How might this specifically impact my analytical results? High humidity can introduce systematic errors in several ways. It can cause certain chemicals to absorb moisture, altering their concentration or mass. For electronic instruments, high humidity can lead to corrosion, electrical leakage, or changes in sensor response, all of which bias measurements. Furthermore, in thermal comfort and human subject research, humidity interacts with temperature to influence physiological and cognitive responses, which must be accounted for in your experimental design and analysis [29] [30].

4. I suspect an interference is affecting my assay. What is the first step in troubleshooting? The most critical rule is to change only one thing at a time [31]. Begin by carefully replicating the problem while documenting all conditions. Then, alter one potential variable—such as a reagent batch, a sample preparation step, or an instrument setting—and observe the effect. Changing multiple factors simultaneously makes it impossible to identify the true root cause and prevents you from building knowledge for future troubleshooting [31].

Troubleshooting Guides

Guide 1: Identifying and Categorizing Measurement Errors

Use this guide to diagnose the nature of an error in your data.

| Error Characteristic | Systematic Error | Random Error |

|---|---|---|

| Definition | Consistent, reproducible inaccuracy | Unpredictable, stochastic variation |

| Impact on Data | Affects accuracy; creates a bias | Affects precision; creates scatter |

| Common Causes | Calibration drift, environmental factors, flawed methodology [27] | Electrical noise, operator variability, unpredictable sample changes [27] |

| How to Detect | Comparison to a certified reference material or a different, validated method [32] | Replication of measurements; statistical analysis of spread [27] |

| Primary Reduction Strategy | Calibration, improved experimental design, control of environmental factors [27] | Increasing sample size, averaging repeated measurements [27] |
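The contrast in the table can be demonstrated with a short simulation. The sketch below is illustrative only: the true value, bias, and noise level are made-up numbers, and `simulate_mean_error` is a hypothetical helper. It shows that averaging many readings shrinks the contribution of random error, while a constant bias passes through to the mean unchanged.

```python
import random
from statistics import mean

def simulate_mean_error(true_value, bias, noise_sd, n, seed=0):
    """Return (mean of n simulated readings) minus the true value.

    Each reading is true_value + bias + Gaussian noise with SD noise_sd.
    """
    rng = random.Random(seed)
    readings = [true_value + bias + rng.gauss(0, noise_sd) for _ in range(n)]
    return mean(readings) - true_value

# Random error only: the error of the mean shrinks as n grows.
error_random_only = simulate_mean_error(10.0, bias=0.0, noise_sd=1.0, n=10_000)

# Same noise plus a constant bias of 0.5: the mean stays off by about 0.5.
error_with_bias = simulate_mean_error(10.0, bias=0.5, noise_sd=1.0, n=10_000)

print(f"random only: {error_random_only:+.3f}")
print(f"with bias:   {error_with_bias:+.3f}")
```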

Guide 2: Mitigating Temperature and Humidity Effects

Environmental parameters are a frequent source of systematic error. Implement these strategies to reduce their impact.

| Environmental Factor | Potential Systematic Error | Mitigation Strategy | Experimental Example |

|---|---|---|---|

| Temperature Fluctuations | Calibration drift in sensors; altered reaction kinetics [27] [28] | Use temperature-controlled environments (e.g., incubators, water baths); allow instruments to acclimate; perform regular calibration [27] [33] | In potato storage research, a precise refrigeration system maintained temperature at 3°C ± 0.1°C to prevent spoilage and ensure consistent quality measurements [33]. |

| High/Low Humidity | Changes in chemical mass due to hygroscopy; impaired cognitive or physiological response in human studies [29] [30] | Use desiccants or humidifiers; store materials in controlled environments; utilize sealed sample chambers [33] | A climate chamber study on human thermal comfort used an ultrasonic humidifier to maintain specific relative humidity setpoints (e.g., 70% vs. 90%) to study its coupling effect with temperature [34]. |

| Dust & Particulates | Scattering or absorption of light in optical systems (e.g., spectrometers) [28] | Implement air filtration; use protective enclosures for optical paths; clean equipment regularly [28] | In infrared thermography, dust in industrial settings (e.g., near a blast furnace) is a major interference factor that requires compensation methods to obtain accurate temperature readings [28]. |
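One way to check whether an environmental factor is driving a bias is to log it alongside readings of a stable check sample and examine the correlation. The following sketch computes a Pearson correlation coefficient by hand; the temperature and reading values are hypothetical, and a strong correlation only suggests, rather than proves, a causal temperature effect.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical log: lab temperature (deg C) and readings of one check sample.
temperature = [20.0, 21.0, 22.0, 23.0, 24.0]
reading = [10.00, 10.03, 10.03, 10.07, 10.08]

r = pearson_r(temperature, reading)
print(f"r = {r:.3f}")
```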

Experimental Protocols for Error Reduction

Protocol 1: The Interference Experiment

This experiment estimates the constant systematic error caused by a specific substance (interferent) in your sample [32].

1. Purpose: To determine if a suspected interferent (e.g., bilirubin, hemolysis, lipids, preservatives) causes a measurable, consistent bias in your analytical method.

2. Materials:

- Test method instrumentation

- Patient specimen or sample pool containing the analyte of interest

- Solution of the suspected interfering material ("interferer")

- Solvent or diluting solution without the interferer

- High-quality precision pipettes

3. Methodology:

- Sample Preparation:

- Test Sample A: Add a small volume of the interferer solution to an aliquot of the patient specimen.

- Control Sample B: Add the same small volume of pure solvent/diluent to another aliquot of the same patient specimen.

- It is critical that the volumes added are identical and small (e.g., <10% of total volume) to minimize dilution effects [32].

- Data Collection:

- Analyze both Sample A and Sample B in duplicate (or more) using the method under investigation.

- Repeat this paired-sample process for several different patient specimens to strengthen the data.

- Data Calculation and Analysis:

- Tabulate the results for all pairs of samples.

- Calculate the average of the replicates for each sample.

- Calculate the difference for each paired sample (Average of A - Average of B).

- Calculate the average difference across all specimens. This represents the systematic error caused by the interferer [32].

- Acceptability Judgment:

- Compare the observed average systematic error to your predefined allowable error (e.g., based on clinical or regulatory guidelines). If the observed error is larger, the interference is unacceptable, and the method must be modified or the interferent removed [32].
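The calculation steps above can be sketched as follows. The replicate values, the allowable error, and the function name `interference_bias` are hypothetical and serve only to illustrate the arithmetic: replicate means, paired differences (A minus B), and the average difference compared with a predefined limit.

```python
from statistics import mean

def interference_bias(pairs):
    """Estimate interference from paired samples.

    pairs: list of (replicates_A, replicates_B), one tuple per specimen, where
    A contains the interferent and B contains an equal volume of diluent.
    Returns the per-specimen differences and their average.
    """
    differences = [mean(a) - mean(b) for a, b in pairs]
    return differences, mean(differences)

# Hypothetical duplicate results for three patient specimens.
specimens = [
    ([5.4, 5.6], [5.0, 5.0]),
    ([8.3, 8.5], [8.0, 8.0]),
    ([12.6, 12.4], [12.0, 12.2]),
]
ALLOWABLE_ERROR = 0.3  # assumed limit, in the same units as the results

differences, average_bias = interference_bias(specimens)
acceptable = abs(average_bias) <= ALLOWABLE_ERROR
print(f"average bias = {average_bias:.2f}, acceptable = {acceptable}")
```

In this made-up data set the average bias exceeds the assumed limit, so the interference would be judged unacceptable.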

Protocol 2: The Recovery Experiment

This experiment estimates proportional systematic error, which increases as the analyte concentration increases. It is often used when a comparison method is not available [32].

1. Purpose: To determine if the method accurately recovers a known amount of analyte added to a sample, thereby testing for matrix effects or calibration issues.

2. Materials:

- Test method instrumentation

- Patient specimen with a known baseline level of the analyte

- High-purity standard solution of the sought-for analyte

- High-quality, accurately calibrated pipettes

3. Methodology:

- Sample Preparation:

- Test Sample A: Add a precise, small volume of a high-concentration standard solution to a known volume of the patient specimen.

- Base Sample B: Add the same volume of a suitable diluent to another aliquot of the same patient specimen.

- The amount of analyte added should be significant, ideally raising the concentration to a critical decision level [32].

- Data Collection:

- Analyze both Sample A and Sample B using the method under investigation.

- Data Calculation and Analysis:

- Calculate the concentration of analyte added: Concentration_added = (Volume_standard × Concentration_standard) / Total_volume.

- Calculate the concentration recovered: Concentration_recovered = [Sample A] - [Sample B].

- Calculate the percent recovery: % Recovery = (Concentration_recovered / Concentration_added) × 100.

- Interpretation:

- A recovery of 100% indicates no proportional systematic error.

- Consistent deviations from 100% indicate a proportional bias, often requiring recalibration or investigation into the method's specificity [32].
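The recovery calculation described in this protocol can be worked through in a few lines. The volumes, concentrations, and measured results below are hypothetical, as is the function name `percent_recovery`; the formulas are the three given in the protocol.

```python
def percent_recovery(sample_a, sample_b, vol_standard, conc_standard, total_volume):
    """Percent recovery of analyte added to a specimen.

    sample_a: measured result for the spiked sample (specimen + standard).
    sample_b: measured result for the base sample (specimen + diluent).
    vol_standard, total_volume: volumes in the same units.
    conc_standard: concentration of the standard solution.
    """
    conc_added = vol_standard * conc_standard / total_volume
    conc_recovered = sample_a - sample_b
    return 100.0 * conc_recovered / conc_added

# Hypothetical example: 0.1 mL of a 1000 mg/dL standard added to 0.9 mL of
# specimen (total 1.0 mL), so the concentration added is 100 mg/dL.
recovery = percent_recovery(
    sample_a=195.0, sample_b=100.0,
    vol_standard=0.1, conc_standard=1000.0, total_volume=1.0,
)
print(f"recovery = {recovery:.1f}%")
```

Here 95 mg/dL of the 100 mg/dL added is recovered, which would indicate a proportional bias of about -5% if it were reproduced across specimens.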

Visualized Workflows

[Figure: Systematic Error Investigation Pathway]

[Figure: Recovery Experiment Workflow]

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function in Error Reduction |

|---|---|

| Certified Reference Materials (CRMs) | Provides a ground truth with a known, certified value for calibrating instruments and validating method accuracy, directly combating systematic error [32]. |

| High-Purity Solvents & Reagents | Minimizes the introduction of contaminants that could cause chemical interference or side reactions, reducing both systematic and random noise. |

| Precision Pipettes & Volumetric Glassware | Ensures accurate and precise liquid handling, which is critical for both interference and recovery experiments to avoid volume-based errors [32]. |

| Environmental Monitoring System | Logs temperature and humidity in real-time, allowing researchers to correlate environmental fluctuations with data variability and identify systematic drift [33]. |

| Standardized Interferent Solutions | Prepared solutions of common interferents (e.g., bilirubin, Intralipid for lipids) used in controlled interference experiments to quantify their specific effect on an assay [32]. |

FAQs: Understanding and Managing Errors

Q1: What is the difference between a systematic error and a random error in analytical measurements?

A: Systematic errors (determinate errors) are reproducible inaccuracies consistently biased in one direction. They can be identified and minimized through corrective actions like calibration and running blanks [5] [35]. Random errors (indeterminate errors) are unpredictable fluctuations around the true value, caused by uncontrollable variables. They cannot be eliminated, but their impact can be reduced by increasing the number of observations [5].

Q2: What are common types of human failure and how can they be managed?

A: Human failures in the laboratory can be categorized as follows [36]:

- Slips and Lapses: Unintended errors during familiar tasks (e.g., pressing the wrong button, forgetting a step). These are best managed by improving equipment design and creating error-tolerant systems.

- Mistakes: Errors of judgment where the wrong action is taken believing it to be right. These are addressed through robust training and clear, validated procedures.

- Violations: Deliberate deviations from rules, often to improve efficiency. Managing these involves reviewing procedure practicality, explaining their rationale, and involving staff in procedure design.

Q3: A systematic error was identified in our research data after participant results were reported. What steps should we take?

A: A real-world case from a long-term clinical study provides a robust framework [37]. The key steps are:

- Immediate Investigation: Identify the root cause and scope of the error.

- Reanalysis and Reclassification: Re-analyze all affected data to determine the correct values.

- Develop a Communication Plan: Implement a coordinated plan to inform all stakeholders, including participants and their healthcare providers, particularly if the error could have led to inappropriate treatment decisions.

- Review Processes: Strengthen quality control measures to prevent recurrence.

Q4: How can we minimize personal errors during sample preparation and analysis?

A: Personal errors cannot be eliminated entirely, but they can be reduced through [35]:

- Proper Training: Ensuring all personnel are thoroughly trained on procedures.

- Automation: Using automated analysis systems to reduce manual handling.

- Specific Practices: Careful weighing with calibrated balances, ensuring complete drying of samples, and performing quantitative transfers correctly to avoid material loss.

Troubleshooting Guides

Guide 1: Troubleshooting Systematic (Determinate) Errors

Systematic errors skew results in one direction and are linked to the method, instrumentation, or operator.

Table: Systematic Error Troubleshooting Guide

| Error Symptom | Potential Cause | Corrective Action |

|---|---|---|

| Consistently high or low recovery rates | Faulty instrument calibration [5] [35] | Calibrate the instrument using certified reference standards. Establish a regular calibration schedule. |

| Contamination or reagent interference | Impurities in reagents [5] | Use high-purity reagents. Run blank determinations to identify and subtract background interference [5]. |

| Consistent bias in results | Flawed analytical method [5] | Validate the method before adoption. Perform control determination with a standard substance under identical experimental conditions [5]. |

| Incomplete reaction or sampling error | Errors in methodology [5] | Review sampling procedures for correctness and ensure reaction completeness. |
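The blank determination mentioned in the table can be illustrated with a simple correction. This is a minimal sketch assuming the background contribution is additive and constant across samples; the absorbance-like values and the function name `blank_correct` are hypothetical.

```python
from statistics import mean

def blank_correct(sample_signals, blank_signals):
    """Subtract the mean blank signal from each sample signal."""
    blank = mean(blank_signals)
    return [signal - blank for signal in sample_signals]

# Hypothetical signals: three reagent blanks and two samples.
blanks = [0.010, 0.012, 0.011]
samples = [0.511, 0.261]

corrected = blank_correct(samples, blanks)
print(corrected)
```

If the blank signal varies widely between replicates, the variation itself is worth investigating before relying on a subtraction.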

Guide 2: Troubleshooting Human Errors

Human error stems from the operator and can be unintentional (slips, mistakes) or intentional (violations) [36].

Table: Human Error Troubleshooting Guide

| Error Symptom | Potential Cause | Corrective Action |

|---|---|---|

| Skipped steps in a procedure (Error of Omission) [36] [38] | Lapse in memory or distraction [36]. | Simplify procedures; use checklists; reduce environmental distractions. |

| Performing a step incorrectly (Error of Commission) [36] [38] | Lack of knowledge (mistake) or using the wrong technique [36]. | Enhance training with hands-on sessions; improve procedure clarity; implement peer reviews. |

| Taking "shortcuts" around safety or quality procedures | Unworkable rules or peer pressure leading to violations [36]. | Involve operators in procedure design to ensure practicality; explain the rationale behind critical rules. |

| Parallax errors in volumetric readings or transcription mistakes | Personal bias or lack of attention [35]. | Implement automated data capture where possible; re-train on fundamental techniques. |

Experimental Protocols for Error Assessment

Protocol 1: Quality Control and Reanalysis Plan for Identifying Systematic Errors

Purpose: To provide a detailed methodology for identifying, quantifying, and mitigating a discovered systematic error, ensuring data integrity and participant safety [37].

Application: This protocol is essential when a systematic error is suspected or identified in a dataset, especially in studies where results inform clinical or safety-critical decisions.

Methodology:

- Error Identification: Trigger a review when an individual result is incongruent with clinical or expected historical data [37].

- Scope Definition: Define the specific parameters and dataset affected (e.g., all hip scans from "Scanner A" with a manual adjustment step) [37].

- Prioritized Reanalysis:

- First, reanalyze data from the subgroup at highest risk due to the error (e.g., all scans originally classified in the "osteoporosis" category) [37].

- Subsequently, conduct a comprehensive reanalysis of the entire affected dataset.

- Data Reclassification: Compare original and reanalyzed results to determine the correct classification for each data point [37].

- Mitigation and Communication: Develop and execute a structured communication plan to inform all relevant parties (e.g., participants, healthcare providers, ethics board) of the error and corrected results [37].

Protocol 2: Human Factors Error Assessment for Procedural Training

Purpose: To categorize and quantify human errors during a procedural task to identify specific training needs and performance gaps [38].

Application: Used in simulated or real training environments to assess competency in surgical, laboratory, or other complex manual procedures.

Methodology:

- Task Performance: Participants perform the defined procedure (e.g., a simulated laparoscopic repair) within a set time limit [38].

- Video Recording: Record the procedure from multiple angles to capture all actions [38].

- Post-hoc Error Coding: Trained analysts review the video recordings to identify and classify every error based on a predefined taxonomy [38]:

- By Type: Omission (skipping a step) vs. Commission (executing a step incorrectly).

- By Level: Cognitive (errors in information, diagnosis, strategy) vs. Technical (errors in action, procedure, mechanics).

- Data Analysis: Use software (e.g., Multimedia Video Task Analysis) to code error timing, duration, and context. Analyze the frequency and distribution of error types to pinpoint weaknesses in knowledge or technical skill [38].

- Feedback and Training: Provide structured feedback based on the error analysis to guide targeted training and re-assessment.
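The frequency analysis in the data-analysis step can be sketched with a simple tally. The coded errors below are invented for illustration, and this is not the output format of any particular video-analysis software; it only shows how errors coded by type and level might be counted.

```python
from collections import Counter

# Hypothetical coded errors from one session: (type, level) per error.
coded_errors = [
    ("omission", "cognitive"),
    ("commission", "technical"),
    ("commission", "technical"),
    ("omission", "technical"),
    ("commission", "cognitive"),
    ("commission", "technical"),
]

by_type = Counter(error_type for error_type, _ in coded_errors)
by_level = Counter(level for _, level in coded_errors)
by_type_and_level = Counter(coded_errors)

print(by_type)
print(by_level)
print(by_type_and_level.most_common(1))
```

In this made-up session, technical commission errors dominate, which would point feedback toward hands-on technique rather than procedural knowledge.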

Visual Workflows

Diagram 1: Systematic Error Management Workflow

Diagram 2: Human Error Management Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials for Error Reduction in Analytical Research

| Item | Function & Role in Error Reduction |

|---|---|

| Certified Reference Materials | High-purity standards with certified properties. Used for instrument calibration and method validation to identify and correct for systematic instrumental and reagent errors [35]. |

| Control Samples | Samples with known characteristics analyzed alongside test samples. They monitor analytical process stability and help detect the introduction of systematic errors over time [5]. |

| Blank Samples | A sample without the analyte of interest. Used to identify, quantify, and correct for bias caused by background interference or contamination from reagents or the environment [5]. |

| Calibrated Equipment | Instruments and volumetric glassware that have been adjusted against a reference standard. Regular calibration is a primary defense against systematic instrumental errors [5] [35]. |

| Standardized Operating Procedures (SOPs) | Documented, validated step-by-step instructions. They minimize personal errors and mistakes by ensuring consistency and providing the correct strategy for all operators [36]. |

Proactive Error Reduction: From Calibration to Advanced Normalization Techniques

Troubleshooting Guides

Common Calibration Issues and Solutions

| Problem | Possible Causes | Recommended Solutions | Supporting Data |

|---|---|---|---|

| Inaccurate single-point calibration | Non-linear response; Calibration curve does not pass through origin [39] [40] | Perform regression analysis on multi-point data to test if the intercept significantly differs from zero [39]. Use multi-point calibration if the 95% confidence interval for the intercept does not contain zero [39]. | Statistical Test for Single-Point Feasibility [39]: Calculate the 95% confidence interval for the y-intercept. If the interval contains zero, single-point may be suitable. |

| Analyzer drift over time | Sensor aging; Temperature fluctuations; Exposure to high-moisture or corrosive gases [41] | Compare current calibration values against historical data; Replace aging components; Set drift thresholds in the data system for early alerts [41]. | Drift Monitoring [41]: Implement monthly analysis of drift trends to identify issues before data becomes invalid. |

| Inaccurate calibration gas delivery | Expired cylinders; Leaks in gas lines; Contaminated gas; Incorrect flow rates [41] | Use NIST-traceable gases within expiration; Perform leak checks; Verify gas flow rates (typically 1-2 L/min) with a calibrated flow meter [41]. | Gas Delivery Verification [41]: Keep a flow calibrator on-site for independent verification when anomalies are suspected. |

| Matrix effects causing bias | Difference in matrix between calibrators and patient samples; Ion suppression/enhancement in MS [42] [40] | Use matrix-matched calibrators where possible; Employ stable isotope-labeled internal standards (SIL-IS) for each target analyte [42]. | Matrix Effect Mitigation [42]: Using SIL-IS helps compensate for matrix effects and recovery losses during extraction. |

| Poor curve fit at low concentrations | Improper weighting factor for heteroscedastic data [42] [43] | Use a weighted regression model (e.g., 1/x or 1/x²) to normalize error across the concentration range, especially critical for wide dynamic ranges [43]. | Weighting Factor Impact [43]: A 1/x² weighting most correctly approximates variance at the low end of the curve, normalizing error across the range. |

Internal Standards and Curve Fitting

| Problem | Possible Causes | Recommended Solutions | Supporting Data |

|---|---|---|---|

| Inconsistent internal standard performance | Non-optimal IS concentration; Cross-signal contribution; Variable matrix effects [44] [42] | Establish optimal IS concentration during validation; Ensure no cross-signal between analyte and IS; Use stable isotope-labeled IS (SIL-IS) that mimics the analyte [44] [42]. | SIL-IS Criteria [44]: The relative response (analyte/SIL-IS ratio) must not be concentration-dependent and should be constant between batches. |

| High bias at upper calibration range | Incorrect regression model; Saturation of detector response; Improper calibrator spacing [42] [40] | Visually inspect the calibration plot for non-linearity; Ensure an adequate number of calibrators (e.g., 6-10) to map detector response [42]. | Multi-point Advantage [40]: A multi-point standardization minimizes the effect of a determinate error in one standard and does not assume that the sensitivity (response per unit concentration) is independent of concentration. |

| Calibration curve fails acceptance criteria | Unrecognized heteroscedasticity; Use of R² alone for linearity assessment; Incorrect regression model [42] | Assess linearity with experimental data and appropriate statistics; Investigate heteroscedasticity and apply correct weighting [42]. | Linearity Assessment [42]: The correlation coefficient (r) or determination coefficient (R²) should not be the sole measure for assessing linearity. |

Frequently Asked Questions (FAQs)

General Calibration Strategy

Q1: When is it scientifically justified to use a single-point calibration instead of a multi-point curve?

A single-point calibration is justified only when a thorough multi-point evaluation confirms that the calibration curve is linear and the y-intercept does not differ significantly from zero across the entire working range [39] [40]. This must be validated for each specific method and matrix. For example, a study on 5-fluorouracil (5-FU) quantification demonstrated that a single-point calibration at 0.5 mg/L produced results clinically comparable to a multi-point method, but this was only after rigorous validation confirmed a linear relationship and no significant intercept [44].
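The intercept check described in this answer can be sketched without specialist software. The example below fits an ordinary least-squares line and computes a 95% confidence interval for the intercept. The calibration data are hypothetical, the function name `intercept_ci` is an assumption, and the hard-coded table holds standard two-sided 95% Student's t critical values for 1 to 10 degrees of freedom, so the sketch only covers 3 to 12 calibrators.

```python
import math

# Two-sided 95% critical values of Student's t, indexed by degrees of freedom.
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228}

def intercept_ci(conc, resp):
    """Return (intercept, lower, upper) for the 95% CI of the OLS intercept."""
    n = len(conc)
    df = n - 2
    x_bar = sum(conc) / n
    y_bar = sum(resp) / n
    sxx = sum((x - x_bar) ** 2 for x in conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, resp))
    s = math.sqrt(ss_res / df)                      # residual standard deviation
    se_intercept = s * math.sqrt(1 / n + x_bar ** 2 / sxx)
    half_width = T_CRIT_95[df] * se_intercept
    return intercept, intercept - half_width, intercept + half_width

# Hypothetical six-point calibration (concentration vs. instrument response).
conc = [1, 2, 4, 6, 8, 10]
resp = [10.2, 19.8, 40.5, 59.6, 80.3, 99.9]

intercept, lower, upper = intercept_ci(conc, resp)
contains_zero = lower <= 0.0 <= upper
print(f"intercept = {intercept:.3f}, 95% CI = ({lower:.3f}, {upper:.3f})")
print("single-point calibration may be suitable" if contains_zero
      else "use multi-point calibration")
```

An interval that contains zero only indicates that a non-zero intercept was not detected with these data; linearity across the working range still has to be shown separately.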

Q2: What are the key advantages of using stable isotope-labeled internal standards (SIL-IS)?

SIL-IS are considered the gold standard because they most closely mimic the target analyte's chemical and physical behavior. They compensate for matrix effects (ion suppression/enhancement), losses during sample preparation, and variations in instrument response [42]. The effectiveness relies on the SIL-IS having a coincident retention time with the analyte and behaving identically during extraction and ionization [42].

Q3: How often should mass spectrometry instruments be calibrated?

The frequency depends on the instrument type and stability of the laboratory environment. For accurate mass measurements, time-of-flight (TOF) mass spectrometers may require daily calibration checks, while quadrupole mass spectrometers are typically calibrated a few times per year [45]. Consistent laboratory conditions (temperature, humidity) can extend the time between calibrations, but instruments should be checked regularly, especially if masses drift from expected values [45].
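A common way to express how far a measured mass has drifted from its expected value is the relative mass error in parts per million (ppm). The sketch below applies that formula to a hypothetical calibrant ion; the m/z values and the 5 ppm action limit are assumptions for illustration, not values from the cited source.

```python
def mass_error_ppm(measured_mz, theoretical_mz):
    """Relative mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6

PPM_LIMIT = 5.0  # assumed action limit; set according to the method's needs

# Hypothetical calibrant check.
error_ppm = mass_error_ppm(measured_mz=500.0040, theoretical_mz=500.0000)
needs_recalibration = abs(error_ppm) > PPM_LIMIT
print(f"mass error = {error_ppm:.1f} ppm, recalibrate = {needs_recalibration}")
```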

Technical Implementation

Q4: My calibration curve is linear but my quality controls (QCs) are inaccurate. What could be wrong?

This often indicates a matrix effect issue. The calibrators and QCs may be prepared in different matrices, or the patient sample matrix may differ from both. The solution is to ensure commutability by using matrix-matched calibrators and QCs, and to employ a well-characterized SIL-IS to correct for any residual matrix effects [42]. Spike-and-recovery experiments can help diagnose this problem [42].

Q5: What is the best way to handle data that spans a wide concentration range (e.g., 1–10,000 ng/mL)?

LC-MS/MS data is typically heteroscedastic, meaning the variance is not constant across the range. Using ordinary least squares (unweighted) regression can introduce significant bias. Applying a weighting factor (such as 1/x or 1/x²) is crucial to normalize the error across the concentration range and provide an accurate fit, particularly at the lower end near the limit of quantification (LOQ) [42] [43].
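The effect of weighting can be seen by fitting the same calibration data with and without 1/x² weights. This is a minimal sketch using the closed-form weighted least-squares solution; the calibration data are hypothetical (roughly constant relative error across 1 to 10,000 ng/mL), and `weighted_linear_fit` is an illustrative helper rather than a function from any particular package.

```python
def weighted_linear_fit(x, y, weights):
    """Fit y = a + b*x by minimizing sum(w_i * (y_i - a - b*x_i)**2).

    Returns (intercept a, slope b).
    """
    sw = sum(weights)
    x_w = sum(w * xi for w, xi in zip(weights, x)) / sw
    y_w = sum(w * yi for w, yi in zip(weights, y)) / sw
    sxx = sum(w * (xi - x_w) ** 2 for w, xi in zip(weights, x))
    sxy = sum(w * (xi - x_w) * (yi - y_w) for w, xi, yi in zip(weights, x, y))
    slope = sxy / sxx
    return y_w - slope * x_w, slope

# Hypothetical calibrators (ng/mL) and responses with a few percent error each.
conc = [1, 10, 100, 1000, 10000]
resp = [2.1, 19.6, 204.0, 1980.0, 20600.0]

a_ols, b_ols = weighted_linear_fit(conc, resp, [1.0] * len(conc))
a_wls, b_wls = weighted_linear_fit(conc, resp, [1.0 / c ** 2 for c in conc])

# Back-calculate the lowest calibrator (nominal 1 ng/mL) with each model.
back_ols = (resp[0] - a_ols) / b_ols
back_wls = (resp[0] - a_wls) / b_wls
print(f"unweighted: {back_ols:.2f} ng/mL, 1/x^2 weighted: {back_wls:.2f} ng/mL")
```

In the unweighted fit the highest calibrator dominates the residuals, so a small relative error at 10,000 ng/mL shifts the intercept enough to distort results near the LOQ. The weighted fit keeps the back-calculated low calibrator close to its nominal value.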

Q6: Are there efficient alternatives to running a full multi-point calibration curve with every batch?

Yes, several "reduced" calibration strategies can improve efficiency. These include:

- Single-point calibration: As described in Q1, if validated [44] [46].

- Response Factor (RF) approaches: Using a predetermined response factor from the analyte/SIL-IS ratio, which can be tracked over time (historical RF) [44] [46].

- Staggered calibration: Running a single calibration curve where calibrants are scattered at the beginning and end of the sample sequence, which can save time compared to running two full curves [43].

These strategies can conserve resources and enable random instrument access without compromising data quality when properly implemented [44] [46].
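The single-point and response-factor approaches share the same arithmetic when the response is linear through the origin. The sketch below shows that calculation under that assumption; the peak-area ratios are hypothetical, and the helper names are illustrative. The 0.5 mg/L calibrator level is taken from the 5-FU example discussed in Q1.

```python
def response_factor(ratio_calibrator, conc_calibrator):
    """Response factor: analyte/SIL-IS area ratio per unit concentration."""
    return ratio_calibrator / conc_calibrator

def quantify(ratio_sample, rf):
    """Concentration from a sample's analyte/SIL-IS ratio, assuming a linear
    response that passes through the origin."""
    return ratio_sample / rf

# Hypothetical area ratios; the calibrator is at 0.5 mg/L.
rf = response_factor(ratio_calibrator=0.25, conc_calibrator=0.5)
sample_conc = quantify(ratio_sample=0.40, rf=rf)
print(f"RF = {rf:.2f} per mg/L, sample = {sample_conc:.2f} mg/L")
```

A historical response factor would replace the per-batch `rf` with a value tracked over earlier runs, which is only defensible if the factor has been shown to be stable between batches.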

Experimental Protocols

Protocol 1: Validating a Single-Point Calibration Method

This protocol is adapted from a study quantifying 5-fluorouracil (5-FU) in human plasma using LC-MS/MS [44].

1. Objective: To validate that a single-point calibration method produces results analytically and clinically comparable to a fully validated multi-point calibration method.

2. Materials and Reagents:

- Analyte: 5-FU (≥99% chemical purity)

- Internal Standard: 5-FU-¹³C,¹⁵N₂ (SIL-IS, 99.6% isotopic purity)

- Matrix: Drug-free human plasma

- LC-MS/MS System: Shimadzu Prominence UFLC coupled to Shimadzu 8060 tandem mass spectrometer

- Chromatography Column: Phenomenex Luna Omega Polar C18 (50 × 3.0 mm, 3 µm)

3. Methodology:

- Multi-point Method Development: Develop and validate an LC-MS/MS method for 5-FU over the concentration range of 0.05–50 mg/L using a multi-point calibration curve (e.g., 6-10 concentrations) per established guidelines [44].

- Single-point Comparison: Quantify 5-FU in patient plasma samples using both the multi-point method and the single-point method (using a single calibrator at 0.5 mg/L).

- Statistical Comparison: Compare the results from the two methods using:

- Bland-Altman bias plot to assess the mean difference (bias) between methods.

- Passing-Bablok regression to evaluate the slope and intercept, where a slope of 1.0 and an intercept of 0 indicate perfect agreement [44].

- Clinical Impact Assessment: For drugs like 5-FU where dose adjustments are based on the calculated Area Under the Curve (AUC), assess whether the calibration method (single vs. multi-point) impacts the final dose adjustment decision [44].

4. Acceptance Criteria: The single-point method is considered valid if the mean difference between methods is clinically insignificant (e.g., -1.87% as in the 5-FU study), the slope from regression is close to 1.0 (e.g., 1.002), and there is no impact on clinical decisions [44].
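The Bland-Altman part of the statistical comparison can be sketched as follows. The paired concentrations are hypothetical, and the sketch assumes the difference is expressed as a percentage of the mean of the two methods, which may differ from the convention used in the cited study. Passing-Bablok regression is not shown here.

```python
from statistics import mean, stdev


def bland_altman_percent(reference, test):
    """Mean percent difference (bias) and 95% limits of agreement."""
    diffs = [100.0 * (t - r) / ((t + r) / 2.0)
             for r, t in zip(reference, test)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical paired results (mg/L) for the same patient samples.
multi_point = [0.21, 0.48, 1.05, 2.10, 4.95, 9.80]
single_point = [0.20, 0.47, 1.04, 2.08, 4.90, 9.60]

bias, lower, upper = bland_altman_percent(multi_point, single_point)
print(f"Mean difference: {bias:.2f}%")
print(f"95% limits of agreement: {lower:.2f}% to {upper:.2f}%")
```

Whether a given bias is acceptable remains a clinical judgment; the calculation only quantifies it.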

Protocol 2: Statistical Testing for Single-Point Calibration Suitability

This protocol provides a step-by-step method to determine if a single-point calibration is appropriate for a given assay [39].

1. Objective: To determine if the calibration curve's y-intercept is statistically indistinguishable from zero, which is a key requirement for single-point calibration.

2. Procedure:

- Prepare and analyze at least 5-6 calibration standards across the desired working range.

- Using software such as Excel's Analysis ToolPak, perform a linear regression analysis on the data (instrument response vs. concentration).

- From the regression output, locate the "Intercept" row and its associated "Lower 95%" and "Upper 95%" confidence interval values.

3. Interpretation:

- If the 95% confidence interval for the intercept INCLUDES zero, there is no significant statistical evidence that the intercept is different from zero. A single-point calibration that forces the line through the origin may be justified.

- If the 95% confidence interval for the intercept DOES NOT INCLUDE zero, the intercept is significantly different from zero. A multi-point calibration must be used to avoid systematic bias [39].
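The same intercept check can be reproduced outside a spreadsheet. The sketch below is a minimal ordinary least-squares implementation with a small hard-coded table of two-sided 95% Student's t critical values, so it only covers 3 to 12 calibration standards. The function names and example data are hypothetical.

```python
import math

# Two-sided 95% critical values of Student's t, keyed by degrees of freedom.
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228}


def intercept_confidence_interval(x, y):
    """95% confidence interval for the intercept of an unweighted linear fit."""
    n = len(x)
    df = n - 2
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residual_ss = sum((yi - (intercept + slope * xi)) ** 2
                      for xi, yi in zip(x, y))
    residual_sd = math.sqrt(residual_ss / df)
    se_intercept = residual_sd * math.sqrt(1.0 / n + x_bar ** 2 / sxx)
    margin = T_CRIT_95[df] * se_intercept
    return intercept - margin, intercept + margin


def single_point_may_be_justified(x, y):
    """True when the 95% CI of the intercept includes zero."""
    lower, upper = intercept_confidence_interval(x, y)
    return lower <= 0.0 <= upper


# Hypothetical six-point calibration (concentration vs. response).
conc = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
response = [2.1, 3.9, 6.0, 8.1, 9.9, 12.0]
print(intercept_confidence_interval(conc, response))
print(single_point_may_be_justified(conc, response))
```

A confidence interval that includes zero is a lack of evidence against a zero intercept, not proof of one, so a wide interval from noisy data deserves the same caution as a failed test.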

Workflow and Decision Diagrams

Calibration Strategy Decision Flow

Research Reagent Solutions

| Essential Material | Function in Calibration | Key Considerations |

|---|---|---|

| Stable Isotope-Labeled Internal Standard (SIL-IS) | Compensates for matrix effects, extraction losses, and instrument variability by behaving identically to the analyte but distinguished by mass [42]. | Must be chemically pure and have co-eluting retention time with the analyte. The level of unlabeled analyte in the IS must be undetectable [44] [42]. |

| Matrix-Matched Calibrators | Calibration standards prepared in a matrix that closely resembles the patient sample to preserve the signal-to-concentration relationship and minimize matrix-related bias [42]. | For endogenous analytes, a "proxy" blank matrix (e.g., charcoal-stripped serum) is used. Commutability between the calibrator matrix and patient matrix should be verified [42]. |

| NIST-Traceable Calibration Gases | Provide an absolute reference traceable to national standards for calibrating gas analyzers and systems like CEMS [41]. | Must be used within their expiration date and with verified gas delivery lines free of leaks or contamination [41]. |

| Weighting Factors (1/x, 1/x²) | Mathematical factors applied during regression to account for heteroscedasticity, ensuring accuracy across the entire calibration range, especially at low concentrations [42] [43]. | The choice of weighting (1/x vs. 1/x²) should be based on the nature of the variance in the data. 1/x² is often optimal for wide dynamic ranges in bioanalysis [43]. |

A technical support center for reducing constant systematic error

FAQs: Choosing and Applying Normalization Methods

Q1: What is the fundamental difference between Linear and LOESS normalization?

Linear Normalization (e.g., median, scale, or Z-score) applies a single linear transformation, such as subtracting a median or dividing by a scale factor, to your data. It is a global method, meaning it applies the same simple transformation across the entire dataset. It is most effective when the systematic bias you need to correct is constant and does not depend on the signal intensity [47].

LOESS Normalization (Locally Estimated Scatterplot Smoothing) fits a complex, non-linear curve. It is a local method that works like a sophisticated moving average. For each data point, it performs a weighted regression using only a subset of neighboring points, making it highly effective for correcting intensity-dependent biases where the systematic error changes across the dynamic range of your measurements [47].
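The contrast between a global and a local correction can be made concrete with a small sketch. The `loess_fit` function below is a simplified, hypothetical implementation (local linear regression with tricube weights and no robustness iterations), not the routine used by any particular package, and the data are synthetic: a curved, intensity-dependent bias with no true signal.

```python
from statistics import median


def loess_fit(x, y, frac=0.5):
    """Local linear regression with tricube weights, evaluated at each x."""
    n = len(x)
    k = min(n, max(3, round(frac * n)))  # number of points per local window
    fitted = []
    for x0 in x:
        # Bandwidth: distance from x0 to its k-th nearest point.
        h = sorted(abs(xi - x0) for xi in x)[k - 1] or 1e-12
        w = [(1.0 - min(abs(xi - x0) / h, 1.0) ** 3) ** 3 for xi in x]
        sw = sum(w)
        xb = sum(wi * xi for wi, xi in zip(w, x)) / sw
        yb = sum(wi * yi for wi, yi in zip(w, y)) / sw
        sxx = sum(wi * (xi - xb) ** 2 for wi, xi in zip(w, x))
        sxy = sum(wi * (xi - xb) * (yi - yb) for wi, xi, yi in zip(w, x, y))
        slope = sxy / sxx if sxx > 0 else 0.0
        fitted.append(yb + slope * (x0 - xb))
    return fitted


# Synthetic example: log-ratios that should be zero but carry a curved,
# intensity-dependent bias.
intensity = [0.5 * i for i in range(1, 25)]
log_ratio = [0.05 * (a - 6.0) ** 2 for a in intensity]

# Global (linear) correction: subtract one constant from every value.
global_corrected = [m - median(log_ratio) for m in log_ratio]

# Local correction: subtract the LOESS trend estimated along intensity.
trend = loess_fit(intensity, log_ratio)
local_corrected = [m - t for m, t in zip(log_ratio, trend)]

worst_global = max(abs(v) for v in global_corrected)
worst_local = max(abs(v) for v in local_corrected)
print(f"Largest residual bias, median correction: {worst_global:.3f}")
print(f"Largest residual bias, LOESS correction:  {worst_local:.3f}")
```

The median correction only shifts the curve, so the intensity-dependent shape remains. The local fit follows the curve and removes most of it, which is also why it can remove real signal when applied to data without such a bias.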

Q2: When should I choose LOESS over Linear normalization for my HTS data?

Choosing the right method depends on your data's characteristics. The following table outlines key decision criteria:

| Situation | Recommended Method | Rationale |

|---|---|---|

| High hit-rate scenarios (>20% hits per plate) [48] | LOESS | Linear methods (e.g., B-score) perform poorly; LOESS reduces row/column/edge effects effectively. |

| Intensity-dependent bias is suspected [47] | LOESS | Corrects non-linear, local systematic errors that linear methods cannot address. |

| Correcting simple plate-to-plate variation | Linear (e.g., Z-score) | A robust, simple method for global scaling when no complex local artifacts exist [49]. |

| Multi-omics temporal study (Proteomics) [50] | Linear (Median), PQN, or LOESS | These methods preserved time-related variance, demonstrating robustness. |

| Multi-omics temporal study (Metabolomics/Lipidomics) [50] | PQN or LOESS (LOESS QC) | These methods optimally enhanced QC feature consistency. |

Q3: I'm getting errors when running LOESS normalization on my dataset with missing values. How can I fix this?

Some LOESS functions, such as those in the affy package, do not tolerate NA values, so errors of this kind are expected [51] [52]. You have two main options:

- Remove NAs: Filter out rows (e.g., probes, compounds) with excessive missing values prior to normalization.

- Impute NAs: Replace missing values with estimated ones using imputation methods (e.g., k-nearest neighbors, minimum value). The choice depends on the nature of your data and the fraction of missingness [51].
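Both options can be combined in one preprocessing pass. The sketch below is a minimal illustration that represents missing values as `None`, drops rows above a hypothetical 50% missingness threshold, and fills the remainder with the row minimum. Minimum-value imputation is only one of the options named above; k-nearest-neighbor imputation is not shown.

```python
def filter_and_impute(rows, max_missing_fraction=0.5):
    """Drop rows with too many missing values, then fill gaps with the row minimum."""
    cleaned = []
    for row in rows:
        observed = [v for v in row if v is not None]
        missing_fraction = 1.0 - len(observed) / len(row)
        if not observed or missing_fraction > max_missing_fraction:
            continue  # too sparse to normalize reliably
        fill = min(observed)
        cleaned.append([fill if v is None else v for v in row])
    return cleaned


# Hypothetical feature-by-sample intensities with missing values.
data = [
    [1.0, None, 3.0, 2.0],     # 25% missing: kept and imputed
    [None, None, None, 5.0],   # 75% missing: dropped
    [4.0, 4.5, 5.0, 4.2],      # complete: kept unchanged
]
print(filter_and_impute(data))
```

The threshold and the imputation rule both shape the downstream normalization, so they are worth recording alongside the results.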

Q4: Can normalization itself introduce bias into my data?

Yes. A critical step before any normalization is to statistically assess the presence of systematic error in your raw data [49]. Applying powerful corrections like LOESS or B-score to data that lacks systematic error can create artificial biases and lead to inaccurate hit selection [48] [49]. Always visualize your raw data (e.g., with heatmaps) to check for spatial patterns or use statistical tests before proceeding.

Troubleshooting Guides

Problem: Poor performance in differential expression analysis after normalization.

- Potential Cause 1: The normalization method was inappropriate for the data's characteristics.

- Solution: Revisit the selection criteria in Q2. For RNA-Seq data with GC-content bias, use within-lane GC normalization followed by between-lane normalization [53].

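- Potential Cause 2: High hit-rate caused normalization to remove biological signal.

- Solution: Methods that assume most wells are inactive can subtract real signal when the hit rate is high (>20% hits per plate). Following the criteria in Q2, prefer LOESS combined with a scattered control layout in this situation [48].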

Problem: Normalization method masks the treatment-related biological variance.

- Potential Cause: Over-correction, especially with data-driven methods.

- Solution: This was observed in some cases with the SERRF machine learning method [50]. Compare the variance explained by your treatment before and after normalization. Consider using a less aggressive method or one that uses a stable external reference.
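One simple way to compare treatment-related variance before and after normalization is the fraction of total variance explained by the grouping (eta squared from a one-way layout). The sketch below assumes a single feature and hypothetical intensities; the cited study may have used a different variance decomposition.

```python
def variance_explained(groups):
    """Fraction of total variance explained by group membership (eta squared)."""
    values = [v for group in groups.values() for v in group]
    grand_mean = sum(values) / len(values)
    ss_total = sum((v - grand_mean) ** 2 for v in values)
    ss_between = sum(
        len(group) * (sum(group) / len(group) - grand_mean) ** 2
        for group in groups.values()
    )
    return ss_between / ss_total if ss_total > 0 else 0.0


# Hypothetical intensities of one feature, grouped by treatment.
before = {"control": [10.0, 11.0, 9.5], "treated": [14.0, 15.5, 14.5]}
after = {"control": [11.8, 12.4, 11.5], "treated": [12.6, 13.1, 12.2]}

print(f"Treatment variance explained before: {variance_explained(before):.2f}")
print(f"Treatment variance explained after:  {variance_explained(after):.2f}")
```

A large drop after normalization, as in this illustrative data, is a warning sign that the method is absorbing treatment effects along with technical variation.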

Experimental Protocols & Data Presentation

Detailed Methodology: Evaluating Normalization for Multi-omics Time-Course Data

This protocol is adapted from a study evaluating normalization strategies for mass spectrometry-based multi-omics datasets [50].

1. Sample Preparation:

- Cell Model: Use primary human cells relevant to your study (e.g., cardiomyocytes, motor neurons).

- Treatment: Expose cells to active compounds over a defined time course.

- Multi-omics Extraction: Prepare samples for metabolomics, lipidomics, and proteomics, ideally from the same cell lysate to minimize technical variation.

2. Data Acquisition & Preprocessing:

- Acquire raw data using your mass spectrometry platforms.

- Perform standard peak picking, alignment, and identification for each omics layer.

3. Application of Normalization Methods:

- Apply a range of common normalization methods to each dataset. The evaluated methods in the cited study included:

- Probabilistic Quotient Normalization (PQN)

- LOESS (LOESS QC)

- Median Normalization

- SERRF (a machine learning approach)

4. Evaluation of Effectiveness:

- Criterion 1: QC Feature Consistency. Assess how well the normalization improves the consistency of quality control (QC) samples. Good methods should make QC samples cluster tightly.

- Criterion 2: Preservation of Biological Variance. Examine the change in variance explained by the two main biological factors: treatment and time. An optimal method will maximize the desired biological variance while reducing unwanted technical variance [50].

5. Conclusion and Selection:

- Identify the top-performing method(s) for each omics data type that are both robust and effective for multi-omics integration in a temporal context.
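Steps 3 and 4 can be sketched for one method and one criterion. The code below is a simplified Probabilistic Quotient Normalization that uses the feature-wise median of the supplied samples as the reference and omits the total-signal pre-normalization some implementations apply first. QC consistency is summarized as the median relative standard deviation across features. The data and function names are hypothetical.

```python
from statistics import mean, median, stdev


def pqn_normalize(samples, reference=None):
    """Scale each sample by the median of its feature-wise quotients to a reference."""
    n_features = len(samples[0])
    if reference is None:
        # Assumption: use the feature-wise median of the supplied samples.
        reference = [median([s[j] for s in samples]) for j in range(n_features)]
    normalized = []
    for s in samples:
        quotients = [s[j] / reference[j]
                     for j in range(n_features) if reference[j] > 0]
        factor = median(quotients)
        normalized.append([v / factor for v in s])
    return normalized


def median_qc_rsd(qc_samples):
    """Median relative standard deviation (%) of features across QC injections."""
    rsds = []
    for j in range(len(qc_samples[0])):
        column = [s[j] for s in qc_samples]
        rsds.append(100.0 * stdev(column) / mean(column))
    return median(rsds)


# Hypothetical QC injections of the same pooled sample with dilution-like drift.
qc = [
    [10.0, 20.0, 30.0, 40.0],
    [12.0, 24.0, 36.0, 48.0],
    [8.0, 16.0, 24.0, 32.0],
]
print(f"Median QC RSD before PQN: {median_qc_rsd(qc):.1f}%")
print(f"Median QC RSD after PQN:  {median_qc_rsd(pqn_normalize(qc)):.1f}%")
```

Tighter QC samples satisfy Criterion 1 only. Criterion 2 still requires checking that treatment- and time-related variance survives the same normalization.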

The quantitative outcomes of such a study can be summarized as follows:

| Omics Data Type | Top-Performing Normalization Methods | Key Performance Metric |

|---|---|---|

| Metabolomics | PQN, LOESS (LOESS QC) | Optimally enhanced QC feature consistency [50]. |

| Lipidomics | PQN, LOESS (LOESS QC) | Optimally enhanced QC feature consistency [50]. |

| Proteomics | PQN, Median, LOESS | Preserved time-related or treatment-related variance [50]. |

The Scientist's Toolkit

| Category | Item / Solution | Function / Explanation |

|---|---|---|

| Software & Packages | R/Bioconductor | The primary environment for implementing normalization methods (e.g., affy, limma, EDASeq packages) [51] [53]. |

| Software & Packages | MVAPACK | Open-source software with a suite of functions, including PQ and CS normalization, for preprocessing NMR metabolomics data [54]. |

| Experimental Controls | Scattered Control Layout | Distributing positive/negative controls randomly across a plate to robustly capture and correct for spatial effects like edge evaporation [48]. |

| Experimental Controls | Spike-In Controls | Adding known amounts of foreign transcripts or compounds to the sample to serve as a stable reference for normalization, especially in skewed data [55]. |

| Key Algorithms | Probabilistic Quotient (PQ) | Consistently a top performer in metabolomics; assumes most metabolite concentrations change by a constant factor [54]. |

| Key Algorithms | Constant Sum (CS) | A simple, robust linear method that scales all samples to a common total [54]. |

| Quality Metrics | Z'-factor | A widely used metric to assess the quality and separation between positive and negative controls in an HTS assay [48]. |

| Quality Metrics | SSMD (Strictly Standardized Mean Difference) | Another metric for QC assessment, particularly for evaluating the strength of differential expression [48]. |
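The two quality metrics in the table have simple closed forms, sketched below using their standard definitions with sample standard deviations. The control-well readings are hypothetical.

```python
import math
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = stdev(positive) + stdev(negative)
    return 1.0 - 3.0 * spread / abs(mean(positive) - mean(negative))


def ssmd(positive, negative):
    """SSMD: (mean_pos - mean_neg) / sqrt(var_pos + var_neg)."""
    pooled = math.sqrt(stdev(positive) ** 2 + stdev(negative) ** 2)
    return (mean(positive) - mean(negative)) / pooled


# Hypothetical control-well signals from one plate.
positive_controls = [100.0, 102.0, 98.0]
negative_controls = [10.0, 12.0, 8.0]

print(f"Z'-factor: {z_prime(positive_controls, negative_controls):.3f}")
print(f"SSMD:      {ssmd(positive_controls, negative_controls):.2f}")
```

A Z'-factor above 0.5 is commonly read as a well-separated assay. Computing both metrics before and after normalization shows whether the correction improved control separation or merely changed the scale.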

Workflow Visualization

This diagram illustrates the decision-making process for selecting and validating a normalization method within the context of reducing systematic error.

Troubleshooting Guides

FAQ 1: How can I distinguish between a Critical Quality Attribute (CQA) and a non-critical quality attribute during method development?

Issue: Uncertainty in classifying attributes as critical leads to an inefficient control strategy and potential method failure.

Solution: A Critical Quality Attribute (CQA) is a physical, chemical, biological, or microbiological property or characteristic that must be within an appropriate limit, range, or distribution to ensure the desired product quality [56]. The criticality is determined primarily by the severity of harm to the patient should the product fail to meet the required quality for that attribute [56]. Probability of occurrence or detectability does not impact the criticality.

- Action: Create a risk assessment based on the Analytical Target Profile (ATP). For each attribute, ask: "If this attribute falls outside its acceptable range, could it directly impact patient safety or drug efficacy?" If the answer is yes, it is a CQA [56] [57]. For example, in a chromatographic method, the resolution of a critical pair would be a CQA, as poor resolution could lead to inaccurate quantification of a harmful impurity [57].

FAQ 2: What is the relationship between the Method Operational Design Region (MODR) and the Design Space?