The Nelder-Mead Simplex Algorithm: A Comprehensive Guide for Biomedical Research and Optimization

Abstract

This article provides a comprehensive exploration of the Nelder-Mead simplex algorithm, a foundational derivative-free optimization method widely used in scientific research and drug development. It begins with foundational concepts, detailing the algorithm's history and core mechanics, including reflection, expansion, and contraction operations. The guide then progresses to practical implementation methodologies and applications in fields like physiological parameter estimation and model fitting. It further covers essential troubleshooting techniques to avoid common pitfalls like premature convergence and discusses advanced hybrid optimization strategies. Finally, the article presents a comparative analysis with other modern algorithms, such as Differential Evolution, offering validation metrics and insights to help researchers select the most appropriate technique for their specific biomedical optimization challenges.

Understanding the Nelder-Mead Algorithm: Core Principles and Historical Context

What is the Nelder-Mead Method? Defining the Downhill Simplex

The Nelder-Mead (NM) method, also known as the downhill simplex method, is a cornerstone numerical algorithm for multidimensional unconstrained minimization of non-linear functions without requiring derivative information [1]. First published in 1965 by John Nelder and Roger Mead, this algorithm improved upon the earlier simplex method of Spendley, Hext, and Himsworth (1962) by allowing the simplex to not only change size but also its shape, enabling it to adapt to the function's landscape [1]. This seminal development allowed the algorithm to "elongate down long inclined planes, change direction on encountering a valley at an angle, and contract in the neighbourhood of a minimum" [1]. Over nearly six decades, despite the emergence of more sophisticated optimization techniques, the Nelder-Mead method has maintained remarkable popularity due to its conceptual simplicity, low storage requirements, and robustness when dealing with noisy, discontinuous, or non-differentiable objective functions [2] [1].

The method's name warrants clarification, particularly to distinguish it from Dantzig's simplex algorithm for linear programming, which is completely different both in application and fundamental approach [1]. The term "simplex" in the Nelder-Mead context refers to a geometric structure—specifically, the convex hull of n+1 points in n-dimensional space that are not all in the same hyperplane [3] [1]. For a two-dimensional problem, this simplex is a triangle; in three dimensions, it forms a tetrahedron [3]. The "downhill" descriptor refers to the algorithm's systematic approach of moving this simplex through the parameter space toward regions with lower function values, thus "going downhill" on the objective function's surface [3].

Core Algorithmic Framework

Fundamental Principles and Definitions

The Nelder-Mead algorithm addresses the classical unconstrained optimization problem of minimizing a given nonlinear function (f : {\mathbb R}^n \to {\mathbb R}) [1]. Its distinctive characteristic is that it uses only function values at points in ({\mathbb R}^n) without forming approximate gradients, placing it within the general class of direct search methods [1]. This property makes it particularly valuable for problems where the objective function is non-differentiable, discontinuous, noisy, or computationally expensive to evaluate [2].

The algorithm operates through an iterative process of transforming a simplex, a geometric structure defined by n+1 vertices in n-dimensional parameter space [3] [1]. Each vertex (x_i) in the simplex represents a complete set of parameters, with a corresponding function value (f_i = f(x_i)) [3]. The method progressively updates this simplex by replacing the worst vertex (with the highest function value) with a better point, using a series of geometric transformations relative to the centroid of the remaining points [4].

Mathematical Formulation of Simplex Operations

The algorithm is controlled by four parameters that govern its transformation behavior: (\alpha) for reflection, (\beta) for contraction, (\gamma) for expansion, and (\delta) for shrinkage [1]. These parameters must satisfy the constraints: (\alpha > 0), (0 < \beta < 1), (\gamma > 1), (\gamma > \alpha), and (0 < \delta < 1) [3] [1]. The standard values used in most implementations are (\alpha = 1), (\beta = \frac{1}{2}), (\gamma = 2), and (\delta = \frac{1}{2}) [3] [1].

Each iteration follows a systematic procedure. First, the vertices are ordered according to their function values. For a simplex with vertices (x_0, \ldots, x_n), the indices (h), (s), and (l) correspond to the worst, second worst, and best vertices, respectively, satisfying (f_h = \max_{j} f_j), (f_s = \max_{j \neq h} f_j), and (f_l = \min_{j \neq h} f_j) [1]. The centroid (c) of the best side (opposite the worst vertex (x_h)) is then calculated as (c = \frac{1}{n} \sum_{j \neq h} x_j) [1].

The core transformations are then attempted in sequence, with each creating a candidate point to replace the worst vertex:

- Reflection: Compute (x_r = c + \alpha(c - x_h)). If (f_l \leq f_r < f_s), accept (x_r) and terminate the iteration [1].

- Expansion: If (f_r < f_l), compute (x_e = c + \gamma(x_r - c)). If (f_e < f_r), accept (x_e); otherwise accept (x_r) [1].

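- Contraction: If (f_r \geq f_s), compute a contraction point (x_c) using the better of (x_h) and (x_r). Outside contraction: if (f_s \leq f_r < f_h), compute (x_c = c + \beta(x_r - c)) and accept (x_c) if (f_c \leq f_r). Inside contraction: if (f_r \geq f_h), compute (x_c = c + \beta(x_h - c)) and accept (x_c) if (f_c < f_h) [1].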

- Shrinkage: If contraction fails, shrink the entire simplex toward the best vertex (x_l) by replacing each vertex (x_i), (i \neq l), with (x_l + \delta(x_i - x_l)) [3] [1].
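The transformation sequence above can be sketched as a single iteration in Python. This is a minimal illustration rather than a reference implementation: the function name `nelder_mead_step`, the array layout (one vertex per row), and the test function are assumptions made for the example, and the contraction branch follows the commonly used outside/inside rule.

```python
import numpy as np

def nelder_mead_step(simplex, fvals, f, alpha=1.0, beta=0.5, gamma=2.0, delta=0.5):
    """Perform one Nelder-Mead iteration and return the updated (simplex, fvals).

    simplex: array of shape (n + 1, n), one vertex per row (assumed layout).
    fvals:   array of shape (n + 1,), function values at the vertices.
    """
    order = np.argsort(fvals)                    # sort so row 0 is best, row -1 is worst
    simplex, fvals = simplex[order], fvals[order]
    x_h = simplex[-1]
    f_l, f_s, f_h = fvals[0], fvals[-2], fvals[-1]
    c = simplex[:-1].mean(axis=0)                # centroid of all vertices except the worst

    x_r = c + alpha * (c - x_h)                  # reflection
    f_r = f(x_r)
    if f_l <= f_r < f_s:
        simplex[-1], fvals[-1] = x_r, f_r
        return simplex, fvals

    if f_r < f_l:                                # expansion
        x_e = c + gamma * (x_r - c)
        f_e = f(x_e)
        if f_e < f_r:
            simplex[-1], fvals[-1] = x_e, f_e
        else:
            simplex[-1], fvals[-1] = x_r, f_r
        return simplex, fvals

    if f_r < f_h:                                # outside contraction (f_s <= f_r < f_h)
        x_c = c + beta * (x_r - c)
        f_c = f(x_c)
        accepted = f_c <= f_r
    else:                                        # inside contraction (f_r >= f_h)
        x_c = c + beta * (x_h - c)
        f_c = f(x_c)
        accepted = f_c < f_h
    if accepted:
        simplex[-1], fvals[-1] = x_c, f_c
        return simplex, fvals

    # shrinkage: pull every vertex except the best toward the best vertex
    simplex[1:] = simplex[0] + delta * (simplex[1:] - simplex[0])
    fvals[1:] = [f(x) for x in simplex[1:]]
    return simplex, fvals

# Illustrative run on a smooth quadratic with its minimum at (1, -2).
def f(x):
    return (x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2

simplex = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
fvals = np.array([f(x) for x in simplex])
for _ in range(200):
    simplex, fvals = nelder_mead_step(simplex, fvals, f)
best = simplex[np.argmin(fvals)]
print(best, fvals.min())
```

A fixed iteration count is used here only to keep the sketch short; a practical driver would stop on a convergence test instead.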

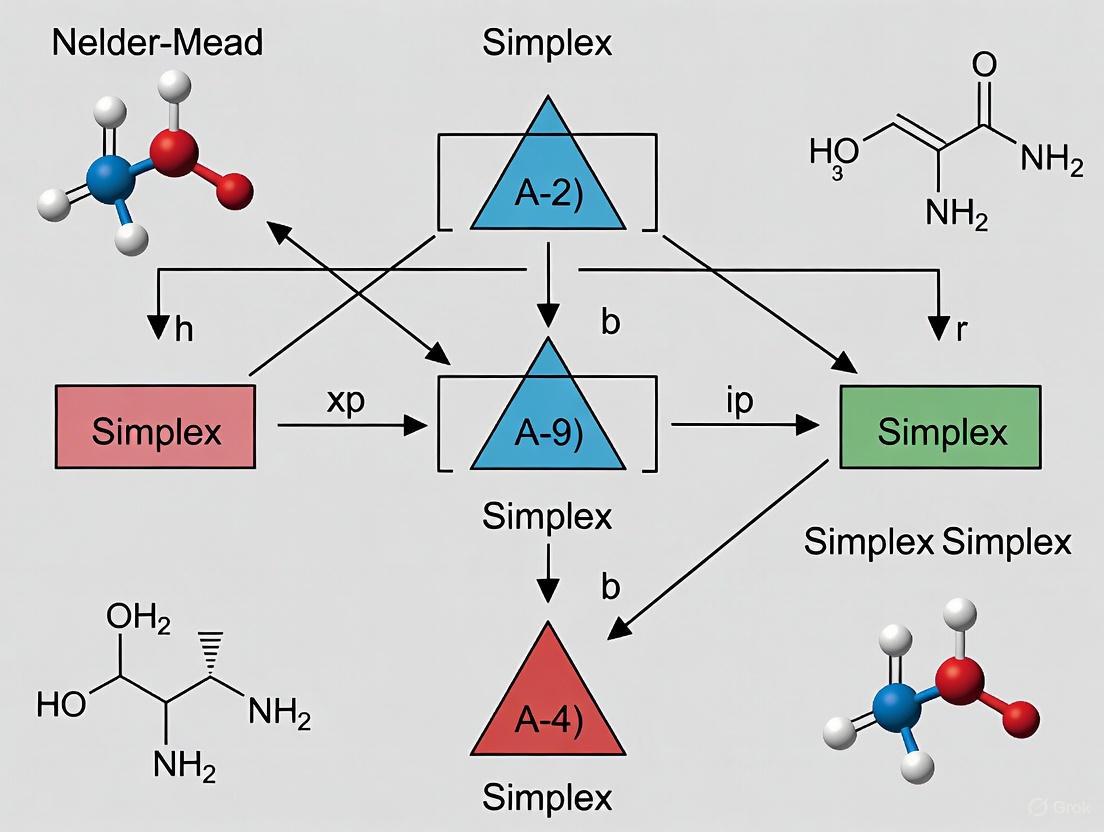

The following diagram illustrates the logical workflow of these simplex transformations:

Diagram: Logical workflow of Nelder-Mead simplex transformations

Initialization and Termination Criteria

The initial simplex is typically constructed by generating n+1 vertices around a given input point (x_{in} \in {\mathbb R}^n) [1]. A common approach sets (x_0 = x_{in}), with the remaining n vertices generated to create either a right-angled simplex based on coordinate axes ((x_j = x_0 + h_j e_j)) or a regular simplex with all edges having the same specified length [1].
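As a small sketch of the right-angled construction described above, the following Python builds the n+1 vertices from an input point and per-axis step sizes. The helper names `right_angled_simplex` and `is_nondegenerate` are hypothetical, and the determinant check is one simple way (assumed here) to confirm the vertices do not lie in a common hyperplane.

```python
import numpy as np

def right_angled_simplex(x_in, h):
    """Return an (n + 1, n) array: row 0 is x_in, row j is x_in + h_j * e_j."""
    x0 = np.asarray(x_in, dtype=float)
    n = x0.size
    steps = np.broadcast_to(np.asarray(h, dtype=float), (n,))  # scalar or per-axis steps
    return np.vstack([x0, x0 + np.diag(steps)])

def is_nondegenerate(simplex, tol=1e-12):
    """Check that the n edge vectors from vertex 0 are linearly independent."""
    edges = simplex[1:] - simplex[0]
    return abs(np.linalg.det(edges)) > tol

S = right_angled_simplex([1.0, 2.0, 3.0], h=0.5)
print(S)
print(is_nondegenerate(S))
```

The regular-simplex alternative is not shown; it needs a different vertex layout so that all edges share the same length.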

Termination conditions vary across implementations but commonly include: when the working simplex becomes sufficiently small, when function values at the vertices become close enough, or when a maximum number of iterations is reached [4] [1]. One implementation stops "when all candidates in the simplex have values close to each other," indicating the simplex has converged to a minimum where the function surface is relatively flat [4].
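The three kinds of stopping test listed above might be combined as in the sketch below. The function name `termination_reason`, the tolerance defaults, and the order in which the checks are applied are all assumptions for illustration; actual implementations differ in how they measure simplex size and whether they require one or several conditions to hold.

```python
import numpy as np

def termination_reason(simplex, fvals, iteration,
                       x_tol=1e-8, f_tol=1e-8, max_iter=1000):
    """Return a short label for the first stopping rule that fires, else None."""
    simplex = np.asarray(simplex, dtype=float)
    fvals = np.asarray(fvals, dtype=float)
    best = np.argmin(fvals)
    # size test: largest coordinate distance from any vertex to the best vertex
    if np.max(np.abs(simplex - simplex[best])) <= x_tol:
        return "simplex small"
    # value test: largest gap between any vertex value and the best value
    if np.max(np.abs(fvals - fvals[best])) <= f_tol:
        return "function values close"
    if iteration >= max_iter:
        return "max iterations"
    return None

wide = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
print(termination_reason(wide * 1e-10, [3.0, 2.0, 1.0], iteration=5))
print(termination_reason(wide, [1.0, 1.0, 1.0], iteration=5))
print(termination_reason(wide, [3.0, 2.0, 1.0], iteration=1000))
print(termination_reason(wide, [3.0, 2.0, 1.0], iteration=5))
```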

Contemporary Research and Advancements

Modern Hybrid Approaches

Despite its age, the Nelder-Mead method continues to inspire new research, particularly through hybridization with other optimization paradigms. Recent studies have focused on addressing its limitations, such as poor convergence properties in high-dimensional spaces and susceptibility to becoming trapped in local optima [5] [6].

Table: Recent Hybrid Algorithms Incorporating Nelder-Mead

| Hybrid Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| Deep Reinforcement Nelder-Mead (DRNM) [7] | Integrates RL with NM; replaces fixed heuristic rules with adaptive strategy | Reduces unnecessary function calls; enhances global exploration; computationally efficient | Requires careful tuning; complex implementation |
| Genetic and Nelder-Mead Algorithm (GANMA) [8] | Combines global search of GA with local refinement of NM | Balances exploration and exploitation; improved convergence speed and solution quality | Scalability challenges in higher dimensions; parameter sensitivity |
| GA-Nelder-Mead (GA-NM) [8] | Uses NM simplex method within GA to enhance solution precision | Improved precision in smooth, low-dimensional problems | Limited scalability; requires precise parameter settings |
| Modified Nelder-Mead with Differential Evolution [9] | Applies DE before shrinking operation to obtain global minimal solution | Better convergence; finds coherent biclusters with lower MSR | Application-specific (microarray data); increased computational complexity |

The Deep Reinforcement Nelder-Mead (DRNM) method represents a significant innovation by integrating reinforcement learning with the classical NM algorithm [7]. This approach enables the algorithm to learn an optimal decision-making policy for the NM process, replacing fixed heuristic rules with an adaptive strategy that significantly reduces unnecessary function calls—particularly valuable when each function evaluation is computationally expensive, such as in HVAC digital twin simulations [7].

Another promising direction is the Genetic and Nelder-Mead Algorithm (GANMA), which hybridizes the global exploration capabilities of Genetic Algorithms with the local refinement strength of NM [8]. This hybrid demonstrates superior performance across various benchmark functions, particularly for problems with high dimensionality and multimodality, effectively addressing the balance between global exploration and local exploitation that often challenges individual algorithms [8].

Convergence Properties and Theoretical Challenges

The Nelder-Mead algorithm presents intriguing theoretical challenges that continue to attract mathematical analysis. Recent research has identified several distinct convergence behaviors [6]:

- Function values at simplex vertices may converge to a common limit value while the function has no finite minimum and the simplex sequence is unbounded

- Simplex vertices may converge to a common limit point that is not a stationary point of the objective function

- The simplex sequence may converge to a limit simplex with positive diameter, resulting in different limit function values at the vertices

These behaviors give a negative answer to the long-standing question of whether the method guarantees convergence to a minimum [6]. McKinnon's well-known counterexample demonstrates a case where the simplex converges to a non-stationary point, highlighting fundamental limitations [6].

Two main versions of the algorithm are currently studied: the 'original' unordered method of Nelder and Mead and the 'ordered' version by Lagarias et al., with evidence suggesting the ordered version exhibits better convergence properties [6]. The matrix representations of these algorithms have enabled more sophisticated analysis, connecting convergence to the properties of infinite matrix products [6].

Implementation Considerations

Practical Implementation Details

The Nelder-Mead algorithm is widely available in major scientific computing libraries. In Python's SciPy library, it is accessible through the minimize function in the scipy.optimize module with the method='Nelder-Mead' argument [2]. Similarly, in R, it can be accessed via the optim function or the optimx package by specifying method="Nelder-Mead" [2].
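A minimal SciPy call might look like the following. The Rosenbrock test function, the starting point, and the tolerance values are choices made for this example; `xatol`, `fatol`, and `maxiter` are options of SciPy's Nelder-Mead solver.

```python
import numpy as np
from scipy.optimize import minimize

def rosenbrock(x):
    """Classical two-dimensional Rosenbrock function with its minimum at (1, 1)."""
    return (1.0 - x[0]) ** 2 + 100.0 * (x[1] - x[0] ** 2) ** 2

result = minimize(
    rosenbrock,
    x0=np.array([-1.2, 1.0]),
    method="Nelder-Mead",
    options={"xatol": 1e-8, "fatol": 1e-8, "maxiter": 2000},
)
print(result.x, result.fun, result.nfev)
```

The returned object also reports the number of function evaluations (`nfev`), which is the natural cost measure when each evaluation is expensive.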

Key implementation considerations include handling failed evaluations, constraint management, and appropriate parameter selection. The algorithm can be extended to handle solver noise and even failed designs through penalty approaches [10]. For problems with a small number of design variables, the simplex method typically converges quickly, but for larger numbers of variables, more advanced methods such as the Adaptive Response Surface Method (ARSM) may be more suitable [10].
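One way to realize a penalty approach for failed evaluations is to wrap the objective so that exceptions and non-finite results are mapped to a large value, which the simplex then treats as a very bad vertex. The sketch below is an assumption about how such a wrapper could look, not the scheme used in [10]; `with_failure_penalty`, `fragile_objective`, and the penalty constant are hypothetical.

```python
import math
from scipy.optimize import minimize

def with_failure_penalty(func, penalty=1e10):
    """Wrap func so failed or non-finite evaluations return a large penalty value."""
    def wrapped(x):
        try:
            value = float(func(x))
        except (ArithmeticError, ValueError):
            return penalty
        return value if math.isfinite(value) else penalty
    return wrapped

def fragile_objective(x):
    """Hypothetical model that fails outside x[0] > 0; minimum at (e, 2)."""
    if x[0] <= 0:
        raise ValueError("simulation failed")
    return (math.log(x[0]) - 1.0) ** 2 + (x[1] - 2.0) ** 2

safe_objective = with_failure_penalty(fragile_objective)
result = minimize(
    safe_objective,
    x0=[1.0, 1.0],
    method="Nelder-Mead",
    options={"xatol": 1e-8, "fatol": 1e-8, "maxiter": 2000},
)
print(result.x, safe_objective([-1.0, 0.0]))
```

A constant penalty creates a flat plateau in the failed region, so the starting simplex should contain at least one feasible vertex for the search to make progress.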

Table: Standard and Alternative Parameter Sets for Nelder-Mead

| Parameter | Standard Value | Parkinson & Hutchinson Alternate | Purpose |
|---|---|---|---|
| α (reflection) | 1.0 | - | Controls reflection distance |
| β (contraction) | 0.5 | - | Controls contraction factor |
| γ (expansion) | 2.0 | - | Controls expansion factor |
| δ (shrinkage) | 0.5 | - | Controls shrinkage factor |
| Initialization | Coordinate-axis based | Regular simplex | Determines initial search pattern |

Experimental Protocol and Research Applications

In research settings, proper experimental design is crucial when applying or evaluating the Nelder-Mead method. For performance validation, studies typically employ multiple benchmark functions with different characteristics (unimodal, multimodal, ill-conditioned) to comprehensively assess algorithm behavior [8]. Real-world applications additionally validate against domain-specific problems with known optimal solutions or comparative benchmarks [7].

A typical experimental protocol involves:

- Initialization: Construct initial simplex using either coordinate-axis or regular simplex approach around a defined starting point [1]

- Iteration Process: Apply transformation rules according to the logical workflow, tracking function evaluations and simplex characteristics at each iteration [4]

- Termination Check: Evaluate convergence criteria at each iteration, typically based on simplex size and function value improvement [4] [10]

- Result Validation: Compare final results with known optima or alternative methods, often using multiple random starts to mitigate local optima issues [7] [8]
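The validation step with multiple random starts can be sketched as follows. The Styblinski-Tang test function (four local minima in two dimensions), the search box, the number of starts, and the helper name `multistart_nelder_mead` are all assumptions chosen for illustration.

```python
import numpy as np
from scipy.optimize import minimize

def styblinski_tang(x):
    """Multimodal test function; global minimum near x_i = -2.9035 in every coordinate."""
    x = np.asarray(x)
    return 0.5 * np.sum(x ** 4 - 16.0 * x ** 2 + 5.0 * x)

def multistart_nelder_mead(func, lower, upper, n_starts=30, seed=0):
    """Run Nelder-Mead from uniformly sampled starts and keep the best result."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    best, total_nfev = None, 0
    for _ in range(n_starts):
        x0 = rng.uniform(lower, upper)
        res = minimize(func, x0, method="Nelder-Mead")
        total_nfev += res.nfev               # track the total evaluation budget
        if best is None or res.fun < best.fun:
            best = res
    return best, total_nfev

best, total_nfev = multistart_nelder_mead(styblinski_tang, [-5.0, -5.0], [5.0, 5.0])
print(best.x, best.fun, total_nfev)
```

Reporting the total evaluation count alongside the best value keeps the comparison with other methods fair, since restarts multiply the cost of a single run.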

In practical applications like HVAC digital twin optimization, the method is implemented within a comprehensive framework where the most computationally expensive component is the function evaluation (one complete execution of the simulation model) [7]. Here, the primary metric for computational efficiency becomes minimizing function calls while maintaining solution quality [7].

Research Reagent Solutions

Table: Essential Computational Tools for Nelder-Mead Research

| Tool/Category | Specific Examples | Research Function |
|---|---|---|
| Optimization Frameworks | SciPy (Python), optimx (R), MATLAB fminsearch | Provides reference implementations; enables method comparison and benchmarking |
| Benchmark Problem Sets | Classical test functions (Rosenbrock, Powell, etc.), CEC competition benchmarks | Standardized performance evaluation on functions with known properties and optima |
| Hybrid Algorithm Components | Genetic Algorithms, Differential Evolution, Reinforcement Learning | Enhances global exploration capabilities; addresses limitations of pure NM approach |
| Visualization Tools | Matplotlib, Plotly, ParaView | Enables geometric interpretation of simplex transformations in 2D/3D cases |
| Convergence Analysis Tools | Custom matrix analysis implementations, Lyapunov exponent calculators | Supports theoretical investigation of algorithm behavior and stability |

The Nelder-Mead downhill simplex method represents a remarkable example of algorithmic longevity in numerical optimization. Six decades after its introduction, it continues to serve as both a practical optimization tool and a subject of active theoretical research. Its enduring value lies in the elegant simplicity of its geometric intuition, derivative-free operation, and adaptability to challenging optimization landscapes where gradient-based methods struggle.

Contemporary research has enriched the original algorithm through hybridization with evolutionary methods and machine learning, enhanced theoretical understanding of its convergence properties, and extended its applications to emerging domains like digital twin optimization and bioinformatics. While fundamental limitations remain—particularly regarding convergence guarantees in high-dimensional spaces—ongoing innovations continue to expand its capabilities and applications.

For researchers and practitioners, the Nelder-Mead method offers a versatile optimization approach that balances computational efficiency with robust performance across diverse problem domains. Its continued evolution demonstrates how classical algorithms can find new life through integration with modern computational paradigms, ensuring its relevance for future optimization challenges in science and engineering.

The Nelder-Mead simplex algorithm stands as a cornerstone of derivative-free numerical optimization. Its development in 1965 marked a significant evolution from the earlier fixed simplex method of Spendley, Hext, and Himsworth, introducing adaptive transformations that could change both size and shape to navigate complex optimization landscapes efficiently [1]. This historical progression illustrates how mathematical insights can transform a rudimentary search technique into a powerful heuristic method. For researchers, scientists, and drug development professionals, understanding this evolution provides valuable insights into the algorithm's behavior, strengths, and limitations when applied to complex problems such as parameter estimation in pharmacokinetics or optimization of experimental conditions. The algorithm's enduring popularity stems from its simplicity, low storage requirements, and ability to handle problems with non-smooth functions where derivative information is unavailable or unreliable [1] [11].

The Spendley, Hext, and Himsworth Foundation

The foundational work of Spendley, Hext, and Himsworth in 1962 introduced the first simplex-based direct search method for optimization [1]. Their approach utilized a regular simplex—a geometric shape where all edges have equal length—that maintained constant angles between edges throughout the optimization process. This method employed only two basic transformations:

- Reflection: Moving the worst vertex through the centroid of the opposite face to the other side of the simplex.

- Shrinkage: Contracting the entire simplex toward the best vertex.

Despite its conceptual simplicity, this approach proved limited in practice because the simplex could not adapt its shape to the objective function's topography [1]. The rigid geometric structure constrained the algorithm's ability to navigate non-smooth or valley-like landscapes efficiently, often requiring excessive function evaluations to converge. Nevertheless, this pioneering work established the fundamental simplex-based framework that would later be refined and enhanced by Nelder and Mead, creating a versatile and powerful optimization tool widely adopted across scientific and engineering disciplines, including pharmaceutical research and drug development.

Table: Key Characteristics of the Spendley et al. Simplex Method

| Feature | Description |
|---|---|
| Simplex Type | Regular simplex (equal edge lengths) |
| Transformations | Reflection away from worst vertex; shrinkage toward best vertex |
| Shape Adaptation | No shape change possible; constant angles between edges |
| Size Adaptation | Limited to shrinkage; no expansion capability |
| Primary Limitation | Inability to adapt to local function landscape |

The Nelder-Mead Innovation

In 1965, John Nelder and Roger Mead introduced their seminal modification to the Spendley et al. algorithm, creating a significantly more adaptive and efficient optimization method [1]. Their key innovation was expanding the transformation repertoire to include expansion and contraction operations, enabling the simplex to dynamically adjust both its size and shape in response to the local characteristics of the objective function. As they poetically described in their original paper, "In the method to be described the simplex adapts itself to the local landscape, elongating down long inclined planes, changing direction on encountering a valley at an angle, and contracting in the neighbourhood of a minimum" [1].

The Nelder-Mead algorithm operates through a sequence of geometric transformations applied to a simplex traversing the n-dimensional parameter space. The method utilizes four key operations, each controlled by specific coefficients:

- Reflection (α = 1): Projects the worst point through the centroid of the opposing face [11]

- Expansion (γ = 2): Extends further in promising directions when reflection yields improvement [11]

- Contraction (β = 0.5): Reduces step size when reflection provides limited improvement [11]

- Shrinkage (δ = 0.5): Contracts all points toward the best point when other transformations fail [11]

This adaptive behavior allows the algorithm to accelerate down favorable slopes while cautiously navigating areas of poor improvement, creating an effective balance between exploration and exploitation in parameter space [12]. The method's simplicity and low computational overhead—typically requiring only one or two function evaluations per iteration—made it ideally suited for the minicomputers of the era and contributed to its rapid adoption across diverse scientific and engineering domains [1].

Diagram: Nelder-Mead Algorithm Transformation Workflow

Algorithmic Formulations and Variations

Original vs. Ordered Variants

The historical development of the Nelder-Mead method reveals two principal algorithmic variants with distinct convergence properties. The original 1965 formulation employs an unordered approach where indices for the worst (h), second worst (m), and best (l) vertices are recalculated at each iteration without imposing a complete ordering of all vertices [6]. In contrast, the ordered variant introduced by Lagarias et al. maintains the vertices in sorted order by function value (f(x₁) ≤ f(x₂) ≤ ⋯ ≤ f(xₙ₊₁)), consistently identifying ℓₖ=1, mₖ=n, and hₖ=n+1 [6]. This ordering imposes additional structure on the algorithm's behavior and has been shown to exhibit superior convergence characteristics in analytical studies.

The matrix representation provides a unified framework for understanding both variants. For nonshrinking iterations, the simplex transformation can be expressed as Sₖ₊₁ = SₖTₖ, where Tₖ represents the transformation matrix [6]. In the original Nelder-Mead formulation, this involves matrices Tⱼ(α) that replace the worst vertex, while the ordered variant utilizes permutation matrices P to maintain the sorted vertex ordering after each transformation [6]. This mathematical formalization has enabled more rigorous analysis of the algorithm's convergence properties and failure modes.
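To make the relation Sₖ₊₁ = SₖTₖ concrete, the sketch below builds a transformation matrix for a reflection step and checks it against the direct formula c + α(c − x_j). The explicit entries of the matrix are derived here from that reflection formula under the assumption that the simplex matrix stores one vertex per column; the notation and conventions in [6] may differ, and `reflection_matrix` is a hypothetical helper name.

```python
import numpy as np

def reflection_matrix(n, j, alpha=1.0):
    """(n+1) x (n+1) matrix T such that S @ T replaces column j by its reflection.

    Column j of T holds (1 + alpha) / n in every row except row j, which holds -alpha,
    so column j of S @ T equals (1 + alpha) * c - alpha * x_j = c + alpha * (c - x_j).
    """
    T = np.eye(n + 1)
    T[:, j] = (1.0 + alpha) / n
    T[j, j] = -alpha
    return T

rng = np.random.default_rng(0)
n, j, alpha = 3, 2, 1.0
S = rng.normal(size=(n, n + 1))                 # columns are the n + 1 vertices

c = np.delete(S, j, axis=1).mean(axis=1)        # centroid of all vertices except x_j
expected = c + alpha * (c - S[:, j])            # direct reflection formula
S_next = S @ reflection_matrix(n, j, alpha)

print(np.allclose(S_next[:, j], expected))
```

Expansion and contraction steps fit the same pattern with a different coefficient in place of α, which is why products of such matrices capture a whole run of nonshrinking iterations.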

Computational Enhancements and Modern Variants

Recent research has focused on addressing known limitations of the classical Nelder-Mead algorithm, particularly its convergence properties in stochastic environments and high-dimensional spaces. The Stochastic Nelder-Mead (SNM) method incorporates a specialized sample size scheme to handle noisy response functions, effectively controlling the corruption of solution rankings by random variations [13]. This enhancement has proven valuable for simulation optimization problems where objective functions are nonsmooth or gradients do not exist, making it complementary to gradient-based approaches [13].

Hybrid approaches have emerged as powerful alternatives that combine the Nelder-Mead method with global optimization techniques. The GANMA (Genetic Algorithm and Nelder-Mead Algorithm) framework integrates the global exploration capabilities of genetic algorithms with the local refinement strength of Nelder-Mead, effectively balancing exploration and exploitation in complex optimization landscapes [8]. Similarly, the NM-PSO algorithm combines Nelder-Mead with particle swarm optimization, leveraging the local search accuracy of NM with the global search capability of PSO to address multi-peak, high-dimensional optimization problems more effectively [14].
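The general global-then-local pattern behind such hybrids can be illustrated with off-the-shelf SciPy components. Note that this sketch is not GANMA or NM-PSO: it substitutes SciPy's Differential Evolution for the global stage and then refines the result with Nelder-Mead, and the Rastrigin test function, bounds, and iteration budget are assumptions for the example.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize

def rastrigin(x):
    """Highly multimodal test function with its global minimum of 0 at the origin."""
    x = np.asarray(x)
    return 10.0 * x.size + np.sum(x ** 2 - 10.0 * np.cos(2.0 * np.pi * x))

bounds = [(-5.12, 5.12)] * 2

# Stage 1: coarse global exploration (polish=False disables the built-in local refinement).
coarse = differential_evolution(rastrigin, bounds, maxiter=50, tol=1e-3,
                                polish=False, seed=1)

# Stage 2: local refinement with Nelder-Mead, started from the best global candidate.
refined = minimize(rastrigin, coarse.x, method="Nelder-Mead",
                   options={"xatol": 1e-8, "fatol": 1e-8})

print(coarse.fun, refined.fun, coarse.nfev + refined.nfev)
```

Because the starting point is itself a vertex of the initial simplex, the refined value cannot be worse than the coarse one; whether the pair reaches the global optimum still depends on the global stage.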

Table: Nelder-Mead Algorithm Variants and Characteristics

| Variant | Key Features | Advantages | Limitations |
|---|---|---|---|
| Original NM | Unordered vertices, adaptive shape | Simple implementation, fast initial progress | Potential convergence issues |
| Ordered NM | Vertices maintained in sorted order | Better convergence properties | Increased computational overhead |
| Stochastic NM | Sample size scheme for noise control | Handles noisy objective functions | Requires careful parameter tuning |
| GANMA | Hybrid of Genetic Algorithm and NM | Balanced global/local search | Complex implementation |
| NM-PSO | Hybrid of Particle Swarm Optimization and NM | Effective for multi-peak problems | Computational intensity |

Convergence Analysis and Theoretical Foundations

The convergence behavior of the Nelder-Mead algorithm has been the subject of extensive mathematical investigation, revealing both strengths and limitations. Research has identified several distinct convergence scenarios that address fundamental questions raised by Wright [6]:

- Function values at simplex vertices may converge to a common limit while the objective function has no finite minimum and the simplex sequence remains unbounded

- Simplex vertices may converge to a common limit point that is not a stationary point of the objective function (as demonstrated by McKinnon's counterexample)

- The simplex sequence may converge to a limit simplex with positive diameter, resulting in different function values at the vertices

- Function values may converge to a common value while the simplex sequence converges to a limit simplex with positive diameter [6]

These diverse convergence behaviors illustrate the mathematical complexity underlying the apparently simple heuristic method. Recent convergence results have generalized the foundational work of Lagarias et al., demonstrating that under specific conditions, both the original and ordered variants exhibit reliable convergence properties [6]. For the ordered Nelder-Mead algorithm, sufficient conditions have been established that guarantee convergence of the function values f₁ᵏ → f* as k → ∞, providing theoretical support for observed empirical performance [6].

The convergence analysis typically distinguishes between two types of convergence: convergence of function values at the simplex vertices and convergence of the simplex sequence itself [6]. The first type of convergence has been more thoroughly studied, with results showing that the function values at the vertices will converge to a common value under certain continuity and boundedness conditions. The second type of convergence—convergence of the simplex vertices to a single point—has proven more challenging to establish and remains an active research area six decades after the algorithm's introduction.

Contemporary Applications and Research Directions

Modern Applications Across Disciplines

The Nelder-Mead algorithm continues to find novel applications across diverse scientific domains, particularly in problems where derivative information is unavailable or problematic. In biomedical engineering and healthcare, recent research has demonstrated its effectiveness in non-invasive blood pressure estimation, where it is combined with particle swarm optimization to refine empirical parameters based on body mass index [14]. This hybrid NM-PSO approach enhances computational efficiency and solution accuracy in processing remote photoplethysmography signals obtained through facial image analysis [14].

In industrial and manufacturing contexts, hybrid Nelder-Mead approaches have been successfully applied to complex optimization challenges including production planning with stochastic demands, financial portfolio selection with stochastic asset prices, and parameter optimization in plastic injection molding [8] [13]. The algorithm's robustness against non-smooth response functions makes it particularly valuable for real-world engineering problems where objective functions may exhibit discontinuities or other pathological features that challenge gradient-based methods.

Current Research Frontiers

Recent algorithmic advances have focused on enhancing the method's reliability and expanding its applicability to increasingly complex problem domains. Research on the Stochastic Nelder-Mead (SNM) method has established global convergence guarantees—proving that the algorithm can achieve global optima with probability one under appropriate conditions—while maintaining the derivative-free character that makes the approach valuable for simulation optimization [13]. This theoretical foundation complements practical performance improvements demonstrated through extensive numerical studies comparing SNM with competing approaches like Simultaneous Perturbation Stochastic Approximation and Pattern Search [13].

Ongoing research addresses persistent challenges including scalability to high-dimensional problems, adaptive parameter tuning, and balancing computational efficiency with solution quality. The development of restart strategies that execute multiple shorter runs with different initial points rather than single extended executions has shown significant performance improvements in empirical studies [12]. These contemporary research directions ensure that six decades after its introduction, the Nelder-Mead algorithm continues to evolve and maintain its relevance as a powerful tool for challenging optimization problems in science, engineering, and industry.

Table: Research Reagent Solutions for Nelder-Mead Implementation

| Component | Function | Implementation Considerations |
|---|---|---|
| Initial Simplex Generator | Constructs starting simplex around initial guess | Right-angled vs. regular simplex; step size selection |
| Transformation Controller | Manages reflection, expansion, contraction parameters | Standard values: α=1, γ=2, β=0.5, δ=0.5; adaptive schemes |
| Convergence Detector | Monitors termination conditions | Size-based, value-based, or iteration-based criteria |
| Function Evaluator | Computes objective function at simplex vertices | Handles noisy, expensive, or failure-prone evaluations |
| Restart Scheduler | Manages multiple runs with different initial conditions | Determines when to restart rather than continue iterating |

In the pursuit of scientific and engineering breakthroughs, researchers are often confronted with complex optimization problems where the calculation of derivatives is either impossible or impractical. Derivative-free optimization (DFO) methods provide a powerful toolkit for these scenarios, relying solely on function evaluations to guide the search for optimal solutions. Among these, the Nelder-Mead (NM) simplex algorithm stands as a cornerstone technique, first published in 1965 and remaining one of the best-known algorithms for multidimensional unconstrained optimization without derivatives [1].

This guide explores the core advantages of derivative-free methods, with a specific focus on the Nelder-Mead algorithm, and illustrates their critical role in solving real-world research problems across diverse fields including drug development, engineering, and finance.

Core Scenarios for Derivative-Free Optimization

Derivative-free methods are indispensable in several key research scenarios, as outlined in the table below.

Table 1: Key Research Scenarios Demanding Derivative-Free Optimization

| Scenario | Description | Representative Algorithm |
|---|---|---|
| Non-Smooth or Noisy Functions | Problems where the objective function is not differentiable, contains discontinuities, or is subject to experimental noise [1]. | Nelder-Mead Algorithm [1] |
| Function Value Uncertainty | Optimization where function values are uncertain, approximate, or come from stochastic simulations, such as parameter estimation in statistical models [1]. | Nelder-Mead Algorithm [1] |
| Black-Box Systems | Systems where the functional form is unknown or the evaluation is a complex computational process (e.g., computer simulations, machine learning models) [8]. | Genetic Algorithm and Nelder-Mead Hybrid (GANMA) [8] |
| Complex Constraint Handling | Problems with complex, non-convex, or simulation-defined constraints that make gradient calculation infeasible [8]. | Hybrid Algorithms (e.g., GA-NM) [8] |

The Nelder-Mead Simplex Algorithm: A DFO Workhorse

The Nelder-Mead algorithm is a simplex-based direct search method. A simplex in ( \mathbb{R}^n ) is a geometric figure formed by ( n+1 ) vertices—a triangle in 2D or a tetrahedron in 3D [1]. The method iteratively transforms this working simplex by comparing function values at its vertices.

Algorithmic Workflow and Transformation

The algorithm's operation can be visualized in the following workflow, which details the logical sequence of steps and transformations performed during each iteration.

The key transformations that drive the simplex are controlled by four parameters ( \alpha ) (reflection), ( \beta ) (contraction), ( \gamma ) (expansion), and ( \delta ) (shrinkage). The standard values used in most implementations are ( \alpha = 1 ), ( \beta = 0.5 ), ( \gamma = 2 ), and ( \delta = 0.5 ) [1].

Table 2: Nelder-Mead Simplex Transformation Parameters and Operations

| Operation | Parameter | Purpose | Standard Value |

|---|---|---|---|

| Reflection | ( \alpha ) | Moves the simplex away from the worst vertex. | 1.0 |

| Expansion | ( \gamma ) | Extends the simplex further in a promising direction. | 2.0 |

| Contraction | ( \beta ) | Shrinks the simplex in a less promising region. | 0.5 |

| Shrinkage | ( \delta ) | Reduces the entire simplex towards the best vertex. | 0.5 |

Experimental Protocols and Validation

The robustness of the Nelder-Mead algorithm is validated through its application to complex, real-world identification and estimation problems.

Protocol: Parameter Identification for Electric Motors

A 2023 study provided a direct comparison between the Nelder-Mead algorithm and a Differential Evolution (DE) algorithm for identifying the parameters of a Line-Start Permanent Magnet Synchronous Motor (LSPMSM) [15].

- Objective: To accurately identify the parameters (e.g., resistances, inductances, inertia) of a lumped-parameter LSPMSM model using measured data from the motor's start-up transient phase [15].

- Methodology: The algorithms were used to minimize the discrepancy between the simulated model output and the experimentally measured transient responses of phase currents and rotor speed during motor start-up [15].

- Key Findings: The Nelder-Mead algorithm demonstrated superior computational efficiency and accuracy for this specific parameter identification problem compared to the DE algorithm, making it more suitable for creating a reliable motor model [15].
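
To make the idea of "minimizing the discrepancy" between simulated and measured responses concrete, the following is a minimal sketch of a sum-of-squared-errors objective. The first-order step-response model, the parameter names `gain` and `tau`, and the synthetic data are illustrative assumptions; they are not the LSPMSM model or the measurements used in [15].

```python
import math

def simulate(params, times):
    """Toy first-order step response: y(t) = gain * (1 - exp(-t / tau))."""
    gain, tau = params
    return [gain * (1.0 - math.exp(-t / tau)) for t in times]

def sse_objective(params, times, measured):
    """Sum of squared errors between simulated and measured responses."""
    gain, tau = params
    if tau <= 0.0:
        # Guard against a non-physical time constant (assumed constraint).
        return float("inf")
    simulated = simulate(params, times)
    return sum((s - m) ** 2 for s, m in zip(simulated, measured))

# Synthetic "measurements" generated from known parameters (assumed values).
times = [0.1 * k for k in range(50)]
true_params = (2.0, 0.8)
measured = simulate(true_params, times)

print(sse_objective(true_params, times, measured))   # discrepancy at the true parameters
print(sse_objective((1.5, 0.8), times, measured))    # discrepancy at a wrong gain
```

An objective of this shape takes a parameter vector and returns a scalar, which is all a derivative-free optimizer such as Nelder-Mead or DE needs.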

Protocol: Hybrid Algorithm for Enhanced Optimization

To address the challenge of balancing global exploration with local refinement, a novel hybrid named GANMA integrates a Genetic Algorithm (GA) with the Nelder-Mead method [8]. The experimental workflow for such a hybrid approach is illustrated below.

- Objective: To develop a robust optimization strategy that efficiently navigates complex, high-dimensional search spaces [8].

- Methodology:

  - The GA first performs a broad global search, maintaining a population of candidate solutions and using selection, crossover, and mutation operators [8].

  - Once the GA's convergence slows, one or more of the best solutions are passed to the Nelder-Mead algorithm [8].

  - NM performs an intensive local search from these promising starting points, precisely refining the solutions [8].

- Validation: GANMA was tested on 15 benchmark functions and applied to real-world parameter estimation tasks, showing improved performance in terms of robustness, convergence speed, and solution quality compared to using either algorithm alone [8].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and material "reagents" used in the featured experiments.

Table 3: Essential Research Reagents for DFO-Driven Studies

| Reagent / Tool | Function in the Experiment / Field |

|---|---|

| Nelder-Mead Algorithm | The core derivative-free optimizer used for local refinement and parameter estimation in models ranging from statistical to electromechanical [1] [15]. |

| Genetic Algorithm (GA) | A population-based global search algorithm inspired by evolution, used in hybrids to broadly explore the parameter space before NM refinement [8]. |

| LSPMSM Experimental Test Bench | A setup including a motor, sensors, and data acquisition systems to measure real-time phase currents and rotor speed during start-up, providing data for the identification problem [15]. |

| Benchmark Function Suites | A collection of standardized mathematical functions (e.g., multimodal, high-dimensional) used to rigorously test and validate the performance of optimization algorithms like GANMA [8]. |

| Lumped Parameter Motor Model | A simplified mathematical representation of the motor's electro-mechanical dynamics, whose parameters are tuned via optimization to match experimental data [15]. |

Derivative-free optimization methods, particularly the enduring Nelder-Mead algorithm, offer indispensable advantages in tackling complex research problems where gradients are unavailable. Their simplicity, robustness to noise and discontinuities, and low computational overhead per iteration make them uniquely suited for parameter estimation, statistical model fitting, and optimizing complex black-box systems. As evidenced by its successful standalone application in engineering and its role in powerful modern hybrids, the Nelder-Mead algorithm remains a vital component of the researcher's toolkit, enabling scientific and industrial progress across numerous domains.

The Nelder-Mead algorithm operates on a geometric structure known as a simplex, which serves as the fundamental building block for navigating the optimization landscape. In n-dimensional space, a simplex is defined as the convex hull of n+1 vertices that do not all lie in the same hyperplane [1]. This simple yet powerful geometric concept generalizes familiar shapes: a line segment in one dimension, a triangle in two dimensions, and a tetrahedron in three dimensions [11]. For higher-dimensional optimization problems, the simplex becomes an n-dimensional polytope, which the algorithm manipulates to traverse the objective function's topology without requiring gradient information.

The geometric properties of the simplex enable the Nelder-Mead algorithm to perform a structured yet flexible search. Unlike gradient-based methods that rely on derivative information, this direct search method uses only function evaluations at the vertices of the simplex [16] [1]. The algorithm progressively transforms the simplex by reflecting, expanding, contracting, or shrinking it based on relative function values at its vertices [4] [11]. This geometric approach allows the simplex to adapt to the local landscape, elongating down inclined planes, changing direction when encountering valleys, and contracting in the neighborhood of minima [1].

Fundamental Geometric Operations of the Nelder-Mead Algorithm

The Nelder-Mead algorithm employs four principal geometric transformations that manipulate the simplex's size, shape, and orientation in n-dimensional space. These operations are governed by specific parameters and are triggered based on the relative performance of function evaluations at test points.

Core Transformation Operations

Reflection: The worst vertex (x_h) is reflected through the centroid (c) of the remaining n best vertices [11] [1]. The reflection operation is mathematically defined as x_r = c + α(c - x_h), where α > 0 is the reflection coefficient [1]. With the standard value α = 1, this operation maintains the simplex volume while exploring promising directions away from poor regions.

Expansion: If the reflected point represents a significant improvement, the algorithm expands further in that direction using x_e = c + γ(x_r - c), where γ > 1 is the expansion coefficient [11] [1]. Expansion enables the simplex to accelerate movement along favorable trajectories, effectively elongating down inclined planes.

Contraction: When reflection yields insufficient improvement, the algorithm performs either an outside or inside contraction [11]. Outside contraction (x_c = c + ρ(x_r - c)) occurs when the reflected point is better than the worst vertex but no better than the second worst, while inside contraction (x_c = c + ρ(x_h - c)) happens when the reflected point is no better than any current vertex, with 0 < ρ < 1 representing the contraction coefficient [1].

Shrinkage: If contraction fails to yield improvement, the simplex shrinks toward its best vertex (x_l) by moving all other vertices closer using x_i = x_l + σ(x_i - x_l) for all i ≠ l, where 0 < σ < 1 is the shrinkage coefficient [11]. This operation helps the algorithm escape stagnation and is crucial for convergence in certain pathological cases [12].
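
The four operations can be checked by hand on a small example. The sketch below applies the formulas above to a two-dimensional simplex using the standard coefficients; the vertex coordinates and the helper names `centroid` and `move` are chosen purely for illustration.

```python
def centroid(points):
    """Arithmetic mean of a list of points."""
    count = len(points)
    dim = len(points[0])
    return [sum(p[k] for p in points) / count for k in range(dim)]

def move(c, target, coeff):
    """Return c + coeff * (target - c)."""
    return [ck + coeff * (tk - ck) for ck, tk in zip(c, target)]

ALPHA, GAMMA, RHO, SIGMA = 1.0, 2.0, 0.5, 0.5

# 2D simplex, assumed already ordered: best, second worst, worst.
x_l, x_s, x_h = [0.0, 0.0], [1.0, 0.0], [0.0, 1.0]

c = centroid([x_l, x_s])        # centroid of the n best vertices
x_r = move(c, x_h, -ALPHA)      # reflection: c + alpha * (c - x_h)
x_e = move(c, x_r, GAMMA)       # expansion: c + gamma * (x_r - c)
x_oc = move(c, x_r, RHO)        # outside contraction: c + rho * (x_r - c)
x_ic = move(c, x_h, RHO)        # inside contraction: c + rho * (x_h - c)
shrunk = [move(x_l, x, SIGMA) for x in (x_s, x_h)]  # shrink toward x_l

print(c, x_r, x_e, x_oc, x_ic, shrunk)
```

All candidate points lie on the line through the worst vertex and the centroid; only the shrink step moves more than one vertex.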

Standard Parameter Values and Geometric Effects

Table 1: Standard Parameters for Nelder-Mead Geometric Operations

| Operation | Parameter | Standard Value | Geometric Effect |

|---|---|---|---|

| Reflection | α (alpha) | 1.0 | Maintains simplex size while exploring new directions |

| Expansion | γ (gamma) | 2.0 | Elongates simplex along promising trajectories |

| Contraction | ρ (rho) | 0.5 | Reduces simplex size when approaching minima |

| Shrinkage | σ (sigma) | 0.5 | Collapses simplex around best vertex |

These parameters create a dynamic geometric behavior where the simplex adapts to the objective function's topology. The algorithm preferentially uses expansion to accelerate movement along favorable directions, while contraction and shrinkage provide mechanisms for refinement and recovery from poor regions [17] [1]. The standard parameter values shown in Table 1 have proven effective across diverse applications, though research has explored adaptive parameter schemes for improved performance [17].

Algorithmic Workflow and Decision Logic

The Nelder-Mead algorithm follows a precise workflow that determines which geometric operation to apply based on function evaluations. The decision logic creates an efficient heuristic that balances exploratory moves with refinement steps.

Step-by-Step Geometric Transformation Process

Initialization: Construct an initial simplex with n+1 vertices in n-dimensional space, typically by generating points around a starting guess [1]. Common approaches include creating a right-angled simplex aligned with coordinate axes or a regular simplex with equal edge lengths [1].

Ordering and Centroid Calculation: At each iteration, order the vertices by function value from best (x_l, f_l) to worst (x_h, f_h), then compute the centroid (c) of the n best vertices (excluding x_h) [11] [1].

Transformation Selection: The algorithm follows a decision tree to select the appropriate geometric operation based on the performance of the reflected point (x_r):

Diagram 1: Nelder-Mead transformation decision workflow

The decision logic illustrated in Diagram 1 ensures the algorithm efficiently explores promising regions while avoiding unproductive areas. The process continues until termination criteria are satisfied, typically based on simplex size or function value convergence [18] [1].
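
The selection rules can also be sketched as two small functions. This follows a commonly used formulation of the method; the function names and returned labels are hypothetical, and implementations differ in how they break ties.

```python
def first_decision(f_best, f_second_worst, f_worst, f_reflect):
    """Classify the reflected point against the ordered vertex values."""
    if f_reflect < f_best:
        return "try_expansion"
    if f_reflect < f_second_worst:
        return "accept_reflection"
    if f_reflect < f_worst:
        return "try_outside_contraction"
    return "try_inside_contraction"

def second_decision(first, f_reflect, f_worst, f_trial):
    """Resolve the follow-up test once the trial point has been evaluated."""
    if first == "try_expansion":
        return "accept_expansion" if f_trial < f_reflect else "accept_reflection"
    if first == "try_outside_contraction":
        return "accept_contraction" if f_trial <= f_reflect else "shrink"
    if first == "try_inside_contraction":
        return "accept_contraction" if f_trial < f_worst else "shrink"
    return first  # plain reflection needs no second test

# Vertex values 1 (best), 3 (second worst), 5 (worst); reflected value 4.
step = first_decision(1.0, 3.0, 5.0, 4.0)
print(step, second_decision(step, 4.0, 5.0, 4.5))
```

Splitting the logic in two mirrors the evaluation cost: the first decision needs only the reflected value, and the second needs at most one additional function evaluation before a shrink is considered.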

Quantitative Analysis of Simplex Transformations

The geometric operations of the Nelder-Mead algorithm can be quantitatively characterized by their effects on simplex volume, convergence rates, and computational requirements across different dimensional spaces.

Performance Characteristics Across Dimensions

Table 2: Performance Characteristics of Nelder-Mead by Problem Dimension

| Dimension | Simplex Vertices | Function Evals per Iteration | Typical Convergence Rate | Relative Efficiency |

|---|---|---|---|---|

| 2D | 3 | 1-2 | Fast | High |

| 5D | 6 | 1-2 | Moderate | Medium |

| 10D | 11 | 1-2 | Slow | Low |

| 20D+ | 21+ | 1-2 | Very Slow | Very Low |

The data in Table 2 reveal a key characteristic of the Nelder-Mead method: while it typically requires only one or two function evaluations per iteration regardless of dimension [1] (shrink steps, which re-evaluate n vertices, are the exception), its convergence rate deteriorates as dimensionality increases. This occurs because the probability of simplex improvement decreases in high-dimensional spaces, leading to more shrinkage steps and slower progress [17].

Computational Efficiency and Resource Requirements

The algorithm's efficiency stems from its minimal function evaluation requirements compared to derivative-based methods or other direct search approaches. In experimental mathematics and parameter estimation problems where function evaluations are computationally expensive, this characteristic makes Nelder-Mead particularly attractive [1]. The method has been shown to perform reasonably well on functions with noisy evaluations [19], though it may converge to non-stationary points on problems that could be solved more effectively by alternative methods [11].

Experimental Protocols and Implementation Guidelines

Proper implementation of the Nelder-Mead algorithm requires careful attention to initialization strategies, termination criteria, and parameter selection to ensure robust performance across diverse optimization landscapes.

Initial Simplex Construction Methodologies

The initial simplex significantly impacts algorithm performance, with two primary construction methods employed in practice:

Coordinate-Aligned Simplex: Creates a right-angled simplex where x_0 is the initial guess and the remaining vertices are generated using x_j = x_0 + h_j e_j for j = 1,...,n, where h_j is a step size in the direction of unit vector e_j [1]. This approach is simple to implement but may be sensitive to parameter scaling.

Regular Simplex: Constructs a simplex where all edges have equal length, providing uniform directional coverage [1]. This method is more rotationally invariant but requires more careful implementation.

Research indicates that a properly sized initial simplex should reflect the characteristic scale of the problem, with overly small simplices potentially leading to premature convergence to local minima [11].
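
Both construction methods can be sketched in a few lines. The regular-simplex formula below is one standard construction that places x_0 at a vertex; the function names and the `edge` parameter are assumptions made for illustration.

```python
import math

def right_angled_simplex(x0, steps):
    """Vertices x0 and x0 + h_j * e_j for each coordinate j."""
    simplex = [list(x0)]
    for j, h in enumerate(steps):
        vertex = list(x0)
        vertex[j] += h
        simplex.append(vertex)
    return simplex

def regular_simplex(x0, edge):
    """Regular simplex with all edges of length `edge`, with x0 as one vertex."""
    n = len(x0)
    root = math.sqrt(n + 1.0)
    p = edge * (root + n - 1.0) / (n * math.sqrt(2.0))
    q = edge * (root - 1.0) / (n * math.sqrt(2.0))
    simplex = [list(x0)]
    for j in range(n):
        vertex = [xk + q for xk in x0]   # offset q in every coordinate...
        vertex[j] = x0[j] + p            # ...except coordinate j, which gets p
        simplex.append(vertex)
    return simplex

aligned = right_angled_simplex([1.0, 2.0], [0.5, 0.25])
regular = regular_simplex([0.0, 0.0, 0.0], 2.0)
print(aligned)
print(regular)
```

Choosing `steps` (or `edge`) in proportion to the expected scale of each parameter is one way to follow the sizing advice above.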

Termination Criteria and Convergence Detection

Robust implementations employ multiple termination tests to balance solution quality with computational efficiency:

Simplex Size Criterion: Termination occurs when the simplex becomes sufficiently small, typically measured as the maximum distance between any vertex and the centroid [18]. This provides direct control over solution precision.

Function Value Convergence: The algorithm stops when function values at all vertices are sufficiently close, indicating proximity to a stationary point [4] [18].

Maximum Iteration Limit: A safeguard against excessive computation, particularly important for high-dimensional or pathological functions [19].

Practical implementations often combine these criteria, with the simplex size criterion generally proving most reliable for ensuring genuine convergence [18].
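
A combined termination check might look like the sketch below. The tolerance names and default values are hypothetical, and the simplex size is measured here from the centroid of all vertices, which is one of several reasonable readings of the criterion described above.

```python
import math

def simplex_size(vertices):
    """Maximum Euclidean distance from any vertex to the centroid of all vertices."""
    dim = len(vertices[0])
    center = [sum(v[k] for v in vertices) / len(vertices) for k in range(dim)]
    return max(math.dist(v, center) for v in vertices)

def should_stop(vertices, f_values, iteration,
                x_tol=1e-8, f_tol=1e-8, max_iter=1000):
    """Return the name of the first satisfied criterion, or None to continue."""
    if simplex_size(vertices) <= x_tol:
        return "simplex_size"
    if max(f_values) - min(f_values) <= f_tol:
        return "function_values"
    if iteration >= max_iter:
        return "max_iterations"
    return None

triangle = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
print(should_stop(triangle, [1.0, 2.0, 3.0], iteration=5))
print(should_stop(triangle, [1.0, 1.0, 1.0], iteration=5))
```

Returning the name of the triggering criterion makes it easy to log why a run ended, which helps distinguish genuine convergence from an exhausted iteration budget.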

Research Reagent Solutions for Algorithm Implementation

Table 3: Essential Computational Tools for Nelder-Mead Experimentation

| Research Reagent | Function/Purpose | Example Implementations |

|---|---|---|

| Optimization Framework | Provides algorithm infrastructure and utilities | SciPy Optimize (Python), MATLAB fminsearch |

| Numerical Computation Library | Handles matrix operations and function evaluations | NumPy (Python), Eigen (C++) |

| Visualization Toolkit | Enables simplex transformation monitoring | Matplotlib (Python), D3.js (JavaScript) |

| Benchmark Function Suite | Tests algorithm performance on standard problems | Rosenbrock, Sphere, Rastrigin functions |

| Automatic Differentiation | Verifies results against gradient-based methods | Autograd (Python), JAX |

The "research reagents" in Table 3 represent the essential software components required for implementing, testing, and validating the Nelder-Mead algorithm in research environments. These tools enable researchers to reproduce published results, conduct comparative studies, and extend the basic algorithm for specialized applications.

Applications in Scientific and Industrial Contexts

The geometric principles of the Nelder-Mead algorithm have found diverse applications across scientific and industrial domains, particularly where gradient information is unavailable, unreliable, or computationally prohibitive.

In chemical and pharmaceutical research, the algorithm is extensively used for parameter estimation in kinetic modeling and curve fitting [1]. Its ability to handle noisy experimental data makes it valuable for fitting dose-response curves and optimizing reaction conditions. In engineering design, the method assists in structural optimization and control system tuning where simulation-based objective functions may be non-differentiable or computationally expensive to evaluate [16].

The algorithm's simplicity and low memory requirements continue to make it attractive for embedded systems and specialized hardware implementations [20], while its derivative-free nature provides advantages for experimental mathematics where functions may have discontinuous regions or other pathologies that challenge gradient-based approaches [1].

The simplex geometry underlying the Nelder-Mead algorithm represents a powerful conceptual framework for derivative-free optimization. Through carefully designed geometric transformations (reflection, expansion, contraction, and shrinkage), the method efficiently navigates complex optimization landscapes without requiring gradient information. While the algorithm exhibits limitations in high-dimensional spaces and may converge to non-stationary points on certain problem classes [11], its simplicity, low computational overhead, and robustness to noise maintain its relevance across scientific and engineering disciplines. Future research continues to explore adaptive parameter strategies [17] and hybrid approaches that combine the local refinement capabilities of Nelder-Mead with complementary global search methods [16].

The Nelder-Mead simplex algorithm, developed in 1965, is a prominent direct search method for multidimensional unconstrained minimization without requiring derivatives [1]. Its popularity in fields like chemistry, medicine, and drug development stems from its simplicity and applicability to problems with non-smooth functions or noisy evaluations [1]. The algorithm's operation revolves around the dynamic transformation of a simplex—a geometric figure defined by n+1 vertices in n-dimensional space—guided by repeated evaluations of an objective function. This guide provides an in-depth technical examination of three core components: the vertices that form the simplex, the centroid used in transformation operations, and the critical role of objective function evaluation in directing the search process, framed within contemporary research on the method's capabilities and limitations.

Core Terminology and Mathematical Foundations

Simplex Vertices

In the Nelder-Mead algorithm, a simplex is a convex hull formed by n+1 vertices in an n-dimensional problem space [1]. For a two-dimensional problem, this simplex is a triangle; for three dimensions, it forms a tetrahedron [11]. Each vertex represents a candidate solution, and the algorithm maintains and updates these vertices iteratively.

During operation, vertices are ordered based on their objective function values:

- Best vertex (x₁): The vertex with the lowest function value

- Second-worst vertex (xₙ): The vertex with the second-highest function value

- Worst vertex (xₙ₊₁): The vertex with the highest function value [11] [1]

This ordering drives the transformation process, with the algorithm systematically attempting to replace the worst vertex with a better candidate through geometric operations.

Centroid

The centroid represents the center of the best side of the simplex, that is, the face opposite the worst vertex [1]. Computed as the arithmetic mean of all vertices excluding the worst point, it serves as a pivot for several transformation operations:

[ x_c = \frac{1}{n}\sum_{i=1}^{n} x_i ]

where x_c denotes the centroid, the vertices x₁, ..., xₙ are the n best points, and n is the dimensionality of the problem [1]. The centroid provides a promising search direction away from the worst-performing region of the simplex.

Objective Function Evaluation

The objective function f(x) is the function being minimized, accepting an n-dimensional vector as input and returning a scalar value [1]. The Nelder-Mead algorithm relies exclusively on function values at the simplex vertices—not gradient information—to guide the optimization [1] [19]. This characteristic makes it suitable for non-differentiable, discontinuous, or noisy functions where derivatives are unavailable or unreliable [1].

Table 1: Standard Nelder-Mead Parameters and Their Roles

| Parameter | Symbol | Standard Value | Operation Controlled | Effect on Search |

|---|---|---|---|---|

| Reflection | α | 1.0 | Reflection | Moves away from worst vertex |

| Expansion | γ | 2.0 | Expansion | Explores promising direction further |

| Contraction | ρ | 0.5 | Contraction | Shrinks simplex near suspected minimum |

| Shrinkage | σ | 0.5 | Shrinkage | Resizes entire simplex toward best point |

Algorithmic Workflow and Transformation Logic

The Nelder-Mead method progresses through an iterative sequence of operations that reshape and reposition the simplex based on objective function evaluations at its vertices. The following diagram illustrates the complete decision workflow and transformation logic.

Transformation Operations

Each transformation operation generates a new candidate point by manipulating the worst vertex relative to the centroid:

Reflection: Projects the worst vertex x_w (that is, xₙ₊₁) through the centroid using x_r = x_c + α(x_c - x_w) [11] [1]. This explores the opposite side of the simplex from the worst point.

Expansion: If reflection identifies a promising direction (f(x_r) < f(x₁)), expansion further extends this direction using x_e = x_c + γ(x_r - x_c) [11] [1]. This allows larger steps in high-improvement regions.

Contraction: When reflection offers limited improvement, contraction generates a more conservative candidate: x_oc = x_c + ρ(x_r - x_c) for an outside contraction, or x_ic = x_c + ρ(x_w - x_c) for an inside contraction.

Shrinkage: If contraction fails, the entire simplex shrinks toward the best vertex using x_i = x_1 + σ(x_i - x_1) for all vertices other than x_1 [11] [1]. This focuses the search around the most promising region.

Experimental Protocols and Implementation

Initialization Methodologies

Proper initialization significantly impacts Nelder-Mead performance, particularly for computationally expensive problems [21]. Research comparing initialization methods reveals that both the size and shape of the initial simplex affect optimization outcomes.

Table 2: Initial Simplex Generation Methods

| Method Name | Simplex Shape | Generation Approach | Applicability |

|---|---|---|---|

| Pfeffer's Method | Mixed (Mostly Standard) | Combines standard basis with diagonal perturbations | General purpose |

| Nash's Method | Standard | Vertices correspond to standard basis vectors | Low-dimensional problems |

| Han's Method | Regular | All edges have equal length | Well-scaled problems |

| Varadhan's Method | Regular | Maintains equal edge lengths | Consistent search space |

| Std Basis Method | Standard | Uses standard basis vectors | Coordinate-aligned problems |

Empirical studies recommend normalizing the search space to a unit hypercube and generating a regular-shaped simplex that is as large as possible for limited-evaluation-budget scenarios [21].
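
Normalizing a box-bounded search space to the unit hypercube is a simple affine change of variables. The sketch below assumes finite lower and upper bounds for every parameter; the function names and the example bounds are hypothetical.

```python
def to_unit(x, lower, upper):
    """Map a point from the original box to [0, 1]^n."""
    return [(xi - lo) / (hi - lo) for xi, lo, hi in zip(x, lower, upper)]

def from_unit(u, lower, upper):
    """Map a point from [0, 1]^n back to the original box."""
    return [lo + ui * (hi - lo) for ui, lo, hi in zip(u, lower, upper)]

def normalized_objective(f, lower, upper):
    """Wrap f so the optimizer works on [0, 1]^n instead of the original box."""
    def wrapped(u):
        return f(from_unit(u, lower, upper))
    return wrapped

# Two parameters with very different scales (illustrative bounds).
lower, upper = [0.0, 100.0], [4.0, 900.0]
u = to_unit([1.0, 300.0], lower, upper)
x = from_unit(u, lower, upper)
g = normalized_objective(lambda p: p[0] + p[1], lower, upper)
print(u, x, g(u))
```

With this wrapper, an initial simplex with equal edge lengths in the normalized space corresponds to steps proportional to each parameter's range in the original space.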

Termination Criteria

Robust termination detection is crucial for effective implementation. Common approaches include:

Function Value Convergence: Stop when the standard error of function values at vertices falls below a threshold [22].

Simplex Size Convergence: Stop when the simplex becomes sufficiently small [18].

Evaluation Budget: Stop when exceeding a maximum function evaluation count [19].

Research indicates that criteria based on simplex size or function value variation are more reliable than those based solely on improvement rates, which can be fooled by periods of simplex reshaping without significant function improvement [18].

Constraint Handling Methods

The original Nelder-Mead algorithm was designed for unconstrained problems, but real-world applications often require boundary handling:

Table 3: Box Constraint Handling Methods

| Method | Approach | Advantages | Limitations |

|---|---|---|---|

| Extreme Barrier | Assign +∞ to infeasible points | Simple implementation | May reject promising search directions |

| Projection | Map infeasible points to boundary | Maintains feasibility | Creates flat regions on boundaries |

| Reflection | Reflect infeasible points into domain | Preserves search direction | May cause oscillatory behavior |

| Wrapping | Wrap infeasible points to opposite bound | Continuous parameter exploration | Discontinuous objective function |

Studies show that initialization with a normalized search space to a unit hypercube performs well regardless of the constraint handling method employed [21].
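
The four strategies in Table 3 can each be expressed in a few lines. The following is a minimal sketch for box constraints with finite bounds; the function names are hypothetical, and a real implementation would need to decide where in the iteration each repair is applied.

```python
import math

def extreme_barrier(f, lower, upper):
    """Wrap f so that infeasible points evaluate to +infinity."""
    def wrapped(x):
        feasible = all(lo <= xi <= hi for xi, lo, hi in zip(x, lower, upper))
        return f(x) if feasible else math.inf
    return wrapped

def project(x, lower, upper):
    """Clip each coordinate to the nearest bound."""
    return [min(max(xi, lo), hi) for xi, lo, hi in zip(x, lower, upper)]

def reflect(x, lower, upper):
    """Mirror each coordinate back into [lo, hi], repeating if necessary."""
    out = []
    for xi, lo, hi in zip(x, lower, upper):
        width = hi - lo
        t = (xi - lo) % (2.0 * width)   # position within one mirror period
        out.append(lo + (t if t <= width else 2.0 * width - t))
    return out

def wrap(x, lower, upper):
    """Wrap each coordinate around to the opposite bound (periodic domain)."""
    return [lo + (xi - lo) % (hi - lo) for xi, lo, hi in zip(x, lower, upper)]

lower, upper = [0.0, 0.0], [1.0, 1.0]
point = [1.25, -0.25]                   # infeasible in both coordinates
barrier = extreme_barrier(sum, lower, upper)
print(barrier(point), project(point, lower, upper),
      reflect(point, lower, upper), wrap(point, lower, upper))
```

The barrier changes the objective value while the other three change the point itself, which is why they interact differently with the simplex geometry.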

Research Reagent Solutions

The experimental implementation of the Nelder-Mead algorithm requires several computational components:

Table 4: Essential Research Reagents for Nelder-Mead Implementation

| Component | Function | Implementation Considerations |

|---|---|---|

| Objective Function Evaluator | Computes f(x) for candidate solutions | Handles noisy, expensive, or discontinuous functions |

| Simplex Initializer | Generates initial n+1 vertices | Controls initial size and shape; critical for performance |

| Vertex Ordering Module | Sorts vertices by function value | Manages tie-breaking consistently |

| Centroid Calculator | Computes center of best n vertices | Excludes worst vertex from calculation |

| Transformation Operator | Applies reflection, expansion, contraction | Implements adaptive or fixed parameters |

| Termination Checker | Evaluates stopping conditions | Combines multiple criteria for robustness |

| Constraint Handler | Manages boundary violations | Projects, reflects, or penalizes infeasible points |

Advanced Research and Hybrid Approaches

Recent research has addressed the Nelder-Mead method's limitations through hybridization with other algorithms. The Genetic Algorithm and Nelder-Mead Algorithm (GANMA) combines GA's global exploration with NM's local refinement, demonstrating improved performance across benchmark functions and parameter estimation tasks [8]. Similar hybrid approaches include:

- JAYA-NM: Integrates JAYA algorithm's population-based search with Nelder-Mead local optimization [16]

- PSO-NM: Combines Particle Swarm Optimization with Nelder-Mead for power system state estimation [16]

Contemporary convergence analysis reveals that the Nelder-Mead method exhibits complex behavior, including:

- Convergence of function values without simplex convergence [6]

- Convergence to non-stationary points [6]

- Dependence on both initial simplex and parameter choices [6] [21]

These findings underscore the importance of proper initialization and termination criteria for effective application in research and industrial contexts, particularly in drug development where objective function evaluations may be computationally expensive.

Implementing the Algorithm: A Step-by-Step Guide and Real-World Biomedical Applications

Within the extensive domain of optimization algorithms, the Nelder-Mead simplex method stands as a classic and enduring technique for minimizing objective functions without relying on gradient information. Its longevity, since its publication in 1965, is a testament to its conceptual elegance and practical utility [4]. This whitepaper delves into the core mechanics of the Nelder-Mead algorithm, dissecting the fundamental iterative cycle of ordering, centroid calculation, and transformation that underpins its search strategy. For researchers, scientists, and drug development professionals, understanding this cycle is paramount, as the algorithm sees application in complex, real-world parameter estimation problems, from calibrating models in pharmacokinetics to optimizing processes in bioinformatics [8]. The algorithm's heuristic nature, which mimics a structured trial-and-error process, allows it to navigate complex parameter spaces where derivatives are unavailable or unreliable, making it a valuable tool in the computational scientist's toolkit.

The Core Components of the Iterative Cycle

The Nelder-Mead method operates on a geometric construct known as a simplex. For a function of ( n ) parameters, the simplex is comprised of ( n+1 ) points in ( \mathbb{R}^n ) [23]. Each iteration of the algorithm is a systematic procedure to improve the worst point of this simplex by transforming its position relative to the others. The core of this procedure can be broken down into three critical and sequential stages: ordering, centroid calculation, and transformation.

Ordering: Establishing a Hierarchy

The first step in the iterative cycle is to order the vertices of the simplex based on their objective function values. Given a simplex with points ( x_i ), the algorithm evaluates ( f(x_i) ) for each point and sorts them so that: [ f(x_1) \le f(x_2) \le \cdots \le f(x_{n+1}) ] This ordering establishes a clear hierarchy [23]. The point ( x_1 ) becomes the best vertex (lowest function value), ( x_{n+1} ) the worst vertex (highest function value), and ( x_n ) the second-worst vertex. This classification is crucial as it determines which point will be targeted for replacement in the current iteration and provides the reference points for deciding the type of transformation to attempt.

Centroid Calculation: Finding a Pivot

After ordering, the algorithm calculates the centroid, which acts as a pivot point for the subsequent transformations. The centroid, denoted ( \bar{x} ), is the average position of all vertices excluding the worst point ( x_{n+1} ) [23]. Mathematically, it is defined as: [ \bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i ] This centroid represents the center of gravity of the face of the simplex opposite the worst vertex. It is the foundation upon which all potential new points are generated, as the algorithm essentially "reflects" the worst point across this centroid to explore potentially better regions of the parameter space. The centroid calculation effectively captures the collective information of the best ( n ) points, guiding the search away from the worst region.
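
The ordering and centroid stages translate directly into code. In the sketch below, the objective function, the vertex coordinates, and the helper names are chosen only for illustration.

```python
def order_simplex(vertices, f):
    """Sort vertices from best (lowest f) to worst (highest f)."""
    return sorted(vertices, key=f)

def centroid_excluding_worst(ordered):
    """Mean of the first n vertices of an ordered (n+1)-vertex simplex."""
    best_n = ordered[:-1]
    dim = len(best_n[0])
    return [sum(v[k] for v in best_n) / len(best_n) for k in range(dim)]

def sphere(x):
    """Simple test objective: sum of squares."""
    return sum(xi * xi for xi in x)

ordered = order_simplex([[3.0, 0.0], [1.0, 1.0], [0.0, 1.0]], sphere)
x_bar = centroid_excluding_worst(ordered)
print(ordered, x_bar)
```

A production implementation would cache the function values rather than re-evaluating `f` inside the sort, since each evaluation may be expensive.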

Transformation: Exploring New Points

The final and most complex stage is transformation, where the algorithm generates a new candidate point to replace the worst point, ( x_{n+1} ). The choice of transformation is governed by a set of rules that compare the function value of candidate points against the existing hierarchy. The primary sequence of operations is as follows and is detailed in the workflow diagram (See Figure 1):

- Reflection: The algorithm first computes the reflected point, ( x_r ), defined as ( \bar{x} + \alpha(\bar{x} - x_{n+1}) ), where ( \alpha ) is the reflection coefficient (typically ( \alpha=1 )) [23] [4]. This point mirrors the worst point across the centroid. If the reflected point is at least as good as the second-worst point but not better than the best (( f(x_1) \le f(x_r) < f(x_n) )), it replaces the worst point and the iteration ends.

- Expansion: If the reflected point is better than the best point (( f(x_r) < f(x_1) )), it indicates a promising direction. The algorithm then computes an expanded point, ( x_e = \bar{x} + \gamma(x_r - \bar{x}) ), where ( \gamma ) is the expansion coefficient (typically ( \gamma=2 )) [4]. If ( x_e ) is better than ( x_r ), it replaces the worst point; otherwise, ( x_r ) is used.

- Contraction: If the reflected point is no better than the second-worst point but still better than the worst (( f(x_n) \le f(x_r) < f(x_{n+1}) )), an outside contraction is attempted. If the reflected point is no better than the worst point (( f(x_r) \ge f(x_{n+1}) )), an inside contraction is attempted. For ( \alpha=1 ), the contracted points are calculated as ( \bar{x} \pm \rho(\bar{x} - x_{n+1}) ), with the plus sign for the outside contraction and the minus sign for the inside contraction, where ( \rho ) is the contraction coefficient (typically ( \rho=0.5 )) [4].

- Shrinkage: If the contraction step fails to produce a better point, the entire simplex is shrunk towards the best point, ( x_1 ). Each other point ( x_i ) in the simplex is replaced by ( x_1 + \sigma(x_i - x_1) ), where ( \sigma ) is the shrinkage coefficient (typically ( \sigma=0.5 )) [4].

Figure 1: The Nelder-Mead simplex transformation workflow illustrates the decision logic for reflection, expansion, contraction, and shrinkage.

The following table summarizes the key parameters and operations involved in the transformation phase.

Table 1: Summary of Nelder-Mead Transformation Operations

| Operation | Mathematical Expression | Typical Coefficient Value | Purpose |
|---|---|---|---|
| Reflection | \( x_r = \bar{x} + \alpha(\bar{x} - x_{n+1}) \) | \( \alpha = 1.0 \) [4] | Explore the region opposite the worst point. |
| Expansion | \( x_e = \bar{x} + \gamma(x_r - \bar{x}) \) | \( \gamma = 2.0 \) [4] | Extend further in a promising direction. |
| Contraction (Outside) | \( x_c = \bar{x} + \rho(x_r - \bar{x}) \) | \( \rho = 0.5 \) [4] | Make a conservative move towards a good reflected point. |
| Contraction (Inside) | \( x_c = \bar{x} + \rho(x_{n+1} - \bar{x}) \) | \( \rho = 0.5 \) [4] | Move away from a poor reflected point. |
| Shrinkage | \( x_i^{new} = x_1 + \sigma(x_i - x_1) \) | \( \sigma = 0.5 \) [4] | Refocus the search around the best point when other moves fail. |
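As a minimal sketch of how the four operations fit together, the following pure-Python function performs a single transformation step with the typical coefficients. The function name `nelder_mead_step`, the list-of-lists representation of the simplex, and the strict `<` acceptance tests are illustrative assumptions, not the interface of any particular library.

```python
def nelder_mead_step(f, simplex, alpha=1.0, gamma=2.0, rho=0.5, sigma=0.5):
    """Apply one Nelder-Mead transformation; return (new_simplex, operation_name)."""
    # Order the vertices from best (lowest f) to worst (highest f).
    simplex = sorted(simplex, key=f)
    best, second_worst, worst = simplex[0], simplex[-2], simplex[-1]
    n = len(simplex) - 1

    # Centroid of all vertices except the worst one.
    centroid = [sum(v[j] for v in simplex[:-1]) / n for j in range(n)]

    # Reflection: mirror the worst point across the centroid.
    reflected = [c + alpha * (c - w) for c, w in zip(centroid, worst)]
    f_r = f(reflected)

    if f_r < f(best):
        # Expansion: push further along the same direction.
        expanded = [c + gamma * (r - c) for c, r in zip(centroid, reflected)]
        if f(expanded) < f_r:
            return simplex[:-1] + [expanded], "expansion"
        return simplex[:-1] + [reflected], "reflection"

    if f_r < f(second_worst):
        return simplex[:-1] + [reflected], "reflection"

    if f_r < f(worst):
        # Outside contraction: between the centroid and the reflected point.
        contracted = [c + rho * (r - c) for c, r in zip(centroid, reflected)]
        if f(contracted) < f_r:
            return simplex[:-1] + [contracted], "outside contraction"
    else:
        # Inside contraction: between the centroid and the worst point.
        contracted = [c + rho * (w - c) for c, w in zip(centroid, worst)]
        if f(contracted) < f(worst):
            return simplex[:-1] + [contracted], "inside contraction"

    # Shrinkage: pull every vertex towards the best one.
    shrunk = [best] + [[b + sigma * (x - b) for b, x in zip(best, v)]
                       for v in simplex[1:]]
    return shrunk, "shrink"
```

Calling the function repeatedly on \( f(x, y) = x^2 + y^2 \), starting for example from `[[1.0, 1.0], [2.0, 1.0], [1.0, 2.0]]`, moves the simplex towards the origin; the first step from that simplex is a plain reflection of the worst vertex to `[2.0, 0.0]`. The sketch re-evaluates `f` more often than necessary, so a practical implementation would cache function values.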

Experimental Protocols and Evaluation

To empirically validate the Nelder-Mead algorithm's performance, researchers typically follow a standard protocol involving benchmark functions and careful termination criteria.

Algorithm Initialization

The algorithm requires an initial simplex. A common initialization routine, used in MATLAB's fminsearch, starts from a user-provided point \( x_0 \). The remaining \( n \) vertices are set to \( x_0 + \tau_i e_i \), where \( e_i \) is the unit vector in the \( i \)-th coordinate and:

\[
\tau_i = \begin{cases} 0.05 & \text{if } (x_0)_i \neq 0, \\ 0.00025 & \text{if } (x_0)_i = 0. \end{cases}
\]

This scaling ensures that the initial simplex is appropriately sized relative to the starting point [23].
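A small sketch of this rule, written directly from the formula as stated above, might look as follows. The function name `initial_simplex` and its keyword arguments are hypothetical, and the code is not taken from MATLAB's source.

```python
def initial_simplex(x0, nonzero_step=0.05, zero_step=0.00025):
    """Return n+1 vertices: x0 plus one axis-aligned perturbation per coordinate."""
    simplex = [list(x0)]
    for i, value in enumerate(x0):
        vertex = list(x0)
        # tau_i depends on whether the i-th coordinate of x0 is zero.
        tau = nonzero_step if value != 0 else zero_step
        vertex[i] += tau  # x0 + tau_i * e_i
        simplex.append(vertex)
    return simplex


# Example: a two-parameter problem where the second starting value is zero.
vertices = initial_simplex([1.0, 0.0])
```

For the starting point `[1.0, 0.0]` this yields three vertices: the starting point itself, one vertex displaced by 0.05 along the first axis, and one displaced by 0.00025 along the second.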

Termination Criteria

Determining when to halt the iterative cycle is critical. Nelder and Mead originally recommended stopping when the standard deviation of the function values at the simplex vertices falls below a predefined tolerance [23]. A common practical termination criterion, as implemented in fminsearch, is to stop when both of the following conditions are met:

\[
\max_{2 \le i \le n+1} |f_i - f_1| \le \text{TolFun} \quad \text{and} \quad \max_{2 \le i \le n+1} \| x_i - x_1 \|_\infty \le \text{TolX}
\]

where TolFun is the function value tolerance and TolX is the parameter value tolerance. The algorithm also typically includes a maximum iteration or function evaluation count as a safeguard [23].
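The two-part stopping test can be sketched in a few lines. The helper name `has_converged` and the default tolerance values are assumptions chosen for illustration; the simplex and its function values are expected to be ordered best-first.

```python
def has_converged(simplex, f_values, tol_fun=1e-4, tol_x=1e-4):
    """Check the combined function-value and parameter-value stopping test.

    simplex  : list of vertices, best vertex first
    f_values : objective values in the same order
    """
    best_x, best_f = simplex[0], f_values[0]
    # Largest gap in function value between the best vertex and any other.
    f_spread = max(abs(fv - best_f) for fv in f_values[1:])
    # Largest infinity-norm distance between the best vertex and any other.
    x_spread = max(max(abs(a - b) for a, b in zip(v, best_x))
                   for v in simplex[1:])
    return f_spread <= tol_fun and x_spread <= tol_x
```

In a full loop this check would typically be combined with a cap on iterations or function evaluations, so that the run still terminates if the tolerances are never reached.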

The Scientist's Toolkit: Research Reagent Solutions

Implementing and applying the Nelder-Mead algorithm requires a set of computational "reagents." The following table outlines essential components for a typical experimental investigation of the algorithm.

Table 2: Essential Computational Reagents for Nelder-Mead Experimentation

| Tool/Component | Function | Example Implementation/Note |
|---|---|---|
| Benchmark Test Functions | To evaluate algorithm performance, robustness, and convergence speed. | 2D Quadratic (\( f(x,y)=x^2+y^2 \)), Rosenbrock function, and multimodal test functions [23]. |
| Numerical Optimization Library | Provides robust, pre-written implementations of optimization algorithms. | Libraries in MATLAB, Python (SciPy), and R offer Nelder-Mead routines for direct application. |
| Initialization Routine | Generates a valid initial simplex from a single starting point. | The Gao and Han (2012) method, which handles parameters of zero value robustly [23]. |
| Termination Condition Checker | Automatically evaluates stopping criteria to end the iterative cycle. | A function that checks the standard deviation of values or the maximum difference against TolFun and TolX [23] [4]. |
| Visualization Framework | To plot the simplex's movement across iterations and visualize convergence. | Essential for debugging and educational purposes, especially for 2D problems [4]. |

Advanced Applications and Hybridization

The core iterative cycle of Nelder-Mead proves powerful not only as a standalone method but also as a component in more advanced hybrid optimization strategies. The primary strength of Nelder-Mead is local refinement, but it can be limited in global exploration and scalability. Conversely, population-based metaheuristic algorithms excel at global exploration but may converge slowly. This complementary relationship has led to the development of powerful hybrids.

One prominent example is the Genetic and Nelder-Mead Algorithm (GANMA), which integrates the global search capabilities of Genetic Algorithms (GA) with the local refinement strength of Nelder-Mead. In this hybrid, GA first broadly explores the parameter space. Then, the Nelder-Mead method is applied to refine the best solutions found by GA, fine-tuning them to high precision. This synergy enhances performance in terms of robustness, convergence speed, and solution quality across various benchmark functions and real-world parameter estimation tasks [8].

Another innovative hybrid is the Nelder-Mead Particle Swarm Optimization (NM-PSO) algorithm. In this model, the PSO algorithm performs a global search. Once PSO identifies a promising region, the Nelder-Mead method is employed to perform a precise local search, accurately determining the optimal solution. This combination helps prevent PSO from premature convergence and enhances the likelihood of discovering the global optimum, making the hybrid more stable and effective for complex, multi-peak problems [14].

Figure 2: The hybrid optimization strategy combines global and local search algorithms.

These hybrid approaches are particularly valuable in demanding fields. In engineering, they help optimize complex designs with stringent constraints. In finance, they improve models for portfolio management and risk assessment. In the life sciences, including drug development and bioinformatics, they are used for critical parameter estimation tasks, where accurately calibrating models to experimental data is essential [8]. The Nelder-Mead iterative cycle thus serves as a fundamental and reliable component in modern computational optimization.
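The two-stage structure of such hybrids can be illustrated with a deliberately simplified sketch. Here plain uniform random sampling stands in for the global stage (it is not a Genetic Algorithm or PSO), and a compact Nelder-Mead loop performs the local refinement. All names (`nelder_mead`, `hybrid_minimize`, `n_samples`, `seed`) and the 5% initial step are hypothetical choices made for this example.

```python
import random


def nelder_mead(f, simplex, iterations=200):
    """Compact Nelder-Mead loop using the standard coefficients 1, 2, 0.5, 0.5."""
    for _ in range(iterations):
        simplex.sort(key=f)
        best, worst = simplex[0], simplex[-1]
        n = len(best)
        c = [sum(v[j] for v in simplex[:-1]) / n for j in range(n)]

        def point(t):
            # c + t * (c - worst): t=1 reflects, t=2 expands, t=+/-0.5 contracts.
            return [ci + t * (ci - wi) for ci, wi in zip(c, worst)]

        xr = point(1.0)
        if f(xr) < f(best):
            xe = point(2.0)
            simplex[-1] = xe if f(xe) < f(xr) else xr
        elif f(xr) < f(simplex[-2]):
            simplex[-1] = xr
        else:
            xc = point(0.5) if f(xr) < f(worst) else point(-0.5)
            if f(xc) < min(f(xr), f(worst)):
                simplex[-1] = xc
            else:
                # Shrink every vertex halfway towards the best one.
                simplex[:] = [best] + [[b + 0.5 * (x - b) for b, x in zip(best, v)]
                                       for v in simplex[1:]]
    return min(simplex, key=f)


def hybrid_minimize(f, bounds, n_samples=200, seed=0):
    """Global random sampling followed by local Nelder-Mead refinement."""
    rng = random.Random(seed)
    # Global stage (stand-in for GA/PSO): sample the box and keep the best point.
    samples = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_samples)]
    start = min(samples, key=f)
    # Local stage: build a small simplex around the best sample and refine it.
    simplex = [list(start)]
    for i, (lo, hi) in enumerate(bounds):
        vertex = list(start)
        vertex[i] += 0.05 * (hi - lo)
        simplex.append(vertex)
    return nelder_mead(f, simplex)
```

In a real hybrid the sampling stage would be replaced by the chosen metaheuristic, and several of its best candidates might each be refined. Note that this local stage does not enforce the bounds, so the refined point can leave the sampled box.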

The Nelder-Mead simplex algorithm, introduced in 1965 by John Nelder and Roger Mead, is a prominent direct search method for multidimensional optimization problems where derivatives are unavailable or unreliable [11] [6]. This heuristic search technique is particularly valuable in scientific fields, including drug development, for calibrating models or minimizing cost functions associated with experimental data. The algorithm operates by evolving a simplex—a geometric figure of n+1 vertices in n dimensions—through a series of geometric transformations. These core operations are Reflection, Expansion, Contraction, and Shrinkage [11] [12]. Together, they enable the simplex to navigate the objective function's landscape, moving towards minima by reflecting away from poor regions, expanding along promising directions, contracting to refine the search, and shrinking to escape non-productive areas.

Mathematical Foundation of the Simplex Operations

The algorithm maintains a simplex of n+1 points for an n-dimensional optimization problem. At each iteration, the vertices are ordered based on their objective function values, \( f(x_1) \leq f(x_2) \leq \cdots \leq f(x_{n+1}) \), identifying the best point (\(x_1\)), the worst point (\(x_{n+1}\)), and the second-worst point (\(x_n\)) [11]. The centroid, \(x_o\), of the best n points (excluding the worst vertex, \(x_{n+1}\)) is central to all operations [11]. It is computed as \(x_o = \frac{1}{n}\sum_{i=1}^{n} x_i\) [16].
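The ordering and centroid computation can be sketched as follows; the helper name `order_and_centroid` is hypothetical and vertices are represented as plain Python lists.

```python
def order_and_centroid(f, simplex):
    """Sort vertices best-first and return (ordered_simplex, centroid)."""
    ordered = sorted(simplex, key=f)
    n = len(ordered) - 1
    # Average the n best vertices; the worst vertex (last) is excluded.
    centroid = [sum(v[j] for v in ordered[:n]) / n for j in range(n)]
    return ordered, centroid


def sphere(p):
    return p[0] ** 2 + p[1] ** 2


ordered, x_o = order_and_centroid(sphere, [[2.0, 2.0], [0.0, 0.0], [1.0, 0.0]])
# ordered is [[0.0, 0.0], [1.0, 0.0], [2.0, 2.0]] and x_o is [0.5, 0.0]
```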

All subsequent operations are defined relative to this centroid and the worst point. The core transformations use a standard line search formula, \(x(\alpha) = (1+\alpha)x_o - \alpha x_{n+1}\), where different values of the coefficient \(\alpha\) define different operations [16] [6]. The standard coefficients for these operations are summarized in the table below.

Table 1: Standard Coefficients and Formulae for Simplex Operations

| Operation | Coefficient | Mathematical Formula | Standard Coefficient Value |
|---|---|---|---|
| Reflection | \(\alpha_R\) | \(x_r = x_o + \alpha_R (x_o - x_{n+1})\) | \(\alpha_R = 1\) [11] |
| Expansion | \(\alpha_E\) | \(x_e = x_o + \alpha_E (x_r - x_o)\) | \(\alpha_E = 2\) [11] |
| Contraction (Inside) | \(\alpha_C\) | \(x_c = x_o + \alpha_C (x_{n+1} - x_o)\) | \(\alpha_C = 0.5\) [11] |
| Contraction (Outside) | \(\alpha_C\) | \(x_c = x_o + \alpha_C (x_r - x_o)\) | \(\alpha_C = 0.5\) [11] |
| Shrinkage | \(\sigma\) | \(x_i = x_1 + \sigma (x_i - x_1)\) for all \(i \neq 1\) | \(\sigma = 0.5\) [11] |

These coefficients are heuristic but have become the de facto standard due to their robust performance across various problems [11]. The contraction operation has two variants: "outside contraction" is performed when the reflected point is better than the worst point but no better than the second-worst, and "inside contraction" is performed when the reflected point is no better than the worst point [11] [4].
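As a short worked check, substituting the standard coefficients into the line search formula \(x(\alpha) = (1+\alpha)x_o - \alpha x_{n+1}\) recovers each move. With a reflection coefficient of 1, \(x_r - x_o = x_o - x_{n+1}\), so the expansion and outside-contraction formulas can be written in terms of \(x_o\) and \(x_{n+1}\) alone:

```latex
\begin{aligned}
x(1) &= 2x_o - x_{n+1} = x_r && \text{(reflection)} \\
x(2) &= 3x_o - 2x_{n+1} = x_o + 2(x_r - x_o) = x_e && \text{(expansion)} \\
x(\tfrac{1}{2}) &= \tfrac{3}{2}x_o - \tfrac{1}{2}x_{n+1} = x_o + \tfrac{1}{2}(x_r - x_o) = x_c && \text{(outside contraction)} \\
x(-\tfrac{1}{2}) &= \tfrac{1}{2}x_o + \tfrac{1}{2}x_{n+1} = x_o + \tfrac{1}{2}(x_{n+1} - x_o) = x_c && \text{(inside contraction)}
\end{aligned}
```

Shrinkage is the only operation that does not fit this one-parameter family, because it moves every vertex except the best one rather than replacing only the worst.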

Detailed Breakdown of Core Operations

Reflection

Reflection is the default operation for moving the simplex. It projects the worst vertex through the centroid of the opposing face, maintaining the simplex's volume and exploring the landscape in a direction opposite to the worst point [11] [12].

- Purpose: To move away from a region of high function value.

- Mechanism: The worst vertex, \(x_{n+1}\), is reflected to a new point, \(x_r\), using the formula \(x_r = x_o + (x_o - x_{n+1})\) [11]. Graphically, this flips the worst point across the centroid to the opposite side of the simplex [12].

- Decision Criteria: Reflection is accepted if the reflected point is better than the second-worst point but not better than the best point (\(f(x_1) \leq f(x_r) < f(x_n)\)). This indicates a useful improvement that does not beat the best point found so far [11].

Expansion

If reflection discovers a significantly better point, expansion pushes further in that direction to accelerate improvement [12].

- Purpose: To exploit a promising search direction rapidly.

- Mechanism: If the reflected point, \(x_r\), is better than the best point (\(f(x_r) < f(x_1)\)), an expansion point, \(x_e\), is computed as \(x_e = x_o + 2(x_r - x_o)\) [11]. This point is located further along the line from the centroid through the reflected point.

- Decision Criteria: The expansion point, \(x_e\), is accepted if it is better than the reflected point (\(f(x_e) < f(x_r)\)). Otherwise, the reflected point is accepted [11]. Expansion effectively elongates the simplex downhill.
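A small numerical illustration of reflection followed by expansion is given below. The objective \(f(x, y) = x^2 + y^2\) and the starting triangle are arbitrary choices made for this example, and the variable names are illustrative.

```python
def objective(p):
    return p[0] ** 2 + p[1] ** 2


# Vertices already ordered best-first: f = 200, 221, 288.
simplex = [[10.0, 10.0], [11.0, 10.0], [12.0, 12.0]]
x_worst = simplex[-1]

# Centroid of the two best vertices.
x_o = [(a + b) / 2 for a, b in zip(simplex[0], simplex[1])]

# Reflection: x_r = x_o + (x_o - x_worst).
x_r = [c + (c - w) for c, w in zip(x_o, x_worst)]

# f(x_r) = 145 beats the best value of 200, so expansion is tried:
# x_e = x_o + 2 * (x_r - x_o).
x_e = [c + 2 * (r - c) for c, r in zip(x_o, x_r)]

# Keep the expanded point only if it improves on the reflected one.
accepted = x_e if objective(x_e) < objective(x_r) else x_r
```

Here the centroid is `[10.5, 10.0]`, the reflected point is `[9.0, 8.0]`, and the expanded point is `[7.5, 6.0]` with a function value of 92.25, so the expanded point replaces the worst vertex.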

Contraction

When reflection does not yield a sufficient improvement, contraction moves the worst point closer to the centroid, reducing the simplex size to home in on a potential minimum [11] [4].

- Purpose: To refine the search area when progress is limited.

- Mechanism and Variants:

  - Outside Contraction: If the reflected point is better than the worst point but not better than the second-worst (\(f(x_n) \leq f(x_r) < f(x_{n+1})\)), the algorithm computes \(x_c = x_o + 0.5(x_r - x_o)\). If \(x_c\) is better than \(x_r\), it replaces the worst point [11].

  - Inside Contraction: If the reflected point is worse than or equal to the worst point (\(f(x_r) \geq f(x_{n+1})\)), the algorithm computes \(x_c = x_o - 0.5(x_o - x_{n+1})\). If \(x_c\) is better than the worst point, it replaces it [11] [4].

- Outcome: Contraction typically results in a smaller simplex, focusing the search on a more localized region.
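The two contraction variants can be sketched in one helper. The function name `contract` and the convention of returning `None` when the contracted point is rejected are assumptions made for this illustration; the caller is expected to have already ruled out expansion and plain reflection.

```python
def contract(f, x_o, x_worst, x_r, rho=0.5):
    """Return the accepted contraction point, or None if contraction fails."""
    f_r, f_worst = f(x_r), f(x_worst)
    if f_r < f_worst:
        # Outside contraction: part of the way from the centroid to x_r.
        x_c = [c + rho * (r - c) for c, r in zip(x_o, x_r)]
        return x_c if f(x_c) < f_r else None
    # Inside contraction: part of the way from the centroid back to x_worst.
    x_c = [c - rho * (c - w) for c, w in zip(x_o, x_worst)]
    return x_c if f(x_c) < f_worst else None


def sphere(p):
    return p[0] ** 2 + p[1] ** 2


# Reflected point (f = 4) is better than the worst (f = 5): outside variant.
outside = contract(sphere, [1.5, 1.0], [1.0, 2.0], [2.0, 0.0])
# Reflected point (f = 2.42) is worse than the worst (f = 2): inside variant.
inside = contract(sphere, [0.05, 0.05], [-1.0, -1.0], [1.1, 1.1])
```

In the first call the contracted point is `[1.75, 0.5]`; in the second it lies halfway between the centroid and the worst vertex, at approximately `[-0.475, -0.475]`. A `None` result would signal that the algorithm should fall back to shrinkage.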

Shrinkage

Shrinkage is a global rescue operation used when contraction fails. It preserves only the best vertex and shrinks the entire simplex towards it [11].

- Purpose: To reset the search landscape and avoid getting stuck in non-minimizing configurations, such as when the simplex is trying to "pass through the eye of a needle" [11] [12].

- Mechanism: Every vertex except the best one, \(x_1\), is moved halfway towards it. For all \(i = 2, \ldots, n+1\), the new vertex is \(x_i^{new} = x_1 + 0.5(x_i - x_1)\) [11].

- Decision Criteria: Shrinkage is performed when the contraction point (\(x_c\)) is rejected, that is, when the outside contraction point is not better than the reflected point or the inside contraction point is not better than the current worst point [11] [4]. This radical transformation can help the algorithm escape stagnation but reduces the simplex size, potentially requiring more iterations to resume progress.
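The shrink step itself is short. A minimal sketch, assuming the simplex is a best-first list of coordinate lists and using the hypothetical name `shrink`:

```python
def shrink(simplex, sigma=0.5):
    """Move every vertex except the best one towards the best vertex."""
    best = simplex[0]
    return [best] + [[b + sigma * (x - b) for b, x in zip(best, v)]
                     for v in simplex[1:]]


shrunk = shrink([[0.0, 0.0], [2.0, 0.0], [0.0, 4.0]])
# shrunk is [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]]
```

Because all n moved vertices are new points, each of them needs a fresh function evaluation before the next iteration can order the simplex.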

Workflow and Visualization of the Algorithm

The Nelder-Mead algorithm follows a deterministic workflow to select the appropriate operation at each iteration. The following diagram illustrates this decision-making process and the subsequent transformation of the simplex.

Diagram 1: Nelder-Mead algorithm's operational logic and simplex transformations.
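The branch selection described in the preceding sections can also be written as a small decision function. The name `select_operation` and the returned labels are illustrative; the function only classifies the reflected point and does not perform any move.

```python
def select_operation(f_best, f_second_worst, f_worst, f_reflected):
    """Decide which move to attempt after evaluating the reflected point."""
    if f_reflected < f_best:
        return "try expansion"            # reflected point is a new best
    if f_reflected < f_second_worst:
        return "accept reflection"        # useful, but not a new best
    if f_reflected < f_worst:
        return "try outside contraction"  # only better than the worst
    return "try inside contraction"       # no better than the worst


# Ordered function values at the best, second-worst, and worst vertices.
f_best, f_second_worst, f_worst = 1.0, 5.0, 9.0
choices = [select_operation(f_best, f_second_worst, f_worst, f_r)
           for f_r in (0.5, 3.0, 7.0, 9.0)]
```

If the attempted contraction is rejected, the algorithm falls through to shrinkage, which is why shrink does not appear as a direct outcome of this test.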

The algorithm's iterative nature can be visualized by tracking the movement of a simplex across a two-dimensional parameter space. The following diagram illustrates the path taken by a simplex as it navigates towards a minimum, employing the various operations.

Diagram 2: Simplex movement via reflection, expansion, and contraction toward a minimum.

Experimental Protocol and Research Implementation

For researchers aiming to implement or test the Nelder-Mead algorithm, a detailed protocol and a clear understanding of the computational toolkit are essential.

Detailed Experimental Protocol

A robust implementation of the Nelder-Mead algorithm for a scientific study, such as parameter estimation in pharmacokinetic modeling, should follow this structured protocol:

Problem Definition: Define the objective function, \(f(x)\), to be minimized. In drug development, this could be the sum of squared errors between experimental data and model predictions. Ensure the function is implemented efficiently, as it will be evaluated frequently [18] [4].

Algorithm Initialization: