Validating Analytical Methods for Drug Substance Assay: A 2025 Guide to ICH Q2(R2) Compliance and Advanced Strategies

This article provides a comprehensive guide for researchers and drug development professionals on validating analytical methods for drug substance assays, aligned with the latest 2025 regulatory and technological trends.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating analytical methods for drug substance assays, aligned with the latest 2025 regulatory and technological trends. It covers foundational principles from ICH Q2(R2) and Q14 guidelines, explores modern methodological approaches incorporating Quality-by-Design (QbD) and Artificial Intelligence (AI), offers troubleshooting strategies for common challenges like data integrity and complex modalities, and details comparative validation paradigms for biosimilars and advanced therapies. The content synthesizes current FDA guidance, technological innovations, and practical applications to ensure robust, compliant, and efficient analytical method lifecycle management.

Foundations of Analytical Validation: Understanding ICH Q2(R2), Q14, and the 2025 Regulatory Landscape

Analytical method validation provides documented evidence that a laboratory procedure is robust, reliable, and reproducible for its intended purpose, forming a critical pillar of quality assurance in pharmaceutical development [1]. This application note details the core principles and experimental protocols for validating methods used in drug substance quality control, aligning with modern International Council for Harmonisation (ICH) guidelines Q2(R2) and ICH Q14 [2]. We outline a systematic approach—from defining the Analytical Target Profile (ATP) to establishing a full validation protocol—ensuring methods consistently produce reliable results that confirm the identity, purity, potency, and safety of drug substances [3] [2].

The Regulatory Imperative and Analytical Lifecycle

Regulatory bodies like the U.S. Food and Drug Administration (FDA) mandate method validation to safeguard public health, requiring proof that analytical procedures are fit-for-purpose before approving new drugs [1] [2]. The ICH provides the harmonized technical guidelines that achieve global consistency, with ICH Q2(R2), "Validation of Analytical Procedures," serving as the primary reference [2].

Modern regulations emphasize an analytical procedure lifecycle model [2]. This model begins with proactive planning using an ATP, followed by method development, validation, and continuous monitoring in routine use. This represents a significant shift from a one-time "check-the-box" validation event to a science- and risk-based framework that builds quality into the method from the outset [2].

Core Validation Parameters for Drug Substance Assay

For a quantitative method like a drug substance assay, specific performance characteristics must be evaluated and documented. The table below summarizes these core parameters, their definitions, and typical experimental approaches [2].

Table 1: Core Validation Parameters for a Quantitative Drug Substance Assay

| Parameter | Definition | Experimental Protocol Summary |
|---|---|---|
| Accuracy | Closeness of test results to the true value [2]. | Analyze a minimum of 3 concentration levels (e.g., 80%, 100%, 120% of target), each in triplicate, using a drug substance standard of known purity. Calculate percent recovery. |
| Precision | Degree of scatter among a series of measurements [2]. | Repeatability: Analyze 6 independent preparations at 100% of test concentration. Intermediate Precision: Repeat the procedure on a different day, with a different analyst, or using different equipment. |
| Specificity | Ability to assess the analyte unequivocally in the presence of potential interferents [2]. | Analyze the drug substance alone and in the presence of impurities, degradation products (from forced degradation studies), and matrix components. Demonstrate peak purity and separation. |
| Linearity | Ability to obtain test results proportional to analyte concentration [2]. | Prepare and analyze a minimum of 5 concentration levels (e.g., 50-150% of target). Plot response vs. concentration and calculate correlation coefficient, slope, and y-intercept. |
| Range | The interval between upper and lower analyte concentrations for which linearity, accuracy, and precision are demonstrated [2]. | Established based on the linearity and accuracy data, typically encompassing the concentrations from the intended application (e.g., 80-120% for an assay). |
| LOD/LOQ | LOD (Limit of Detection): Lowest amount of analyte that can be detected. LOQ (Limit of Quantitation): Lowest amount that can be quantified with acceptable accuracy and precision [2]. | Based on signal-to-noise ratio (e.g., 3:1 for LOD, 10:1 for LOQ) or standard deviation of the response and the slope of the calibration curve. |
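As a rough illustration of the linearity and LOD/LOQ rows in the table above, the following Python sketch fits a least-squares calibration line and derives detection and quantitation limits from the standard deviation of the response and the slope, using the conventional factors 3.3 and 10. The function names and the five-level data set are hypothetical, and taking the residual standard deviation of the regression as the estimate of response variability is an assumption; it is only one of the accepted options.

```python
import math
import statistics


def fit_calibration(conc, resp):
    """Ordinary least-squares line through (concentration, response) pairs."""
    n = len(conc)
    mean_x = statistics.fmean(conc)
    mean_y = statistics.fmean(resp)
    sxx = sum((x - mean_x) ** 2 for x in conc)
    syy = sum((y - mean_y) ** 2 for y in resp)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)  # correlation coefficient
    # Residual standard deviation with n - 2 degrees of freedom
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, resp))
    resid_sd = math.sqrt(ss_res / (n - 2))
    return {"slope": slope, "intercept": intercept, "r": r, "resid_sd": resid_sd}


def detection_limits(sigma, slope):
    """LOD = 3.3 * sigma / slope, LOQ = 10 * sigma / slope."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical data: % of target concentration vs. peak area
conc = [50.0, 75.0, 100.0, 125.0, 150.0]
resp = [1002.0, 1498.0, 2005.0, 2497.0, 3001.0]

fit = fit_calibration(conc, resp)
lod, loq = detection_limits(fit["resid_sd"], fit["slope"])
print(f"slope={fit['slope']:.3f} intercept={fit['intercept']:.2f} r={fit['r']:.5f}")
print(f"LOD={lod:.2f} LOQ={loq:.2f} (same units as conc)")
```

For an assay, LOD and LOQ are usually not required; the calculation is shown only because the table lists both approaches.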

Detailed Experimental Protocol: Accuracy and Precision

This protocol provides a detailed methodology for establishing the accuracy and precision of a chromatographic assay for a drug substance, in accordance with the principles of ICH Q2(R2) [2].

Objective

To demonstrate that the analytical method provides accurate and precise results for the quantification of [Drug Substance Name] within the specified range.

Materials and Reagents

- Drug Substance Standard: Certified Reference Material of known high purity (e.g., >99.0%).

- Placebo/Matrix: Components of the drug product formulation excluding the active ingredient.

- Mobile Phase, Diluent: Prepared as per the analytical method specification.

- Instrumentation: High-Performance Liquid Chromatography (HPLC) system with [specify detector, e.g., UV/VIS].

Experimental Procedure

- Preparation of Standard Solution: Accurately weigh and dissolve the drug substance standard in diluent to obtain a stock solution at the target concentration (100%).

- Preparation of Accuracy/Precision Solutions:

  - Accuracy (Recovery): Prepare solutions at 80%, 100%, and 120% of the target concentration by spiking known amounts of the drug substance standard into a placebo matrix. Prepare three independent samples for each level.

  - Precision (Repeatability): Prepare six independent samples at the 100% level without placebo.

- Analysis: Inject each solution in triplicate into the HPLC system following the established method conditions ([specify column, flow rate, wavelength, etc.]).

- Intermediate Precision: Repeat the entire procedure for the 100% level (with and without placebo) on a different day using a different analyst and/or a different HPLC system.

Data Analysis and Acceptance Criteria

- Accuracy: Calculate the mean percent recovery for each level. The mean recovery at each level should be within 98.0-102.0%, with a relative standard deviation (RSD) of ≤2.0%.

- Precision (Repeatability): Calculate the RSD of the six 100% measurements. The RSD should be ≤2.0%.

- Intermediate Precision: Compare the results from the original and repeat studies. The combined RSD should be ≤2.0%, demonstrating that the method remains precise when the day, analyst, or instrument changes within the same laboratory.
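The accuracy and repeatability calculations above can be sketched in a few lines of Python. This is a minimal illustration, not a validated calculation tool: the function names and the measured/nominal values are hypothetical, and the limits default to the 98.0-102.0% recovery and ≤2.0% RSD criteria stated in this protocol.

```python
import statistics


def percent_recovery(measured, nominal):
    """Recovery (%) = measured amount / nominal (spiked) amount x 100."""
    return 100.0 * measured / nominal


def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.fmean(values)


def evaluate_accuracy(levels, low=98.0, high=102.0, max_rsd=2.0):
    """levels maps a level label to a list of (measured, nominal) pairs."""
    report = {}
    for label, pairs in levels.items():
        recoveries = [percent_recovery(m, n) for m, n in pairs]
        mean_rec = statistics.fmean(recoveries)
        rsd = rsd_percent(recoveries)
        report[label] = {
            "mean_recovery": mean_rec,
            "rsd": rsd,
            "passes": low <= mean_rec <= high and rsd <= max_rsd,
        }
    return report


# Hypothetical results in mg/mL: three independent preparations per level
levels = {
    "80%": [(0.798, 0.800), (0.803, 0.800), (0.795, 0.800)],
    "100%": [(1.002, 1.000), (0.998, 1.000), (1.001, 1.000)],
    "120%": [(1.195, 1.200), (1.204, 1.200), (1.199, 1.200)],
}

for label, result in evaluate_accuracy(levels).items():
    print(label, f"{result['mean_recovery']:.2f}%", f"RSD {result['rsd']:.2f}%", result["passes"])
```

The same `rsd_percent` helper applies unchanged to the six repeatability results at the 100% level.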

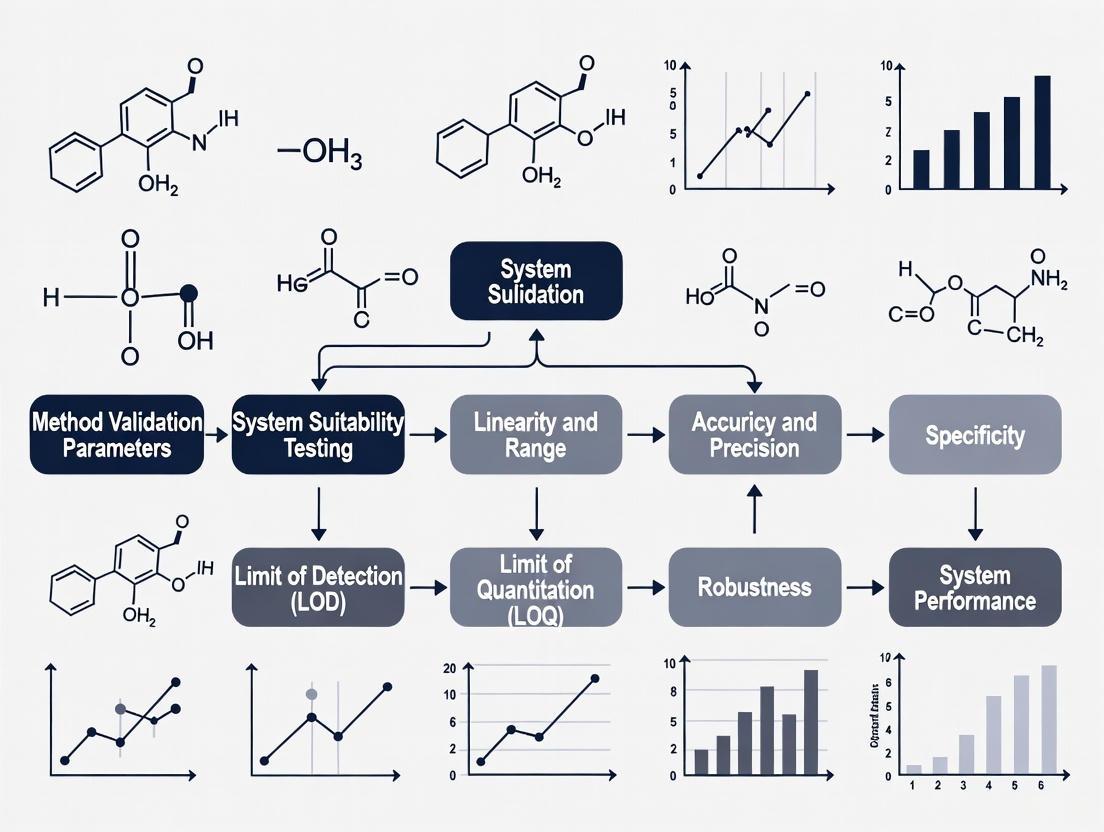

The Analytical Method Validation Workflow

The following diagram illustrates the key stages of the analytical method lifecycle, from initial planning to ongoing monitoring.

The Scientist's Toolkit: Essential Research Reagents and Materials

A robust analytical method relies on high-quality, well-characterized materials. The following table lists essential items for developing and validating a drug substance assay.

Table 2: Key Research Reagent Solutions and Materials for Analytical Method Validation

| Item | Function / Purpose |
|---|---|
| Certified Reference Standard | Serves as the benchmark for quantifying the drug substance; its certified purity and identity are essential for accurate and precise results [2]. |
| Pharmaceutical Grade Placebo | Used in specificity and accuracy experiments to confirm the method can distinguish the active ingredient from non-active components without interference [2]. |
| Forced Degradation Samples | Samples of the drug substance intentionally exposed to stress conditions (heat, light, acid, base, oxidation) to generate impurities and demonstrate method specificity and stability-indicating properties [2]. |
| System Suitability Solutions | A reference preparation used to verify that the chromatographic system is performing adequately at the start of the analysis (e.g., for resolution, tailing factor, and repeatability) [4]. |
| High-Purity Solvents & Reagents | Critical for preparing mobile phases and diluents to ensure low background noise, consistent chromatographic performance, and accurate detection. |

A rigorous, well-documented approach to analytical method validation is non-negotiable for ensuring drug substance quality, regulatory compliance, and ultimately, patient safety [1]. By adopting the modern, lifecycle approach outlined in ICH Q2(R2) and ICH Q14—beginning with a clear ATP and following structured protocols for key parameters like accuracy, precision, and specificity—developers can build quality and reliability directly into their analytical procedures [2]. This foundational work provides the critical data needed to support Chemistry, Manufacturing, and Controls (CMC) activities and smooths the path to successful regulatory submission and market approval.

The International Council for Harmonisation (ICH) guidelines Q2(R2) Validation of Analytical Procedures and Q14 Analytical Procedure Development represent a harmonized framework for the lifecycle of analytical procedures used in the assessment of drug substance and drug product quality [5]. These documents provide critical guidance for researchers and drug development professionals, establishing a science- and risk-based foundation for ensuring that analytical methods are consistently fit for their intended purpose [6].

ICH Q2(R2) provides a comprehensive discussion of the elements required to establish objective evidence that an analytical procedure is suitable for detecting or quantifying a quality attribute, delivering validation principles for techniques ranging from classical methods to advanced spectroscopic analyses [7] [6]. ICH Q14 complements this by outlining systematic approaches for developing and maintaining analytical procedures, describing both traditional and enhanced scientific methodologies [8]. Together, these guidelines facilitate more efficient regulatory evaluations and science-based post-approval change management, ultimately supporting the availability, safety, and efficacy of pharmaceutical products [6].

The scope of these guidelines encompasses new or revised analytical procedures used for release and stability testing of commercial drug substances and products, including both chemical and biological/biotechnological entities [7] [8]. While focused on commercial applications, the principles can be applied in a phase-appropriate manner throughout the product lifecycle, including clinical development stages [5].

Core Principles and Regulatory Framework

ICH Q2(R2): Validation of Analytical Procedures

ICH Q2(R2) establishes a general framework for validating analytical procedures, serving as a collection of standardized terms and their definitions to ensure consistent interpretation across regulatory regions [7]. The guideline addresses the most common purposes of analytical procedures, including assay/potency, purity, impurities, identity, and other quantitative or qualitative measurements [7]. A significant enhancement in the revised version is the inclusion of specific examples and illustrative approaches for advanced analytical techniques, providing much-needed clarity for methods such as mass spectrometry and qPCR that are essential for modern biopharmaceutical analysis [9].

The validation process according to Q2(R2) focuses on establishing documented evidence that the analytical procedure consistently delivers results that are scientifically valid for their intended use. The guidance emphasizes that the extent of validation should be justified based on the purpose of the procedure and its place in the overall control strategy [7]. For biological products specifically, the guidance helps clarify analytical methods that can best support development, particularly at critical phases where methodological uncertainty could lead to significant delays or additional costs [9].

ICH Q14: Analytical Procedure Development

ICH Q14 introduces a structured framework for developing analytical procedures using science- and risk-based approaches [8]. A foundational concept in this guideline is the Analytical Procedure Lifecycle, which encompasses all stages from initial development through routine use and eventual retirement or replacement of the method [5]. This lifecycle approach ensures that procedures remain suitable for their intended purpose despite changes in manufacturing processes, raw materials, or technological advancements.

The guideline describes two distinct approaches to analytical procedure development:

- Minimal Approach: A traditional methodology focusing on identifying procedure attributes, selecting appropriate technology, conducting development studies, and providing a procedural description [5]

- Enhanced Approach: Incorporates Quality by Design (QbD) principles, including defining an Analytical Target Profile (ATP), conducting risk assessments, performing multivariate experiments when appropriate, and establishing a comprehensive control strategy with defined lifecycle change management plans [5]

While application of the enhanced approach is not mandatory, regulators encourage applying its individual elements to improve analytical understanding and facilitate more efficient change management [5].

Integrated Lifecycle Management

The integration of Q2(R2) and Q14 establishes a complete framework for the analytical procedure lifecycle, connecting development activities with validation requirements and post-approval change management [5] [9]. This integrated approach aligns with existing ICH quality guidelines (Q8, Q9, Q10) that emphasize understanding processes, maintaining a state of control, and pursuing continuous improvement [5].

The FDA issued both Q2(R2) and Q14 as final guidances in March 2024, replacing the previous draft versions and providing regulatory certainty for implementation [6]. Furthermore, in July 2025, ICH published comprehensive training materials developed by the Q2(R2)/Q14 Implementation Working Group to support harmonized global understanding and consistent application of these guidelines [10].

Analytical Procedure Validation: ICH Q2(R2) Requirements

Key Validation Characteristics

ICH Q2(R2) defines specific validation characteristics that must be evaluated to demonstrate an analytical procedure is suitable for its intended purpose. The selection of which characteristics to validate depends on the nature of the analytical procedure (identification, testing for impurities, assay, etc.). The table below summarizes the core validation characteristics and their applicability to different types of analytical procedures.

Table 1: Analytical Procedure Validation Characteristics per ICH Q2(R2)

| Validation Characteristic | Identification | Testing for Impurities | Assay/Potency |
|---|---|---|---|
| Accuracy | Not required | Required | Required |
| Precision | | | |
| - Repeatability | Not required | Required | Required |
| - Intermediate Precision | Not required | May be required | May be required |
| Specificity | Required | Required | Required |
| Detection Limit (LOD) | Not required | Required | Not required |
| Quantitation Limit (LOQ) | Not required | Required | Not required |
| Linearity | Not required | Required | Required |
| Range | Not required | Required | Required |

Detailed Validation Protocols

Accuracy Validation Protocol

Purpose: To demonstrate the closeness of agreement between the value accepted as a true value or reference value and the value found [7].

Experimental Methodology:

- Prepare a minimum of 9 determinations over a minimum of 3 concentration levels covering the specified range (e.g., 3 concentrations, 3 replicates each)

- For drug substance analysis using comparison to a reference standard:

  - Prepare homogeneous samples of the drug substance with known purity

  - Spike with known quantities of reference standard at levels representing 80%, 100%, and 120% of target concentration

  - Analyze samples using the method under validation

  - Calculate recovery: %Recovery = (Measured Concentration/Added Concentration) × 100

- Acceptance criteria typically require mean recovery between 98-102% with RSD ≤2% for assay methods

Data Analysis:

- Calculate mean value, standard deviation, and relative standard deviation (RSD)

- Perform statistical t-test to evaluate if there is significant difference between measured and true values

- Report confidence intervals for the mean recovery
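The t-test and confidence interval in the data analysis steps above might look like the following sketch. It assumes a one-sample, two-sided test of the mean recovery against a true value of 100% at the 95% level; the critical values are standard Student's t table entries hard-coded for small sample sizes, and the function name and recovery data are hypothetical.

```python
import math
import statistics

# Two-sided 95% critical values of Student's t, keyed by degrees of freedom
T_CRIT_95 = {2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571, 6: 2.447,
             7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228}


def recovery_t_test(recoveries, true_value=100.0):
    """One-sample t-test of mean recovery against true_value, plus 95% CI."""
    n = len(recoveries)
    df = n - 1
    if df not in T_CRIT_95:
        raise ValueError(f"no tabulated critical value for df={df}")
    mean = statistics.fmean(recoveries)
    sem = statistics.stdev(recoveries) / math.sqrt(n)  # standard error of the mean
    t_stat = (mean - true_value) / sem
    t_crit = T_CRIT_95[df]
    half_width = t_crit * sem
    return {
        "mean": mean,
        "t_stat": t_stat,
        "ci_95": (mean - half_width, mean + half_width),
        "significant": abs(t_stat) > t_crit,
    }


# Hypothetical recoveries (%): 3 levels x 3 replicates = 9 determinations
recoveries = [99.6, 100.3, 99.9, 100.2, 99.8, 100.1, 99.5, 100.4, 100.0]
result = recovery_t_test(recoveries)
print(f"mean={result['mean']:.2f}% t={result['t_stat']:.2f}")
print("95% CI:", tuple(round(x, 2) for x in result["ci_95"]))
print("significant bias:", result["significant"])
```

A non-significant result only indicates that no bias was detected at this sample size; the confidence interval should still be compared against the recovery acceptance range.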

Precision Validation Protocol

Purpose: To demonstrate the degree of scatter between a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions [7].

Experimental Methodology for Repeatability:

- Prepare a minimum of 6 determinations at 100% of test concentration

- Analyze samples independently by the same analyst using the same equipment on the same day

- Alternatively, prepare a minimum of 9 determinations over a minimum of 3 concentration levels (e.g., 3 concentrations, 3 replicates each)

Experimental Methodology for Intermediate Precision:

- Demonstrate the influence of random events on the analytical procedure

- Vary factors such as different days, different analysts, different equipment

- Use experimental design (DOE) approaches to efficiently evaluate multiple factors

- Design study to include a minimum of 6 independent preparations at 100% test concentration

Data Analysis:

- Calculate variance, standard deviation, and relative standard deviation (RSD)

- For intermediate precision, perform ANOVA to separate contributions from different factors

- Acceptance criteria for assay methods typically require RSD ≤2% for repeatability and ≤3% for intermediate precision
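To make the ANOVA step concrete, here is a minimal sketch that splits total variability into a within-group (repeatability) component and a between-group component for a balanced one-factor design, for example two analyst/day combinations with six preparations each. The function name and the data are hypothetical, and a single grouping factor is an assumption; a study that varies day, analyst, and equipment separately would need a nested or multi-factor model.

```python
import math
import statistics


def variance_components(groups):
    """One-way ANOVA variance components for a balanced design."""
    k = len(groups)      # number of groups (e.g., analyst/day combinations)
    n = len(groups[0])   # replicates per group
    if any(len(g) != n for g in groups):
        raise ValueError("balanced design expected")
    all_values = [v for g in groups for v in g]
    grand_mean = statistics.fmean(all_values)
    group_means = [statistics.fmean(g) for g in groups]
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, group_means) for v in g)
    ss_between = n * sum((m - grand_mean) ** 2 for m in group_means)
    ms_within = ss_within / (k * (n - 1))
    ms_between = ss_between / (k - 1)
    # Negative estimates of the between-group variance are truncated to zero
    var_between = max(0.0, (ms_between - ms_within) / n)
    sd_repeat = math.sqrt(ms_within)
    sd_intermediate = math.sqrt(ms_within + var_between)
    return {
        "sd_repeatability": sd_repeat,
        "sd_intermediate": sd_intermediate,
        "rsd_repeatability": 100.0 * sd_repeat / grand_mean,
        "rsd_intermediate": 100.0 * sd_intermediate / grand_mean,
    }


# Hypothetical assay results (% of label claim): two analysts, six preparations each
groups = [
    [100.1, 99.8, 100.3, 99.9, 100.0, 100.2],
    [99.6, 99.9, 99.7, 100.0, 99.5, 99.8],
]
vc = variance_components(groups)
print(f"repeatability RSD = {vc['rsd_repeatability']:.2f}%")
print(f"intermediate precision RSD = {vc['rsd_intermediate']:.2f}%")
```

Because the intermediate precision estimate adds the between-group variance to the repeatability variance, it can never be smaller than the repeatability estimate.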

Specificity Validation Protocol

Purpose: To demonstrate the ability to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, degradation products, and matrix components [7].

Experimental Methodology for Drug Substance Assay:

- Prepare individual samples of drug substance containing:

  - Drug substance alone (positive control)

  - Placebo or matrix components (blank)

  - Drug substance with intentionally added impurities or degradation products

  - Stressed samples (forced degradation studies)

- Analyze all samples using the proposed procedure

- For chromatographic methods, demonstrate resolution between the analyte peak and the closest eluting potential impurity

- Verify that the blank does not produce interfering peaks at the retention time of the analyte

Acceptance Criteria:

- Resolution factor ≥2.0 between analyte and closest eluting potential impurity

- Peak purity tests (e.g., DAD or MS) confirm analyte peak homogeneity

- No interference from blank at analyte retention time
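The resolution criterion above can be checked with the usual chromatographic formulas. The sketch below assumes retention times and peak widths expressed in the same time units and shows both the baseline-width and the half-height-width forms; the function names and peak values are hypothetical.

```python
def resolution_baseline(t1, t2, w1, w2):
    """Rs = 2 * (t2 - t1) / (w1 + w2), with peak widths measured at baseline."""
    return 2.0 * (t2 - t1) / (w1 + w2)


def resolution_half_height(t1, t2, wh1, wh2):
    """Rs = 1.18 * (t2 - t1) / (wh1 + wh2), with widths measured at half height."""
    return 1.18 * (t2 - t1) / (wh1 + wh2)


def meets_resolution(rs, minimum=2.0):
    """Compare a computed resolution against the acceptance limit."""
    return rs >= minimum


# Hypothetical peaks: closest-eluting impurity at 10.0 min, analyte at 11.0 min
rs = resolution_baseline(t1=10.0, t2=11.0, w1=0.4, w2=0.4)
print(f"Rs = {rs:.2f}, acceptable: {meets_resolution(rs)}")
```

Most chromatography data systems report resolution directly; a manual calculation like this is mainly useful for confirming which width convention the software applies.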

Analytical Procedure Development: ICH Q14 Requirements

Analytical Target Profile (ATP)

The Analytical Target Profile (ATP) is a foundational element of the enhanced approach in ICH Q14, defined as a "summary of the expected characteristics of the analytical procedure" [5]. The ATP outlines the performance requirements necessary for the procedure to be fit for its intended purpose, serving as the foundation for design, development, and lifecycle management.

Key Elements of an ATP:

- Measurand (what is being measured)

- Required performance criteria (accuracy, precision, etc.)

- Conditions under which the measurement is performed

- The purpose of the measurement and its decision rules

- Required level of confidence in the results

Example ATP for Drug Substance Assay: "The procedure must be capable of quantifying the drug substance in the presence of process impurities and degradation products with an accuracy of 98-102% and precision of ≤2% RSD, providing results with 95% confidence that the true value lies within ±2% of the reported value."

Minimal vs. Enhanced Approach

ICH Q14 describes two complementary approaches to analytical procedure development, as summarized in the table below:

Table 2: Comparison of Minimal and Enhanced Approaches to Analytical Procedure Development

| Aspect | Minimal Approach | Enhanced Approach |
|---|---|---|
| Philosophy | Traditional, empirical | Science-based, risk-managed |
| ATP Definition | Not required | Recommended foundation |
| Risk Assessment | Informal | Structured and documented |
| Experimental Design | Univariate experimentation | Uni- or multi-variate experiments |
| Knowledge Management | Limited documentation | Systematic knowledge capture |
| Control Strategy | Fixed operating parameters | Proven Acceptable Ranges (PARs) |
| Change Management | Case-by-case assessment | Pre-defined based on knowledge |

Risk Management in Analytical Procedure Development

ICH Q14 emphasizes the application of formal risk management principles (aligning with ICH Q9) to identify and prioritize factors that may impact analytical procedure performance [5].

Risk Assessment Protocol:

- Define the ATP: Establish target measurement uncertainty and performance requirements

- Identify Potential Risk Factors: Brainstorm factors that may impact the procedure's ability to meet the ATP

  - Material attributes (sample composition, stability)

  - Instrument parameters (detector sensitivity, precision)

  - Method parameters (mobile phase composition, pH, temperature)

  - Environmental factors (temperature, humidity)

  - Analyst technique

- Risk Analysis: Evaluate and prioritize risks based on severity, probability, and detectability

- Risk Evaluation: Determine which risks require experimental investigation

- Design of Experiments (DOE): Develop structured experiments to study high-priority risk factors

- Define Control Strategy: Establish proven acceptable ranges for critical parameters
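One common way to carry out the risk analysis and evaluation steps above is an FMEA-style risk priority number (RPN), the product of severity, probability, and detectability scores. The sketch below assumes a 1-5 scale for each score (with a higher detectability score meaning the failure is harder to detect) and an arbitrary threshold for selecting factors to study experimentally; the scale, the threshold, and all scores are hypothetical and are not prescribed by ICH Q14 or ICH Q9.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskFactor:
    name: str
    severity: int       # 1 (negligible) to 5 (critical); assumed scale
    probability: int    # 1 (rare) to 5 (frequent); assumed scale
    detectability: int  # 1 (easily detected) to 5 (hard to detect); assumed scale

    @property
    def rpn(self) -> int:
        """Risk priority number = severity x probability x detectability."""
        return self.severity * self.probability * self.detectability


def prioritize(factors, threshold):
    """Return factors with RPN >= threshold, highest risk first."""
    ranked = sorted(factors, key=lambda f: f.rpn, reverse=True)
    return [f for f in ranked if f.rpn >= threshold]


# Hypothetical scores for an HPLC assay
factors = [
    RiskFactor("mobile phase pH", severity=4, probability=3, detectability=3),
    RiskFactor("column temperature", severity=3, probability=2, detectability=2),
    RiskFactor("laboratory humidity", severity=1, probability=2, detectability=2),
    RiskFactor("sample solution stability", severity=4, probability=2, detectability=4),
]

for f in prioritize(factors, threshold=12):
    print(f"{f.name}: RPN {f.rpn}")
```

Factors above the threshold would feed into the DOE step; those below it are documented with a rationale for not studying them further.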

Practical Application and Case Studies

Implementation Workflow

The following workflow diagram illustrates the integrated application of ICH Q14 and ICH Q2(R2) throughout the analytical procedure lifecycle:

Method Validation Experimental Design

For the validation of a drug substance assay method, the following experimental design ensures comprehensive evaluation of all relevant validation characteristics:

Case Study: HPLC Assay Method for Small Molecule Drug Substance

Background: Development and validation of a stability-indicating HPLC method for a small molecule drug substance assay.

Application of ICH Q14 Enhanced Approach:

- ATP Definition: "The method must quantify the drug substance in the presence of known and unknown impurities with accuracy of 98-102%, precision of ≤2% RSD, and demonstrate specificity to separate all potential degradants with resolution ≥2.0."

- Risk Assessment: Identified critical method parameters including mobile phase pH, column temperature, gradient profile, and detection wavelength

- DoE Implementation: Used a fractional factorial design to evaluate the effect of 5 method parameters on 3 critical quality attributes (resolution, tailing factor, runtime)

- Establishment of PARs: Defined proven acceptable ranges for critical parameters:

  - Mobile phase pH: ±0.2 units

  - Column temperature: ±3°C

  - Gradient slope: ±2%
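The fractional factorial design mentioned in the case study can be generated programmatically. The sketch below builds a two-level half fraction for five factors (16 runs instead of 32) using the generator E = ABCD, with levels coded as -1 (low) and +1 (high). The case study does not state which fraction or generator was used, so this choice is an assumption, and the fifth factor name is a placeholder because the source names only four parameters.

```python
import math
from itertools import product


def half_fraction(factors):
    """Two-level half-fraction design: the last factor is the product of the others."""
    *base, generated = factors
    runs = []
    for levels in product((-1, 1), repeat=len(base)):
        run = dict(zip(base, levels))
        run[generated] = math.prod(levels)  # generator: last = product of base factors
        runs.append(run)
    return runs


# Four parameters from the risk assessment plus a placeholder fifth factor
factors = [
    "mobile_phase_pH",
    "column_temperature",
    "gradient_profile",
    "detection_wavelength",
    "fifth_parameter",  # hypothetical: not named in the case study
]

design = half_fraction(factors)
print(f"{len(design)} runs")
for run in design[:4]:
    print(run)
```

Each coded level would then be mapped to a real setting, for example the low and high ends of the range being investigated for that parameter, and the run order randomized before execution.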

ICH Q2(R2) Validation Results:

Table 3: HPLC Method Validation Results for Drug Substance Assay

| Validation Characteristic | Protocol | Results | Acceptance Criteria |
|---|---|---|---|
| Accuracy | 9 determinations at 80%, 100%, 120% | Mean recovery: 99.8%; RSD: 0.7% | 98-102%; RSD ≤2% |
| Precision - Repeatability | 6 determinations at 100% | RSD: 0.5% | RSD ≤2% |
| Precision - Intermediate Precision | Different analyst, instrument, day | Overall RSD: 0.8% | RSD ≤3% |
| Specificity | Forced degradation (heat, light, acid, base, oxidation) | Resolution from closest impurity: 2.5; Peak purity: Pass | Resolution ≥2.0 |
| Linearity | 5 concentrations (50-150%) | R² = 0.9998 | R² ≥0.998 |
| Range | 80-120% of target concentration | Demonstrated suitable accuracy, precision, linearity | Meets accuracy, precision, linearity requirements |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of ICH Q2(R2) and ICH Q14 requires carefully selected reagents and materials that ensure analytical method reliability and reproducibility. The following table details essential research reagent solutions for drug substance assay development and validation.

Table 4: Essential Research Reagent Solutions for Analytical Development

| Reagent/Material | Function | Critical Quality Attributes |
|---|---|---|
| Reference Standards | Quantitation and method calibration | Certified purity, stability, well-characterized impurities |
| HPLC/UPLC Grade Solvents | Mobile phase preparation | Low UV cutoff, low particulate content, controlled water content |
| Chromatography Columns | Analyte separation | Column efficiency (N), retention reproducibility, peak symmetry |
| Buffer Salts | Mobile phase modification | pH accuracy, low UV absorbance, high purity |
| Stable Isotope-labeled Internal Standards | Mass spectrometry quantification | Isotopic purity, chemical stability, absence of interference |
| System Suitability Standards | Daily performance verification | Well-characterized resolution, tailing factor, and retention time |

Advanced Applications: Biologics and Multivariate Methods

Special Considerations for Biological Products

The application of ICH Q2(R2) and ICH Q14 to biological products requires special considerations due to their inherent complexity and the nature of the analytical methods employed [9].

Key Challenges and Solutions:

- Potency Assays: Often biologically-based with higher variability

  - Solution: Implement parallel line analysis with wider acceptance criteria

  - Focus on intermediate precision rather than just repeatability

- Product-Related Impurity Methods: Impurities may not be fully characterized

  - Solution: Focus on validation of what can be controlled

  - Implement orthogonal methods for comprehensive characterization

- Platform Procedures: Common for monoclonal antibodies and novel modalities

  - Solution: Leverage prior knowledge while demonstrating suitability for specific product

  - Use modular validation approaches where appropriate

Multivariate Analytical Procedures

ICH Q14 includes specific guidance on developing multivariate analytical procedures, such as Near Infrared (NIR) and Raman spectroscopy [5] [9]. These methods require different validation approaches compared to univariate methods.

Validation Considerations for Multivariate Methods:

- Model Robustness: Evaluate the effect of variations in sample presentation, environmental conditions, and instrument response

- Specificity: Demonstrate the model's ability to identify and quantify the analyte in the presence of expected variations in the sample matrix

- Accuracy: Validate using primary reference methods, accounting for the total error of the multivariate prediction

- Precision: Include both repeatability of sample measurements and reproducibility of model predictions
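For the accuracy consideration above, the error of a multivariate prediction against the primary reference method is often summarized as a bias, a root mean square error of prediction (RMSEP), and a bias-corrected standard error of prediction (SEP). The following sketch computes these three figures for an independent validation set; the choice of metrics is an assumption rather than a requirement of the guidelines, and the function name and data are hypothetical.

```python
import math


def prediction_errors(predicted, reference):
    """Bias, RMSEP, and bias-corrected SEP of model predictions vs. reference values."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    residuals = [p - r for p, r in zip(predicted, reference)]
    n = len(residuals)
    bias = sum(residuals) / n
    rmsep = math.sqrt(sum(e * e for e in residuals) / n)
    sep = math.sqrt(sum((e - bias) ** 2 for e in residuals) / (n - 1))
    return {"bias": bias, "rmsep": rmsep, "sep": sep}


# Hypothetical validation set: NIR-predicted vs. HPLC reference assay values (%)
predicted = [99.4, 100.6, 101.1, 98.9, 100.2, 99.8]
reference = [99.7, 100.3, 100.8, 99.2, 100.0, 100.1]

errors = prediction_errors(predicted, reference)
print({name: round(value, 3) for name, value in errors.items()})
```

RMSEP combines systematic and random error, so reporting bias and SEP alongside it shows which of the two dominates.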

Lifecycle Management and Post-Approval Changes

ICH Q14 provides principles for managing analytical procedures throughout their lifecycle, including post-approval changes [5]. A well-documented enhanced approach facilitates more efficient regulatory reporting of changes, as the understanding built during development provides scientific justification for the proposed changes.

Change Management Protocol:

- Change Identification: Document proposed change and its potential impact

- Risk Assessment: Evaluate potential impact on method performance relative to ATP

- Bridging Studies: Design experiments to demonstrate comparable performance

- Reporting Categorization: Determine regulatory reporting category based on risk assessment

- Implementation: Deploy change with appropriate documentation and training

The enhanced approach with proper knowledge management enables some changes to be implemented under the company's pharmaceutical quality system without prior approval, as the existing knowledge provides sufficient evidence that the change does not impact method performance [5].

Within drug substance assay research, the validation of analytical methods is a fundamental prerequisite for generating reliable and meaningful data. It provides documented evidence that a specific analytical procedure is fit for its intended purpose, ensuring the identity, potency, purity, and quality of drug substances. This document, framed within a broader thesis on analytical method validation, details the application notes and experimental protocols for five core validation parameters: Accuracy, Precision, Specificity, Linearity, and Range. These parameters, as defined in the ICH Q2(R2) guideline, form the foundation for demonstrating that an analytical method is suitable for providing trustworthy results to support drug development and regulatory compliance [7].

Core Parameters: Application Notes & Protocols

The following sections provide a detailed examination of each core validation parameter, including its definition, regulatory basis, and a standardized experimental protocol.

Accuracy

Application Notes: Accuracy measures the closeness of agreement between the value found by the analytical method and the value accepted as either a conventional true value or an accepted reference value. It is sometimes termed "trueness" and is established across the specified range of the method [11]. For drug substance assays, accuracy is typically assessed by applying the method to an analyte of known purity (for example, a reference material), or by comparison with a second, well-characterized procedure [11]. Spiking with known quantities of impurities is the corresponding approach for impurity quantification methods rather than for the assay itself.

Experimental Protocol:

- Sample Preparation: Prepare a minimum of nine determinations at a minimum of three concentration levels (e.g., 80%, 100%, 120% of the target concentration) covering the specified range. Each concentration level should be prepared in triplicate [11].

- Reference Standard: Use a certified reference standard of the drug substance with known purity for preparation of the accuracy samples.

- Analysis: Analyze the prepared samples using the method under validation.

- Data Analysis: Calculate the recovery (%) for each measurement using the formula: (Measured Concentration / Known Concentration) × 100. Report the mean recovery and the relative standard deviation (RSD) or confidence interval for each concentration level [11].

Table 1: Example Acceptance Criteria for Accuracy

| Analytical Procedure | Concentration Level | Typical Acceptance Criteria (Mean Recovery %) |
|---|---|---|
| Drug Substance Assay | 100% (Target) | 98.0 - 102.0% |
| Drug Substance Assay | 80% - 120% of Target | 98.0 - 102.0% |
| Impurity Quantification | Reporting Threshold | Varies based on impurity level and relevance |

Precision

Application Notes: Precision expresses the closeness of agreement (degree of scatter) between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions [11]. It is investigated at three levels:

- Repeatability (Intra-assay Precision): Precision under the same operating conditions over a short interval of time [12] [11].

- Intermediate Precision: Precision within the same laboratory, including variations such as different days, different analysts, or different equipment [11].

- Reproducibility (Ruggedness): Precision between different laboratories, typically assessed during method transfer studies [11].

Experimental Protocol:

- Repeatability: Analyze a minimum of six determinations at 100% of the test concentration, or a minimum of nine determinations covering the specified range (e.g., three concentrations/three replicates each) [11].

- Intermediate Precision: Perform the same procedure as for repeatability, but using a different analyst on a different day with different equipment (e.g., HPLC system and column). A collaborative experimental design between two analysts is recommended [11].

- Data Analysis: For all precision measurements, report the standard deviation (SD) and the relative standard deviation (%RSD) of the results. For intermediate precision, the % difference in the mean values between the two analysts' results can be subjected to statistical tests (e.g., Student's t-test) [11].

Table 2: Example Acceptance Criteria for Precision

| Type of Precision | Sample Type | Typical Acceptance Criteria (%RSD) |

|---|---|---|

| Repeatability | Drug Substance (Assay) | Not more than (NMT) 1.0% |

| Intermediate Precision | Drug Substance (Assay) | NMT 1.5% (and no significant difference between analysts based on t-test) |
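
As a rough sketch of the data analysis described above, the following Python computes %RSD for one analyst, a simple overall %RSD across both analysts, and a pooled two-sample Student's t statistic. The assay values are hypothetical. The critical value 2.228 is the two-sided 5% point of the t distribution for 10 degrees of freedom, which applies only to this six-plus-six design. Treating the combined %RSD as the intermediate precision estimate is a simplification; a variance-components analysis may be more appropriate.

```python
from math import sqrt
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation, in percent."""
    return stdev(values) / mean(values) * 100.0


def pooled_t_statistic(a, b):
    """Two-sample Student's t statistic assuming equal variances."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))


# Hypothetical assay results (% of target), six preparations per analyst.
analyst_1 = [99.8, 100.2, 99.9, 100.4, 100.1, 99.7]
analyst_2 = [100.3, 99.9, 100.5, 100.0, 100.6, 100.2]

T_CRIT = 2.228  # two-sided, alpha = 0.05, df = 6 + 6 - 2 = 10

repeatability_rsd = percent_rsd(analyst_1)
intermediate_rsd = percent_rsd(analyst_1 + analyst_2)
t_stat = pooled_t_statistic(analyst_1, analyst_2)

print(f"Repeatability %RSD: {repeatability_rsd:.2f}")
print(f"Combined %RSD (both analysts): {intermediate_rsd:.2f}")
print(f"|t| = {abs(t_stat):.2f}, significant difference: {abs(t_stat) > T_CRIT}")
```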

Specificity

Application Notes: Specificity is the ability to assess unequivocally the analyte of interest in the presence of other components that may be expected to be present, such as impurities, degradants, or matrix components [13] [14]. A specific method ensures that a peak's response is due to a single component, with no co-elutions. For chromatographic methods, specificity is commonly demonstrated by the resolution of the two most closely eluted compounds and can be confirmed using peak purity tests based on photodiode-array (PDA) or mass spectrometry (MS) detection [11].

Experimental Protocol:

- For Identification: Demonstrate the ability of the method to discriminate between compounds of closely related structure which are likely to be present.

- For Assay and Impurity Tests: Inject individual solutions of the drug substance and potential interfering components (e.g., impurities, degradants, excipients) to demonstrate that they do not interfere with the analyte peak.

- Forced Degradation Studies: Stress the drug substance sample (e.g., with acid, base, oxidation, heat, and light) and analyze the samples to demonstrate that the assay is unaffected by the presence of degradants and that the analyte peak is pure and free from co-eluting peaks.

- Data Analysis: Report the resolution between the analyte peak and the closest eluting potential interfering peak. For PDA, report the peak purity index, confirming that the analyte peak is spectrally homogeneous [11].
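
For the resolution reported in the data analysis step, one commonly used definition is Rs = 2(t2 - t1) / (w1 + w2), based on retention times and baseline peak widths. The sketch below assumes that definition; the retention times and widths are hypothetical, and a chromatography data system may apply a half-height variant instead.

```python
def resolution(t_r1, t_r2, w1, w2):
    """Resolution from retention times and baseline peak widths (same time units)."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)


# Hypothetical values in minutes: closest-eluting impurity, then the analyte.
rs = resolution(t_r1=6.42, t_r2=7.10, w1=0.28, w2=0.30)
print(f"Rs = {rs:.2f}")
```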

Linearity and Range

Application Notes: Linearity is the ability of the method to obtain test results that are directly proportional to the concentration of the analyte in a given range. Range is the interval between the upper and lower concentrations of the analyte for which it has been demonstrated that the analytical procedure has a suitable level of precision, accuracy, and linearity [13] [14]. The range is normally expressed in the same units as the test results.

Experimental Protocol:

- Sample Preparation: Prepare a minimum of five concentration levels of the analyte spanning the specified range. A typical range for a drug substance assay is from 80% to 120% of the target concentration [11].

- Analysis: Analyze each concentration level in a randomized order.

- Data Analysis: Plot the analyte response against the known concentration. Perform a linear regression analysis on the data. Report the regression line equation, the coefficient of determination (r²), and the y-intercept. Evaluate the residuals (the difference between the observed and the predicted values) to check for any systematic non-linear patterns [11].

Table 3: Example Acceptance Criteria for Linearity

| Parameter | Typical Acceptance Criteria |

|---|---|

| Correlation Coefficient (r) | Not less than (NLT) 0.997 |

| Coefficient of Determination (r²) | NLT 0.995 |

| Y-Intercept | Should be not significantly different from zero (e.g., p > 0.05) |

| Residuals | Randomly distributed around zero |
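
The regression outputs listed above (slope, y-intercept, r, r², and residuals) can be computed with ordinary least squares. The following is a minimal sketch using only the standard library; the concentration and peak-area values are hypothetical, and a formal significance test of the intercept is deliberately left out.

```python
from math import sqrt
from statistics import mean


def linear_fit(x, y):
    """Ordinary least-squares fit; returns slope, intercept, r, and residuals."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    r = sxy / sqrt(sxx * syy)
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    return slope, intercept, r, residuals


# Hypothetical five-level linearity data: % of target vs. peak area.
conc = [80.0, 90.0, 100.0, 110.0, 120.0]
area = [801.2, 899.1, 1001.5, 1098.7, 1201.0]

slope, intercept, r, residuals = linear_fit(conc, area)
print(f"y = {slope:.3f}x + {intercept:.2f}, r = {r:.5f}, r2 = {r * r:.5f}")
print("residuals:", [round(e, 2) for e in residuals])
```

Inspecting the sign pattern of the residuals across the range is a quick way to spot curvature that a high r value can hide.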

Visualizing the Validation Workflow and Relationships

The core validation parameters are not isolated; they are interconnected components of a comprehensive validation strategy. The following diagrams illustrate the logical workflow for method validation and the relationships between the key parameters.

Diagram 1: Sequential Validation Workflow

Diagram 2: Interrelationship of Core Parameters

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful execution of validation protocols relies on a set of essential materials and reagents. The following table details key items required for experiments, particularly those involving chromatographic techniques for drug substance assay.

Table 4: Essential Research Reagents and Materials for Validation Studies

| Item | Function in Validation |

|---|---|

| Certified Reference Standard | Provides an accepted reference value with known purity and identity, essential for assessing Accuracy and Linearity [11]. |

| High-Purity Solvents & Reagents | Ensure the analytical signal is specific to the analyte and prevent interference or baseline noise that affects LOD/LOQ and Specificity. |

| Chromatographic Column | The stationary phase for separation; critical for achieving Specificity by resolving the analyte from impurities [11]. |

| Mass Spectrometry (MS) Detector | Provides unequivocal confirmation of peak identity and purity, offering orthogonal data for Specificity validation [11]. |

| Photodiode-Array (PDA) Detector | Enables collection of UV spectra across a peak, used for confirming peak homogeneity and purity for Specificity [11]. |

| Stable Isotope-Labeled Internal Standard | Used in complex matrices to improve the Precision and Accuracy of quantitation by correcting for sample preparation variability. |

The Impact of Data Integrity and ALCOA+ Principles on Method Validation

The validation of analytical methods is a critical pillar in pharmaceutical research and development, ensuring that the methods used to quantify drug substances are reliable, reproducible, and fit for their intended purpose. In the context of drug substance assay research, the integrity of the data generated throughout the method validation lifecycle is paramount. The ALCOA+ framework provides a foundational set of principles that, when embedded into validation activities, safeguards data quality and regulatory compliance. These principles—Attributable, Legible, Contemporaneous, Original, and Accurate, expanded with Complete, Consistent, Enduring, and Available—have evolved from a regulatory concept into a practical toolkit for ensuring data trustworthiness from initial method development through to routine use [15].

Global regulatory authorities, including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), intensely focus on data integrity during inspections. Analyses indicate that a significant majority of FDA warning letters cite data integrity lapses, often linked to inadequate controls over electronic records and audit trails [16] [15]. For analytical scientists, this translates to a non-negotiable requirement: method validation must be planned and executed with ALCOA+ as a core design feature, not as a retrospective add-on. This approach is especially crucial for drug substance assays, where the accuracy of results directly impacts decisions about product safety, efficacy, and quality [17].

The ALCOA+ Framework in the Context of Method Validation

The ALCOA+ principles provide a clear and actionable roadmap for maintaining data integrity at every stage of an analytical method's lifecycle. The table below defines each principle and illustrates its specific application in drug substance assay validation.

Table 1: Application of ALCOA+ Principles in Analytical Method Validation for Drug Substance Assay

| ALCOA+ Principle | Core Definition | Application in Method Validation & Drug Substance Assay |

|---|---|---|

| Attributable | Who acquired the data or performed an action, and when? | Linking all data (e.g., chromatograms, sample weights, results) to the specific analyst and instrument used. Using unique user logins for computerized systems like HPLC to track all actions [18] [19]. |

| Legible | Can the data be read and understood permanently? | Ensuring all records, including electronic raw data files, notebook entries, and printouts, remain readable and accessible for the entire required retention period [18] [20]. |

| Contemporaneous | Was the data recorded at the time the activity was performed? | Documenting sample preparation, instrument analysis, and observations in real-time, not retrospectively. Using system-generated, synchronized timestamps for all electronic records [16] [21]. |

| Original | Is this the first capture or a certified copy of the data? | Preserving the source data file from the instrument (e.g., the raw chromatographic data sequence) as the definitive record, not a processed printout or transcribed result [16] [19]. |

| Accurate | Is the data error-free and truthful? | Implementing controls such as calibrated balances and pipettes, validated calculations within software, and scientifically sound procedures to prevent and detect errors [16] [20]. |

| Complete | Is all data present, including repeats and rejects? | Retaining all data generated during validation, including all replicate injections, failed runs, and out-of-specification (OOS) results, with associated metadata and audit trails [18] [22]. |

| Consistent | Is the data sequenced logically with aligned timestamps? | Sequencing all steps chronologically with consistent date/time formats. Ensuring system clocks are synchronized across all devices (e.g., HPLC, balance, PC) to avoid contradictions [16] [18]. |

| Enduring | Is the data preserved for the required retention period? | Storing all validation data and records in a durable, validated format (e.g., secure electronic archives with regular backups) to prevent loss or degradation [18] [23]. |

| Available | Can the data be retrieved and reviewed when needed? | Ensuring that all data, metadata, and audit trails are readily accessible for the lifetime of the record for review, audit, or inspection purposes [16] [18]. |

The progression from the original five ALCOA principles to the expanded ALCOA+ reflects the industry's and regulators' response to the complexities of modern, digital data systems. The "+" attributes ensure that data is not only created reliably but also remains reliable, reconstructible, and trustworthy throughout the method's entire lifecycle [15] [22]. Some regulatory frameworks, such as the draft EU GMP Chapter 4, are now further formalizing ALCOA++, which explicitly adds Traceable to the list, emphasizing the need for a fully reconstructible data history [15].

Application Notes: Integrating ALCOA+ into the Method Validation Lifecycle

Embedding data integrity by design, guided by ALCOA+, is the most effective strategy for ensuring the credibility of an analytical method. The following workflow visualizes how these principles are integrated into the key stages of the method validation lifecycle for a drug substance assay.

Diagram 1: ALCOA+ in the Method Validation Lifecycle (LCM: Lifecycle Management)

Method Development and Validation Planning

The foundation of data integrity is laid during the planning and development stages. A risk-based approach should be employed to identify and control potential data integrity vulnerabilities.

- Risk Assessment and Controls: Identify critical data and processes within the method where errors or manipulation could occur. For a drug substance assay, this includes steps like standard/sample weighing, solution preparation, dilution, and chromatographic integration. Mitigating controls include using calibrated and connected balances that log weights electronically, validated titration instruments, and integrated chromatography data systems (CDS) with enabled and secured audit trails [19] [17].

- Validation Protocol Design: The validation protocol itself must be a Complete and Consistent document. It should predefine all acceptance criteria for validation parameters (specificity, accuracy, precision, linearity, range, robustness) and explicitly state the procedures for data recording, including the types of raw data to be collected and the review processes for data and audit trails [17].

Execution of Validation Studies

During the hands-on phase of validation, the core ALCOA principles are put into practice to ensure the trustworthiness of the generated data.

- Attributable and Contemporaneous Data Capture: Analysts must use unique login credentials for all computerized systems, such as the CDS and Electronic Lab Notebook (ELN). All data entries must be made at the time of the activity. System clocks must be synchronized to a network time protocol (NTP) server to ensure Contemporaneous and Consistent timestamps across all instruments and records [16] [21].

- Accuracy and Originality of Data: All equipment (e.g., HPLC systems, balances, pH meters) must be within their calibration due dates. The Original raw data files from instruments must be saved to a secure, managed location immediately upon acquisition. Any manual transcription of data (e.g., from a balance to an ELN) should be verified by a second person, though automated data capture is strongly preferred [20] [23].
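
To make the Attributable, Contemporaneous, and Original ideas above concrete, the sketch below attaches an analyst ID, an instrument ID, a UTC timestamp, and a SHA-256 checksum to a raw data file at acquisition time. It is an illustration only and does not replace the audit trail of a validated CDS or ELN. The class name, function names, and identifiers (`RawDataRecord`, `register_raw_data`, `is_unaltered`, "jdoe", "HPLC-07") are all hypothetical.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: the record cannot be modified after creation
class RawDataRecord:
    analyst_id: str        # Attributable
    instrument_id: str     # Attributable
    acquired_at_utc: str   # Contemporaneous, in one consistent time zone
    sha256: str            # fingerprint of the Original raw data


def register_raw_data(raw_bytes, analyst_id, instrument_id):
    """Create an immutable record for a raw data file at acquisition time."""
    return RawDataRecord(
        analyst_id=analyst_id,
        instrument_id=instrument_id,
        acquired_at_utc=datetime.now(timezone.utc).isoformat(),
        sha256=hashlib.sha256(raw_bytes).hexdigest(),
    )


def is_unaltered(raw_bytes, record):
    """Check that the file content still matches the registered checksum."""
    return hashlib.sha256(raw_bytes).hexdigest() == record.sha256


raw = b"hypothetical chromatogram bytes"
record = register_raw_data(raw, analyst_id="jdoe", instrument_id="HPLC-07")
print(record)
print("unaltered:", is_unaltered(raw, record))
```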

Data Management, Reporting, and Lifecycle Management

Once data is generated, the "plus" attributes of ALCOA+ ensure its long-term reliability and utility.

- Complete and Enduring Data Retention: All data generated during the validation must be retained, including chromatograms for all replicates, sample preparation records, and instrument qualification logs. This includes metadata and audit trails that track any reprocessing or changes. Data must be backed up and archived in a validated system to ensure it is Enduring and Available throughout the record retention period [18] [22].

- Traceable Reporting and Lifecycle Management: The final validation report must be Accurate and Complete, allowing for the clear Traceability of all results back to the Original source data. Any changes to the method after its implementation, or performance issues identified during routine use, should be managed through a formal change control process, triggering method re-validation or improvement, thus closing the lifecycle loop [15] [17].

Experimental Protocols for ALCOA+-Compliant Method Validation

Protocol: Determination of Accuracy for a Drug Substance Assay

1.0 Objective: To demonstrate the accuracy of the analytical method by spiking a drug substance into a placebo (if applicable) or sample matrix at known concentrations and determining the recovery of the assay.

2.0 ALCOA+ Considerations & Pre-Execution Checks

- Attributable: Confirm unique user logins for the CDS and LIMS/ELN.

- Original & Accurate: Verify HPLC system calibration and performance qualification (e.g., system suitability test). Use certified reference standards.

- Complete: Plan for three concentration levels (e.g., 80%, 100%, 120% of target), each with three replicates. The protocol must include all these samples.

3.0 Procedure

- Sample Preparation: Precisely weigh and prepare the drug substance to produce solutions at 80%, 100%, and 120% of the target test concentration. Perform each preparation independently three times.

- Data Acquisition: Inject each preparation into the HPLC system following the validated method. The CDS sequence file, which links the sample to the specific vial position and analyst, is the Original record.

- Data Recording: Record all sample weights, dilution volumes, and preparation dates Contemporaneously in an ELN or controlled worksheet. The CDS automatically captures the injection timestamp and raw data file.

4.0 Data Analysis and Acceptance Criteria

- Calculate the percentage recovery for each preparation and the mean recovery at each concentration level.

- The method is considered accurate if the mean recovery at each level is within 98.0–102.0%, and the Relative Standard Deviation (RSD) for the replicates is ≤2.0%.

5.0 Data Integrity & Documentation Requirements

- Retain: The complete CDS sequence and raw data files for all injections, including any failed or invalid runs.

- Retain: Electronic lab notebook pages or controlled worksheets with all calculations.

- Review: The audit trail for the CDS sequence file to verify there were no unauthorized or unexplained changes post-acquisition [16] [21].

Protocol: Determination of Precision for a Drug Substance Assay

1.0 Objective: To demonstrate the precision of the analytical method, expressed as repeatability (intra-assay precision), by analyzing multiple preparations of a homogeneous sample.

2.0 ALCOA+ Considerations

- Consistent: Ensure all preparations and analyses are performed by the same analyst, using the same instrument, in a single session to qualify as repeatability.

- Complete: The dataset must include all replicate results without exclusion.

3.0 Procedure

- Prepare six independent sample solutions from a single, homogeneous batch of the drug substance at 100% of the test concentration.

- Inject each solution once into the HPLC system under the same analytical conditions.

4.0 Data Analysis and Acceptance Criteria

- Calculate the %RSD of the assay results obtained from the six independent preparations (one injection each).

- The method is considered precise if the %RSD is ≤2.0%.

5.0 Data Integrity & Documentation Requirements

- Retain: The raw data files for all six injections and the system suitability injections.

- Document: Justification for any outlier removal, which must be pre-defined in the protocol and involve statistical analysis. The original result must still be retained [18] [22].

Table 2: Key Research Reagent Solutions and Materials for Drug Substance Assay Validation

| Material/Reagent | Function in Validation | ALCOA+ Integrity Consideration |

|---|---|---|

| Certified Reference Standard | Provides the known, high-purity substance against which the method's Accuracy and Linearity are calibrated. | Must be traceable to a primary standard (e.g., USP) with a valid certificate of analysis (Attributable, Accurate). Log usage and weight to ensure data Completeness [17]. |

| HPLC-Grade Solvents & Buffers | Used in mobile phase and sample preparation. Purity is critical for baseline stability, specificity, and preventing false peaks. | Prepare with calibrated pH meters and record batch numbers of solvents. Document preparation dates and expiration times to ensure Accuracy and Consistency [23]. |

| Chromatography Data System (CDS) | Software for controlling the HPLC, acquiring data, and processing results (e.g., peak integration). | Must be validated (21 CFR Part 11/Annex 11 compliant). Requires unique user logins (Attributable), an enabled audit trail (Complete, Traceable), and secure, backed-up data storage (Enduring, Available) [19] [24]. |

| Electronic Lab Notebook (ELN) | Digital system for recording sample prep details, observations, and results. | Promotes Contemporaneous recording and structured data capture. Configurable workflows and e-signatures ensure Attributability and Completeness versus paper [21] [23]. |

Adherence to ALCOA+ principles is no longer merely a best practice but a regulatory mandate. The FDA, EMA, and other global authorities explicitly reference these principles in their guidance documents [15] [23]. Failure to demonstrate robust data integrity controls during method validation can lead to serious regulatory consequences, including FDA Form 483 observations, warning letters, and rejection of regulatory submissions, ultimately compromising drug approval [16] [22].

In conclusion, the impact of data integrity and ALCOA+ principles on analytical method validation is profound and all-encompassing. For a drug substance assay, which forms the bedrock of quality control for a pharmaceutical product, a method is only scientifically valid if the data proving its validity is itself trustworthy. By integrating ALCOA+ into every stage of the validation lifecycle—from initial risk assessment and protocol design through to data acquisition, management, and reporting—organizations can ensure the generation of reliable, defensible, and inspection-ready data. This not only fulfills regulatory expectations but also builds a solid foundation of quality and safety for the patient.

Exploring the Shift from Traditional to Lifecycle Management Approaches

The foundation of pharmaceutical quality control is undergoing a fundamental transformation, moving from static, compliance-focused validation exercises toward dynamic, science-based lifecycle management of analytical procedures. This paradigm shift is driven by updated international regulatory guidelines, particularly ICH Q2(R2) on analytical procedure validation and ICH Q14 on analytical procedure development, which were finalized in 2023 and implemented in 2024 [25]. These guidelines, together with the United States Pharmacopeia's revised general chapter <1225> on Validation of Compendial Procedures, form an interconnected framework that demands the industry abandon the comfortable fiction that validation is a discrete event rather than an ongoing commitment to analytical quality [26].

The traditional approach to analytical method validation has followed a familiar script: conduct studies demonstrating acceptable performance for specific parameters, generate validation reports showing data meets predetermined acceptance criteria, and file these reports for regulatory submissions [26]. This "check-the-box" methodology often created what has been described as "compliance theater"—a performance of rigor that may not reflect the method's actual capability to generate reliable results under routine conditions [26]. In contrast, the lifecycle management perspective championed by ICH Q14 and USP <1220> treats validation as just one stage in a continuous process of ensuring analytical fitness for purpose [26]. This modern framework consists of three interconnected stages: Stage 1 (Procedure Design), which generates understanding of how method parameters affect performance; Stage 2 (Procedure Performance Qualification), which confirms the method performs as intended under specified conditions; and Stage 3 (Continued Procedure Performance Verification), which treats method capability as dynamic rather than static [26] [2].

This application note examines the practical implementation of this paradigm shift within the specific context of validating analytical methods for drug substance assay research. It provides detailed protocols, visualization tools, and comparative frameworks to enable researchers, scientists, and drug development professionals to successfully navigate this transition and build genuinely robust analytical systems rather than just impressive validation packages.

Comparative Analysis: Traditional vs. Lifecycle Approaches

Foundational Principles and Regulatory Basis

Table 1: Core Differences Between Traditional and Lifecycle Validation Approaches

| Aspect | Traditional Validation Approach | Lifecycle Management Approach |

|---|---|---|

| Regulatory Foundation | ICH Q2(R1) [2] | ICH Q2(R2), ICH Q14, USP <1220>, ICH Q12 [26] [2] [27] |

| Underlying Philosophy | "Check-the-box" compliance [26] | Science- and risk-based understanding [2] |

| Temporal Nature | One-time event at method completion [26] | Continuous process throughout method lifespan [26] [2] |

| Primary Focus | Demonstrating acceptance criteria are met [26] | Ensuring ongoing fitness for purpose [26] |

| Key Planning Tool | Validation protocol [2] | Analytical Target Profile (ATP) [2] |

| Knowledge Management | Limited connection between development and validation [26] | Enhanced approach leveraging prior knowledge [2] [27] |

| Change Management | Complex, often requiring prior approval [27] | Facilitated through established conditions (ECs) and PACMPs [27] |

Impact on Drug Substance Assay Validation

For drug substance assay research, the lifecycle approach introduces several transformative concepts that fundamentally change how methods are developed, validated, and maintained. The Analytical Target Profile (ATP) serves as the cornerstone of this approach—a prospective summary that describes the intended purpose of an analytical procedure and its required performance characteristics [2]. By defining the ATP at the start of method development, researchers can ensure the method is designed to be fit-for-purpose from the very beginning [2].

The concept of "reportable result" represents another significant shift, forcing scientists to validate what they actually use for quality decisions, not just individual measurements [26]. For a drug substance assay, this means validating the precision and accuracy of the final reported value (e.g., the mean of duplicate sample preparations), rather than just demonstrating acceptable performance for individual injections [26]. This distinction is crucial because a method might show excellent repeatability for individual injections while exhibiting problematic variability when the full analytical procedure is executed under intermediate precision conditions.

The replication strategy concept further enhances this approach by ensuring that validation studies employ the same replication scheme that will be used for routine sample analysis to generate reportable results [26]. This alignment brings validation studies closer to "work-as-done" rather than "work-as-imagined," creating a more realistic assessment of method performance under actual operating conditions.
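
The effect of the replication strategy on the reportable result can be illustrated with a simple nested variance model. The sketch assumes two independent variance components, preparation-to-preparation and injection-to-injection, and estimates the standard deviation of a reportable result defined as the mean of n preparations with m injections each. The component values and the function name `reportable_result_sd` are hypothetical.

```python
from math import sqrt


def reportable_result_sd(sd_prep, sd_inj, n_preps, n_inj_per_prep):
    """SD of the mean of n_preps preparations, each injected n_inj_per_prep times.

    Assumes independent preparation and injection variance components.
    """
    variance = sd_prep ** 2 / n_preps + sd_inj ** 2 / (n_preps * n_inj_per_prep)
    return sqrt(variance)


# Hypothetical components (% of target): 0.60 between preparations, 0.30 between injections.
single = reportable_result_sd(0.60, 0.30, n_preps=1, n_inj_per_prep=1)
duplicate = reportable_result_sd(0.60, 0.30, n_preps=2, n_inj_per_prep=1)
print(f"Single preparation, single injection: SD = {single:.3f}")
print(f"Mean of duplicate preparations:       SD = {duplicate:.3f}")
```

Under these assumptions, adding injections reduces only the injection term, which is why validating individual injections alone can misstate the precision of the value actually reported.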

Implementation Framework and Experimental Protocols

Stage 1: Analytical Procedure Design with Enhanced Approaches

The initial stage of the analytical procedure lifecycle focuses on designing a robust method based on a clearly defined ATP and enhanced understanding of method parameters. The following protocol outlines the systematic approach for drug substance assay development.

Table 2: Analytical Target Profile for a Small Molecule Drug Substance Assay

| ATP Element | Specification | Justification |

|---|---|---|

| Intended Purpose | Quantification of active pharmaceutical ingredient (API) in drug substance release testing | Required for batch release specification |

| Measurement Type | % (w/w) of drug substance | Consistent with regulatory filing requirements |

| Accuracy | Mean recovery 98.0-102.0% | Based on product quality requirements |

| Precision | RSD ≤ 1.0% for repeatability; RSD ≤ 2.0% for intermediate precision | Justified by manufacturing process capability |

| Specificity | No interference from known impurities, degradation products, or excipients | Ensures selective measurement of API |

| Linearity Range | 70-130% of target assay concentration | Brackets the 80-120% interval normally specified for assay, with additional margin on both sides |

| Quantitation Limit | ≤ 0.5% of target concentration | Ensures adequate control of potential impurities |

Protocol 1: Enhanced Analytical Procedure Development for Drug Substance Assay

Objective: To develop a stability-indicating HPLC method for drug substance assay using enhanced, science-based approaches that facilitate lifecycle management.

Materials and Reagents:

- Drug substance reference standard (characterized for purity)

- Known impurities and degradation products (if available)

- HPLC-grade solvents (acetonitrile, methanol, water)

- Buffer salts (e.g., potassium phosphate, ammonium acetate)

- pH adjustment solutions (e.g., phosphoric acid, sodium hydroxide)

Equipment:

- HPLC system with photodiode array (PDA) or equivalent detector

- Analytical balance (calibrated)

- pH meter (calibrated)

- Ultrasonic bath for degassing and dissolution

Experimental Design:

- Risk Assessment: Conduct initial risk assessment using methodology aligned with ICH Q9 to identify high-risk method parameters that may impact method performance (e.g., column temperature, mobile phase pH, gradient profile).

- Design of Experiments (DoE): Implement a structured DoE to evaluate the effect of critical method parameters on key performance responses (e.g., resolution, peak asymmetry, runtime). A central composite design is often appropriate for this purpose.

- Forced Degradation Studies: Subject the drug substance to stress conditions (acid, base, oxidation, thermal, photolytic) to generate degradation products and validate the stability-indicating capability of the method.

- Method Optimization: Based on DoE results, establish the method operable design region (MODR) for each critical parameter that ensures robust method performance.

Data Analysis:

- Construct mathematical models describing the relationship between method parameters and performance attributes.

- Identify the MODR where the method meets all ATP requirements.

- Document all development data and decisions in a method development report.
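
As an illustration of the central composite design mentioned in the experimental design, the sketch below generates the run list for a rotatable design: factorial points at coded levels of -1 and +1, axial points at a distance of (2^k)^(1/4), and replicated center points. The factor names and ranges are hypothetical, and dedicated DoE software would normally also randomize run order and fit the response model.

```python
from itertools import product


def central_composite(factors, n_center=3):
    """Build a rotatable central composite design.

    factors maps a factor name to its (low, high) range, which corresponds
    to coded levels -1 and +1. Returns a list of runs in real units.
    """
    names = list(factors)
    k = len(names)
    alpha = (2 ** k) ** 0.25  # axial distance for a rotatable design

    coded = [list(p) for p in product((-1.0, 1.0), repeat=k)]  # factorial points
    for i in range(k):  # two axial points per factor
        for a in (-alpha, alpha):
            point = [0.0] * k
            point[i] = a
            coded.append(point)
    coded += [[0.0] * k for _ in range(n_center)]  # replicated center points

    runs = []
    for point in coded:
        run = {}
        for name, c in zip(names, point):
            low, high = factors[name]
            center, half = (low + high) / 2, (high - low) / 2
            run[name] = round(center + c * half, 3)
        runs.append(run)
    return runs


design = central_composite({"column_temp_C": (25.0, 35.0), "mobile_phase_pH": (2.8, 3.2)})
for run in design:
    print(run)
```

Note that the axial points fall outside the stated low and high values, so those settings must still be physically feasible for the column and instrument.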

Stage 2: Procedure Performance Qualification (Validation)

The second stage of the lifecycle involves formal validation of the analytical procedure to demonstrate it is fit for its intended purpose as defined in the ATP.

Protocol 2: Lifecycle-Based Validation for Drug Substance Assay

Objective: To qualify the performance of the developed HPLC method for drug substance assay according to the ATP requirements and ICH Q2(R2) recommendations.

Validation Parameters and Experiments:

- Specificity: Inject individually solutions of the drug substance, known impurities, degradation products from forced degradation studies, and mobile phase blanks. Demonstrate baseline separation between the analyte and potential interferants, and establish that the method is stability-indicating.

- Linearity and Range: Prepare and analyze standard solutions at a minimum of five concentration levels spanning the range of 70-130% of the target assay concentration. Plot peak response versus concentration and calculate correlation coefficient, y-intercept, and slope of the regression line.

- Accuracy: Prepare recovery samples at three concentration levels (80%, 100%, 120%) in triplicate by spiking known amounts of drug substance reference standard into placebo (if applicable) or diluent. Calculate percentage recovery for each level and overall mean recovery.

- Precision:

  - Repeatability: Analyze six independent preparations at 100% of test concentration by the same analyst on the same day. Calculate %RSD of assay results.

  - Intermediate Precision: Perform the same analysis on different days, by different analysts, or using different instruments. Incorporate the same replication strategy that will be used for routine reportable results [26].

- Quantitation Limit (QL): Determine the lowest amount of analyte that can be quantified with acceptable accuracy and precision, typically using signal-to-noise ratio of 10:1 or based on the standard deviation of the response and the slope.

- Robustness: Introduce small, deliberate variations in critical method parameters (e.g., mobile phase composition ±2%, temperature ±5°C, flow rate ±10%) to evaluate method resilience.
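
For the quantitation-limit step in the list above, the approach based on the standard deviation of the response and the slope is usually written as QL = 10σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve. The sketch below assumes that relationship; the input values are hypothetical, and the result is in the same concentration units used to fit the slope.

```python
def quantitation_limit(sigma, slope):
    """QL = 10 * sigma / slope, in the concentration units of the calibration curve."""
    if slope <= 0:
        raise ValueError("slope must be positive")
    return 10.0 * sigma / slope


# Hypothetical inputs: residual SD of the response and calibration slope.
ql = quantitation_limit(sigma=0.5, slope=9.992)
print(f"QL = {ql:.3f} (concentration units)")
```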

Data Analysis and Acceptance Criteria: All validation data should be evaluated against pre-defined acceptance criteria derived from the ATP. For a drug substance assay, typical acceptance criteria include:

- Specificity: Resolution > 2.0 between analyte and closest eluting peak

- Linearity: Correlation coefficient (r) ≥ 0.999

- Accuracy: Mean recovery 98.0-102.0%

- Precision: RSD ≤ 1.0% for repeatability; RSD ≤ 2.0% for intermediate precision

Table 3: Validation Report Summary for Drug Substance Assay

| Validation Parameter | Results Obtained | Acceptance Criteria | Status |
|---|---|---|---|
| Specificity | Resolution > 2.5 from all known impurities; No interference at retention time | Resolution > 2.0; No interference | Pass |
| Linearity | r = 0.9998; y-intercept not significantly different from zero (p > 0.05) | r ≥ 0.999 | Pass |
| Range | 70-130% of test concentration | 70-130% | Pass |
| Accuracy | Mean recovery 99.8% (Range 99.2-100.5%) | 98.0-102.0% | Pass |
| Repeatability | RSD = 0.45% (n=6) | RSD ≤ 1.0% | Pass |
| Intermediate Precision | RSD = 0.78% (n=12, across two analysts/two days) | RSD ≤ 2.0% | Pass |
| Robustness | All variations met system suitability; Method robust within established MODR | Meet system suitability under all conditions | Pass |

Stage 3: Continued Procedure Performance Verification

The third stage of the lifecycle ensures the method remains in a state of control throughout its operational use and accommodates necessary improvements or technology updates.

Protocol 3: Ongoing Monitoring and Change Management

Objective: To ensure the continued fitness for purpose of the drug substance assay method throughout its lifecycle and facilitate science-based change management.

System Suitability Testing (SST):

- Establish SST parameters based on the ATP and validation knowledge (e.g., tailing factor, theoretical plates, %RSD for replicate injections)

- Require SST execution before each analytical run

- Implement trending of SST parameters to detect potential performance drift
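A system suitability check of the kind listed above might be sketched as follows. The tailing factor and plate-count formulas are the conventional pharmacopoeial ones (width at 5% height and width at half height, respectively); the numerical limits and peak measurements are hypothetical placeholders, since actual SST limits should come from the ATP and validation data.

```python
import statistics

def tailing_factor(w_005, f):
    """Tailing factor: peak width at 5% height / (2 * front half-width at 5% height)."""
    return w_005 / (2.0 * f)

def plate_count(t_r, w_half):
    """Theoretical plates from retention time and peak width at half height."""
    return 5.54 * (t_r / w_half) ** 2

def injection_rsd(areas):
    """%RSD of peak areas from replicate injections."""
    return 100.0 * statistics.stdev(areas) / statistics.mean(areas)

# Hypothetical limits for illustration; not taken from the source
SST_LIMITS = {"max_tailing": 2.0, "min_plates": 2000.0, "max_rsd_pct": 1.0}

def evaluate_sst(t_r, w_half, w_005, f, areas, limits=SST_LIMITS):
    """Return a pass/fail flag for each SST parameter."""
    return {
        "tailing": tailing_factor(w_005, f) <= limits["max_tailing"],
        "plates": plate_count(t_r, w_half) >= limits["min_plates"],
        "injection_rsd": injection_rsd(areas) <= limits["max_rsd_pct"],
    }

# Hypothetical measurements (times and widths in minutes)
checks = evaluate_sst(
    t_r=6.2, w_half=0.12, w_005=0.30, f=0.13,
    areas=[10012.0, 10030.0, 9998.0, 10021.0, 10007.0],
)
run_may_proceed = all(checks.values())
print(checks, "-> proceed" if run_may_proceed else "-> investigate")
```

Storing the computed values for each run, rather than only the pass/fail flags, is what makes the SST trending described above possible.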

Ongoing Data Collection and Trend Analysis:

- Monitor assay results for the drug substance over time using control charts

- Periodically assess precision through duplicate or replicate testing

- Document all deviations and investigations
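One common way to implement the control-chart monitoring mentioned above is an individuals chart with limits derived from the average moving range. The sketch below assumes that chart type (the source does not specify one) and uses hypothetical assay results.

```python
import statistics

def individuals_chart_limits(baseline):
    """Control limits for an individuals chart from a baseline data set.

    Limits are center +/- 2.66 * mean moving range, where 2.66 = 3 / d2
    and d2 = 1.128 for moving ranges of two consecutive results.
    """
    center = statistics.mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    half_width = 2.66 * statistics.mean(moving_ranges)
    return center - half_width, center, center + half_width

def flag_out_of_control(values, lcl, ucl):
    """Return the indices of results falling outside the control limits."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]

# Hypothetical historical assay results (% of label claim) for the baseline
baseline = [99.8, 100.1, 99.9, 100.3, 100.0, 99.7, 100.2, 100.0]
lcl, center, ucl = individuals_chart_limits(baseline)

# Hypothetical new results to be checked against the baseline limits
new_results = [100.1, 99.6, 101.2, 100.0]
flagged = flag_out_of_control(new_results, lcl, ucl)

print(f"LCL={lcl:.2f}  center={center:.2f}  UCL={ucl:.2f}")
print("Out-of-control indices:", flagged)
```

A flagged point is a trigger for investigation, not in itself evidence that the method has failed.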

Change Management Process:

- Risk Assessment: Evaluate proposed changes based on potential impact to the ATP and product quality

- Bridging Studies: Design appropriate comparative studies to demonstrate equivalence between original and modified methods

- Regulatory Assessment: Determine reporting category based on established conditions (ECs) and product lifecycle management (PLCM) documents [27]

- Implementation: Execute change following approved protocols and documentation requirements
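For the bridging studies listed above, one possible statistical approach is an equivalence test based on a 90% confidence interval for the mean difference (the two one-sided tests, or TOST, procedure). The sketch below assumes that approach, equal variances, and a hypothetical equivalence margin of ±1.0%; the data are invented, and the t critical value is supplied by the user from statistical tables because the standard library has no t-distribution.

```python
import math
import statistics

def mean_difference_ci(reference, candidate, t_crit):
    """Confidence interval for (candidate mean - reference mean), pooled variance."""
    n1, n2 = len(reference), len(candidate)
    diff = statistics.mean(candidate) - statistics.mean(reference)
    pooled_var = (
        (n1 - 1) * statistics.variance(reference)
        + (n2 - 1) * statistics.variance(candidate)
    ) / (n1 + n2 - 2)
    half_width = t_crit * math.sqrt(pooled_var * (1.0 / n1 + 1.0 / n2))
    return diff - half_width, diff + half_width

def is_equivalent(ci, margin):
    """Equivalence is concluded if the whole interval lies within +/- margin."""
    lower, upper = ci
    return -margin < lower and upper < margin

# Hypothetical assay results (%) from the original and modified methods
original = [99.6, 100.2, 99.9, 100.4, 99.7, 100.1]
modified = [99.8, 100.0, 100.3, 99.9, 100.2, 100.1]

# One-sided 95% t critical value for 10 degrees of freedom (from tables)
T_CRIT_DF10 = 1.812
MARGIN = 1.0  # hypothetical equivalence margin in % assay

ci = mean_difference_ci(original, modified, T_CRIT_DF10)
equivalent = is_equivalent(ci, MARGIN)
print(f"90% CI for mean difference: {ci[0]:.2f} to {ci[1]:.2f} -> equivalent: {equivalent}")
```

The equivalence margin should be justified from the ATP before the study is run, not chosen after the data are seen.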

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagent Solutions for Lifecycle-Based Method Validation

| Reagent/Material | Function in Validation | Lifecycle Considerations |
|---|---|---|
| Well-Characterized Reference Standard | Primary standard for quantification; basis for accuracy determination | Requires ongoing monitoring of stability and qualification of new lots; critical for long-term method consistency |
| System Suitability Test Mixtures | Verification of method performance before each use | Should contain key analytes and critical separations; may need updates based on knowledge gained during lifecycle |
| Known Impurity Standards | Specificity demonstration and quantification of impurities | Portfolio should expand as new impurities are identified; establishes method selectivity |
| Stability-Indicating Stress Samples | Specificity verification under forced degradation conditions | Confirms method remains stability-indicating; may be used for tech transfer and troubleshooting |
| Quality Control Check Samples | Ongoing verification of method performance | Monitors method precision and accuracy over time; establishes historical performance baselines |

The transition from traditional validation to analytical procedure lifecycle management represents a fundamental evolution in how the pharmaceutical industry ensures analytical quality. This shift from a "check-the-box" compliance exercise to a science-based, holistic approach enhances method robustness, facilitates continuous improvement, and ultimately provides greater assurance of product quality and patient safety. By implementing the frameworks, protocols, and tools outlined in this application note, researchers and drug development professionals can successfully navigate this paradigm shift and build analytical methods that remain fit-for-purpose throughout their entire operational lifespan.

The successful adoption of lifecycle approaches requires organizational commitment to enhanced method development, robust knowledge management, and science-based change management practices. As regulatory frameworks continue to evolve toward these principles, organizations that embrace these concepts early will benefit from more efficient method maintenance, more flexible post-approval changes, and ultimately, more reliable analytical data for critical quality decisions.

Modern Method Development and Application: Implementing QbD, AI, and Advanced Technologies

Adopting a Quality-by-Design (QbD) Framework for Robust Analytical Procedure Development

Analytical Quality by Design (AQbD) represents a paradigm shift in the development of analytical methods, moving away from traditional, empirical approaches toward a systematic, proactive, and risk-based framework. Rooted in the principles of Quality by Design (QbD), which was formally introduced to the pharmaceutical industry through ICH Q8-Q11 guidelines, AQbD aims to ensure the quality of analytical methods through deliberate design rather than relying solely on end-product testing [28] [29]. This approach begins with predefined objectives and emphasizes method understanding and control based on sound science and quality risk management [30] [31].

The traditional trial-and-error approach to analytical method development is often time-consuming and resource-intensive, and the resulting methods may lack reproducibility, potentially leading to out-of-trend (OOT) and out-of-specification (OOS) results during routine application [31]. In contrast, AQbD provides a structured framework for building quality into the analytical method from the outset, focusing on understanding the relationship between Critical Method Parameters (CMPs) and Critical Method Attributes (CMAs) [31]. This enhanced understanding leads to more robust methods that remain reliable throughout their lifecycle, ultimately supporting the broader product development lifecycle and ensuring consistent drug quality [30] [32].

For drug substance assay research, implementing AQbD is particularly valuable as it directly impacts the reliability of critical quality attribute (CQA) data used to make decisions about drug safety and efficacy. Regulatory agencies, including the FDA and EMA, actively encourage the implementation of QbD principles, recognizing their potential to enhance product quality and facilitate continuous improvement [33] [29].

Core Principles and Workflow of AQbD

The AQbD Workflow

The implementation of AQbD follows a systematic workflow that parallels the QbD approach for pharmaceutical products but is tailored to analytical method development. This workflow ensures that method performance requirements are clearly defined, potential risks are identified and mitigated, and method conditions are optimized to produce reliable, high-quality data [30] [31].

The following diagram illustrates the comprehensive AQbD workflow, from defining measurement requirements to establishing a control strategy for ongoing method verification:

Key Principles of AQbD

Several key principles distinguish AQbD from traditional method development approaches:

- Proactive Design: Quality is built into the analytical method during the design phase rather than verified solely through retrospective testing [29] [31].

- Risk-Based Approach: Systematic risk assessment tools are employed to identify and prioritize factors that may impact method performance, allowing resources to be focused on the most critical variables [30] [31].

- Scientific Understanding: The functional relationships between method inputs (parameters) and outputs (performance characteristics) are established through structured experimentation [28] [30].

- Lifecycle Management: Method performance is continuously monitored and verified throughout its operational life, with provisions for continuous improvement [30] [31].

These principles collectively ensure that analytical methods developed under the AQbD framework are robust, reproducible, and capable of providing reliable data to support decision-making in drug development [30].

Implementation Protocol for AQbD in Drug Substance Assay

Stage 1: Method Design and Development

Define the Analytical Target Profile (ATP)

The Analytical Target Profile serves as the foundation for all AQbD activities. It is a prospective summary of the analytical method's requirements, defining the characteristics that the method must demonstrate to be fit for its intended purpose [30] [31]. The ATP should directly align with the Quality Target Product Profile (QTPP) of the drug product and specify the required quality of the measurement needed to evaluate Critical Quality Attributes (CQAs) of the drug substance [31].

Protocol for ATP Definition:

- Identify the Analyte: Clearly define the drug substance and any potential impurities, degradation products, or related substances that require quantification [31].

- Establish Performance Criteria: Define the required performance characteristics for the method, including:

  - Accuracy: Typically ±10% of the true value for drug substance assay [30]

  - Precision: Relative standard deviation (RSD) not more than 2% for repeatability [30]

  - Specificity: Ability to unequivocally quantify the analyte in the presence of impurities, degradation products, and matrix components [30] [31]

  - Linearity and Range: Typically a coefficient of determination (r²) > 0.998 over the specified range (e.g., 50-150% of target concentration) [30]

  - Detection and Quantitation Limits: Based on signal-to-noise ratio or standard deviation of the response [30]

- Document the ATP: Create a comprehensive ATP document that will guide all subsequent development activities and serve as a reference for method validation [30].
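One way to make the ATP usable by later development and validation steps is to record it as a structured object rather than free text. The sketch below is a hypothetical representation: the class and field names are invented for illustration, and the default values simply mirror the typical criteria quoted in the list above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container for ATP performance criteria."""
    analyte: str
    accuracy_tolerance_pct: float = 10.0      # +/- % of true value
    max_repeatability_rsd_pct: float = 2.0    # % RSD, not more than
    min_r_squared: float = 0.998              # r^2 must exceed this value
    range_pct_of_target: tuple = (50.0, 150.0)

    def unmet_criteria(self, bias_pct, rsd_pct, r_squared):
        """Return the names of criteria that the observed results fail."""
        failures = []
        if abs(bias_pct) > self.accuracy_tolerance_pct:
            failures.append("accuracy")
        if rsd_pct > self.max_repeatability_rsd_pct:
            failures.append("precision")
        if r_squared <= self.min_r_squared:
            failures.append("linearity")
        return failures

# "Compound X" and the observed results are placeholders
atp = AnalyticalTargetProfile(analyte="Compound X")
print(atp.unmet_criteria(bias_pct=0.4, rsd_pct=0.6, r_squared=0.9995))
print(atp.unmet_criteria(bias_pct=0.4, rsd_pct=2.5, r_squared=0.9900))
```

Freezing the dataclass reflects the idea that the ATP is fixed prospectively; changing it should be a deliberate, documented act rather than an in-place edit.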

Identify Critical Quality Attributes (CQAs)

CQAs for analytical methods are physical, chemical, biological, or microbiological properties or characteristics that should be within an appropriate limit, range, or distribution to ensure the desired product quality [31]. For drug substance assay methods, CQAs typically include parameters such as accuracy, precision, specificity, and robustness [31].

Protocol for CQA Identification:

- Link to Product CQAs: Identify how analytical method attributes relate to the drug substance CQAs [28] [31].

- Prioritize Method CQAs: Use risk assessment to prioritize which method attributes are truly critical to ensuring the method meets its ATP [30] [31].

Risk Assessment

Risk assessment is a fundamental component of AQbD that systematically identifies and evaluates potential sources of variability in analytical method performance [31]. This process enables developers to focus experimental efforts on the most critical factors.

Protocol for Risk Assessment:

- Identify Potential Risk Factors: Brainstorm all potential factors that could impact method performance using tools such as Ishikawa (fishbone) diagrams [31]. For a typical HPLC method for drug substance assay, consider:

  - Instrument Parameters: Detector wavelength, column temperature, flow rate, injection volume

  - Mobile Phase Factors: pH, buffer concentration, organic modifier ratio

  - Sample Preparation Factors: Extraction time, solvent composition, dilution volume

  - Environmental Factors: Temperature, humidity

  - Operator Factors: Technique, experience level [31]

- Prioritize Risk Factors: Use a structured approach such as Failure Mode Effects Analysis (FMEA) to rank factors based on their severity, occurrence, and detectability [31]. The following table illustrates a typical risk prioritization matrix:

Table 1: Risk Prioritization Matrix for Analytical Method Development

| Risk Factor | Severity (1-10) | Occurrence (1-10) | Detectability (1-10) | Risk Priority Number | Priority Level |
|---|---|---|---|---|---|
| Mobile Phase pH | 8 | 6 | 4 | 192 | High |
| Column Temperature | 7 | 5 | 3 | 105 | High |
| Flow Rate | 6 | 4 | 3 | 72 | Medium |
| Detection Wavelength | 5 | 2 | 2 | 20 | Low |
| Injection Volume | 4 | 3 | 3 | 36 | Low |

- Document Risk Assessment: Maintain comprehensive documentation of the risk assessment process, including rationale for prioritization decisions [31].
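The Risk Priority Number in Table 1 is the product of the severity, occurrence, and detectability scores. The sketch below reproduces the table's values; the priority thresholds (High at 100 or above, Medium at 50 or above) are assumptions chosen only because they are consistent with the levels shown in the table, and are not stated in the source.

```python
def risk_priority_number(severity, occurrence, detectability):
    """RPN = severity x occurrence x detectability (each scored 1-10)."""
    return severity * occurrence * detectability

def priority_level(rpn, high=100, medium=50):
    """Map an RPN to a priority level using assumed thresholds."""
    if rpn >= high:
        return "High"
    if rpn >= medium:
        return "Medium"
    return "Low"

# Scores taken from Table 1: (severity, occurrence, detectability)
risk_factors = {
    "Mobile Phase pH": (8, 6, 4),
    "Column Temperature": (7, 5, 3),
    "Flow Rate": (6, 4, 3),
    "Detection Wavelength": (5, 2, 2),
    "Injection Volume": (4, 3, 3),
}

# Rank factors from highest to lowest RPN
ranked = sorted(
    ((name, risk_priority_number(*scores)) for name, scores in risk_factors.items()),
    key=lambda item: item[1],
    reverse=True,
)
for name, rpn in ranked:
    print(f"{name:<22} RPN={rpn:<4} {priority_level(rpn)}")
```

The factors ranked High here would typically be carried forward into the experimental design, while Low-ranked factors are fixed at nominal values.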

Stage 2: Method Qualification and Design Space Establishment

Design of Experiments (DoE)

DoE is a critical tool in AQbD that enables efficient, systematic evaluation of multiple factors and their interactions simultaneously [28] [31]. By applying statistical principles to experimental design, DoE maximizes information gain while minimizing the number of experiments required.
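As a simple illustration of evaluating several factors and their interactions together, the sketch below enumerates a two-level full factorial design. A full factorial is only one of several possible designs and is assumed here for clarity; the factor names and ranges are hypothetical.

```python
from itertools import product

def full_factorial(factors):
    """Return every combination of factor levels as a list of run dictionaries."""
    names = list(factors)
    return [
        dict(zip(names, combination))
        for combination in product(*(factors[name] for name in names))
    ]

# Hypothetical high-priority factors with low and high levels
factors = {
    "mobile_phase_pH": (2.8, 3.2),
    "column_temp_C": (25, 35),
    "flow_rate_mL_min": (0.9, 1.1),
}

runs = full_factorial(factors)  # 2^3 = 8 runs for three two-level factors
for run_number, run in enumerate(runs, start=1):
    print(run_number, run)
```

Because the number of runs doubles with each added two-level factor, fractional or screening designs are often preferred when many factors survive the risk assessment; in practice the run order would also be randomized.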

Protocol for DoE Application:

- Select Factors and Ranges: Based on the risk assessment, select the high-priority factors to include in the DoE and establish appropriate ranges for evaluation [31].

- Choose Experimental Design: Select an appropriate experimental design based on the number of factors and the objectives of the study:

- Define Responses: Identify the CQAs that will serve as responses in the experimental design [31].