Validating Simplex Optimization: Strategies for Accuracy and Reliability in Drug Discovery

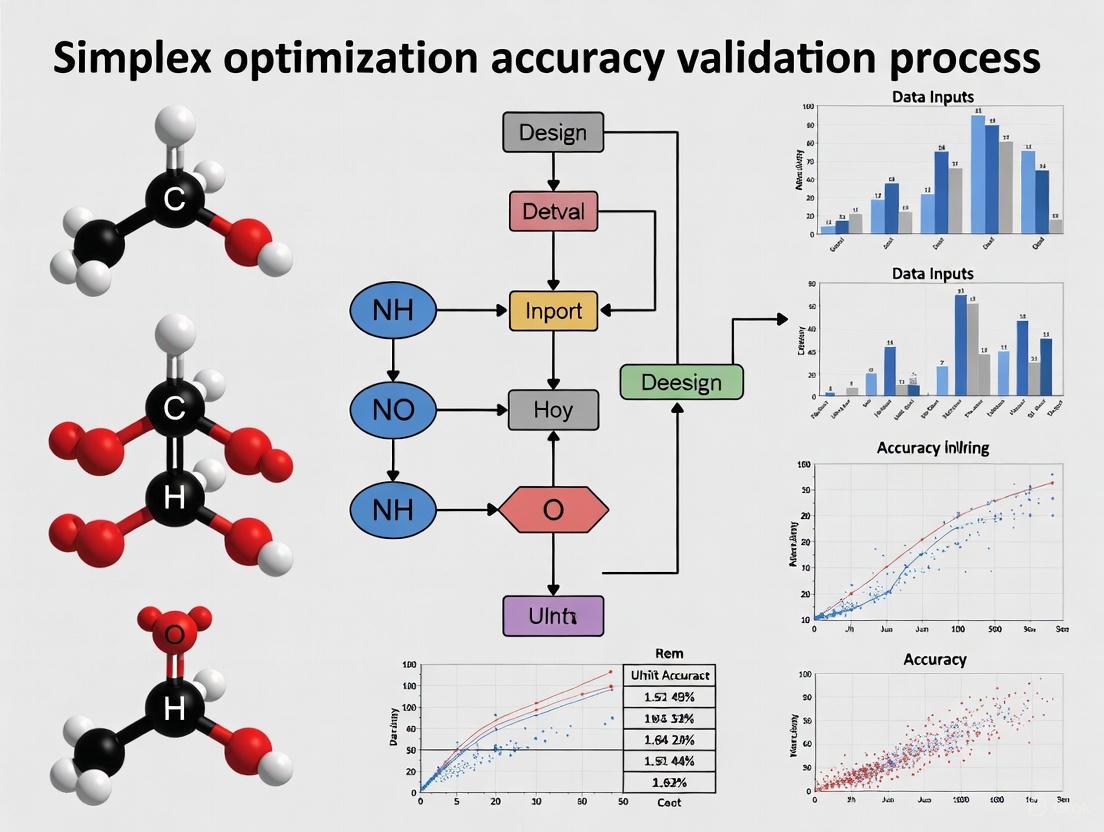



Abstract

This article provides a comprehensive framework for validating the accuracy of simplex optimization methods, crucial for ensuring reliable outcomes in drug discovery and development. It explores the foundational principles of simplex algorithms, details their methodological application in critical tasks like mixture design and machine learning model calibration, and addresses common troubleshooting challenges such as premature convergence and noise. Through comparative analysis of validation techniques and performance metrics, the article equips researchers and scientists with practical strategies to confirm the robustness of their optimization results, ultimately accelerating the development of safe and effective therapeutic agents.

Simplex Optimization Fundamentals: Core Principles for Robust Validation

Simplex-based algorithms constitute a fundamental pillar of computational optimization, providing powerful strategies for solving complex problems in logistics, engineering, and data science. This field encompasses two distinct but similarly named algorithmic families: the Nelder-Mead simplex method for derivative-free nonlinear optimization, and the simplex algorithm (or simplex method) for linear programming problems. Despite sharing the "simplex" terminology, these approaches employ fundamentally different mathematical principles and serve different application domains.

The development of simplex methodologies represents an ongoing pursuit of optimal performance in computational problem-solving. From George Dantzig's pioneering work on the simplex algorithm for linear programming in 1947 to the Nelder-Mead simplex method for unconstrained optimization in 1965, these algorithms have continuously evolved through theoretical refinements and hybrid implementations [1] [2] [3]. This guide examines the defining characteristics, performance metrics, and practical implementations of simplex-based algorithms, providing researchers with a comprehensive framework for selecting appropriate optimization strategies within scientific computing and drug development contexts.

Algorithm Fundamentals and Classification

Simplex-based optimization algorithms can be conceptually divided into two primary categories based on their mathematical foundations and application domains.

The Linear Programming Simplex Algorithm

The classical simplex algorithm, developed by George Dantzig in 1947, addresses linear programming problems through a geometric approach [1] [2]. The method rests on the fact that, when a linear program has an optimal solution, at least one optimum lies at a vertex (extreme point) of the feasible region, which is a convex polytope. The algorithm moves from one vertex to an adjacent vertex along the edges of this polytope, never worsening the objective function value, until no adjacent vertex offers an improvement [2].

The mathematical formulation begins with a linear program in standard form:

- Maximize: $\mathbf{c}^{T}\mathbf{x}$

- Subject to: $A\mathbf{x} \leq \mathbf{b}$ and $\mathbf{x} \geq \mathbf{0}$

The algorithm converts inequalities to equalities by adding slack variables, then performs pivot operations that exchange basic and nonbasic variables, moving to a vertex with an improved objective value at each non-degenerate step [2]. Provided an anti-cycling pivoting rule such as Bland's rule is used, this vertex-hopping process terminates after finitely many pivots at a global optimum, or reports that the problem is infeasible or unbounded.
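The slack-variable and pivoting steps described above can be illustrated with a small tableau implementation. This is a minimal teaching sketch, not a production solver: the function name `simplex_max` is hypothetical, it assumes every entry of `b` is non-negative (so the slack variables give a feasible starting vertex), and it uses Bland's rule for pivot selection and exact `Fraction` arithmetic to avoid rounding issues.

```python
from fractions import Fraction


def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Tableau rows are [A | I | b]; the identity block holds the slack variables
    # that turn each <= constraint into an equality.
    rows = [
        [Fraction(v) for v in A[i]]
        + [Fraction(int(i == j)) for j in range(m)]
        + [Fraction(b[i])]
        for i in range(m)
    ]
    # The objective row stores -c, so a negative entry marks an improving column.
    obj = [Fraction(-v) for v in c] + [Fraction(0)] * (m + 1)
    basis = [n + i for i in range(m)]  # the slacks form the initial basis

    while True:
        # Bland's rule: enter the lowest-index column with a negative entry.
        enter = next((j for j in range(n + m) if obj[j] < 0), None)
        if enter is None:
            break  # no improving column left: the current vertex is optimal
        # Ratio test chooses the leaving row; ties go to the lowest basis index.
        candidates = [
            (rows[i][-1] / rows[i][enter], basis[i], i)
            for i in range(m)
            if rows[i][enter] > 0
        ]
        if not candidates:
            raise ValueError("problem is unbounded")
        leave = min(candidates)[2]
        # Pivot: scale the leaving row, then eliminate the column elsewhere.
        pivot = rows[leave][enter]
        rows[leave] = [v / pivot for v in rows[leave]]
        for i in range(m):
            if i != leave and rows[i][enter] != 0:
                factor = rows[i][enter]
                rows[i] = [a - factor * p for a, p in zip(rows[i], rows[leave])]
        factor = obj[enter]
        obj = [a - factor * p for a, p in zip(obj, rows[leave])]
        basis[leave] = enter

    x = [Fraction(0)] * n
    for i, var in enumerate(basis):
        if var < n:
            x[var] = rows[i][-1]
    return x, obj[-1]  # optimal vertex and objective value


# Illustrative problem: maximize 3x + 5y with x <= 4, 2y <= 12, 3x + 2y <= 18.
x, value = simplex_max(c=[3, 5], A=[[1, 0], [0, 2], [3, 2]], b=[4, 12, 18])
print([float(v) for v in x], float(value))
```

For real workloads an established LP solver is the better choice; the sketch only makes the mechanics of one pivot visible.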

The Nelder-Mead Nonlinear Simplex Method

In contrast to the linear programming simplex algorithm, the Nelder-Mead simplex method (1965) addresses unconstrained nonlinear optimization problems without using derivative information [3]. This direct search method employs a geometric simplex—a convex hull of n+1 vertices in n-dimensional space—that adaptively transforms through reflection, expansion, contraction, and shrinkage operations to navigate the objective landscape.

The algorithm continuously modifies the simplex based on function evaluations at each vertex, replacing the worst-performing vertex with a new point obtained through geometric transformations [3]. Unlike the linear programming simplex method, Nelder-Mead is a heuristic approach that does not guarantee convergence to a global optimum and may stagnate at non-stationary points under certain conditions, as identified by McKinnon and subsequent researchers [3].
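The reflection, expansion, contraction, and shrinkage steps can be sketched in a few dozen lines. The version below is one common textbook variant, assuming the standard coefficients (reflection 1, expansion 2, contraction 0.5, shrink 0.5) and a simple axis-aligned starting simplex; the function name, the `step` parameter, and the stopping tolerances are illustrative choices, not taken from the cited work.

```python
def nelder_mead(f, x0, step=0.5, xtol=1e-8, ftol=1e-12, max_iter=2000):
    """Minimize f from x0 with a basic Nelder-Mead simplex search."""
    n = len(x0)
    # Initial simplex: x0 plus one vertex offset along each coordinate axis.
    simplex = [list(x0)] + [
        [x0[j] + (step if j == i else 0.0) for j in range(n)] for i in range(n)
    ]
    values = [f(p) for p in simplex]

    def along(a, b, t):
        """Point a + t * (b - a), computed coordinate-wise."""
        return [ai + t * (bi - ai) for ai, bi in zip(a, b)]

    for _ in range(max_iter):
        # Sort vertices from best (lowest f) to worst.
        order = sorted(range(n + 1), key=lambda i: values[i])
        simplex = [simplex[i] for i in order]
        values = [values[i] for i in order]
        size = max(abs(p[j] - simplex[0][j]) for p in simplex[1:] for j in range(n))
        if size < xtol and values[-1] - values[0] < ftol:
            break
        worst = simplex[-1]
        centroid = [sum(p[j] for p in simplex[:-1]) / n for j in range(n)]
        reflected = along(worst, centroid, 2.0)  # mirror worst through centroid
        f_r = f(reflected)
        if f_r < values[0]:
            expanded = along(worst, centroid, 3.0)  # push further the same way
            f_e = f(expanded)
            simplex[-1], values[-1] = (expanded, f_e) if f_e < f_r else (reflected, f_r)
        elif f_r < values[-2]:
            simplex[-1], values[-1] = reflected, f_r
        else:
            # Outside contraction if the reflection beat the worst, else inside.
            t = 1.5 if f_r < values[-1] else 0.5
            contracted = along(worst, centroid, t)
            f_c = f(contracted)
            if f_c < min(f_r, values[-1]):
                simplex[-1], values[-1] = contracted, f_c
            else:
                # Shrink every vertex halfway toward the best one.
                best = simplex[0]
                simplex = [best] + [along(best, p, 0.5) for p in simplex[1:]]
                values = [values[0]] + [f(p) for p in simplex[1:]]

    best_index = min(range(n + 1), key=lambda i: values[i])
    return simplex[best_index], values[best_index]


# Illustrative use on a smooth bowl with its minimum at (1, -2).
x_best, f_best = nelder_mead(lambda p: (p[0] - 1) ** 2 + (p[1] + 2) ** 2, [0.0, 0.0])
print(x_best, f_best)
```

Because the method is a heuristic, a result like this should be checked, for example by restarting from the returned point or from several different initial simplices.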

Table: Fundamental Characteristics of Simplex Algorithm Types

| Feature | Linear Programming Simplex | Nelder-Mead Nonlinear Simplex |
|---|---|---|
| Problem Domain | Linear programming | Nonlinear derivative-free optimization |
| Mathematical Basis | Linear algebra & vertex enumeration | Geometric transformations (reflection, expansion, contraction) |
| Solution Guarantees | Global optimum (finite convergence) | Possibly converges to non-stationary points |
| Primary Applications | Logistics, resource allocation, supply chain | Parameter tuning, engineering design, experimental calibration |
| Theoretical Complexity | Exponential worst-case, polynomial smoothed complexity | No general convergence guarantee |

Performance Analysis and Comparison

The practical utility of optimization algorithms depends critically on their computational performance across various problem domains. This section examines empirical results and theoretical efficiency for simplex-based methods.

Computational Efficiency of Linear Programming Simplex

The simplex algorithm for linear programming demonstrates a remarkable discrepancy between theoretical worst-case complexity and observed practical performance. Klee and Minty (1972) established that the worst-case complexity grows exponentially with problem size, requiring the algorithm to visit all $2^n$ vertices of the feasible polytope for certain pathological problems [4]. This theoretical limitation, however, rarely manifests in practical applications.

In 2001, Spielman and Teng revolutionized the theoretical understanding of simplex performance through smoothed analysis, demonstrating that when inputs undergo slight random perturbations, the expected running time becomes polynomial [1] [4]. This breakthrough explained the paradoxical contrast between exponential worst-case bounds and consistently efficient practical performance. Recent research by Huiberts and Bach has further refined these bounds, providing stronger mathematical justification for the observed efficiency of simplex implementations in commercial solvers [1].

Nelder-Mead Performance in Engineering Applications

The Nelder-Mead simplex method excels in applications requiring derivative-free optimization of nonlinear systems. In microwave engineering, a recent hybrid approach combining simplex-based regressors with dual-fidelity electromagnetic simulations achieved remarkable efficiency, solving complex design problems in approximately 50 simulations—dramatically fewer than population-based metaheuristics that typically require thousands of evaluations [5].

Similar performance advantages appear in data clustering applications, where a simplex-enhanced cuttlefish optimization algorithm (SMCFO) demonstrated superior accuracy and convergence speed compared to particle swarm optimization (PSO) and other metaheuristics across 14 standard datasets [6]. The integration of Nelder-Mead operations within the bio-inspired algorithm enhanced local search capability while maintaining global exploration, reducing premature convergence issues that plague many nature-inspired approaches.

Table: Experimental Performance Comparison Across Applications

| Application Domain | Algorithm | Performance Metrics | Reference |
|---|---|---|---|
| Microwave Circuit Design | Simplex with surrogate models | ~50 EM simulations (vs. 1000+ for alternatives) | [5] |
| Data Clustering | SMCFO (Simplex-enhanced CFO) | Higher accuracy, faster convergence vs. PSO, SSO, SMSHO | [6] |
| Linear Programming | Randomized Simplex | Polynomial expected time under smoothed analysis | [1] [4] |
| General MIDFO | MISO, NOMAD | Best performers on large-scale mixed-integer problems | [7] |

Hybrid Implementations and Modified Algorithms

The integration of simplex methodologies with other optimization approaches has generated powerful hybrid algorithms that leverage complementary strengths.

Simplex-Enhanced Metaheuristics

A novel cuttlefish optimization algorithm (SMCFO) enhanced with simplex operations demonstrates the value of hybrid approaches for data clustering applications. This implementation partitions the population into four subgroups, with one subgroup specifically employing the Nelder-Mead method to refine solution quality while other subgroups maintain exploration diversity [6]. This selective integration substitutes conventional stochastic operations with deterministic reflection, expansion, contraction, and shrinkage operations, significantly improving local search capability without compromising global exploration.

Experimental results across 14 datasets from the UCI Machine Learning Repository confirmed that SMCFO consistently outperformed established clustering algorithms including PSO, SSO, and standard CFO in accuracy, convergence speed, and solution stability [6]. The algorithm's superior performance was statistically validated through nonparametric tests, demonstrating that simplex enhancement effectively addresses premature convergence limitations in bio-inspired optimization.

Surrogate-Assisted Simplex Methods

Computationally expensive engineering applications have motivated the development of surrogate-assisted simplex approaches. In microwave optimization, researchers have implemented simplex-based regressors that model circuit operating parameters rather than complete frequency responses, dramatically improving optimization reliability while reducing computational costs [5]. This approach regularizes the objective function landscape, facilitating more efficient identification of optimal designs.

The methodology employs dual-fidelity electromagnetic simulations, using lower-resolution models for global exploration and initial surrogate construction, while reserving high-resolution simulations for final parameter tuning [5]. This strategic allocation of computational resources, combined with restricted sensitivity updates based on principal directions, enables globalized optimization with unprecedented efficiency—addressing a fundamental challenge in simulation-based engineering design.
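The two-stage allocation of evaluations can be illustrated with a toy example. Everything in the sketch below is hypothetical: `low_fidelity` and `high_fidelity` are one-dimensional stand-in functions rather than electromagnetic models, and the refinement step is a plain step-halving local search, not the simplex-surrogate procedure of [5]. It only shows the idea of screening globally with the cheap model and spending expensive evaluations near the most promising candidates.

```python
import math


def high_fidelity(x):
    """Stand-in for an expensive simulation (hypothetical test function)."""
    return (x - 2.0) ** 2 + 0.3 * math.sin(8.0 * x)


def low_fidelity(x):
    """Cheap, slightly biased approximation of the same response (hypothetical)."""
    return (x - 2.1) ** 2


def dual_fidelity_search(lo, hi, n_coarse=41, n_keep=3, step=0.1, min_step=1e-4):
    counts = {"low": 0, "high": 0}
    # Stage 1: global screening on a grid, using only the cheap model.
    grid = [lo + i * (hi - lo) / (n_coarse - 1) for i in range(n_coarse)]
    counts["low"] += len(grid)
    starts = sorted(grid, key=low_fidelity)[:n_keep]

    # Stage 2: local refinement from the best starts, using the expensive model.
    best_x, best_f = None, math.inf
    for x in starts:
        fx = high_fidelity(x)
        counts["high"] += 1
        h = step
        while h > min_step:
            moved = False
            for candidate in (x - h, x + h):
                fc = high_fidelity(candidate)
                counts["high"] += 1
                if fc < fx:
                    x, fx, moved = candidate, fc, True
                    break
            if not moved:
                h /= 2  # no improvement at this scale: halve the step
        if fx < best_f:
            best_x, best_f = x, fx
    return best_x, best_f, counts


x_best, f_best, counts = dual_fidelity_search(0.0, 4.0)
print(x_best, f_best, counts)
```

Reporting the two evaluation counts separately, as `counts` does here, keeps the cost comparison between fidelity levels explicit.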

Research Reagents and Computational Tools

Implementing simplex-based optimization requires specific computational tools and methodological approaches tailored to problem characteristics.

Essential Research Reagents

Table: Computational Tools for Simplex Optimization Research

| Tool Category | Representative Examples | Research Function |
|---|---|---|
| Linear Programming Solvers | GLPK, Commercial LP solvers | Implementation of simplex method for linear programming |
| Derivative-Free Optimization Frameworks | NOMAD, MISO | MIDFO algorithm implementation with simplex components |
| Benchmark Problem Sets | UCI Repository, MIPLIB | Standardized performance evaluation and validation |
| Computational Modeling Environments | MATLAB, Python SciPy | Algorithm prototyping and experimental analysis |

The selection of appropriate computational tools significantly impacts research outcomes. For mixed-integer derivative-free optimization (MIDFO) problems, comprehensive benchmarking has identified MISO and NOMAD as top-performing solvers, with MISO excelling on large-scale and binary problems, while NOMAD performs best on mixed-integer, non-binary discrete, and small to medium-sized problems [7]. These solvers incorporate sophisticated implementations of simplex-inspired search strategies within broader optimization frameworks.

Specialized computational resources are particularly important for engineering applications such as microwave design, where evaluation costs necessitate sophisticated model management strategies. The most effective approaches employ variable-fidelity simulations, with low-resolution models enabling rapid exploration while high-resolution models verify final solutions [5]. This strategic allocation of computational resources dramatically reduces optimization costs while maintaining engineering reliability.

Experimental Protocols and Methodologies

Rigorous experimental methodology is essential for validating simplex algorithm performance across diverse application domains.

Performance Evaluation in Linear Programming

Theoretical analysis of simplex algorithm complexity requires carefully constructed worst-case problems such as the Klee-Minty cube, which forces the algorithm to visit an exponential number of vertices [4]. In contrast, practical performance evaluation employs standardized test sets including Netlib and MIPLIB problems, which represent real-world optimization scenarios.

Smoothed analysis methodology, pioneered by Spielman and Teng, introduces slight random perturbations to problem inputs, then evaluates expected running time across these perturbed instances [1] [4]. This approach has demonstrated polynomial-time expected performance for simplex variants, explaining the stark contrast between theoretical worst-case bounds and observed practical efficiency. Recent advances have reduced the exponent in polynomial complexity bounds, providing increasingly realistic performance estimates for real-world applications.

Derivative-Free Optimization Assessment

For derivative-free simplex methods, standard evaluation protocols employ benchmark problems from established repositories such as the UCI Machine Learning Repository for clustering applications [6] or specialized test suites for engineering domains [5]. Performance metrics typically include solution quality, computational cost (often measured in function evaluations), convergence rate, and algorithm reliability across multiple runs.

In microwave engineering, experimental protocols employ dual-fidelity electromagnetic simulations, using coarse-discretization models for global exploration and fine-discretization models for final verification [5]. This approach provides accurate performance assessment while managing computational costs. Validation includes comparison against multiple benchmark algorithms including nature-inspired methods, random-start local search, and alternative machine learning strategies to establish statistical significance of performance improvements.

Simplex-based optimization algorithms represent a diverse and evolving family of computational methods with demonstrated efficacy across scientific and engineering domains. The linear programming simplex algorithm continues to benefit from theoretical advances that explain its practical efficiency, while Nelder-Mead methods and their hybrid derivatives offer powerful approaches for derivative-free nonlinear optimization.

Future research directions include developing simplex variants with provable linear-time complexity for broader problem classes, enhancing hybrid algorithms through more sophisticated integration strategies, and extending simplex methodologies to emerging challenges in data science and artificial intelligence. The continued evolution of these foundational algorithms will further establish their indispensable role in the computational toolkit of researchers and practitioners across scientific disciplines.

Key Metrics for Assessing Optimization Accuracy and Convergence

Optimization algorithms form the computational backbone of modern scientific discovery, particularly in fields like drug development where identifying optimal solutions efficiently is paramount. Within this landscape, the simplex method has maintained relevance for nearly 80 years as a powerful tool for solving linear programming problems under constraints, despite long-standing theoretical concerns about its worst-case exponential runtime [1]. Contemporary research has sought to validate its practical efficiency and enhance its convergence properties. This guide provides a structured framework for assessing the accuracy and convergence of optimization algorithms, with a specific focus on recent advancements in simplex-based methods and a comparison against state-of-the-art alternatives. The evaluation is framed within a broader thesis on simplex optimization accuracy validation, providing researchers and drug development professionals with the metrics and experimental protocols needed for rigorous algorithmic comparison.

Core Metrics for Optimization Performance

Evaluating an optimization algorithm requires a multi-faceted approach that considers not just the final solution quality, but also the computational journey to reach it. The following metrics are indispensable for a comprehensive assessment.

Solution Quality Metrics: These metrics evaluate the accuracy and optimality of the solution found by the algorithm.

- Objective Function Value: The primary indicator of solution quality, representing the value of the fitness function (e.g., profit, energy, binding affinity) at the identified optimum. The proximity to a known global optimum or the best-known solution for a benchmark problem is a key measure of accuracy [6] [8].

- Constraint Violation: The degree to which the solution adheres to the problem's constraints. A valid solution must have negligible or zero constraint violation.

- Statistical Significance: In stochastic algorithms, performance must be evaluated over multiple independent runs. Non-parametric statistical tests, such as the rank-sum test, are used to confirm that performance improvements over baseline methods are not due to chance [6].

Convergence Behavior Metrics: These metrics assess the efficiency and stability of the optimization process.

- Convergence Rate: The speed at which the algorithm approaches the optimum, often visualized by plotting the best objective function value against the number of iterations or function evaluations [6]. A steeper descent indicates faster convergence.

- Computational Complexity: The computational resources required, often expressed as the number of function evaluations or wall-clock time needed to reach a solution of a given quality [1] [5]. For the simplex method, recent research has focused on showing that its expected runtime under small random perturbations of the input scales polynomially with the number of constraints, which helps explain why exponential worst-case behavior is rarely seen in practice [1].

- Stability and Variance: The consistency of an algorithm's performance across multiple runs. A lower variance in final objective values or convergence paths indicates a more robust and stable algorithm [6].
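The convergence and stability metrics above can be computed directly from logged objective values. The sketch below assumes each run is stored as a list of objective values in evaluation order (a minimization problem); the function names and the example traces are hypothetical.

```python
import statistics


def best_so_far(trace):
    """Convergence curve: running minimum of the objective after each evaluation."""
    curve, best = [], float("inf")
    for value in trace:
        best = min(best, value)
        curve.append(best)
    return curve


def evaluations_to_target(trace, target):
    """Evaluations needed to reach `target`, or None if the run never gets there."""
    for count, value in enumerate(best_so_far(trace), start=1):
        if value <= target:
            return count
    return None


def summarize_runs(traces, target):
    """Aggregate solution quality, cost, and stability over independent runs."""
    finals = [min(trace) for trace in traces]
    hits = [evaluations_to_target(trace, target) for trace in traces]
    reached = [h for h in hits if h is not None]
    return {
        "mean_final": statistics.mean(finals),
        "stdev_final": statistics.stdev(finals),  # lower means more stable
        "success_rate": len(reached) / len(traces),
        "median_evals_to_target": statistics.median(reached) if reached else None,
    }


# Made-up traces from three runs, for illustration only.
traces = [[5, 3, 4, 1], [6, 2, 2, 0.5], [7, 7, 3, 3]]
print(best_so_far(traces[0]))
print(summarize_runs(traces, target=1.0))
```

Plotting `best_so_far` against the evaluation count gives the convergence curve described above; the standard deviation of the final values is one simple stability measure.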

Comparative Analysis of Modern Optimization Algorithms

The table below provides a quantitative comparison of several contemporary optimization algorithms, highlighting their performance across key metrics on various benchmark problems.

Table 1: Performance Comparison of Modern Optimization Algorithms

| Algorithm | Core Methodology | Reported Accuracy (Sample Benchmark) | Convergence Speed | Key Strengths | Primary Applications |
|---|---|---|---|---|---|
| SMCFO [6] | Cuttlefish Optimization enhanced by Simplex Method | Higher accuracy vs. CFO, PSO, SSO, SMSHO on 14 UCI datasets | Faster convergence, improved stability | Balances global exploration and local exploitation | Data clustering, high-dimensional & nonlinear data |
| DANTE [8] | Deep Active Optimization with Neural-Surrogate-Guided Tree Exploration | 10-20% improvement over SOTA; finds global optimum in 80-100% of synthetic functions | Effective in high-dimensional spaces (up to 2000D) with limited data (~200 points) | Excels with limited, costly data; handles noncumulative objectives | Scientific discovery, alloy/drug design, complex systems |
| Simplex-Based Microwave Optimization [5] | Simplex surrogates for operating parameters & dual-fidelity EM models | Competitive design quality | High cost-efficiency (~45 EM simulations) | Exceptional computational efficiency, global search potential | Microwave circuit design, engineering optimization |
| Modern Simplex Method [1] | Randomized pivoting rules (post-Spielman-Teng) | Practical effectiveness long observed | Polynomial-time runtime guarantees | Proven practical efficiency, reliability | Logistical planning, supply-chain decisions |

Analysis of Comparative Data

The data reveals distinct performance trade-offs. Algorithm hybridization, as seen in SMCFO, demonstrates that enhancing a metaheuristic (Cuttlefish Optimization) with a deterministic local search method (Nelder-Mead Simplex) can yield superior accuracy and faster convergence across diverse datasets [6]. In contrast, data-driven approaches like DANTE showcase a remarkable ability to handle very high-dimensional problems with limited data, a common and challenging scenario in real-world scientific research [8].

Furthermore, the simplex method itself continues to evolve. Its application in novel contexts, such as using simplex-based regressors for microwave design, highlights its enduring value when adapted to specific problem structures [5]. Theoretical breakthroughs have also provided rigorous explanations for its observed practical efficiency, solidifying its position as a reliable choice for linear optimization problems [1].

Experimental Protocols for Validation

To ensure the reproducibility and validity of optimization benchmarks, a structured experimental protocol is essential. The following workflow outlines the key stages for a robust comparison.

Detailed Methodological Breakdown

Benchmark Problem Selection: The foundation of a fair evaluation is a diverse set of benchmark problems. These should include:

- Synthetic Functions: Functions with known global optima (e.g., Ackley, Rastrigin) are used to rigorously test global exploration and local exploitation capabilities. Recent studies evaluate functions with dimensionalities ranging from 20 to 2,000 [8].

- Real-World Datasets: Public repositories, such as the UCI Machine Learning Repository, provide standard datasets for evaluating performance on practical tasks like data clustering [6].

- Domain-Specific Problems: For fields like drug development, benchmarks could include molecular docking simulations or quantitative structure-activity relationship (QSAR) model fitting.
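For reference, the two synthetic functions named above have simple closed forms. The sketch below uses their standard definitions with the usual constants; both have a global minimum of 0 at the origin, which makes the distance to the known optimum easy to measure.

```python
import math


def ackley(x):
    """Ackley function: many shallow local minima, global minimum 0 at the origin."""
    n = len(x)
    mean_square = sum(v * v for v in x) / n
    mean_cos = sum(math.cos(2 * math.pi * v) for v in x) / n
    return -20.0 * math.exp(-0.2 * math.sqrt(mean_square)) - math.exp(mean_cos) + 20.0 + math.e


def rastrigin(x):
    """Rastrigin function: regular grid of local minima, global minimum 0 at the origin."""
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2 * math.pi * v) for v in x)


origin = [0.0] * 20  # a 20-dimensional test point at the known optimum
print(ackley(origin), rastrigin(origin))
```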

Performance Metric Definition: Prior to running experiments, researchers must operationalize the metrics outlined above under Core Metrics for Optimization Performance. This involves:

- Defining the threshold for a "successful" run (e.g., finding a solution within 1% of the global optimum).

- Setting a maximum budget of function evaluations or time for each run.

- Determining the number of independent runs (e.g., 30 or more) to ensure statistical power [6].

Algorithm Configuration: Each algorithm must be tuned for a fair comparison. This includes:

- Using standardized tuning procedures or hyperparameter optimization for each algorithm.

- Reporting the final parameters used for each method in the benchmark.

- Ensuring all algorithms are run on identical hardware and software environments.

Data Collection and Analysis: The execution phase involves:

- Running each algorithm on every benchmark problem for the predetermined number of trials.

- Logging the best objective value, constraint violation, and computational time at regular intervals during the optimization process.

- Performing post-hoc statistical analysis (e.g., Wilcoxon signed-rank test) on the collected data to determine the significance of observed performance differences [6].
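As an illustration of the post-hoc step, a paired Wilcoxon signed-rank test can be written with the standard library alone. The sketch below is a simplified version that assumes the large-sample normal approximation, drops zero differences, and applies no tie or continuity correction; for small samples or publication-grade results an exact implementation from a statistics package is preferable. The function name and the example data are hypothetical.

```python
import math
from statistics import NormalDist


def wilcoxon_signed_rank(a, b):
    """Two-sided paired test using the normal approximation; returns (W+, p)."""
    diffs = [x - y for x, y in zip(a, b) if x != y]  # zero differences are dropped
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    # Rank the absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean) / sd
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return w_plus, p_value


# Made-up final errors of two algorithms on the same ten benchmark problems.
errors_a = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
errors_b = [e + 0.1 * (k + 1) for k, e in enumerate(errors_a)]
print(wilcoxon_signed_rank(errors_a, errors_b))
```

The test is paired, so both algorithms must be evaluated on the same problems (or the same seeds) for the comparison to be meaningful.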

The Scientist's Toolkit: Essential Research Reagents

The following table catalogues key computational "reagents" and resources essential for conducting rigorous optimization research, as evidenced in the reviewed literature.

Table 2: Key Research Reagent Solutions for Optimization Experiments

| Reagent / Resource | Function in Research | Specific Examples / Notes |
|---|---|---|
| Benchmark Repositories | Provides standardized problems for objective algorithm comparison. | UCI Machine Learning Repository [6], Synthetic Test Functions (Ackley, Rosenbrock, etc.) [8] |
| Deep Neural Network (DNN) Surrogates | Acts as a computationally cheap proxy for expensive-to-evaluate functions, enabling optimization of complex systems. | Used in DANTE to approximate high-dimensional, nonlinear solution spaces with limited data [8] |
| Simplex-Based Regressors | Simplifies the objective function landscape by modeling key operating parameters instead of full system responses, accelerating optimization. | Employed in microwave design to model parameters like center frequency and power split ratio [5] |
| Dual/Multi-Fidelity Models | Balances evaluation cost and model accuracy; low-fidelity models for rapid exploration, high-fidelity for final validation. | Uses low & high-resolution EM simulations to pre-screen and then fine-tune microwave circuit parameters [5] |
| Non-Parametric Statistical Tests | Validates that observed performance improvements are statistically significant and not due to random chance. | Rank-sum tests used to confirm SMCFO's superior performance over baselines [6] |
| Tree Search Algorithms (e.g., NTE) | Guides the exploration of the search space by balancing the exploration of new regions with the exploitation of promising ones. | Neural-surrogate-guided tree exploration in DANTE uses a data-driven UCB for noncumulative objectives [8] |

The assessment of optimization accuracy and convergence requires a meticulous, multi-metric approach grounded in rigorous experimental protocols. The contemporary research landscape demonstrates that while the classic simplex method remains a robust and theoretically well-understood tool, its modern hybrids and entirely new paradigms like deep active optimization offer powerful alternatives. Algorithms like SMCFO and DANTE show that the strategic integration of different computational strategies—such as combining metaheuristics with local search or leveraging deep surrogates with tree search—can yield significant gains in accuracy, convergence speed, and the ability to solve previously intractable high-dimensional problems. For researchers in drug development and other computational sciences, this comparative guide provides a framework for selecting, validating, and advancing the optimization tools that will drive the next wave of scientific discovery.

The Critical Role of Validation in High-Stakes Drug Discovery

In the high-stakes world of drug discovery, validation is the critical gatekeeper determining which potential therapies advance and which are abandoned. As artificial intelligence and novel technologies compress early-stage timelines, the focus shifts from merely accelerating discovery to ensuring that accelerated candidates are genuinely efficacious and safe. This paradigm demands rigorous validation at every stage, from computational prediction to clinical confirmation. The industry's central challenge is no longer simply generating candidates but demonstrating pharmacological relevance and predicting therapeutic benefit with high confidence before committing to expensive clinical trials [9] [10]. This guide examines the validation frameworks and performance metrics defining success for modern drug discovery platforms, providing researchers with a structured approach for comparative evaluation.

Validation in AI-Driven Drug Discovery Platforms

Comparative Analysis of Leading Platforms

Table 1: AI Drug Discovery Platform Validation Metrics & Clinical Progress

| Platform | Key Validation Approach | Clinical-Stage Candidates (by end-2024) | Reported Efficiency Gains | Validation Strengths | Validation Limitations |
|---|---|---|---|---|---|
| Exscientia | Centaur AI (human-AI collaboration); patient-derived biology & phenotypic screening [9] | 8+ designed clinical compounds [9] | 70% faster design cycles; 10x fewer compounds synthesized [9] | Integrated target selection to lead optimization; ex vivo validation on patient samples [9] | Pipeline prioritization halting programs (e.g., A2A antagonist); no Phase III candidates yet [9] |
| Insilico Medicine | End-to-end AI (PandaOmics, Chemistry42); generative models for novel targets & molecules [9] | AI-designed IPF drug to Phase I in 18 months [9] | Compression of traditional ~5-year discovery to ~2 years [9] | Rapid de novo target discovery and molecule generation [9] | Steep learning curve for non-AI experts [11] |
| Recursion | Phenomics & biological data at scale; AI-driven phenotypic screening [9] | Multiple candidates in clinical stages [9] | Massive proprietary dataset for predictive accuracy [11] | High-content cellular data provides rich validation dataset [9] | Complex platform can overwhelm smaller teams [11] |
| BenevolentAI | Knowledge-graph-driven target discovery; analysis of scientific literature & clinical data [9] | Success in identifying COVID-19 treatments for repurposing [11] | Cuts development costs by up to 70% [11] | Uncovers hidden biological connections for novel target validation [11] | High dependency on input data quality [11] |

Experimental Protocols for AI Model Validation

Validating AI models in drug discovery requires moving beyond generic metrics to domain-specific evaluation protocols that account for biological complexity and imbalanced datasets [12].

Protocol 1: Addressing Imbalanced Data for Compound Activity Prediction

- Objective: To evaluate an ML model's ability to identify rare active compounds amidst abundant inactive ones.

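- Methodology: Use precision-at-K rather than overall accuracy, which a model can inflate on imbalanced data simply by predicting every compound inactive. Rank the predicted compounds and calculate precision for the top K candidates. This prioritizes the model's performance on the most critical, rare events [12].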

- Validation Data Split: Temporal validation, where the model is trained on older data and tested on newer, held-out data, simulates real-world deployment and prevents data leakage [13].
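Both parts of Protocol 1 are short enough to sketch directly. The example below assumes binary activity labels (1 for active, 0 for inactive), model scores where higher means more likely active, and records that carry an ISO-formatted date string under a hypothetical `"date"` key; the data are made up for illustration.

```python
def precision_at_k(scores, labels, k):
    """Fraction of true actives among the k highest-scoring compounds."""
    if k <= 0:
        raise ValueError("k must be positive")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    top = ranked[:k]
    return sum(label for _, label in top) / len(top)


def temporal_split(records, cutoff):
    """Train on records dated before `cutoff`, test on the rest.

    ISO dates (YYYY-MM-DD) sort correctly as plain strings.
    """
    train = [r for r in records if r["date"] < cutoff]
    test = [r for r in records if r["date"] >= cutoff]
    return train, test


# Illustrative data: two actives among five compounds.
scores = [0.90, 0.80, 0.70, 0.10, 0.05]
labels = [1, 0, 1, 0, 0]
print(precision_at_k(scores, labels, k=3))

records = [
    {"compound": "A", "date": "2021-03-01"},
    {"compound": "B", "date": "2022-06-15"},
    {"compound": "C", "date": "2023-01-10"},
]
train, test = temporal_split(records, cutoff="2022-01-01")
print(len(train), len(test))
```

In this toy set a model that labels everything inactive would reach 60% accuracy while retrieving no actives, which is the failure mode precision-at-K is meant to expose.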

Protocol 2: Rare Event Sensitivity for Toxicity Prediction

- Objective: To maximize detection of infrequent toxicological signals in transcriptomics or other omics data.

- Methodology: Optimize for rare event sensitivity and precision-weighted scoring. A case study by Elucidata demonstrated a 4x increase in detection speed for toxicological signals by implementing these custom metrics [12].
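The source does not define "precision-weighted scoring" exactly. The sketch below assumes one common reading: sensitivity (recall) on the rare positive class, combined with precision through an F-beta score in which beta below 1 weights precision more heavily. The function name, the choice of beta, and the example labels are assumptions for illustration, not the metric used in the cited case study.

```python
def rare_event_scores(y_true, y_pred, beta=0.5):
    """Sensitivity, precision, and F-beta for the positive (rare) class.

    beta < 1 weights precision more heavily than recall (an assumed choice).
    """
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    denominator = beta ** 2 * precision + sensitivity
    f_beta = (1 + beta ** 2) * precision * sensitivity / denominator if denominator else 0.0
    return {"sensitivity": sensitivity, "precision": precision, "f_beta": f_beta}


# Made-up labels: three toxic samples (1) among eight.
y_true = [1, 1, 0, 0, 0, 0, 1, 0]
y_pred = [1, 0, 0, 0, 1, 0, 1, 0]
print(rare_event_scores(y_true, y_pred))
```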

Validation of Target Engagement and Biological Mechanisms

Target Validation and Qualification Frameworks

Table 2: A Portfolio Assessment Tool for Target Validation & Qualification [10]

| Component | Key Metrics (in ascending priority) | Purpose & Rationale |
|---|---|---|
| Target Validation (Human Data) | To build confidence in the target's link to human disease | |
| Tissue Expression | 1. Protein/mRNA in relevant tissue; 2. Cell type specificity; 3. Change in disease state | Confirms the target is present and modulated in the relevant pathological context. |
| Genetics | 1. Gene association in humans; 2. Rare variant association; 3. Functional genomics | Human genetic evidence provides one of the strongest signals for a target's causal role in disease. |
| Clinical Experience | 1. Known drug pharmacology; 2. Natural history mutations; 3. Biomarker data in patients | Prior clinical evidence de-risks a target by providing direct human proof-of-concept. |
| Target Qualification (Preclinical Data) | To establish a clear role in the disease process and safety | |
| Pharmacology | 1. In vitro potency/selectivity; 2. In vivo proof of concept (PD); 3. In vivo efficacy (disease model) | Demonstrates that modulating the target produces the intended pharmacological and therapeutic effect. |
| Genetically Engineered Models | 1. KO/KI phenotype; 2. Conditional KO/KI; 3. Transgenic model phenotype | Provides causal evidence that the target is involved in the disease pathway. |
| Translational Endpoints | 1. Biomarker concordance (preclinical/clinical); 2. Imaging endpoints; 3. Clinical scales/outcomes | Ensures that findings from preclinical models can be translated and measured in human trials. |

Experimental Protocol for Cellular Target Engagement

A critical step in validation is confirming that a drug candidate physically engages its intended target in a physiologically relevant environment.

- Cellular Thermal Shift Assay (CETSA) Protocol

- Objective: To quantitatively measure drug-target engagement in intact cells or tissues, confirming direct binding and cellular permeability [14].

- Methodology:

- Cell Treatment: Incubate cells with the drug compound at varying concentrations.

- Heating: Heat aliquots of the cell suspension to a range of temperatures.

- Cell Lysis and Fractionation: Lyse heated cells and separate soluble protein from precipitated protein.

- Detection: Use immunoblotting or high-resolution mass spectrometry to quantify the target protein in the soluble fraction.

- Validation Readout: A rightward shift in the protein's thermal melting curve (a higher apparent melting temperature) indicates stabilization due to drug binding. Dose-dependent and temperature-dependent stabilization, as shown in work quantifying DPP9 engagement in rat tissue, provides robust, system-level validation [14].
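The readout step can be illustrated with a small calculation. The sketch below estimates an apparent melting temperature (Tm) as the temperature at which the soluble fraction falls to 0.5, using linear interpolation between measured points, and reports the shift between treated and vehicle samples. The temperatures and soluble fractions are hypothetical, and real analyses usually fit a sigmoidal model rather than interpolating.

```python
def melting_temperature(temps, soluble_fraction, threshold=0.5):
    """Interpolate the temperature at which the soluble fraction drops to threshold."""
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= threshold > f1:
            return t0 + (f0 - threshold) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve does not cross the threshold")


# Hypothetical normalized soluble fractions of the target protein (1.0 = unheated).
temps = [40, 44, 48, 52, 56, 60, 64]  # degrees Celsius
vehicle = [1.00, 0.95, 0.70, 0.30, 0.10, 0.03, 0.01]
treated = [1.00, 0.98, 0.90, 0.65, 0.35, 0.10, 0.02]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)
delta_tm = tm_treated - tm_vehicle  # positive values indicate thermal stabilization
print(f"Tm vehicle={tm_vehicle:.1f} C, Tm treated={tm_treated:.1f} C, shift={delta_tm:+.1f} C")
```

Repeating the calculation across compound concentrations gives the dose-dependence that the protocol treats as the stronger form of evidence.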

The following diagram illustrates the key workflow and decision points in a multi-stage validation pipeline for drug discovery.

Multi-Stage Validation Pipeline - A sequential workflow for target validation and invalidation, from in silico prediction to in vivo confirmation.

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Research Reagents for Experimental Validation

| Reagent / Solution | Function in Validation | Application Context |

|---|---|---|

| CETSA Reagents | Quantify direct drug-target engagement in physiologically relevant cellular or tissue environments [14]. | Mechanistic validation in intact cells; confirming membrane permeability and binding. |

| Patient-Derived Samples | Provide ex vivo validation on biologically relevant models, improving translational predictivity [9]. | Phenotypic screening (e.g., Exscientia's use of patient tumor samples). |

| Multi-Omics Data Suites | Enable systems biology validation through integrated analysis of genomics, proteomics, and transcriptomics data [9] [11]. | Target identification and qualification; biomarker discovery. |

| Validated Antibodies | Detect and quantify target proteins and specific post-translational modifications in immunoassays. | Immunoblotting for CETSA, immunohistochemistry, measuring protein expression. |

| High-Content Screening Assays | Provide multiparametric readouts from cell-based systems for phenotypic validation [9]. | Complex phenotypic screening; assessing efficacy and toxicity. |

Validation Through Clinical Translation

The Critical Need for Better Biomarkers

Clinical validation remains the ultimate test, yet Phase II trials have the highest failure rates (~66%), primarily due to inadequate efficacy [10]. This underscores the need for better biomarkers to objectively measure biological states and therapeutic effects early in development [10].

- Strategic Solutions for Biomarker Development:

- Embed multiple biomarkers in Phase I/IIa trials to quickly develop pharmacodynamic profiles.

- Develop synaptic and functional biomarkers in humans that provide a readout in a short timeframe.

- Target upstream processes before irreversible downstream damage occurs [10].

- Case Example - Alzheimer's Disease: Combining PET amyloid imaging with functional connectivity MRI (fc-MRI) has demonstrated that amyloid pathology is linked to neural dysfunction, providing a more functional correlate of therapeutic response than cognitive testing alone [10].

Experimental Protocol: Integrating Biomarkers in Early Trials

- Objective: To establish proof of mechanism and early signs of efficacy before large Phase III trials.

- Methodology:

- Phase I Embedded Biomarkers: Incorporate target engagement biomarkers (e.g., CETSA, imaging) to confirm the drug reaches and modulates its intended target in humans.

- Phase IIa Patient Stratification: Use molecularly defined biomarkers to enroll patient subpopulations most likely to respond, moving beyond syndromic diagnoses [10].

- Validation Endpoints: Successful modulation of the target pathway, coupled with early clinical signals in a biomarker-defined population, builds confidence for progression.

The following diagram outlines a target validation strategy based on the β-amyloid hypothesis for Alzheimer's disease, illustrating the connection between target, mechanism, and therapeutic intervention.

Alzheimer's Target Validation Pathway - A therapeutic strategy showing mGluR antagonist BCI-838 inhibiting β-amyloid42 production and promoting neurogenesis.

Validation is the indispensable thread connecting computational prediction to clinical success in drug discovery. As technologies like AI generate candidates at unprecedented speeds, the rigor of validation protocols becomes the true rate-limiting step and the most significant determinant of return on investment. The frameworks, metrics, and experimental protocols detailed in this guide provide a roadmap for researchers to critically assess platform performance and compound potential. The organizations that will lead the next wave of therapeutic innovation are those that treat validation not as a procedural hurdle, but as a strategic, integrated discipline spanning from in silico models to patient bedside. In an era of accelerated discovery, the most valuable asset is not simply the ability to generate candidates quickly, but the wisdom to validate them rigorously.

Understanding Aleatoric vs. Epistemic Uncertainty in Model Predictions

In the rigorous field of simplex optimization accuracy validation, particularly for high-stakes applications like drug development, a nuanced understanding of model uncertainty is not merely beneficial—it is essential. Predictive models are foundational to research, guiding decisions from molecular screening to clinical trial design. However, a model's prediction without a measure of its confidence is an incomplete piece of information. Uncertainty quantification (UQ) provides the necessary framework to evaluate the reliability of these predictions, enabling researchers to distinguish between high- and low-confidence outcomes.

The paradigm of uncertainty is traditionally divided into two core types: aleatoric and epistemic [15] [16]. Aleatoric uncertainty, often termed data uncertainty, captures the inherent noise or randomness in the data-generating process. It is irreducible, meaning it cannot be diminished by collecting more data from the same experimental process; it is a fundamental property of the system itself [15] [17]. In contrast, epistemic uncertainty, or knowledge uncertainty, stems from a lack of knowledge or information on the part of the model. This could be due to insufficient training data, especially in regions of feature space far from the existing training examples, or an overly simplistic model structure [16] [17]. Crucially, epistemic uncertainty is reducible by gathering more relevant data or improving the model [16].

For research scientists and drug development professionals, this distinction is a powerful tool for directing research resources. A prediction dominated by aleatoric uncertainty suggests that the underlying process is inherently stochastic, and further data collection may yield diminishing returns. Conversely, a prediction with high epistemic uncertainty flags a knowledge gap, indicating a prime opportunity for targeted experimentation or data acquisition to improve the model's foundational understanding [18]. Within the context of simplex optimization—a method used to find the optimal solution by traversing the vertices of a feasible region—accurately characterizing these uncertainties ensures that the identified optimum is not merely an artifact of model ignorance or noisy data, but a robust and reliable finding. This guide provides a comparative analysis of these uncertainties, complete with experimental data and protocols, to empower more validated and confident decision-making.

Conceptual Framework: Defining the Uncertainty Dichotomy

The following table provides a consolidated comparison of these two fundamental types of uncertainty, synthesizing perspectives from various fields including machine learning, engineering, and risk assessment [15] [16] [17].

Table 1: Core Characteristics of Aleatoric and Epistemic Uncertainty

| Feature | Aleatoric Uncertainty | Epistemic Uncertainty |

|---|---|---|

| Nature & Origin | Inherent randomness, stochasticity, or noise in the data itself [19]. | Lack of knowledge, information, or understanding in the model [17]. |

| Common Aliases | Data uncertainty, statistical uncertainty, irreducible uncertainty, stochastic uncertainty [15] [17]. | Knowledge uncertainty, systematic uncertainty, model uncertainty, reducible uncertainty [16] [17]. |

| Reducibility | Irreducible with more data from the same process/experiment [15]. | Reducible by collecting more data, especially in data-sparse regions, or improving the model [16] [17]. |

| Representation | Often modeled by placing a distribution over the model's output (e.g., predictive variance) [15]. | Often modeled by placing a distribution over the model's parameters (e.g., Bayesian neural networks) [15]. |

| Primary Cause | Noisy sensors, stochastic environmental factors, low-resolution data, or fundamental physical randomness [19]. | Sparse training data, insufficient feature representation, unobserved variables, or an inadequate model class [16]. |

| Example in Drug Dev. | Variability in a biological assay measurement due to limitations of the experimental technique. | Uncertainty in a model's prediction for a novel molecular scaffold not represented in the training set. |

It is crucial to recognize that this clear-cut dichotomy, while useful, is sometimes an oversimplification. Recent research highlights that the boundaries can be blurred, and the two uncertainties are often intertwined in practice [18]. For instance, the line between what is irreducible (aleatoric) and what is reducible (epistemic) can depend on the defined model class and the scope of the investigation [18] [20]. A quantity considered aleatoric in one model might be partly explained and its uncertainty reduced by a more complex, sophisticated model, thereby transferring some uncertainty from the aleatoric to the epistemic domain. Furthermore, in interactive systems like a chatbot for literature mining, the uncertainty about an answer can shift from epistemic to aleatoric as the system gathers more information through follow-up questions, dynamically changing the input on which the prediction is made [18]. Despite these nuances, the aleatoric-epistemic framework remains an immensely valuable starting point for reasoning about and quantifying the unknowns in our models.
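For regression models, one common way to make the distinction concrete is the law of total variance applied to an equally weighted ensemble whose members each predict a mean and a variance: the average of the member variances is read as aleatoric, and the variance of the member means as epistemic. The sketch below assumes that setup; the member outputs are hypothetical and the function name is illustrative.

```python
from statistics import fmean, pvariance


def decompose_variance(member_means, member_variances):
    """Split total predictive variance into aleatoric and epistemic parts."""
    aleatoric = fmean(member_variances)   # average noise each member predicts
    epistemic = pvariance(member_means)   # disagreement between members
    return aleatoric, epistemic, aleatoric + epistemic


# Hypothetical ensemble outputs (e.g., predicted pIC50) for two molecules.
in_distribution = decompose_variance(
    member_means=[6.1, 6.0, 6.2, 6.1],
    member_variances=[0.04, 0.05, 0.04, 0.05],
)
novel_scaffold = decompose_variance(
    member_means=[5.0, 6.5, 7.2, 4.3],
    member_variances=[0.04, 0.05, 0.04, 0.05],
)
print("in-distribution:", in_distribution)
print("novel scaffold: ", novel_scaffold)
```

In this toy case both molecules carry the same aleatoric term, but the novel scaffold shows a much larger epistemic term, which is the pattern that would argue for acquiring more data in that region.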

A Visual Guide to Uncertainty Relationships

The diagram below illustrates the fundamental relationship between predictive, aleatoric, and epistemic uncertainty, and how they are influenced by the model and data.

Quantitative Comparison: Metrics and Experimental Data

Evaluating the quality of uncertainty estimates is as important as generating them. Different metrics target different properties of a good uncertainty quantifier, and there is no universal consensus on a single best metric [21]. The table below summarizes popular evaluation metrics used in machine learning, particularly in cheminformatics.

Table 2: Metrics for Quantifying and Evaluating Uncertainty Estimates

| Metric | Measures | Interpretation | Ideal Value |

|---|---|---|---|

| Spearman's Rank Correlation [21] | The ability of uncertainty values to rank-order the absolute prediction errors. | A higher positive value suggests the model can correctly identify which predictions will have high vs. low errors. | +1.0 |

| Negative Log Likelihood (NLL) [21] | The negative log of the joint probability density of the observed data under the model's predictive distribution. | Lower values indicate the model's predicted probability distribution (with its mean and uncertainty) better explains the held-out test data. | As low as possible (no fixed lower bound for continuous densities) |

| Miscalibration Area [21] | The discrepancy between the empirical and predicted confidence levels (based on error vs. uncertainty). | A lower value indicates the model's stated uncertainty (e.g., "90% confidence interval") is more accurate and trustworthy. | 0.0 |

| Error-Based Calibration [21] | Whether the average absolute error (or RMSE) for a subset of predictions matches the expected value given their uncertainty. | A well-calibrated model shows a linear relationship where, e.g., the subset of predictions with σ ≈ 0.1 has an RMSE of about 0.1. | Slope of 1.0 |

No single metric provides a complete picture. For instance, a model can have a good Spearman's rank but be poorly calibrated, meaning its absolute uncertainty values are inaccurate [21]. Recent studies in cheminformatics suggest that error-based calibration is one of the most reliable and informative methods for UQ validation, as it directly tests the fundamental assumption that a model's stated uncertainty should correlate with its expected error [21].
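To make the metrics in Table 2 concrete, the following sketch computes Spearman's rank correlation between uncertainty and absolute error, a Gaussian NLL, and the per-bin values behind an error-based calibration check. It uses only the standard library and assumes Gaussian predictive distributions with standard deviation σ; the data are hypothetical.

```python
import math
from statistics import fmean


def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = fmean(rx), fmean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


def gaussian_nll(y_true, y_pred, sigma):
    """Mean negative log likelihood under independent Gaussian predictions."""
    terms = [
        0.5 * math.log(2 * math.pi * s ** 2) + (t - p) ** 2 / (2 * s ** 2)
        for t, p, s in zip(y_true, y_pred, sigma)
    ]
    return fmean(terms)


def calibration_bins(errors, sigma, n_bins):
    """Sort by sigma, split into bins, return (mean sigma, RMSE) for each bin."""
    pairs = sorted(zip(sigma, errors))
    size = len(pairs) // n_bins
    bins = []
    for b in range(n_bins):
        chunk = pairs[b * size:(b + 1) * size] if b < n_bins - 1 else pairs[b * size:]
        mean_sigma = fmean([s for s, _ in chunk])
        rmse = math.sqrt(fmean([e ** 2 for _, e in chunk]))
        bins.append((mean_sigma, rmse))
    return bins


# Hypothetical test-set values.
y_true = [5.0, 6.2, 7.1, 4.8, 6.9, 5.5]
y_pred = [5.1, 6.0, 7.6, 4.65, 6.1, 5.45]
sigma = [0.1, 0.2, 0.5, 0.15, 0.8, 0.05]

errors = [abs(t - p) for t, p in zip(y_true, y_pred)]
rho = spearman(sigma, errors)
nll = gaussian_nll(y_true, y_pred, sigma)
bins = calibration_bins(errors, sigma, n_bins=2)
print(f"Spearman={rho:.2f} NLL={nll:.2f} bins={bins}")
```

For a well-calibrated model, the RMSE in each bin should sit close to that bin's mean σ; shrinking every σ tenfold in this toy example leaves the ranking unchanged but makes the NLL much worse, which illustrates why ranking and calibration need separate checks.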

Experimental Protocol for Uncertainty Evaluation

The following workflow provides a generalizable methodology for evaluating aleatoric and epistemic uncertainty estimates in a predictive modeling pipeline, suitable for tasks like molecular property prediction.

1. Data Splitting and Model Training:

* Split the dataset (e.g., molecular structures and properties) into training, validation, and test sets. Ensure the test set includes both in-distribution samples and, if possible, out-of-distribution (OOD) samples to stress-test the model.

* Train a model capable of uncertainty estimation. Common choices include:

* Ensemble Methods: Train multiple models (e.g., Random Forests or neural networks) with different initializations or on bootstrapped data. The predictive mean is the ensemble average, while the predictive variance (or standard deviation) is a measure of total predictive uncertainty [21].

* Bayesian Neural Networks (BNNs): Use a model that places a prior distribution over weights and approximates the posterior, often via Monte Carlo Dropout or other variational inference techniques. Multiple stochastic forward passes are used to generate a distribution of predictions [15].

* Evidential Regression/Classification: Train a model to output the parameters of a higher-order distribution (e.g., a Normal-Inverse-Gamma prior), which naturally decomposes into aleatoric and epistemic components [15] [21].

2. Uncertainty Quantification:

* For a given test molecule, make a prediction and calculate its uncertainty.

* For a BNN or ensemble, the predictive uncertainty can be calculated as the entropy or variance of the predictive distribution.

* To decompose uncertainty, a common information-theoretic approach is:

* Total Predictive Uncertainty: H[y | x, D] = -∑_c p(y=c | x, D) log p(y=c | x, D) (Entropy of the predictive distribution).

* Aleatoric Uncertainty: E_{θ~p(θ|D)}[H[y | x, θ]] (Average entropy of each model's output distribution).

* Epistemic Uncertainty: H[y | x, D] - E_{θ~p(θ|D)}[H[y | x, θ]] (Mutual Information between parameters and prediction), which represents the disagreement between models [18] [15].

3. Metric Calculation & Visualization:

* Calculate the absolute error for each test prediction.

* Compute the metrics from Table 2 for the entire test set.

* Create an Error-Based Calibration Plot: Bin test predictions by their predicted uncertainty (e.g., standard deviation σ). For each bin, plot the root-mean-square error (RMSE) of the predictions in that bin against the average σ for that bin. A well-calibrated model will have points lying close to the line y = x [21].
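The information-theoretic decomposition in step 2 can be sketched for a classifier ensemble as follows. Each ensemble member (or stochastic forward pass) is assumed to return a class-probability vector for the same input; the function names and the probabilities are illustrative only.

```python
import math


def entropy(probs):
    """Shannon entropy in nats; zero-probability classes contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def decompose_entropy(member_probs):
    """Return (total, aleatoric, epistemic) for one input.

    member_probs holds one class-probability vector per ensemble member.
    """
    n = len(member_probs)
    n_classes = len(member_probs[0])
    mean_probs = [sum(m[c] for m in member_probs) / n for c in range(n_classes)]
    total = entropy(mean_probs)                           # H[y | x, D]
    aleatoric = sum(entropy(m) for m in member_probs) / n  # E_theta H[y | x, theta]
    return total, aleatoric, total - aleatoric             # difference = mutual information


# Members agree that the outcome is a coin flip: uncertainty is aleatoric.
noisy = decompose_entropy([[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]])

# Members are individually confident but contradict each other: epistemic.
conflicting = decompose_entropy([[0.99, 0.01], [0.01, 0.99]])

print("noisy:      ", noisy)
print("conflicting:", conflicting)
```

Both inputs have the same total entropy (ln 2), yet they call for different actions: the first would not benefit from more training data, while the second likely would.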

The Researcher's Toolkit: Essential Methods for Uncertainty Quantification

Table 3: Research Reagent Solutions for Uncertainty Quantification

| Tool / Method | Function in Uncertainty Analysis | Typical Application Context |

|---|---|---|

| Monte Carlo Dropout [15] | A simple approximation of Bayesian inference in neural networks. Enables estimation of epistemic uncertainty by using dropout at test time and performing multiple stochastic forward passes. | Fast, post-training UQ for deep learning models in virtual screening. |

| Deep Ensembles [15] | Trains multiple models with different random initializations. The variance of their predictions captures predictive uncertainty, often outperforming other methods on calibration. | High-performance UQ for regression and classification tasks in QSAR modeling. |

| Bayesian Neural Networks (BNNs) [15] | Explicitly models a distribution over network weights, formally capturing epistemic uncertainty. Inference is computationally challenging but provides a principled framework. | Research-focused UQ where a rigorous probabilistic model is required. |

| Evidential Deep Learning [15] [21] | The model is trained to directly output the parameters of a prior distribution over the target variable, allowing for the direct computation of both aleatoric and epistemic uncertainty from a single forward pass. | Molecular property prediction where a direct uncertainty decomposition is desired. |

| Latent Space Distance [21] | A non-Bayesian method where uncertainty is estimated as the distance between a test point's latent representation and the representations of the nearest training points. Primarily captures epistemic uncertainty. | UQ for autoencoders or graph neural networks in anomaly detection for new chemistries. |

| Conformal Prediction | Provides model-agnostic, distribution-free prediction intervals or sets with finite-sample coverage guarantees, complementing probabilistic UQ. | Creating robust prediction sets for classification tasks in clinical decision support. |
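As one concrete example from the toolkit, split conformal prediction for regression can be sketched in a few lines. The version below is a minimal illustration rather than a reference implementation: it assumes a held-out calibration set that is exchangeable with the test data, uses absolute residuals as the nonconformity score, and works on hypothetical residuals.

```python
import math


def conformal_quantile(calibration_residuals, alpha):
    """Finite-sample-corrected quantile of absolute calibration residuals."""
    n = len(calibration_residuals)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        return math.inf  # too few calibration points for this coverage level
    return sorted(calibration_residuals)[rank - 1]


def prediction_interval(y_pred, q):
    """Symmetric interval around a point prediction."""
    return y_pred - q, y_pred + q


# Hypothetical absolute residuals |y - y_hat| from 19 calibration compounds.
calibration_residuals = [round(0.05 * i, 2) for i in range(1, 20)]

q = conformal_quantile(calibration_residuals, alpha=0.1)
low, high = prediction_interval(6.0, q)
print(f"q={q:.2f}, 90% interval for prediction 6.0: [{low:.2f}, {high:.2f}]")
```

Under the exchangeability assumption, intervals built this way cover the true value with probability at least 1 - alpha on average, regardless of which underlying model produced the point predictions.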

Uncertainty Decomposition Workflow

The following diagram outlines a generalized computational workflow for decomposing and analyzing predictive uncertainty in a machine learning model, integrating the tools from the toolkit above.

A thorough understanding and quantification of aleatoric and epistemic uncertainty is a cornerstone of robust model validation, especially within the framework of simplex optimization accuracy research. As demonstrated, these two types of uncertainty have distinct origins and implications, necessitating different mitigation strategies. While aleatoric uncertainty defines the fundamental limit of predictability for a given model and data generation process, epistemic uncertainty serves as a compass, guiding researchers toward the information needed to improve their models.

The experimental data and protocols presented highlight that there is no single "best" metric for UQ evaluation. A combination of ranking metrics (like Spearman's correlation) and, more importantly, calibration diagnostics provides the most holistic view of UQ performance. For drug development professionals, this translates to a more reliable assessment of which model predictions to trust, when to deploy a model for high-throughput screening, and when to instead commission further experiments to fill knowledge gaps.

Moving beyond the strict dichotomy, as suggested by contemporary research [18] [20], allows for a more nuanced and pragmatic approach. The future of UQ in optimization validation lies in developing task-specific uncertainties and metrics that directly align with the final decision-making goal, such as prioritizing compounds for synthesis. By integrating the sophisticated UQ methods from the researcher's toolkit—from deep ensembles to conformal prediction—into the simplex optimization loop, the scientific community can achieve a new level of validated accuracy and reliability, accelerating the journey from discovery to viable therapeutic.

Applied Simplex Methods: From Mixture Design to AI Model Calibration

Implementing Simplex-Centroid Designs for Bioactive Mixture Optimization

Simplex-centroid mixture design is a powerful statistical methodology used for optimizing the proportions of components in a mixture to achieve desired functional properties. In pharmaceutical and bioactive compound research, this approach is critical for systematically exploring how combinations of different compounds interact to enhance pharmacological efficacy. Unlike traditional experimentation that varies one factor at a time, mixture design allows researchers to efficiently study the entire compositional landscape with a minimal number of experimental runs, making it particularly valuable when working with expensive or scarce bioactive compounds [22]. The core principle of this design is that the total mixture is constrained to 100%, meaning the component proportions are interdependent, and the experimental region forms a geometric simplex (a triangle for three components, a tetrahedron for four, etc.) [23].
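The mixture constraint and the size of a full simplex-centroid design can be stated compactly. The notation (q components with proportions x_i, expressed as fractions of the total) is introduced here for illustration and is not taken from the cited studies:

```latex
\[
  \sum_{i=1}^{q} x_i = 1, \qquad x_i \ge 0 \quad (i = 1, \dots, q)
\]
\[
  N_{\text{runs}} = 2^{q} - 1
  \qquad \text{(for example, } q = 3 \text{ gives } 7 \text{ blends)}
\]
```

The count follows from including one equal-proportion blend for every non-empty subset of the q components.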

This methodology has gained significant traction in natural product optimization due to its ability to identify synergistic interactions between components. For bioactive mixtures, this means discovering formulations where the combined effect exceeds the sum of individual effects, potentially leading to enhanced therapeutic outcomes with lower doses of active compounds. The simplex-centroid design specifically includes experimental points representing pure components, binary mixtures, ternary mixtures, and the overall centroid, providing comprehensive data to model the mixture response surface and locate optimal compositions [22] [23]. This systematic approach is revolutionizing how researchers develop complex formulations in drug development, nutraceuticals, and functional foods.

Experimental Protocols and Workflows

Fundamental Workflow for Bioactive Mixture Optimization

The following diagram illustrates the core experimental workflow for implementing simplex-centroid designs in bioactive optimization studies:

Key Experimental Parameters and Measurements

The experimental implementation requires careful planning and execution across multiple dimensions:

Component Selection and Constraints: The process begins with identifying bioactive components for inclusion based on their known biological activities. Researchers must define minimum and maximum constraints for each component to establish a feasible experimental region. For example, in optimizing a ternary mixture of eugenol, camphor, and terpineol for diabetes management, researchers established constraint boundaries that reflected practical formulation considerations while ensuring adequate exploration of the mixture space [24].

Design Matrix Generation: Using statistical software, researchers generate a simplex-centroid design matrix that specifies the exact proportions of each component for every experimental run. For three components, this typically includes three pure-component runs, three binary blends (50:50), one ternary centroid blend (equal thirds), and additional check-point blends depending on the specific design variant. Each mixture is prepared according to these specifications, with the component proportions summing to 100% [22] [23].
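Dedicated statistical packages normally produce this matrix, but the construction is simple enough to sketch directly: one equal-proportion blend for every non-empty subset of components. The function below is an illustrative stand-in, not the output of any particular package, and it omits optional check-point blends and component bounds.

```python
from fractions import Fraction
from itertools import combinations


def simplex_centroid_design(components):
    """Return the 2**q - 1 blends of a simplex-centroid design.

    Each blend is a dict mapping component name to its proportion.
    """
    design = []
    for size in range(1, len(components) + 1):
        for subset in combinations(components, size):
            share = Fraction(1, size)
            design.append(
                {c: (share if c in subset else Fraction(0)) for c in components}
            )
    return design


design = simplex_centroid_design(["eugenol", "camphor", "terpineol"])
for run, blend in enumerate(design, start=1):
    print(run, {name: f"{float(x):.3f}" for name, x in blend.items()})
```

Using exact fractions keeps every row summing to exactly 1, which makes it easy to verify the mixture constraint before converting to masses or volumes for the bench.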

Bioactivity Assessment Protocols: The formulated mixtures undergo rigorous biological testing relevant to the target application. Common assays include:

- Enzyme Inhibition Assays: Measuring IC50 values for key enzymes like α-amylase, α-glucosidase, lipase, and aldose reductase for antidiabetic applications [24]

- Antimicrobial Testing: Determining minimum inhibitory concentrations (MIC) against target pathogens using standardized broth microdilution methods [25] [26]

- Antioxidant Capacity Evaluation: Employing multiple assays including ABTS, DPPH, FRAP, and CUPRAC to comprehensively assess antioxidant potential [24] [27]

- Cellular Assays: Assessing cytotoxicity, anti-inflammatory effects, or other relevant cellular responses depending on the therapeutic target

All assays should follow standardized protocols with appropriate controls and replication to ensure data reliability for subsequent modeling.

Comparative Performance Analysis

Quantitative Comparison of Optimization Results Across Applications

The table below summarizes experimental results from recent studies implementing simplex-centroid designs for bioactive mixture optimization:

Table 1: Performance Comparison of Simplex-Centroid Mixture Optimization Across Bioactive Applications

| Application Area | Bioactive Components | Optimal Mixture Ratio | Key Performance Metrics | Reference |

|---|---|---|---|---|

| Diabetes Management | Eugenol, Camphor, Terpineol | 44% Eugenol, 19% Camphor, 37% Terpineol | AAI IC50: 10.38 µg/mL; AGI IC50: 62.22 µg/mL; LIP IC50: 3.42 µg/mL; ALR IC50: 49.58 µg/mL | [24] |

| Antimicrobial Formulation | Saffron Stigma, Leaf, Tepal Extracts | 34% Stigma, 30% Leaf, 36% Tepal | Antifungal MIC: 6.25 mg/mL; Antibacterial MIC: 25 mg/mL | [25] [26] |

| Antioxidant Optimization | Purslane, Quinhuilla, Xoconostle, Quintonil | Portulaca oleracea & Chenopodium album binary mixture | Total Phenols: >11 mg GAE/g; Flavonoids: >13 mg QE/g; Antioxidant Activity: 66.0 TE/g | [27] |

| Food Stabilization | Basil Seed Gum, CMC, Guar Gum | 84.43% BSG, 15.57% Guar Gum | Optimal viscosity and melting properties | [22] |

Analysis of Synergistic Benefits and Validation Accuracy

The comparative data reveals several important patterns regarding the performance of simplex-centroid designs:

Synergistic Interactions: Across multiple studies, optimized mixtures demonstrated significant synergistic effects that surpassed the performance of individual components. The ternary mixture of eugenol, camphor, and terpineol exhibited remarkably low IC50 values for multiple enzymes involved in diabetes pathogenesis, with eugenol and terpineol identified as the primary contributors to bioactivity enhancement [24]. Similarly, the combination of saffron by-products achieved antifungal activity at MIC values substantially lower than individual extracts, highlighting the power of mixture optimization in unlocking hidden potential from natural resources [25].

Model Accuracy and Validation: The predictive capability of models generated from simplex-centroid designs has proven exceptionally accurate across studies. In the diabetes management study, experimental validation of the optimal mixture resulted in IC50 values with less than 10% deviation from predicted values, confirming high model accuracy [24]. This reliability is crucial for pharmaceutical applications where formulation consistency directly impacts therapeutic outcomes and regulatory approval.
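A confirmation-run check of this kind reduces to a relative deviation between the predicted and measured response. The short sketch below uses hypothetical predicted and observed IC50 values (not figures from the cited study) and a 10% tolerance chosen to mirror the threshold mentioned above.

```python
def percent_deviation(predicted, observed):
    """Absolute deviation of the observed value from the prediction, in percent."""
    return abs(observed - predicted) / abs(predicted) * 100


# Hypothetical (predicted, observed) IC50 pairs in µg/mL, for illustration only.
checks = {"enzyme_A": (12.0, 12.9), "enzyme_B": (60.0, 55.5)}

deviations = {name: percent_deviation(p, o) for name, (p, o) in checks.items()}
within_tolerance = all(d < 10.0 for d in deviations.values())
print(deviations, within_tolerance)
```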

Multi-response Optimization: A key advantage of the simplex-centroid approach is its ability to simultaneously optimize multiple response variables. The desirability function approach used in several studies enables researchers to balance sometimes competing objectives, such as maximizing bioactivity while minimizing cost or unwanted characteristics [24] [22]. This multi-dimensional optimization capability makes the methodology particularly valuable for complex pharmaceutical formulations with multiple performance criteria.
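A desirability function of the Derringer-Suich type maps each response onto a 0 to 1 scale and combines the results with a geometric mean. The sketch below shows the one-sided "smaller is better" and "larger is better" forms; the limits, targets, and response values are hypothetical, and the cited studies may have used different settings or software defaults.

```python
import math


def desirability_minimize(y, target, upper, weight=1.0):
    """'Smaller is better': 1 at or below target, 0 at or above upper."""
    if y <= target:
        return 1.0
    if y >= upper:
        return 0.0
    return ((upper - y) / (upper - target)) ** weight


def desirability_maximize(y, lower, target, weight=1.0):
    """'Larger is better': 0 at or below lower, 1 at or above target."""
    if y >= target:
        return 1.0
    if y <= lower:
        return 0.0
    return ((y - lower) / (target - lower)) ** weight


def overall_desirability(values):
    """Geometric mean; a single zero makes the whole blend unacceptable."""
    if any(v == 0 for v in values):
        return 0.0
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical responses for one candidate blend.
d_ic50 = desirability_minimize(20.0, target=10.0, upper=50.0)        # lower IC50 is better
d_antioxidant = desirability_maximize(60.0, lower=20.0, target=80.0)  # higher is better
overall = overall_desirability([d_ic50, d_antioxidant])
print(f"d_ic50={d_ic50:.3f} d_antioxidant={d_antioxidant:.3f} D={overall:.3f}")
```

The geometric mean is what lets the approach balance competing objectives: a blend that fails any single criterion scores zero overall, however well it performs on the others.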

Comparison with Alternative Optimization Methodologies

Methodological Comparison Framework

The following diagram illustrates the structural relationship between simplex-centroid design and alternative optimization methodologies:

Comparative Analysis of Optimization Approaches

Table 2: Methodological Comparison Between Simplex-Centroid Design and Alternative Optimization Approaches

| Methodology | Key Characteristics | Advantages | Limitations | Typical Applications |

|---|---|---|---|---|

| Simplex-Centroid Design | Systematic exploration of mixture space; Specialized polynomial models; Design constraint incorporation | Efficient with limited runs; Models synergistic effects; High prediction accuracy; Clear visualization | Limited to mixture variables; Constrained sum (100%) complicates analysis; Requires statistical expertise | Bioactive formulation; Food product development; Pharmaceutical optimization [24] [25] [22] |

| One-Factor-at-a-Time (OFAT) | Varies one factor while holding others constant; Traditional sequential approach | Simple implementation; Intuitive interpretation; Minimal statistical knowledge required | Misses interaction effects; Inefficient resource use; High risk of missing true optimum | Preliminary investigations; Systems with known minimal interactions |

| Metaheuristic Algorithms (PSO, CFO, GA) | Population-based stochastic search; Bio-inspired mechanisms; Randomness incorporation | Global optimization capability; Handles complex landscapes; No gradient information needed | High computational cost; Parameter sensitivity; Premature convergence risk | Data clustering; Engineering design; Complex non-convex problems [6] |

| Full Factorial Design | All possible combinations of factor levels; Independent factors; Linear modeling | Captures all interactions; Straightforward analysis; General applicability | Curse of dimensionality; Impractical for many factors; Inefficient for mixtures | Screening experiments; Independent factor optimization [23] |

Contextual Advantages and Limitations Analysis

The comparative analysis reveals distinct advantages of simplex-centroid designs for bioactive mixture optimization:

Efficiency in Experimental Resource Utilization: Simplex-centroid designs provide comprehensive information about mixture behavior with remarkably few experimental runs compared to alternative methods. For a three-component system, the simplex-centroid design typically requires only 7-10 carefully selected mixtures to model the response surface adequately, whereas a full factorial approach would require many more runs while being less suited to mixture constraints [23]. This efficiency is particularly valuable when working with expensive bioactive compounds or time-consuming biological assays.

Synergistic Interaction Modeling: Unlike one-factor-at-a-time approaches that cannot detect component interactions, simplex-centroid designs specifically model binary and ternary interactions through specialized polynomial models (Scheffé polynomials). This capability is crucial for bioactive formulations where synergistic effects between compounds can significantly enhance therapeutic efficacy [24] [25]. The models generated can precisely quantify how combinations of components produce effects greater than the sum of their individual contributions.
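For three components, the seven blends of a simplex-centroid design exactly determine the seven coefficients of the special cubic Scheffé model, so the coefficients have a closed form. The sketch below applies those formulas to hypothetical responses; with replicated runs or check-point blends one would fit by least squares instead, and the key naming ("1", "12", "123") is an illustrative convention.

```python
def fit_special_cubic(y):
    """Coefficients of the saturated special cubic Scheffé model.

    y maps design points to responses: "1", "2", "3" are pure components,
    "12", "13", "23" are 50:50 blends, and "123" is the centroid.
    """
    beta = {k: y[k] for k in ("1", "2", "3")}
    for i, j in ("12", "13", "23"):
        beta[i + j] = 4 * y[i + j] - 2 * (y[i] + y[j])
    beta["123"] = (
        27 * y["123"]
        - 12 * (y["12"] + y["13"] + y["23"])
        + 3 * (y["1"] + y["2"] + y["3"])
    )
    return beta


def predict(beta, x1, x2, x3):
    """Evaluate the special cubic model at proportions that sum to 1."""
    return (
        beta["1"] * x1 + beta["2"] * x2 + beta["3"] * x3
        + beta["12"] * x1 * x2 + beta["13"] * x1 * x3 + beta["23"] * x2 * x3
        + beta["123"] * x1 * x2 * x3
    )


# Hypothetical responses (higher = better) at the seven design points.
responses = {"1": 10.0, "2": 6.0, "3": 8.0,
             "12": 11.0, "13": 9.0, "23": 7.5, "123": 10.5}

beta = fit_special_cubic(responses)
print(beta)
print(predict(beta, 0.6, 0.3, 0.1))
```

When the response is something to maximize, a positive binary coefficient such as `beta["12"]` means the blend outperforms the proportional average of its two components; for responses where lower is better, such as IC50, the sign interpretation reverses.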

Predictive Accuracy and Validation: The mathematical foundation of simplex-centroid designs enables high predictive accuracy for optimal mixtures, with multiple studies reporting less than 10% deviation between predicted and experimentally validated results [24]. This reliability surpasses many metaheuristic approaches which may provide good optimization but limited predictive capability for untested mixtures. The statistical rigor also supports quantitative assessment of model adequacy through lack-of-fit tests and residual analysis.

However, simplex-centroid designs face limitations when dealing with non-mixture variables or when the experimental region is highly irregular. In such cases, hybrid approaches combining mixture design with other optimization strategies may be necessary to address more complex formulation challenges.

Essential Research Reagent Solutions

Key Materials and Methodological Tools

Successful implementation of simplex-centroid designs for bioactive optimization requires specific research reagents and methodological components:

Table 3: Essential Research Reagent Solutions for Simplex-Centroid Bioactive Optimization

| Category | Specific Items | Function in Optimization Process | Example Applications |

|---|---|---|---|

| Bioactive Components | Eugenol, Camphor, Terpineol; Saffron by-product extracts; Plant phenolic extracts | Active ingredients being optimized for enhanced bioactivity | Diabetes management [24]; Antimicrobial formulations [25]; Antioxidant development [27] |

| Enzymes & Assay Kits | α-glucosidase, α-amylase, lipase; Aldose Reductase Screening Kit; ABTS, DPPH, FRAP reagents | Quantitative bioactivity assessment for response modeling | Enzyme inhibition assays [24]; Antioxidant capacity evaluation [24] [27] |

| Statistical Software | Minitab Statistical Software; Design-Expert; R with mixexp package | Experimental design generation; Response surface modeling; Optimal mixture identification | Design creation and analysis [22]; Optimization through desirability function [24] |

| Analytical Instruments | HPLC-DAD; Microplate readers; Rotary evaporators | Phytochemical characterization; High-throughput bioassay measurement; Extract concentration | Compound identification [25]; Absorbance measurement for bioassays [24]; Extract preparation [25] |

| Microbiological Materials | Bacterial/fungal strains (S. aureus, E. coli, C. albicans); Culture media; Microdilution plates | Antimicrobial activity assessment through MIC determination | Antibacterial/antifungal evaluation [25] [26] |

Implementation Considerations for Research Programs

The effective application of these research reagents requires careful methodological planning:

Statistical Software Selection: The choice of statistical software significantly impacts implementation efficiency. Packages specifically supporting mixture designs (such as Minitab, Design-Expert, or R with appropriate packages) provide built-in capabilities for generating design matrices, modeling response surfaces, and identifying optimal mixtures using desirability functions [22]. These tools automate the complex calculations involved and facilitate visualization of the mixture response surfaces.

Bioassay Standardization: Consistent and reproducible bioactivity assessment is crucial for generating reliable response data. Researchers should implement standardized protocols with appropriate positive and negative controls, replicate measurements to estimate variability, and validate assay performance characteristics before full-scale optimization studies [24] [25]. High-throughput methods such as microplate-based assays are particularly valuable for efficiently testing multiple mixtures.

Extraction and Characterization Methods: For natural product optimization, standardized extraction protocols ensure consistent bioactive compound profiles across experimental runs. Advanced characterization techniques like HPLC-DAD provide quantitative data on key bioactive compounds, helping to explain observed bioactivity patterns and ensure batch-to-batch consistency [25].

Simplex-centroid mixture design represents a methodology superior to traditional one-factor-at-a-time approaches for optimizing bioactive formulations. The experimental evidence across multiple applications demonstrates its exceptional efficiency in resource utilization, accuracy in predicting optimal mixtures, and unique capability to model synergistic interactions between components. The consistently high performance in validation studies, with deviations typically under 10% between predicted and experimental results, underscores the reliability of this approach for pharmaceutical and bioactive product development [24] [25].

The comparative analysis reveals that while alternative methods like metaheuristic algorithms excel in global optimization for complex landscapes, simplex-centroid designs provide the specific advantages of statistical rigor, predictive capability, and efficient experimental resource allocation that are particularly valuable when working with expensive or scarce bioactive compounds. The integration of this methodology with modern analytical techniques and high-throughput bioassays creates a powerful framework for accelerating the development of enhanced natural therapeutics, functional foods, and pharmaceutical formulations.

For researchers implementing these designs, success depends on careful component selection, appropriate constraint definition, standardized bioactivity assessment, and sophisticated statistical analysis. When these elements are properly integrated, simplex-centroid mixture design emerges as an indispensable tool in the modern bio-optimization toolkit, capable of unlocking the full potential of complex bioactive mixtures through systematic, efficient, and predictive experimental strategy.

The search for natural alternatives to synthetic enzyme inhibitors is a critical focus in modern therapeutic development, particularly for managing widespread metabolic disorders like Type 2 Diabetes Mellitus [28]. Monoterpenes and phenolic compounds from plants, including eugenol, camphor, and terpineol, have demonstrated significant potential due to their ability to inhibit key enzymes involved in carbohydrate and lipid metabolism [28] [29]. However, the individual efficacy of these compounds often proves limited compared to combination therapies. This case study explores the application of a simplex-centroid mixture design to optimize a ternary formulation of eugenol, camphor, and terpineol for targeted inhibition of α-amylase, α-glucosidase, lipase, and aldose reductase—enzymes critically involved in diabetes pathogenesis [28]. The research is situated within a broader thesis on simplex optimization accuracy validation, demonstrating how statistical experimental design can predict and validate bioactive formulations with remarkable precision, thereby accelerating the development of natural product-based therapies.

Experimental Design and Methodologies

Simplex-Centroid Mixture Design

The optimization of the three-component mixture employed a simplex-centroid design, a specialized response surface methodology ideal for formulation studies where the total composition must sum to 100% [28]. This design systematically explores the entire experimental space defined by the proportions of eugenol, camphor, and terpineol, rather than testing random combinations. It efficiently evaluates not only the individual effects of each compound but also their binary and ternary interactions, enabling the identification of synergistic effects that would be missed in one-factor-at-a-time experiments. The design generated specific mixture blends which were then tested for multiple biological responses: α-amylase inhibition (AAI), α-glucosidase inhibition (AGI), lipase inhibition (LIP), and aldose reductase inhibition (ALR), with the half-maximal inhibitory concentration (IC₅₀) serving as the critical response variable for each [28]. The resulting experimental data was fitted to mathematical models, and a desirability function approach was applied to identify the optimal formulation that simultaneously minimized all four IC₅₀ values [28].
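The desirability step can be sketched as follows. The source does not state which desirability functions or limits the study used, so this example assumes one common form (a "smaller-is-better" Derringer-Suich function combined through a geometric mean). The target and upper limits and the predicted values are hypothetical placeholders.

```python
import math


def desirability_minimize(y, target, upper, weight=1.0):
    """Smaller-is-better desirability: 1 at or below target, 0 at or above upper."""
    if y <= target:
        return 1.0
    if y >= upper:
        return 0.0
    return ((upper - y) / (upper - target)) ** weight


def overall_desirability(values):
    """Geometric mean of individual desirabilities (0 if any response is unacceptable)."""
    if any(d == 0.0 for d in values):
        return 0.0
    return math.exp(sum(math.log(d) for d in values) / len(values))


# Hypothetical predicted IC50 values for one candidate blend,
# with hypothetical (target, upper) limits for each response
predicted = {"AAI": 12.0, "AGI": 65.0, "LIP": 4.0, "ALR": 52.0}
limits = {"AAI": (10.0, 50.0), "AGI": (60.0, 150.0), "LIP": (3.0, 20.0), "ALR": (45.0, 120.0)}

scores = [desirability_minimize(predicted[k], *limits[k]) for k in predicted]
print(f"Overall desirability: {overall_desirability(scores):.3f}")
```

The geometric mean is used so that a single unacceptable response drives the overall score to zero, which prevents a strong result on one enzyme from masking a failure on another.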

Enzyme Inhibition Assays

The inhibitory activity of the individual compounds and their mixtures against the target enzymes was assessed using standardized in vitro protocols. The following table summarizes the key experimental conditions for each assay.

Table 1: Experimental Protocols for Key Enzyme Inhibition Assays

| Assay | Enzyme Source | Key Reagents & Measurements | Protocol Summary |

|---|---|---|---|

| α-Amylase Inhibition (AAI) | Porcine pancreas [28] | Enzyme substrate, test compounds, absorbance measurement [28] | IC₅₀ values determined by measuring residual enzyme activity at various inhibitor concentrations [28]. |

| α-Glucosidase Inhibition (AGI) | Saccharomyces cerevisiae [28] | Enzyme substrate, test compounds, absorbance measurement [28] | IC₅₀ values determined by measuring residual enzyme activity at various inhibitor concentrations [28]. |

| Lipase Inhibition (LIP) | Porcine pancreas [28] | Enzyme substrate, test compounds, absorbance measurement [28] | IC₅₀ values determined by measuring residual enzyme activity at various inhibitor concentrations [28]. |

| Aldose Reductase Inhibition (ALR) | Commercial Screening Kit [28] | Aldose Reductase Inhibitor Screening Kit [28] | IC₅₀ determined using a standardized kit protocol [28]. |

| Antioxidant Activity (ABTS) | N/A | ABTS, potassium persulfate, Trolox standard, absorbance at 734 nm [28] | ABTS radical cation scavenging ability measured and expressed as Trolox equivalents [28]. |

| Antioxidant Activity (FRAP) | N/A | FRAP reagent (acetate buffer, TPTZ, FeCl₃), absorbance at 593 nm [28] | Ferric-reducing power measured and expressed as EC₅₀ [28]. |
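As a rough illustration of how an IC₅₀ can be read from inhibition measured at several concentrations, the sketch below interpolates on a log-concentration scale between the two points that bracket 50% inhibition. This is a simplifying assumption: the source does not specify the curve-fitting procedure used in the study, and a full dose-response fit would normally be preferred. The function name and the data are hypothetical.

```python
import math


def ic50_log_interpolation(concentrations, inhibition_pct):
    """Estimate IC50 by log-linear interpolation across the 50% inhibition crossing."""
    pairs = sorted(zip(concentrations, inhibition_pct))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(pairs, pairs[1:]):
        if i_lo <= 50.0 <= i_hi and i_hi > i_lo:
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    raise ValueError("50% inhibition is not bracketed by the data")


# Hypothetical dose-response data (concentration in µg/mL, inhibition in %)
conc = [5.0, 10.0, 20.0, 40.0, 80.0]
inhib = [12.0, 28.0, 46.0, 63.0, 81.0]
print(f"Estimated IC50: {ic50_log_interpolation(conc, inhib):.1f} µg/mL")
```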

Experimental Workflow

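The optimization followed a sequential workflow: (1) generation of the simplex-centroid design, (2) preparation of the mixture blends and measurement of enzyme inhibition, (3) fitting of mixture models to the IC₅₀ data, (4) desirability-based selection of the optimal blend, and (5) experimental validation of the predicted optimum.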

Results and Data Analysis

Performance Comparison: Individual Compounds vs. Optimized Mixture

The efficacy of the individual compounds was significantly enhanced in the optimized mixture, demonstrating the power of the simplex-centroid design to unlock synergistic interactions. The table below presents a direct comparison of the IC₅₀ values for the key enzyme targets.

Table 2: Inhibitory Performance (IC₅₀, µg/mL) of Individual Compounds versus the Optimized Mixture [28]

| Compound / Mixture | α-Amylase Inhibition (AAI) | α-Glucosidase Inhibition (AGI) | Lipase Inhibition (LIP) | Aldose Reductase Inhibition (ALR) |

|---|---|---|---|---|

| Eugenol | Not available | Not available | Not available | Not available |

| Camphor | Not available | Not available | Not available | Not available |

| Terpineol | Not available | Not available | Not available | Not available |

| Optimized Mixture (Predicted) | 10.38 | 62.22 | 3.42 | 49.58 |

| Optimized Mixture (Validated) | 11.02 | 60.85 | 3.75 | 50.12 |

| Deviation (Validation vs. Prediction) | +6.2% | -2.2% | +9.6% | +1.1% |

Note: IC₅₀ values for the individual compounds are not available in this summary; the study concluded that eugenol and terpineol significantly enhanced bioactivity [28].

The optimal formulation identified was 44% eugenol, 19% camphor, and 37% terpineol, proportions that sum to 100% as the mixture constraint requires [28]. Camphor has the smallest share of the blend, which suggests that it may act as a modulator rather than a primary inhibitor. The experimental validation of this optimal blend showed less than 10% deviation from the predicted IC₅₀ values for all targeted enzymes, supporting the accuracy and predictive power of the mixture design model [28]. The overall desirability score for this formulation was 0.99 (on a scale of 0 to 1), indicating a near-optimal balance of all four inhibitory responses [28].
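The deviation figures in Table 2 can be reproduced directly from the predicted and validated IC₅₀ values. The short sketch below does this, assuming the deviation is defined as (validated - predicted) / predicted; the variable and function names are illustrative.

```python
# Predicted and validated IC50 values (µg/mL) as listed in Table 2
predicted = {"AAI": 10.38, "AGI": 62.22, "LIP": 3.42, "ALR": 49.58}
validated = {"AAI": 11.02, "AGI": 60.85, "LIP": 3.75, "ALR": 50.12}


def percent_deviation(pred, obs):
    """Signed deviation of the observed value from the prediction, in percent."""
    return 100.0 * (obs - pred) / pred


deviations = {k: percent_deviation(predicted[k], validated[k]) for k in predicted}

for assay, dev in deviations.items():
    print(f"{assay}: {dev:+.1f}%")
# AAI: +6.2%
# AGI: -2.2%
# LIP: +9.6%
# ALR: +1.1%

# Acceptance check used in the text: every response within 10% of prediction
print(all(abs(dev) < 10.0 for dev in deviations.values()))  # True
```

The largest deviation (lipase, +9.6%) sits close to the 10% threshold, so the acceptance criterion is met with limited margin for that response.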

The Scientist's Toolkit: Key Research Reagents and Materials

Successful replication of this research requires specific, high-quality reagents and materials. The following table details the essential components used in the featured study.

Table 3: Essential Research Reagents and Materials for Replicating the Study

| Item | Specification / Source | Critical Function in the Experiment |

|---|---|---|

| Eugenol | Analytical standard grade (e.g., Sigma-Aldrich) [28] | Primary bioactive compound; contributes significantly to enzyme inhibition [28]. |

| Camphor | Analytical standard grade (e.g., Sigma-Aldrich) [28] | Bioactive compound; likely acts as a synergistic modulator in the mixture [28]. |

| α-Terpineol | Analytical standard grade (e.g., Sigma-Aldrich) [28] | Primary bioactive compound; works in synergy with eugenol to enhance inhibition [28]. |

| α-Glucosidase | From Saccharomyces cerevisiae (e.g., Sigma-Aldrich) [28] | Target enzyme for assessing antidiabetic potential via carbohydrate digestion blockade. |

| α-Amylase | From porcine pancreas (e.g., Sigma-Aldrich) [28] | Target enzyme for assessing antidiabetic potential via starch digestion blockade. |

| Pancreatic Lipase | From porcine pancreas (e.g., Sigma-Aldrich) [28] | Target enzyme for assessing potential in managing lipid absorption. |

| Aldose Reductase Kit | Commercial Screening Kit (e.g., Biovision) [28] | Standardized system for reliably measuring aldose reductase inhibition activity. |

| ABTS Reagent | 2,2'-azino-bis(3-ethylbenzothiazoline-6-sulfonic acid) [28] | Used to determine the radical scavenging (antioxidant) capacity of the compounds. |

| FRAP Reagent | Freshly prepared from Acetate buffer, TPTZ, and FeCl₃ [28] | Used to determine the ferric-reducing antioxidant power of the compounds. |

Discussion

Validation of Simplex Optimization Accuracy

The core of this case study validates a key thesis: that simplex-centroid mixture design is an accurate and reliable tool for optimizing multi-component bioactive formulations. The deviation between the predicted and experimentally validated IC₅₀ values was less than 10% for all four enzyme targets (from 1.1% for aldose reductase to 9.6% for lipase in absolute terms), a margin considered excellent in biological assays [28]. This agreement indicates that the mathematical models fitted to the simplex design data captured the interactions between eugenol, camphor, and terpineol, including synergism and antagonism.

Furthermore, the study underscores a significant efficiency gain. The simplex design achieved optimization with a minimal number of experimental runs, systematically exploring the ternary mixture space without the need for exhaustive testing of every possible combination [28]. This aligns with broader research efforts, such as the development of the "50-BOA" (IC₅₀-Based Optimal Approach), which also seeks to reduce experimental burden while improving the precision of enzyme inhibition analysis [30] [31]. The validated model provides a predictive framework that can be leveraged for further formulation refinement or scaling, reducing both time and resource expenditure in development cycles.

Broader Implications for Drug Development